Symptoms

You've configured a port-channel on your Arista switch, cabled everything up, and nothing. The bundle interface sits in a down state, individual member links may show physically connected at layer 1, but traffic isn't flowing and the channel never comes up. The range of symptoms is wide depending on which root cause you're dealing with:

Port-Channel1

showsnotconnect

inshow interfaces

- Member interfaces report

connected

individually but the bundle won't aggregate - LACP PDUs appear in packet captures yet negotiation never completes

- On MLAG pairs, one peer thinks the port-channel is active while the other reports it inactive

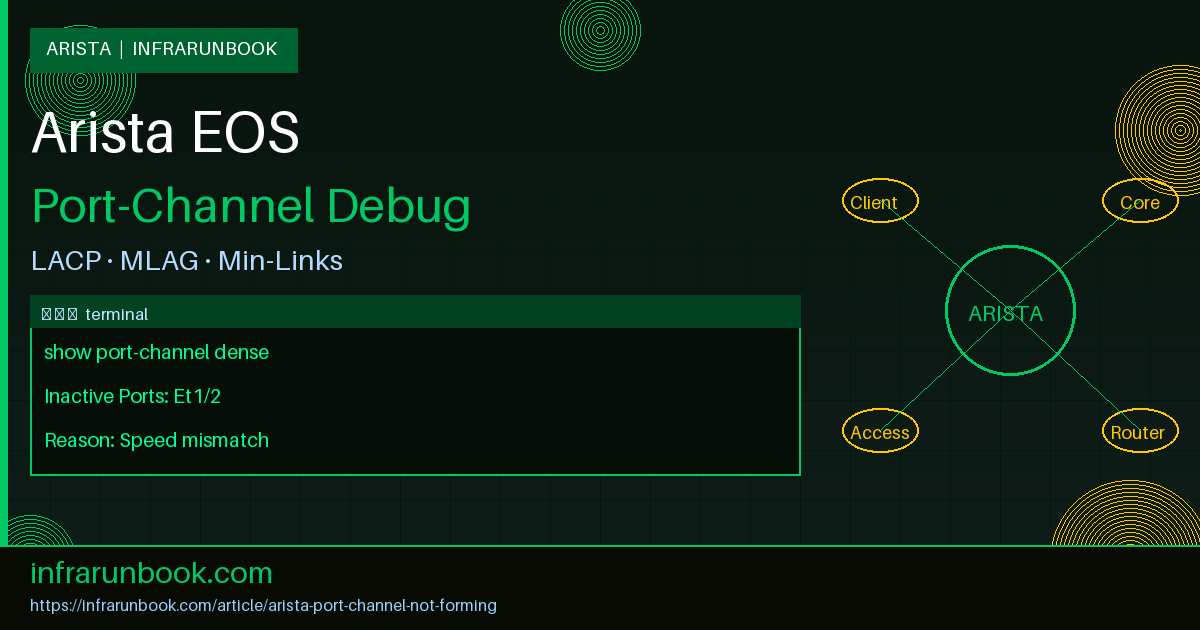

show port-channel dense

shows members flaggedI

(individual) orD

(down) instead ofR

(running)- The port-channel flaps repeatedly, coming up briefly then dropping again

I've been pulled into more than a few late-night outages where a port-channel refusing to form was the root cause, and the fixes range from a single CLI line to a full reconfiguration on both ends of the link. Let me walk through everything I've seen, starting with the most common culprit.

Root Cause 1: LACP Mode Mismatch

LACP (802.3ad) requires both ends to agree on how they're negotiating the bundle. Arista EOS supports three channel-group modes: `active`, `passive`, and `on`. The trap is subtle: if both sides are set to `passive`, neither initiates LACP PDUs and the channel never forms. Both sides sit politely waiting for the other to say hello. You need at least one `active` end to kick things off.

The `on` mode adds another dimension. Setting a port to `on` creates a static LAG with no LACP negotiation whatsoever. If one side is `on` and the other is `active`, the active side sends LACP PDUs that the static side simply ignores. A static LAG only works when both ends are set to `on`. Mixing `on` with any LACP mode is a recipe for a bundle that never forms.
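The compatibility rules above fit in a few lines of Python. This is a minimal sketch of the logic as described in this article, not anything EOS ships; the function name `bundle_can_form` is made up for illustration.

```python
# Illustrative model of channel-group mode compatibility (not EOS code).
VALID_MODES = {"active", "passive", "on"}


def bundle_can_form(local: str, remote: str) -> bool:
    """Return True if the two channel-group modes can form a bundle."""
    for mode in (local, remote):
        if mode not in VALID_MODES:
            raise ValueError(f"unknown channel-group mode: {mode!r}")
    if local == "on" or remote == "on":
        # Static LAG: no negotiation, so both ends must be static.
        return local == remote
    # LACP: at least one end has to initiate PDUs.
    return "active" in (local, remote)


if __name__ == "__main__":
    pairs = [("active", "active"), ("active", "passive"),
             ("passive", "passive"), ("on", "on"), ("on", "active")]
    for pair in pairs:
        verdict = "forms" if bundle_can_form(*pair) else "never forms"
        print(pair, "->", verdict)
```

Running it prints one line per mode pair, which is handy as a quick reference when reviewing both ends of a link.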

To identify a mode mismatch, check both switches:

sw-infrarunbook-01# show running-config interfaces Ethernet1/1

interface Ethernet1/1

channel-group 1 mode passive

If the far-end peer is also `passive`, LACP will never negotiate. You can confirm nothing is happening by looking at the LACP interface detail:

sw-infrarunbook-01# show lacp interface Ethernet1/1

State: LACP Passive

Partner information last changed 00:00:00 ago

Partner System ID: 0000,0000-0000-0000

Partner Key: 0x0000

Partner Port Number: 0x0000

Partner Port State: 0x00

A partner system ID of all zeros is the definitive tell: no LACP PDUs have ever been received from the peer. The fix is straightforward: change at least one side to `active`.

sw-infrarunbook-01(config)# interface Ethernet1/1

sw-infrarunbook-01(config-if-Et1/1)# channel-group 1 mode active

After the change, LACP negotiation should complete within a few seconds. Confirm it worked:

sw-infrarunbook-01# show lacp interface Ethernet1/1

State: LACP Active

Partner System ID: 8000,001c.7300.abcd

Partner Key: 0x0001

Partner Port State: 0x3d

A real partner system ID means LACP is talking. In my experience, standardizing on `mode active` everywhere eliminates this class of problem entirely. There's no practical downside to always being the initiator.
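If you collect this output from many switches, the all-zeros check is easy to script. The sketch below assumes the output contains a `Partner System ID:` line formatted like the samples in this section; the helper name `partner_seen` is hypothetical, and real EOS output may lay the field out differently.

```python
import re

# Matches a line such as "Partner System ID: 8000,001c.7300.abcd".
PARTNER_ID = re.compile(r"Partner System ID:\s*(\S+)")


def partner_seen(show_lacp_output: str) -> bool:
    """Return True if the output contains a non-zero partner system ID."""
    match = PARTNER_ID.search(show_lacp_output)
    if match is None:
        return False
    # Strip separators (comma, dot, dash) and test the remaining hex digits.
    digits = re.sub(r"[^0-9a-fA-F]", "", match.group(1))
    return bool(digits) and any(ch != "0" for ch in digits)


before = "State: LACP Passive\nPartner System ID: 0000,0000-0000-0000\n"
after = "State: LACP Active\nPartner System ID: 8000,001c.7300.abcd\n"
print(partner_seen(before), partner_seen(after))  # False True
```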

Root Cause 2: Different Speed on Members

All member interfaces in a port-channel must run at the same speed. This sounds obvious, but it bites people regularly — especially in environments with mixed optics, migrations from 1G to 10G, or when someone has manually hard-coded speeds on individual interfaces. If EOS detects a speed mismatch, it will exclude the inconsistent interface from the bundle and leave it inactive.

Check interface speeds against port-channel membership:

sw-infrarunbook-01# show interfaces Ethernet1/1-4 status

Port    Name  Status     Vlan    Duplex  Speed  Type
Et1/1         connected  in Po1  full    1G     1000BASE-T
Et1/2         connected  in Po1  full    1G     1000BASE-T
Et1/3         connected  in Po1  full    10G    10GBASE-SR
Et1/4         connected  in Po1  full    1G     1000BASE-T

Et1/3 is the odd one out: 10G while the rest are 1G. EOS will flag this explicitly in the port-channel detail output:

sw-infrarunbook-01# show port-channel 1 detailed

Port Channel Port-Channel1:

Active Ports: Ethernet1/1 Ethernet1/2 Ethernet1/4

Inactive Ports: Ethernet1/3

Reason: Speed inconsistent with other port-channel members

The fix depends on your hardware. If the optic auto-negotiates or supports speed forcing, you can try:

sw-infrarunbook-01(config)# interface Ethernet1/3

sw-infrarunbook-01(config-if-Et1/3)# speed forced 1000full

Realistically though, if you've dropped a 10GBASE-SR SFP+ into a slot that you want to run at 1G, you're working against your hardware design. The right answer is usually to swap the optic for one that matches the rest of your bundle, or, if you actually need 10G throughput, upgrade every member to matching 10G optics. I've watched teams spend hours trying to force a speed that the transceiver type won't physically support. Save yourself the frustration and match the hardware.
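For a pre-change audit, the same comparison can be run offline against collected interface data. The following is a rough sketch: it treats the most common speed as the reference, which is a simplifying assumption and not necessarily how EOS chooses the bundle speed. The function name `odd_speed_members` is illustrative.

```python
from collections import Counter


def odd_speed_members(speeds: dict[str, str]) -> list[str]:
    """Return members whose speed differs from the most common speed."""
    if not speeds:
        return []
    # On a tie, Counter keeps the first speed it encountered as the majority.
    majority, _ = Counter(speeds.values()).most_common(1)[0]
    return sorted(port for port, speed in speeds.items() if speed != majority)


# Speeds taken from the sample status output above.
members = {"Et1/1": "1G", "Et1/2": "1G", "Et1/3": "10G", "Et1/4": "1G"}
print(odd_speed_members(members))  # ['Et1/3']
```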

Root Cause 3: Wrong Port-Channel ID

This is embarrassingly common, and I say that having made this mistake myself on a production rollout. When you assign interfaces to a channel-group, the group number on both sides doesn't need to match — LACP uses system IDs and port keys to identify peers, not matching numbers. The problem is when interfaces on the same switch are accidentally split across different channel-group IDs. LACP assigns a key per channel-group, and interfaces with different keys will never bundle together even if they're physically connected to the same remote device.

Check the LACP key assignment per interface:

sw-infrarunbook-01# show lacp interface

Interface Ethernet1/1:

Local System ID: 8000,001c.7300.1234

Local Port Key: 0x0001

Local Port Number: 0x0001

Interface Ethernet1/2:

Local System ID: 8000,001c.7300.1234

Local Port Key: 0x0002

Local Port Number: 0x0002

Et1/1 has key `0x0001` and Et1/2 has key `0x0002`, which means they sit in different channel-groups. Verify the running config to confirm:

sw-infrarunbook-01# show running-config interfaces Ethernet1/1-2

interface Ethernet1/1

channel-group 1 mode active

interface Ethernet1/2

channel-group 2 mode active

There it is. Both interfaces should be in channel-group 1, but Et1/2 got assigned to channel-group 2, probably a copy-paste error during config deployment. Fix it by removing the incorrect assignment and re-adding it to the correct group:

sw-infrarunbook-01(config)# interface Ethernet1/2

sw-infrarunbook-01(config-if-Et1/2)# no channel-group

sw-infrarunbook-01(config-if-Et1/2)# channel-group 1 mode active

After reassignment, the LACP key will match across all intended members and the interface will join the bundle. Run `show port-channel dense` to confirm it transitions from `I` (individual) to `R` (running).
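When a bundle has more than a handful of members, grouping interfaces by their local key makes a stray assignment stand out. This is a small sketch that assumes you have already extracted a port-to-key mapping from `show lacp interface`; the name `group_by_key` is hypothetical.

```python
from collections import defaultdict


def group_by_key(port_keys: dict[str, int]) -> dict[int, list[str]]:
    """Group interface names by their local LACP port key."""
    groups: dict[int, list[str]] = defaultdict(list)
    for port, key in port_keys.items():
        groups[key].append(port)
    return dict(groups)


# Keys taken from the sample output above.
keys = {"Et1/1": 0x0001, "Et1/2": 0x0002}
groups = group_by_key(keys)
if len(groups) > 1:
    # More than one key among intended members means a split bundle.
    print("members split across keys:", groups)
```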

Root Cause 4: MLAG Interaction Issue

If you're running MLAG (Multi-chassis Link Aggregation Group), there's an entire additional layer of coordination required beyond standard LACP. MLAG uses a peer-link and a heartbeat to synchronize state between two Arista switches that present themselves as a single logical switch to downstream devices. When that coordination breaks down, MLAG will deliberately hold port-channels in a suspended state to prevent a split-brain forwarding loop. This looks exactly like a normal port-channel formation failure but has different root causes.

Start by checking overall MLAG health:

sw-infrarunbook-01# show mlag

MLAG Configuration:

domain-id : infrarunbook-mlag

local-interface : Vlan4094

peer-address : 192.168.100.2

peer-link : Port-Channel999

reload-delay : 300 seconds

MLAG Status:

state : Active

negotiation status : Connected

peer-link status : Up

local-int status : Up

system-id : 8000.001c.7300.1234

MLAG Ports:

Disabled : 0

Configured : 0

Inactive : 1

Active-partial : 0

Active-full : 1

That `Inactive : 1` tells you something's wrong. Dig into the per-interface MLAG state:

sw-infrarunbook-01# show mlag interfaces

                                          local/remote
mlag        state        local    remote    status     intf status
----------  -----------  -------  --------  ---------  ------------
1           active       Po1      Po1       up/up      up/up
2           inactive     Po2      -         up/-       up/n/a

MLAG interface 2 has no remote peer: the peer switch either doesn't have this MLAG ID configured or uses a different ID number. Check the peer:

sw-infrarunbook-02# show running-config | grep -A2 "interface Port-Channel2"

interface Port-Channel2

mlag 3

The local switch has `mlag 2` but the peer has `mlag 3`. They'll never reconcile. Fix the MLAG ID to be consistent across both peers:

sw-infrarunbook-02(config)# interface Port-Channel2

sw-infrarunbook-02(config-if-Po2)# mlag 2

Another common MLAG trigger is a peer-link that's flapping. Even a brief peer-link outage causes MLAG to suspend member port-channels as a protective measure. Check the system log for evidence:

sw-infrarunbook-01# show logging last 50 | grep -i mlag

Apr 20 03:14:22 sw-infrarunbook-01 Mlag: %MLAG-5-PEER_LINK_CHANGE: Peer link Port-Channel999 changed state to down

Apr 20 03:14:25 sw-infrarunbook-01 Mlag: %MLAG-5-PEER_LINK_CHANGE: Peer link Port-Channel999 changed state to up

Apr 20 03:14:25 sw-infrarunbook-01 Mlag: %MLAG-4-INTF_INACTIVE: mlag 2 is inactive (peer is not active)

If the peer-link keeps bouncing, that's a separate physical or configuration problem to chase down before the MLAG port-channels will stay stable. Don't paper over a flapping peer-link. Find out why it's bouncing and fix that first.
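The MLAG ID mismatch described earlier in this section is simple to catch before deployment by comparing the set of MLAG IDs configured on each peer. A minimal sketch, assuming you have already pulled the IDs out of each switch's running config; `unpaired_mlag_ids` is an illustrative name, not an EOS or eAPI call.

```python
def unpaired_mlag_ids(local_ids: set[int], peer_ids: set[int]) -> dict[str, list[int]]:
    """Return MLAG IDs that exist on only one of the two peers."""
    return {
        "local_only": sorted(local_ids - peer_ids),
        "peer_only": sorted(peer_ids - local_ids),
    }


# IDs from the example above: sw-infrarunbook-01 has mlag 1 and 2,
# sw-infrarunbook-02 has mlag 1 and 3.
print(unpaired_mlag_ids({1, 2}, {1, 3}))
# {'local_only': [2], 'peer_only': [3]}
```

Any non-empty list is an MLAG interface that will sit inactive until the IDs are aligned.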

Root Cause 5: Min-Links Not Met

The `min-links` parameter on a port-channel interface defines the minimum number of active member links required before the port-channel itself will come up. It's a legitimate feature: if you need to guarantee a minimum bandwidth floor, `min-links` prevents the bundle from forming with insufficient capacity. But it's also a silent killer when a member link goes down and your threshold suddenly can't be met.

The symptom: the port-channel shows `notconnect` even though some member links are active. Check the configuration first:

sw-infrarunbook-01# show running-config interfaces Port-Channel1

interface Port-Channel1

description Uplink to Core

switchport mode trunk

switchport trunk allowed vlan 10,20,30

port-channel min-links 3

Now check how many members are actually running:

sw-infrarunbook-01# show port-channel 1 dense

Flags: D - Down, R - Running, I - Individual

No.     Protocol    Ports
------  ----------  -----------------------------------------------
1       LACP        Et1/1(R) Et1/2(D) Et1/3(R)

Two running members, Et1/1 and Et1/3, but `min-links 3` requires three. Et1/2 is down (hardware failure, cable pull, or remote-side misconfiguration), so the entire port-channel stays down even though two-thirds of your bundle is healthy.

The fix is either to restore Et1/2 or lower the min-links threshold to what you can actually sustain:

sw-infrarunbook-01(config)# interface Port-Channel1

sw-infrarunbook-01(config-if-Po1)# port-channel min-links 2

The port-channel will come up with the two active members immediately after this change. That said, be deliberate about lowering min-links. If it was set to 3 to guarantee a 30 Gbps minimum bandwidth SLA (three 10G members), you're now operating below that guarantee. Lowering the threshold is a tactical workaround. The actual fix is tracking down why Et1/2 went down and restoring it.

In my experience, the worst min-links configuration is setting it equal to your total member count. A 4-member bundle with `min-links 4` means any single link failure drops the entire port-channel. For most use cases, setting min-links to half the member count is a reasonable balance between availability and bandwidth assurance.
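The min-links decision is just a count against a threshold, which makes it easy to check from collected output. The sketch below parses a member line in the `Et1/1(R)` style used in this article's sample output; the flag letters and the helper names `running_members` and `min_links_met` are assumptions carried over from that sample, so adjust the pattern to whatever your EOS release actually prints.

```python
import re

# Matches member entries such as "Et1/2(D)" in the dense output shown above.
MEMBER = re.compile(r"(\S+)\(([A-Z])\)")


def running_members(dense_line: str) -> list[str]:
    """Return the members flagged R (running) in a dense output line."""
    return [port for port, flag in MEMBER.findall(dense_line) if flag == "R"]


def min_links_met(dense_line: str, min_links: int) -> bool:
    """Return True if enough members are running to satisfy min-links."""
    return len(running_members(dense_line)) >= min_links


line = "1 LACP Et1/1(R) Et1/2(D) Et1/3(R)"
print(running_members(line))   # ['Et1/1', 'Et1/3']
print(min_links_met(line, 3))  # False
print(min_links_met(line, 2))  # True
```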

Root Cause 6: LACP System Priority and Standby Members

LACP implementations cap the number of active members per bundle. 8 is a common limit, though the exact figure depends on the platform and EOS release. Any interfaces beyond the cap go into standby, waiting to take over if an active member fails. If you see some members with an unexpected `Standby` state in the LACP output while the port-channel appears partially up or misconfigured, you may have hit this limit or have a system priority conflict.

sw-infrarunbook-01# show lacp peer

Port       System ID              Port ID     Key    State
---------  ---------------------  ----------  -----  -----------
Et1/1      8000,001c.7300.abcd    0x8001      0x01   Selected
Et1/2      8000,001c.7300.abcd    0x8002      0x01   Selected
Et1/3      8000,001c.7300.abcd    0x8003      0x01   Standby
Et1/4      8000,001c.7300.abcd    0x8004      0x01   Standby

If you want Et1/3 and Et1/4 to be preferred active members over Et1/2, set lower port priorities on them. A lower number means higher priority and gets selected first (the default is 32768):

sw-infrarunbook-01(config)# interface Ethernet1/3-4

sw-infrarunbook-01(config-if-Et1/3-4)# lacp port-priority 16384

This won't be the cause of a port-channel completely failing to form, but it explains why specific members sit in standby when you expect them active.
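To see how port priority changes which members end up active, here is a simplified model of the selection. It is only a sketch: it ranks purely by port priority and breaks ties by interface name, whereas a real LACP implementation also involves system priority and port numbers. The cap of two active members is a hypothetical value chosen to mirror the sample output, and `select_active` is an illustrative name.

```python
def select_active(priorities: dict[str, int], max_active: int) -> tuple[list[str], list[str]]:
    """Split members into (active, standby) by ascending port priority."""
    # Lower priority value wins; ties are broken by interface name here,
    # which stands in for the port-number tiebreak and is an assumption.
    ranked = sorted(priorities, key=lambda port: (priorities[port], port))
    return ranked[:max_active], ranked[max_active:]


# Et1/3 and Et1/4 after "lacp port-priority 16384"; the others at the default.
ports = {"Et1/1": 32768, "Et1/2": 32768, "Et1/3": 16384, "Et1/4": 16384}
active, standby = select_active(ports, max_active=2)
print(active)   # ['Et1/3', 'Et1/4']
print(standby)  # ['Et1/1', 'Et1/2']
```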

Root Cause 7: LACP Fallback Configuration Mismatch

Arista EOS supports LACP fallback, which allows a port-channel to come up with a single active link when the remote end doesn't respond to LACP within the configured timeout. This is designed for PXE boot scenarios where hosts don't support LACP during early boot. If one side has LACP fallback configured and the peer doesn't, or if the fallback timeout is inconsistent, you'll see intermittent channel formation — the port-channel comes up briefly in fallback mode, then fails when the remote side sends LACP PDUs that contradict the fallback state.

sw-infrarunbook-01# show running-config interfaces Port-Channel1

interface Port-Channel1

port-channel lacp fallback static

port-channel lacp fallback timeout 90

Check whether the peer has matching fallback config. If you're not in a PXE environment and don't need fallback, remove it:

sw-infrarunbook-01(config)# interface Port-Channel1

sw-infrarunbook-01(config-if-Po1)# no port-channel lacp fallback

If you do need fallback (pure PXE boot path), make sure both the timeout and the fallback mode (`static` vs `individual`) are consistent with what the attached host expects.

Prevention

Most port-channel formation failures are configuration issues that surface at deployment time — and they're entirely preventable with a few consistent habits.

Standardize on `mode active` everywhere. Having one side `active` and one `passive` always works. Two `passive` ends are a silent failure. Two `active` ends also work fine. Make `active` the default in your configuration templates and stop thinking about it.

Document your min-links values and the reasoning behind them. If `min-links 3` exists because your SLA requires 30 Gbps minimum, write that down in your IPAM system, your config management comments, or even a description field on the interface. The engineer responding to a 2am alert shouldn't be guessing whether it's safe to lower that value under pressure.

For MLAG environments, build a pre-deployment verification checklist: confirm MLAG domain IDs match on both peers, verify MLAG interface IDs are consistent across both switches, check that the peer-link is stable before bringing up MLAG member port-channels, and run `show mlag` on both peers to confirm negotiation status shows `Connected` before declaring success. MLAG issues masquerading as port-channel problems are always more painful to debug at midnight.

Add `show port-channel dense` and `show lacp interface` to your standard change verification procedure. These two commands surface nearly every issue covered in this article within seconds of running them. Make them part of your post-change checklist the same way you'd check interface status after a fiber swing.

Finally, configure syslog alerting on LACP state changes. EOS generates syslog messages whenever LACP member state transitions occur — shipping these to a central logging platform means you'll know about a flapping member or a partial bundle degradation long before it becomes a full outage. A port-channel that loses one of four members is still forwarding traffic, but you've just lost your redundancy without knowing it. Catch that event proactively rather than discovering it when the second member fails and traffic drops entirely.
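On the logging platform side, a small filter is enough to pick out the relevant events. The sketch below matches the `%FACILITY-SEVERITY-MNEMONIC` tag format seen in the MLAG log excerpt earlier in this article. The `MLAG` facility comes from that excerpt; `LACP` and `LAG` are assumed facility names that you should verify against your own logs, and `alerts` is a hypothetical helper.

```python
import re

# EOS-style tag such as "%MLAG-5-PEER_LINK_CHANGE": facility, severity, mnemonic.
TAG = re.compile(r"%(?P<facility>[A-Z_]+)-(?P<severity>\d)-(?P<mnemonic>[A-Z_]+)")

# "MLAG" appears in the log excerpt in this article; "LACP" and "LAG" are
# assumed names and should be checked against real device logs.
WATCHED = {"MLAG", "LACP", "LAG"}


def alerts(lines: list[str]) -> list[tuple[str, int, str]]:
    """Return (facility, severity, mnemonic) for each watched log line."""
    hits = []
    for line in lines:
        match = TAG.search(line)
        if match and match["facility"] in WATCHED:
            hits.append((match["facility"], int(match["severity"]), match["mnemonic"]))
    return hits


sample = [
    "Apr 20 03:14:22 sw-infrarunbook-01 Mlag: %MLAG-5-PEER_LINK_CHANGE: "
    "Peer link Port-Channel999 changed state to down",
    "Apr 20 03:14:25 sw-infrarunbook-01 Mlag: %MLAG-4-INTF_INACTIVE: "
    "mlag 2 is inactive (peer is not active)",
]
print(alerts(sample))
# [('MLAG', 5, 'PEER_LINK_CHANGE'), ('MLAG', 4, 'INTF_INACTIVE')]
```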