Symptoms

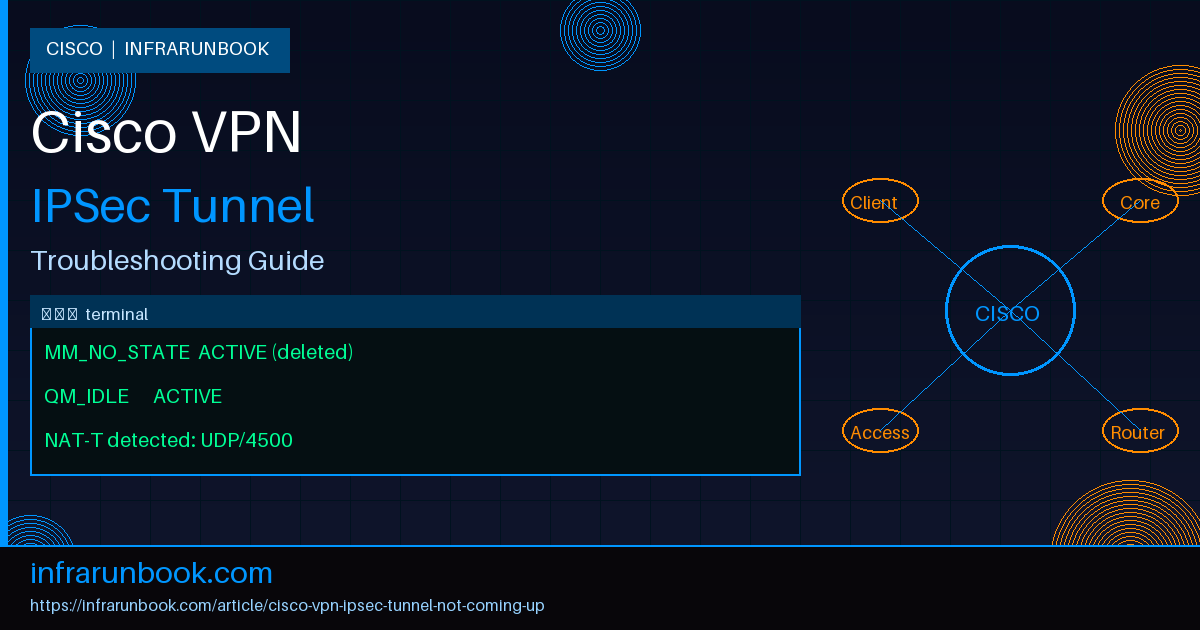

The tunnel is supposed to be up. You have the config in place, the peer is reachable via ping, and yet traffic isn't flowing. When you check the SA table, the tunnel is either stuck in MM_WAIT_MSG2 or MM_WAIT_MSG5, or the output returns nothing at all: no SAs, no counters, just silence. Here's what you'll typically see when Phase 1 is failing:

router# show crypto isakmp sa

dst src state conn-id status

10.2.2.1 10.1.1.1 MM_NO_STATE 0 ACTIVE (deleted)

Or perhaps Phase 1 appears up but the IPSec SAs are empty:

router# show crypto ipsec sa

interface: GigabitEthernet0/1

Crypto map tag: VPN-MAP, local addr 10.1.1.1

protected vrf: (none)

local ident (addr/mask/prot/port): (192.168.10.0/255.255.255.0/0/0)

remote ident (addr/mask/prot/port): (192.168.20.0/255.255.255.0/0/0)

current_peer 10.2.2.1 port 500

PERMIT, flags={origin_is_acl,}

#pkts encaps: 0, #pkts encrypt: 0, #pkts digest: 0

#pkts decaps: 0, #pkts decrypt: 0, #pkts verify: 0

Users are reporting that the site-to-site link is completely dead. Pings across the tunnel fail. VoIP calls to the remote branch are dropping. Applications that depend on the tunnel (file servers, domain controllers, monitoring agents) are all unreachable. On the firewall side you might see ISAKMP retransmits stacking up with no response from the remote peer.

IPSec tunnel failures are rarely obvious. The tunnel doesn't scream at you — it just quietly refuses to come up. Let's go through the most common reasons this happens and exactly how to fix each one.

Root Cause 1: Phase 1 (ISAKMP) Proposal Mismatch

Phase 1 is where the two peers negotiate the ISAKMP SA, the control channel that protects the key exchange. Both sides must agree on the encryption algorithm, hash algorithm, authentication method, and Diffie-Hellman group, and their lifetimes should be aligned as well, because some implementations reject a peer whose lifetime differs from their own. If even one parameter doesn't match, Phase 1 fails and you won't get anywhere near Phase 2.

In my experience, this is the single most common cause of a tunnel refusing to come up. It usually happens after a device upgrade where defaults silently changed, or when one side was configured by a different team with different standards. I've also seen it frequently when a third-party firewall on the remote end uses vendor defaults that don't align with Cisco's.

How to identify it: Enable ISAKMP debugging. Do this carefully in production — use a condition to limit output to the specific peer you're troubleshooting.

router# debug crypto condition peer ipv4 10.2.2.1

router# debug crypto isakmp

Set the condition first so the debug is already filtered when it starts. A proposal mismatch will produce output like this:

*Apr 20 09:14:23.441: ISAKMP:(0): atts are not acceptable. Next payload is 0

*Apr 20 09:14:23.441: ISAKMP:(0): no offers accepted!

*Apr 20 09:14:23.441: ISAKMP:(0): phase 1 SA policy not acceptable! (local 10.1.1.1 remote 10.2.2.1)

"No offers accepted" is the smoking gun. The remote peer sent one or more proposals, and not one of them matched what the local router has configured. Check what's actually configured locally:

router# show crypto isakmp policy

Global IKE policy

Protection suite of priority 10

encryption algorithm: AES - Advanced Encryption Standard (256 bit keys).

hash algorithm: Secure Hash Standard 2 (256 bit)

authentication method: Pre-Shared Key

Diffie-Hellman group: #14 (2048 bit)

lifetime: 86400 seconds, no volume limit

How to fix it: Get the Phase 1 parameters from the remote side and add a matching policy locally. If the remote is using AES-128, SHA-1, and DH group 2, either add a matching policy or, better, update the remote end to use stronger parameters. Adding a compatible policy on the local router:

router(config)# crypto isakmp policy 20

router(config-isakmp)# encryption aes 128

router(config-isakmp)# hash sha

router(config-isakmp)# authentication pre-share

router(config-isakmp)# group 2

router(config-isakmp)# lifetime 86400

Once a matching policy is in place, clear the SA and let it renegotiate:

router# clear crypto isakmp

router# clear crypto sa

Then verify Phase 1 comes up cleanly:

router# show crypto isakmp sa

dst src state conn-id status

10.2.2.1 10.1.1.1 QM_IDLE 1 ACTIVE

QM_IDLE means the ISAKMP SA is established and idle, ready to carry Phase 2 Quick Mode exchanges. That's exactly where you want to be before moving on.
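Before touching either device, it can help to diff the two Phase 1 policies side by side. The following is a minimal Python sketch, assuming you have already collected both sides' parameters by hand; the IsakmpPolicy class and diff_policies function are hypothetical names for illustration, not part of any Cisco tooling. The sample values are the local policy and remote parameters used in this section.

```python
from dataclasses import dataclass, fields


@dataclass(frozen=True)
class IsakmpPolicy:
    """One side's Phase 1 parameters, entered by hand from the device output."""
    encryption: str
    hash: str
    authentication: str
    dh_group: int
    lifetime: int  # seconds


def diff_policies(local: IsakmpPolicy, remote: IsakmpPolicy) -> dict:
    """Return {parameter: (local_value, remote_value)} for every mismatch."""
    return {
        f.name: (getattr(local, f.name), getattr(remote, f.name))
        for f in fields(local)
        if getattr(local, f.name) != getattr(remote, f.name)
    }


# Local policy 10 from the output above; remote values as described in the text.
local_policy = IsakmpPolicy("aes 256", "sha256", "pre-share", 14, 86400)
remote_policy = IsakmpPolicy("aes 128", "sha", "pre-share", 2, 86400)

mismatches = diff_policies(local_policy, remote_policy)
for name, (ours, theirs) in mismatches.items():
    print(f"{name}: local={ours} remote={theirs}")
```

An empty result means the two policies agree on every field the sketch tracks; anything else is a parameter to raise with the remote team.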

Root Cause 2: Phase 2 (IPSec Transform Set) Mismatch

Phase 2 is where the actual data-carrying tunnel gets built. This is the IPSec SA, the one that encrypts and authenticates your user traffic. Both peers have to agree on the transform set: the encryption and authentication algorithms for the data plane. A mismatch here means Phase 1 can be perfectly healthy (you'll see QM_IDLE) but the tunnel still won't carry a single byte, because the IPSec SA never successfully negotiates.

This catches a lot of engineers off guard. The ISAKMP SA is up, QM_IDLE looks fine, and the assumption is that everything is working, until you notice traffic still isn't flowing. Check the IPSec SAs and you'll find them absent or repeatedly failing to build.

How to identify it:

router# debug crypto ipsec

*Apr 20 09:22:11.882: IPSEC(validate_proposal_request): proposal part #1

*Apr 20 09:22:11.882: (key eng. msg.) INBOUND local= 10.1.1.1:0, remote= 10.2.2.1:0,

*Apr 20 09:22:11.882: local_proxy= 192.168.10.0/255.255.255.0/0/0,

*Apr 20 09:22:11.882: remote_proxy= 192.168.20.0/255.255.255.0/0/0,

*Apr 20 09:22:11.882: protocol= ESP, transform= esp-aes esp-sha-hmac (Tunnel),

*Apr 20 09:22:11.882: lifedur= 3600s and 4608000kb,

*Apr 20 09:22:11.882: spi= 0x0(0), conn_id= 0, keysize= 128, flags= 0x0

*Apr 20 09:22:11.882: IPSEC(validate_proposal_request): transform proposal not supported for identity:

{esp-aes esp-sha-hmac}

The remote end is proposing ESP with AES-128 and SHA-1-HMAC. The local transform set doesn't include that combination. Confirm what's configured locally:

router# show crypto ipsec transform-set

Transform set default: { esp-aes 256 esp-sha256-hmac }

will negotiate = { Tunnel, },

How to fix it: Create a transform set matching the remote peer's proposal, then add it to the crypto map:

router(config)# crypto ipsec transform-set TS-MATCH esp-aes esp-sha-hmac

router(cfg-crypto-trans)# mode tunnel

router(cfg-crypto-trans)# exit

router(config)# crypto map VPN-MAP 10 ipsec-isakmp

router(config-crypto-map)# set transform-set TS-MATCH

Clear the SAs and verify the counters start moving:

router# clear crypto sa

router# show crypto ipsec sa | include pkts

#pkts encaps: 145, #pkts encrypt: 145, #pkts digest: 145

#pkts decaps: 142, #pkts decrypt: 142, #pkts verify: 142

Encrypted and decrypted packet counters climbing means Phase 2 negotiated successfully and traffic is flowing in both directions.
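"Climbing" implies comparing two snapshots rather than reading one. Here is a small Python sketch of that check, assuming you have captured the text of show crypto ipsec sa twice; parse_counters and traffic_is_flowing are hypothetical helper names, and the regular expression only covers the encaps and decaps counters shown in this article.

```python
import re

# Matches the "#pkts encaps: N" and "#pkts decaps: N" counters only.
COUNTER_RE = re.compile(r"#pkts (encaps|decaps): (\d+)")


def parse_counters(show_output: str) -> dict:
    """Sum the encaps and decaps counters found in the captured output."""
    totals = {"encaps": 0, "decaps": 0}
    for name, value in COUNTER_RE.findall(show_output):
        totals[name] += int(value)
    return totals


def traffic_is_flowing(before: dict, after: dict) -> bool:
    """True only when both directions increased between the two snapshots."""
    return (after["encaps"] > before["encaps"]
            and after["decaps"] > before["decaps"])


snapshot_before = """
#pkts encaps: 0, #pkts encrypt: 0, #pkts digest: 0
#pkts decaps: 0, #pkts decrypt: 0, #pkts verify: 0
"""
snapshot_after = """
#pkts encaps: 145, #pkts encrypt: 145, #pkts digest: 145
#pkts decaps: 142, #pkts decrypt: 142, #pkts verify: 142
"""

before = parse_counters(snapshot_before)
after = parse_counters(snapshot_after)
print(after, traffic_is_flowing(before, after))
```

If encaps increases but decaps stays flat, traffic is leaving the local side and nothing is coming back, which points at the remote end or the path rather than the local transform set.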

Root Cause 3: Crypto ACL Not Mirrored on Both Ends

The crypto ACL defines what traffic gets encrypted and sent through the tunnel — often called "interesting traffic." It must be an exact mirror on both ends. What the local side permits as source-to-destination must match what the remote side permits as destination-to-source. If they don't mirror each other precisely, Phase 2 will fail for the unmatched traffic selectors, or fail entirely.

This bites teams that manage both ends through separate change windows. Someone adds a new LAN subnet to the crypto ACL on one side but forgets to update the mirror. The result is a partial tunnel at best — some subnets work, others don't — or a completely failed Phase 2 depending on which side initiates the negotiation.

How to identify it: Pull the crypto map and its referenced ACL from both sides and compare them:

router# show crypto map

Crypto Map "VPN-MAP" 10 ipsec-isakmp

Peer = 10.2.2.1

Extended IP access list VPN-ACL

access-list VPN-ACL permit ip 192.168.10.0 0.0.0.255 192.168.20.0 0.0.0.255

access-list VPN-ACL permit ip 192.168.11.0 0.0.0.255 192.168.20.0 0.0.0.255

Current peer: 10.2.2.1

Security association lifetime: 4608000 kilobytes/3600 seconds

Now look at the remote end's ACL. If it only has:

access-list VPN-ACL permit ip 192.168.20.0 0.0.0.255 192.168.10.0 0.0.0.255

then the mirror entry for 192.168.11.0/24 is missing. Phase 2 for traffic from that subnet will fail, and you'll see this in the debug output:

*Apr 20 09:31:04.223: IPSEC(ipsec_process_proposal): proxy identities not supported

"Proxy identities not supported" is the IOS way of saying: the traffic selectors in the Phase 2 proposal don't match anything in the local crypto ACL. The initiating side said "I want to encrypt traffic from 192.168.11.0/24 to 192.168.20.0/24" and the remote side has no matching permit entry for that pair.

How to fix it: Add the missing mirror entry on the remote end:

remote-router(config)# ip access-list extended VPN-ACL

remote-router(config-ext-nacl)# permit ip 192.168.20.0 0.0.0.255 192.168.11.0 0.0.0.255

Clear the SAs on both ends and trigger traffic from the affected subnet:

remote-router# clear crypto sa

router# clear crypto sa

Going forward, treat the crypto ACL as a paired artifact: both sides live in the same change ticket, updated together. A mismatch here is one of those problems that produces intermittent, seemingly random failures that are very hard to diagnose without knowing exactly what to look for.
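The mirror comparison is mechanical enough to script. This is a minimal Python sketch, assuming the ACL lines from both routers have been pasted in as text; AclEntry, parse_permit, and missing_mirrors are hypothetical names, and the parser only understands the "permit ip network wildcard network wildcard" form used in this article (no host or any keywords).

```python
from typing import NamedTuple


class AclEntry(NamedTuple):
    src: str
    src_wildcard: str
    dst: str
    dst_wildcard: str


def parse_permit(line: str) -> AclEntry:
    """Parse '... permit ip SRC WILDCARD DST WILDCARD' into an AclEntry."""
    tokens = line.split()
    start = tokens.index("ip") + 1
    return AclEntry(*tokens[start:start + 4])


def missing_mirrors(local_lines, remote_lines):
    """Return the entries the remote ACL needs in order to mirror the local one."""
    remote = {parse_permit(line) for line in remote_lines}
    missing = []
    for line in local_lines:
        entry = parse_permit(line)
        mirror = AclEntry(entry.dst, entry.dst_wildcard,
                          entry.src, entry.src_wildcard)
        if mirror not in remote:
            missing.append(mirror)
    return missing


local_acl = [
    "access-list VPN-ACL permit ip 192.168.10.0 0.0.0.255 192.168.20.0 0.0.0.255",
    "access-list VPN-ACL permit ip 192.168.11.0 0.0.0.255 192.168.20.0 0.0.0.255",
]
remote_acl = [
    "access-list VPN-ACL permit ip 192.168.20.0 0.0.0.255 192.168.10.0 0.0.0.255",
]

missing = missing_mirrors(local_acl, remote_acl)
for entry in missing:
    print("remote is missing: permit ip " + " ".join(entry))
```

Running the same function with the arguments swapped checks the other direction, which matters because either side can be the one that was updated alone.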

Root Cause 4: Dead Peer Detection Misconfiguration

Dead Peer Detection (DPD) is a keepalive mechanism that lets one peer detect when the other has gone silent — a router reboot, a link flap, a process restart — and tear down the stale SA so a fresh one can be negotiated. When DPD is misconfigured, you can end up in a scenario where one side holds an old SA thinking the tunnel is still alive, while the other has torn everything down and is trying to start fresh. The result is asymmetric tunnel state: traffic appears to leave one end but never arrives at the other, and the tunnel never recovers on its own.

I've also seen DPD cause outright tunnel failure when it's configured too aggressively. If the DPD interval is very short and there's legitimate WAN latency — satellite links, 4G backup circuits, overloaded MPLS paths — DPD will declare the peer dead before it has a chance to respond. The SA gets torn down, a new negotiation starts, latency causes another DPD timeout, and you end up in a rebuild loop that prevents the tunnel from stabilizing at all.

How to identify it: Check the current DPD configuration and look for teardown events in the logs:

router# show crypto isakmp sa detail

C-id Local Remote I-VRF Status Encr Hash Auth DH Lifetime Cap.

1 10.1.1.1 10.2.2.1 ACTIVE aes sha psk 14 23:41:22

Engine-id:Conn-id = SW:1

Keepalive: 10/3 (interval/retry) DPD action: clear

A 10-second interval with a 3-second retry is aggressive. On a high-latency link that's asking for trouble. Now check the logs for DPD-triggered teardowns:

router# show logging | include DPD|IKMP_NO_SA

*Apr 20 10:02:11.441: %CRYPTO-4-IKMP_NO_SA: IKE message from 10.2.2.1 has no SA

*Apr 20 10:02:41.553: ISAKMP: DPD request sent to 10.2.2.1

*Apr 20 10:02:51.553: ISAKMP: DPD: Peer 10.2.2.1 not responding, tearing down SA

If you're seeing DPD teardowns immediately followed by renegotiation attempts in a repeating pattern, the DPD timers are the culprit.

How to fix it: Tune the DPD timers to something appropriate for your WAN link characteristics. On IOS the second value of the keepalive command is the interval between retries in seconds, not a retry count. For a standard internet or MPLS circuit, a 30-second interval with a 5-second retry is a solid starting point:

router(config)# crypto isakmp keepalive 30 5 periodic

The periodic keyword sends DPD hellos at regular intervals regardless of whether traffic is flowing. The alternative, on-demand, only sends DPD when the router has traffic to send and has heard nothing from the peer, which is usually better for tunnels carrying sustained traffic since it reduces overhead. If you need to temporarily disable DPD to confirm it's the cause:

router(config)# no crypto isakmp keepalive

Rebuild the SA and watch whether the tunnel stays stable. If it does, DPD was tearing it down. Re-enable it with more conservative timers and make sure both ends match, because asymmetric DPD configuration produces confusing behavior where one side tears down an SA the other side thought was still alive.
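Spotting the repeating teardown pattern by eye gets tedious on a busy log. The sketch below is one way to flag it in Python, assuming the log has been exported as text with the timestamp format shown above. The function names, the 10-minute window, and the threshold of three teardowns are illustrative assumptions, not Cisco guidance, and the second and third sample lines are made-up data added to form a pattern.

```python
import re
from datetime import datetime, timedelta

# Matches the DPD teardown line quoted earlier in this section.
TEARDOWN_RE = re.compile(
    r"^\*?(\w{3} +\d+ \d\d:\d\d:\d\d\.\d+): .*DPD.*tearing down SA"
)
# These timestamps carry no year, so one is assumed purely for parsing.
ASSUMED_YEAR = "2000"


def teardown_times(log_text: str) -> list:
    """Return sorted timestamps of every DPD teardown line in the log."""
    times = []
    for line in log_text.splitlines():
        match = TEARDOWN_RE.match(line.strip())
        if match:
            stamp = f"{ASSUMED_YEAR} {match.group(1)}"
            times.append(datetime.strptime(stamp, "%Y %b %d %H:%M:%S.%f"))
    return sorted(times)


def looks_like_rebuild_loop(log_text: str,
                            window: timedelta = timedelta(minutes=10),
                            threshold: int = 3) -> bool:
    """True if `threshold` teardowns fall inside any span of length `window`."""
    times = teardown_times(log_text)
    for i in range(len(times) - threshold + 1):
        if times[i + threshold - 1] - times[i] <= window:
            return True
    return False


# First line is from the article; the other two are hypothetical sample data.
sample_log = """\
*Apr 20 10:02:51.553: ISAKMP: DPD: Peer 10.2.2.1 not responding, tearing down SA
*Apr 20 10:04:21.102: ISAKMP: DPD: Peer 10.2.2.1 not responding, tearing down SA
*Apr 20 10:05:50.870: ISAKMP: DPD: Peer 10.2.2.1 not responding, tearing down SA
"""
print(looks_like_rebuild_loop(sample_log))
```

A single teardown is just DPD doing its job; it is the cluster that suggests the timers are fighting the link.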

Root Cause 5: NAT-T Not Enabled

NAT Traversal (NAT-T) is essential when one or both peers sit behind a Network Address Translation device. Native IPSec is fundamentally incompatible with NAT in several ways. AH integrity protection covers the source IP, so NAT breaks it immediately. ESP (IP protocol 50) is a raw IP protocol, not TCP or UDP, and most NAT devices don't know how to translate it — they'll either drop it silently or forward it incorrectly. And when multiple internal hosts try to build IPSec sessions through the same NAT device using UDP/500, the translations collide.

NAT-T solves all of this by wrapping the IPSec payload inside UDP/4500. NAT devices handle UDP just fine, and the port number allows multiple simultaneous sessions to be distinguished. If NAT-T isn't enabled and one of the peers is behind NAT, you'll often see Phase 1 partially complete over UDP/500 but Phase 2 fail — or the tunnel appears to establish but encrypted traffic immediately drops because the NAT device is blocking or mangling the ESP packets.

How to identify it: First determine whether NAT is in the path. Check the ISAKMP SA detail:

router# show crypto isakmp sa detail

C-id Local Remote I-VRF Status Encr Hash Auth DH Lifetime Cap.

1 10.1.1.1 10.2.2.1 ACTIVE aes sha psk 14 23:41:22

Engine-id:Conn-id = SW:1

NAT-T is not detected

If you expect NAT to be in the path and you're seeing "NAT-T is not detected," or the tunnel is negotiating over UDP/500 when it should be on UDP/4500, verify whether NAT-T has been disabled. On IOS routers NAT-T is on by default, so look for an explicit disable in the running configuration:

router# show running-config | include nat-transparency

No output means NAT-T is at its default (enabled). A line reading no crypto ipsec nat-transparency udp-encapsulation means it has been turned off. In the debug output, when NAT-T is missing but needed, you'll typically see Phase 1 complete on UDP/500 and then Phase 2 stall or the tunnel repeatedly drop, because the NAT device is silently dropping the ESP packets that carry the encrypted data.

How to fix it: Enable NAT-T and set an appropriate keepalive interval. (On an ASA the equivalent is a single command, crypto isakmp nat-traversal 20.)

router(config)# crypto ipsec nat-transparency udp-encapsulation

router(config)# crypto isakmp nat keepalive 20

The value (20 seconds here) is the NAT-T keepalive interval. It sends periodic UDP/4500 packets to keep the NAT translation table entry alive. Without this, the NAT device will time out its UDP translation entry and the tunnel will silently drop. Most NAT devices time out UDP translations between 30 and 300 seconds, so 20 seconds is a safe keepalive that will keep any reasonable NAT device from timing out the session. Clear the SAs and let them rebuild:

router# clear crypto isakmp

router# clear crypto sa

Verify the negotiation now detects NAT in the path and switches to UDP/4500:

router# show crypto isakmp sa detail

C-id Local Remote I-VRF Status Encr Hash Auth DH Lifetime Cap.

1 10.1.1.1 10.2.2.1 ACTIVE aes sha psk 14 23:59:11

Engine-id:Conn-id = SW:1

NAT-T is detected, remote NAT address: 203.0.113.5

"NAT-T is detected" confirms the IKE peers performed the NAT discovery exchange during Phase 1 and moved to UDP/4500 for the rest of the negotiation. Your encrypted traffic is now wrapped in UDP and will pass through NAT devices cleanly.
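For intuition about how the peers notice NAT at all: in the NAT discovery exchange defined by RFC 3947, each side sends a hash over the IKE cookies plus the IP address and port it believes the packet carries, and the receiver recomputes that hash from the address and port it actually observes. A mismatch means something rewrote the packet in transit. The Python sketch below illustrates the idea only; the cookie values and the 172.16.0.10 inside address are hypothetical, and SHA-1 is assumed as the negotiated hash.

```python
import hashlib
import ipaddress
import struct


def nat_d_hash(cookie_i: bytes, cookie_r: bytes, ip: str, port: int) -> bytes:
    """HASH(CKY-I | CKY-R | IP | Port), with SHA-1 assumed as the hash."""
    data = (cookie_i + cookie_r
            + ipaddress.ip_address(ip).packed
            + struct.pack("!H", port))
    return hashlib.sha1(data).digest()


def nat_in_path(claimed_hash: bytes, cookie_i: bytes, cookie_r: bytes,
                observed_ip: str, observed_port: int) -> bool:
    """True when the sender's hash disagrees with what the receiver observes."""
    observed = nat_d_hash(cookie_i, cookie_r, observed_ip, observed_port)
    return claimed_hash != observed


# Hypothetical 8-byte IKE cookies.
cookie_i = bytes.fromhex("0102030405060708")
cookie_r = bytes.fromhex("1112131415161718")

# The sender hashes its own (inside) address; 172.16.0.10 is an assumed value.
sent_hash = nat_d_hash(cookie_i, cookie_r, "172.16.0.10", 500)

# The receiver sees the translated address, so the hashes differ.
print(nat_in_path(sent_hash, cookie_i, cookie_r, "203.0.113.5", 500))
# With no translation the hashes agree.
print(nat_in_path(sent_hash, cookie_i, cookie_r, "172.16.0.10", 500))
```

This is also why NAT-T cannot be detected if one side never sends the discovery payloads: with nothing to compare, both peers stay on UDP/500.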

Root Cause 6: Pre-Shared Key Mismatch

A mismatched pre-shared key is surprisingly common, especially in environments where the PSK is long and complex and was typed by hand on both sides. One character off — a lowercase L instead of a one, a zero instead of the letter O, a missing special character — and Phase 1 authentication will fail every single time. The debug output is unambiguous:

*Apr 20 10:45:03.112: ISAKMP:(1004): Old State = IKE_I_MM5 New State = IKE_I_MM6

*Apr 20 10:45:03.112: ISAKMP:(1004): Authentication failed

*Apr 20 10:45:03.112: ISAKMP:(1004): Send ISAKMP_NOTIFY message to 10.2.2.1

*Apr 20 10:45:03.112: %CRYPTO-6-IKMP_MODE_FAILURE: Processing of Main mode failed with peer at 10.2.2.1

MM5 and MM6 are where the authentication exchange happens. Failure at this stage is almost always a PSK mismatch. Check the configured key:

router# show running-config | include crypto isakmp key

crypto isakmp key Sup3rS3cur3K3y!! address 10.2.2.1

Compare this character-for-character with what the remote side has. If the remote side is a Cisco router you have access to, use show running-config | include crypto isakmp key there as well. Re-enter the key on both sides, and copy-paste where possible; never type complex PSKs by hand:

router(config)# crypto isakmp key Sup3rS3cur3K3y!! address 10.2.2.1

Clear the ISAKMP SA and confirm Phase 1 reaches QM_IDLE state.
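Comparing two long keys by eye is exactly how the look-alike errors slip through. Here is a rough Python sketch of a comparison that reports where two keys diverge without printing either key; the function names, the list of confusable characters, and the "Tunne1" example key are assumptions for illustration. Run something like this only on a trusted workstation, since it handles the keys in clear text.

```python
# Groups of characters that are easy to confuse when typed by hand.
CONFUSABLE = [{"l", "1", "I"}, {"O", "0"}]


def first_difference(a: str, b: str):
    """Return the index of the first differing character, or None if equal."""
    for index, (x, y) in enumerate(zip(a, b)):
        if x != y:
            return index
    if len(a) != len(b):
        return min(len(a), len(b))
    return None


def describe_mismatch(a: str, b: str) -> str:
    """Describe the first mismatch by position only, never echoing the key."""
    index = first_difference(a, b)
    if index is None:
        return "keys match"
    if index >= min(len(a), len(b)):
        return f"keys differ in length from position {index + 1}"
    x, y = a[index], b[index]
    if any(x in group and y in group for group in CONFUSABLE):
        return f"position {index + 1}: look-alike characters"
    return f"position {index + 1}: characters differ"


# Hypothetical key pair with a digit one typed in place of a lowercase L.
print(describe_mismatch("Tunne1-K3y-0ne", "Tunnel-K3y-0ne"))
# The article's key with its final character dropped on one side.
print(describe_mismatch("Sup3rS3cur3K3y!!", "Sup3rS3cur3K3y!"))
```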

Root Cause 7: Wrong Interface Binding or Missing Route to Peer

The crypto map must be applied to the correct interface — specifically the interface that faces the WAN or public internet link over which the tunnel is built. If it's applied to the wrong interface, or if there's simply no route to the remote peer's IP, IKE packets will never reach the other side. The tunnel will appear to be configured correctly, but nothing will happen — no ISAKMP activity at all.

How to identify it: Verify the route to the remote peer and which interface the crypto map is applied to:

router# show ip route 10.2.2.1

% Network not in table

No route to the peer means ISAKMP packets are being dropped before they leave the box. Also verify the crypto map binding:

router# show interfaces GigabitEthernet0/1 | include Crypto

Crypto map tag: VPN-MAP, seqnum: 10, local addr: 10.1.1.1

Make sure the "local addr" here is the IP that the remote peer is configured to connect to. If the crypto map is on GigabitEthernet0/1 but the default route goes out GigabitEthernet0/0, VPN traffic will either exit unencrypted from the wrong interface or get dropped entirely.

How to fix it: Remove the crypto map from the wrong interface and bind it to the correct one:

router(config)# interface GigabitEthernet0/1

router(config-if)# no crypto map VPN-MAP

router(config-if)# exit

router(config)# interface GigabitEthernet0/0

router(config-if)# crypto map VPN-MAP

Add any missing static routes or verify that the dynamic routing protocol is advertising the peer's IP correctly before clearing the SAs.
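Several of the root causes above leave a recognisable string in the debug or log output. As a triage aid, those strings can be collected into a small lookup. The Python sketch below does that with only the messages quoted in this article; the table and the classify function are hypothetical, and a real log will contain plenty of lines that match none of them.

```python
# (substring to look for, likely root cause), using messages quoted above.
SIGNATURES = [
    ("no offers accepted", "Phase 1 (ISAKMP) proposal mismatch"),
    ("transform proposal not supported", "Phase 2 transform set mismatch"),
    ("proxy identities not supported", "crypto ACL not mirrored"),
    ("not responding, tearing down sa", "DPD teardown"),
    ("authentication failed", "pre-shared key mismatch"),
]


def classify(line: str):
    """Return the likely root cause for a log line, or None if unrecognised."""
    lowered = line.lower()
    for needle, cause in SIGNATURES:
        if needle in lowered:
            return cause
    return None


sample_lines = [
    "*Apr 20 09:14:23.441: ISAKMP:(0): no offers accepted!",
    "*Apr 20 09:31:04.223: IPSEC(ipsec_process_proposal): proxy identities not supported",
    "*Apr 20 10:45:03.112: ISAKMP:(1004): Authentication failed",
    "*Apr 20 10:02:41.553: ISAKMP: DPD request sent to 10.2.2.1",
]
for line in sample_lines:
    print(classify(line), "<-", line)
```

A missing route or a crypto map on the wrong interface produces no ISAKMP output at all, so an empty debug is itself a hint to check Root Cause 7 first.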

Prevention

Most of these failures are entirely preventable with discipline at configuration time. Before any tunnel goes live, document the Phase 1 and Phase 2 parameters agreed upon with the remote party — in writing, in the change ticket. Both sides should confirm their settings before the maintenance window starts. Don't wait until the tunnel fails at 2am to discover that the remote vendor defaults to DH group 2 while your policy requires group 14.

Standardize on IKEv2 where possible. It's cleaner, faster to negotiate, less ambiguous than IKEv1 Main Mode, and eliminates a lot of the edge cases that make IKEv1 debugging painful. Many of the Phase 1 mismatch scenarios covered above are handled more gracefully by IKEv2's single exchange model.

Treat the crypto ACL as a paired artifact — both sides live in the same change ticket and get updated together. Every time a new subnet needs to traverse the tunnel, both the local permit entry and its remote mirror entry are added as part of the same change. A mismatch here causes the kind of intermittent, subnet-specific failures that are genuinely hard to diagnose without knowing exactly what you're looking for.

For NAT-T, enable it proactively on any tunnel where the peer might be behind NAT — or might ever be behind NAT in the future. It adds negligible overhead and avoids a class of failures that are disproportionately hard to diagnose. Set NAT-T keepalives below your NAT device's UDP timeout. When in doubt, use 20 seconds.

Set up syslog alerting for the messages that signal tunnel trouble: CRYPTO-4-IKMP_NO_SA, CRYPTO-6-IKMP_MODE_FAILURE, and CRYPTO-4-RECVD_PKT_NOT_IPSEC. These show up immediately when a tunnel fails, before the helpdesk queue starts filling up. Pair that with an IP SLA object that sends probes across the tunnel every 30 seconds, tied to a track object that can trigger routing changes or alerts when the tunnel drops:

router(config)# ip sla 1

router(config-ip-sla)# icmp-echo 192.168.20.1 source-interface GigabitEthernet0/0

router(config-ip-sla-echo)# frequency 30

router(config-ip-sla-echo)# exit

router(config)# ip sla schedule 1 life forever start-time now

router(config)# track 1 ip sla 1 reachability

Finally, keep a runbook entry for each tunnel that lists the Phase 1 policy number, Phase 2 transform set name, crypto ACL name, PSK (stored in your secrets manager, not the runbook), and the quick commands needed to check and reset the tunnel. When something breaks at 3am, the difference between a 5-minute fix and a 45-minute outage is knowing immediately which commands to run and in what order.
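If your runbooks live alongside code, the entry can be a small structured record instead of free text. The following Python sketch shows one possible shape; the TunnelRunbook class, its field names, and the vault-style secret reference are all hypothetical, and the commands it lists are simply the ones used throughout this article.

```python
from dataclasses import dataclass


@dataclass
class TunnelRunbook:
    """One tunnel's runbook entry. The PSK itself is never stored here."""
    name: str
    peer_ip: str
    isakmp_policy: int
    transform_set: str
    crypto_acl: str
    psk_secret_ref: str  # pointer into a secrets manager (assumed format)

    def check_commands(self) -> list:
        """Read-only commands, in the order to run them."""
        return ["show crypto isakmp sa", "show crypto ipsec sa"]

    def reset_commands(self) -> list:
        """Disruptive commands that force a renegotiation."""
        return ["clear crypto isakmp", "clear crypto sa"]

    def render(self) -> str:
        """Render the entry as plain text for a wiki page or ticket."""
        lines = [
            f"Tunnel: {self.name} (peer {self.peer_ip})",
            f"Phase 1 policy: {self.isakmp_policy}",
            f"Phase 2 transform set: {self.transform_set}",
            f"Crypto ACL: {self.crypto_acl}",
            f"PSK: see {self.psk_secret_ref}",
            "Check: " + "; ".join(self.check_commands()),
            "Reset: " + "; ".join(self.reset_commands()),
        ]
        return "\n".join(lines)


entry = TunnelRunbook(
    name="VPN-MAP",
    peer_ip="10.2.2.1",
    isakmp_policy=10,
    transform_set="TS-MATCH",
    crypto_acl="VPN-ACL",
    psk_secret_ref="vault:network/vpn/branch-20",  # hypothetical path
)
print(entry.render())
```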