Overview

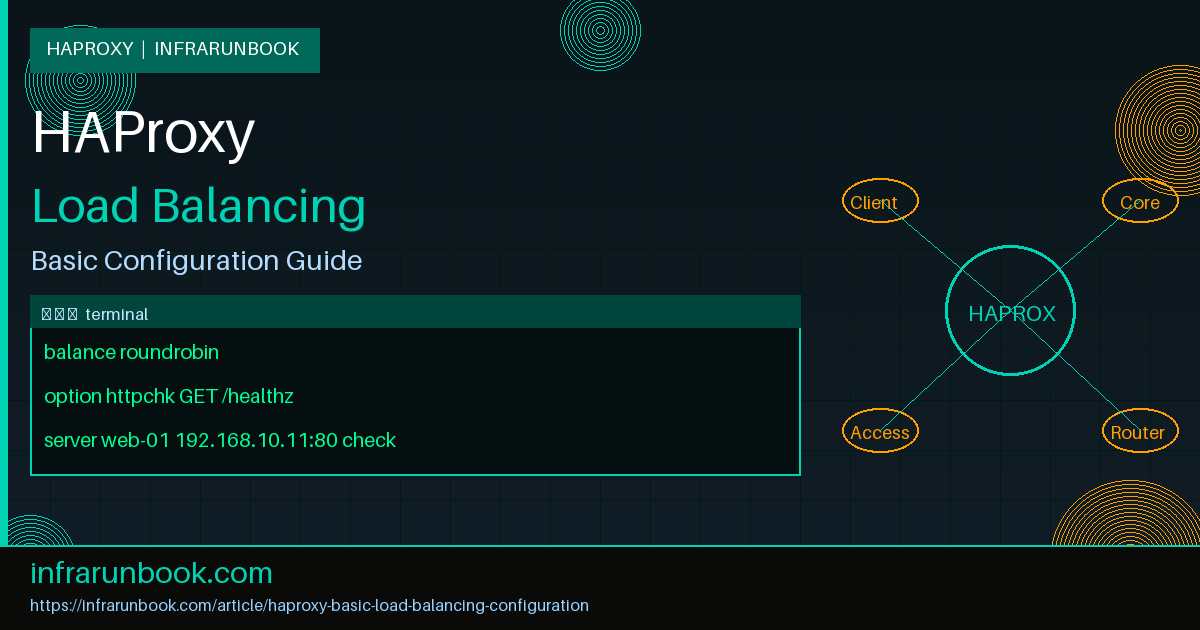

HAProxy (High Availability Proxy) is a battle-tested, open-source TCP and HTTP load balancer trusted in production environments worldwide. It provides fine-grained control over connection handling, health checks, session persistence, and routing logic — all with minimal CPU and memory overhead. Unlike cloud-native load balancers, HAProxy runs entirely on your own infrastructure, giving you full visibility and control over every connection.

This guide walks through a complete, production-ready basic load balancing setup using HAProxy on a Linux server. By the end you will have a working HTTP load balancer that distributes requests across multiple backend web servers using round-robin scheduling, with active HTTP health checks ensuring only healthy nodes receive traffic.

Prerequisites

- A Linux server running Ubuntu 22.04 LTS or Rocky Linux 9 — hostname sw-infrarunbook-01, IP 192.168.10.10

- Root or sudo access via the infrarunbook-admin account

- Three backend web servers reachable over the network: 192.168.10.11, 192.168.10.12, 192.168.10.13

- HAProxy 2.8 LTS or later on the load balancer host (Step 1 covers installation)

- Basic familiarity with Linux networking and systemd service management

- Ports 80 and 8404 open in the firewall on the HAProxy host

- Backend servers serving a health check endpoint at /healthz that returns HTTP 200
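Before pointing HAProxy at the backends, it can help to confirm that each one really answers on /healthz. The following is a minimal sketch rather than part of the original setup: the function name check_healthz is illustrative, and it assumes curl is installed and that the backends listen on port 80 as in the rest of this guide.

```shell
# check_healthz HOST...: request /healthz on each host and report the result.
# Returns non-zero if any host answers with something other than HTTP 200.
check_healthz() {
  failed=0
  for host in "$@"; do
    # -m 3 caps each probe at three seconds; curl prints 000 when it cannot connect
    code=$(curl -s -o /dev/null -m 3 -w '%{http_code}' "http://$host/healthz")
    if [ "$code" = "200" ]; then
      echo "$host ok"
    else
      echo "$host FAILED (HTTP ${code:-none})"
      failed=1
    fi
  done
  return "$failed"
}

# Example: check_healthz 192.168.10.11 192.168.10.12 192.168.10.13
```

A non-zero exit status makes the function usable as a gate in a provisioning script.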

Step 1: Install HAProxy

On Ubuntu 22.04 the default apt repository ships an older HAProxy release. Use the vbernat/haproxy-2.8 PPA, maintained by the Debian HAProxy package maintainer, for the latest 2.8 LTS build:

sudo apt-get install --no-install-recommends software-properties-common

sudo add-apt-repository ppa:vbernat/haproxy-2.8

sudo apt-get update

sudo apt-get install haproxy=2.8.\*

On Rocky Linux 9 or RHEL 9 (the distribution package may be older than 2.8, so check the version afterwards):

sudo dnf install haproxy -y

Confirm the installed version before continuing:

haproxy -v

Expected output:

HAProxy version 2.8.x 2024/xx/xx - https://haproxy.org/

Step 2: Understand the Configuration Structure

The main HAProxy configuration file is located at /etc/haproxy/haproxy.cfg. It is divided into four primary sections that are evaluated top to bottom:

- global — Process-wide settings: logging destination, OS user and group, max connections, SSL/TLS tuning, and the runtime API socket path.

- defaults — Default values inherited by all frontend and backend sections unless explicitly overridden. Set your timeouts, logging format, and mode here.

- frontend — Defines a listening socket and routes incoming connections to one or more backends. Think of this as the ingress point.

- backend — Defines the pool of upstream servers, the load balancing algorithm, health check parameters, and per-server limits.

Some configurations also use a listen block, which combines a frontend and backend into a single stanza. This is handy for simple TCP proxies but less flexible for complex HTTP routing.
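To see at a glance which sections an existing configuration file contains, a small awk filter is enough. This is a sketch under simple assumptions: the helper name list_sections is made up for illustration, and it only recognizes the five section keywords discussed above when they appear as the first word on a line.

```shell
# list_sections FILE: print "line: keyword [name]" for every section header in FILE.
list_sections() {
  awk '
    $1 ~ /^(global|defaults|frontend|backend|listen)$/ {
      # global and defaults usually carry no name, so only append $2 when present
      printf "%d: %s%s\n", NR, $1, ($2 != "" ? " " $2 : "")
    }
  ' "$1"
}

# Example: list_sections /etc/haproxy/haproxy.cfg
```

Directives such as "log global" are not reported, because the keyword is not the first word on the line.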

Step 3: Back Up the Default Configuration

Always preserve the default config before making any changes:

sudo cp /etc/haproxy/haproxy.cfg /etc/haproxy/haproxy.cfg.bak

Step 4: Write the Load Balancer Configuration

Open the configuration file for editing:

sudo nano /etc/haproxy/haproxy.cfg

Replace the contents with the configuration in the next section. Each block is annotated so you understand why each directive is present.

Full Configuration Example

#---------------------------------------------------------------------

# Global settings

#---------------------------------------------------------------------

global

log /dev/log local0

log /dev/log local1 notice

chroot /var/lib/haproxy

stats socket /run/haproxy/admin.sock mode 660 level admin expose-fd listeners

stats timeout 30s

user haproxy

group haproxy

daemon

# SSL/TLS hardening (modern compatibility profile)

ca-base /etc/ssl/certs

crt-base /etc/ssl/private

ssl-default-bind-ciphers ECDHE-ECDSA-AES128-GCM-SHA256:ECDHE-RSA-AES128-GCM-SHA256:ECDHE-ECDSA-AES256-GCM-SHA384:ECDHE-RSA-AES256-GCM-SHA384

ssl-default-bind-ciphersuites TLS_AES_128_GCM_SHA256:TLS_AES_256_GCM_SHA384:TLS_CHACHA20_POLY1305_SHA256

ssl-default-bind-options ssl-min-ver TLSv1.2 no-tls-tickets

# Maximum concurrent connections across all frontends

maxconn 50000

#---------------------------------------------------------------------

# Defaults — inherited by all frontend/backend sections

#---------------------------------------------------------------------

defaults

log global

mode http

option httplog

option dontlognull

option forwardfor

option http-server-close

timeout connect 5s

timeout client 30s

timeout server 30s

errorfile 400 /etc/haproxy/errors/400.http

errorfile 403 /etc/haproxy/errors/403.http

errorfile 408 /etc/haproxy/errors/408.http

errorfile 500 /etc/haproxy/errors/500.http

errorfile 502 /etc/haproxy/errors/502.http

errorfile 503 /etc/haproxy/errors/503.http

errorfile 504 /etc/haproxy/errors/504.http

#---------------------------------------------------------------------

# Stats page — bind to internal management IP only

#---------------------------------------------------------------------

listen stats

bind 192.168.10.10:8404

stats enable

stats uri /haproxy-stats

stats realm HAProxy\ Statistics

stats auth infrarunbook-admin:Ch4ng3M3N0w!

stats refresh 10s

stats show-node

stats show-legends

stats hide-version

#---------------------------------------------------------------------

# Frontend — HTTP ingress on all interfaces, port 80

#---------------------------------------------------------------------

frontend http_frontend

bind *:80

default_backend web_servers

# Capture the Host header for access log enrichment

http-request capture req.hdr(Host) len 64

# Inject basic security response headers

http-response set-header X-Content-Type-Options nosniff

http-response set-header X-Frame-Options SAMEORIGIN

#---------------------------------------------------------------------

# Backend — three-node web server pool

#---------------------------------------------------------------------

backend web_servers

balance roundrobin

# HTTP health check targeting the application health endpoint

option httpchk

http-check send meth GET uri /healthz ver HTTP/1.1 hdr Host solvethenetwork.com

# Health check tuning: probe every 5s, down after 3 failures, up after 2 successes

# maxconn per server protects backends during traffic spikes

default-server inter 5s fall 3 rise 2 maxconn 200

server web-01 192.168.10.11:80 check

server web-02 192.168.10.12:80 check

server web-03 192.168.10.13:80 check

Step 5: Validate the Configuration

HAProxy provides a built-in syntax checker. Always run this before touching a live service — a config error will prevent the process from starting or reloading:

sudo haproxy -c -f /etc/haproxy/haproxy.cfg

Successful output:

Configuration file is valid

If the check reports an error, the message includes the section name and line number. Fix the issue and re-run the check before proceeding.
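One way to make validation hard to skip is to edit a copy of the configuration and only move it into place once it passes the check. The sketch below illustrates that idea; the function name install_config and the timestamped backup naming are assumptions, not something HAProxy provides, and it must run with enough privileges to write to /etc/haproxy.

```shell
# install_config CANDIDATE [LIVE]: validate CANDIDATE, back up LIVE, then replace it.
install_config() {
  candidate=$1
  live=${2:-/etc/haproxy/haproxy.cfg}
  # -c checks the file and exits; -q suppresses the normal output
  if ! haproxy -c -q -f "$candidate"; then
    echo "validation failed; $live left untouched" >&2
    return 1
  fi
  # Timestamped copies let several generations of backups coexist
  if [ -f "$live" ]; then
    cp -p "$live" "$live.$(date +%Y%m%d-%H%M%S).bak"
  fi
  cp "$candidate" "$live"
}

# Example: install_config /root/haproxy.cfg.new
```

The live file is only touched after the candidate has passed the check, so a typo never reaches the path the service reads.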

Step 6: Enable and Start HAProxy

sudo systemctl enable haproxy

sudo systemctl restart haproxy

sudo systemctl status haproxy

Look for active (running) in the status output. If HAProxy fails to start, inspect the journal for the detailed error:

sudo journalctl -u haproxy -n 50 --no-pager

Step 7: Open Firewall Ports

On Ubuntu with UFW:

sudo ufw allow 80/tcp

sudo ufw allow 8404/tcp comment "HAProxy stats"

sudo ufw reload

On Rocky Linux with firewalld:

sudo firewall-cmd --permanent --add-port=80/tcp

sudo firewall-cmd --permanent --add-port=8404/tcp

sudo firewall-cmd --reload

Verification Steps

Confirm HAProxy Is Listening

Run the following on sw-infrarunbook-01 and verify both ports appear:

sudo ss -tlnp | grep haproxy

Expected output:

LISTEN 0 128 0.0.0.0:80 0.0.0.0:* users:(("haproxy",pid=XXXX,fd=5))

LISTEN 0 128 192.168.10.10:8404 0.0.0.0:* users:(("haproxy",pid=XXXX,fd=6))

Send a Test HTTP Request

From a host on the 192.168.10.0/24 network:

curl -I http://192.168.10.10/

You should receive an HTTP 200 response. If you get a 503 Service Unavailable, no backend server is currently passing its health check; a 502 Bad Gateway means a backend accepted the connection but returned an invalid or empty response. Check the stats page for details.

Verify Round-Robin Distribution

Send nine sequential requests and observe which backend responds. This requires the backends to identify themselves in the response, for example through a custom header or a distinctive response body; curl's %{remote_ip} variable only reports the load balancer's own address. Assuming the first line of each response body names the backend, you will see the requests cycle across all three nodes:

for i in $(seq 1 9); do

curl -s http://192.168.10.10/ | head -n 1

done

Access the Stats Page

Open a browser and navigate to:

http://192.168.10.10:8404/haproxy-stats

Authenticate with infrarunbook-admin and the password configured in the stats auth directive. The dashboard shows real-time session counts, connection rates, health check status (green = UP, red = DOWN), error counters, and backend response times for every server in the pool.
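The same data can be fetched as CSV by appending ;csv to the stats URI, which is convenient for scripts. The filter below is a sketch that assumes the CSV header line names the columns pxname, svname, and status, as HAProxy's stats export does; the function name server_status is illustrative. It looks the columns up by name rather than by position.

```shell
# server_status PROXY: read stats CSV on stdin, print "server status" for PROXY's servers.
server_status() {
  awk -F, -v px="$1" '
    NR == 1 {
      sub(/^# /, "", $1)                       # header line starts with "# pxname"
      for (i = 1; i <= NF; i++) col[$i] = i    # map column name to field index
      next
    }
    $(col["pxname"]) == px &&
    $(col["svname"]) != "FRONTEND" && $(col["svname"]) != "BACKEND" {
      print $(col["svname"]), $(col["status"])
    }
  '
}

# Example (substitute the password from the stats auth directive for PASSWORD):
# curl -s -u infrarunbook-admin:PASSWORD 'http://192.168.10.10:8404/haproxy-stats;csv' | server_status web_servers
```

The FRONTEND and BACKEND summary rows are skipped so that only individual servers are listed.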

Query the Runtime API

The HAProxy admin socket enables live inspection and control without a config reload. Install socat if not already present, then query server state:

sudo apt-get install socat -y

echo "show servers state" | sudo socat stdio /run/haproxy/admin.sock

Perform a Graceful Reload

When updating the configuration on a live system, always use reload, never restart. On reload, HAProxy starts new worker processes with the new configuration and hands the listening sockets over to them, while the old workers stop accepting new connections and finish serving the ones already established:

sudo systemctl reload haproxy

Understanding Balance Algorithms

The roundrobin algorithm distributes new connections sequentially across all active backend servers. It is the correct default for stateless HTTP workloads where all backends have equal capacity and similar response times.
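A quick way to check the distribution empirically is to tally which backend answered each request. The sketch below assumes, purely for illustration, that the first line of each backend's response body identifies the backend; the function names probe and tally are made up here.

```shell
# tally: count identical lines on stdin, most frequent first.
tally() {
  sort | uniq -c | sort -k1,1nr -k2
}

# probe URL [COUNT]: fetch URL COUNT times (default 9), print the first body line of each response.
probe() {
  url=$1
  count=${2:-9}
  i=0
  while [ "$i" -lt "$count" ]; do
    curl -s "$url" | head -n 1
    i=$((i + 1))
  done
}

# Example: probe http://192.168.10.10/ 9 | tally
```

With three healthy, equally weighted backends and no other traffic, each backend should account for roughly a third of the requests.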

Other commonly used algorithms include:

- leastconn — Routes each new connection to the server with the fewest active sessions. Best for long-lived connections such as databases, WebSockets, or LDAP.

- source — Hashes the client source IP address to consistently send the same client to the same backend. Provides rudimentary session affinity without cookies but breaks if client IPs change (e.g., mobile users on CGNAT).

- uri — Hashes the request URI path. Useful for reverse proxy caching where the same URL should always reach the same cache node.

- random — Picks two servers at random and routes to the one with fewer connections (power-of-two-choices). Performs well with very large backend pools where leastconn becomes expensive.
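The idea behind hash-based algorithms such as source can be illustrated in a few lines of shell. This is a conceptual sketch only: HAProxy uses its own hash function and takes server weights into account, whereas the example below substitutes the POSIX cksum CRC and a plain modulo. The function name pick_server is hypothetical.

```shell
# pick_server CLIENT_IP SERVER_COUNT: map an address to an index in [0, SERVER_COUNT).
pick_server() {
  # cksum prints "<crc> <byte count>"; only the CRC is used
  printf '%s' "$1" | cksum | awk -v n="$2" '{ print $1 % n }'
}

# The same address always maps to the same index:
# pick_server 203.0.113.7 3
# pick_server 203.0.113.7 3
```

Because the result depends only on the input string and the server count, a client keeps its server until its address or the pool size changes, which is the affinity property and the limitation described above.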

To switch algorithms, change the balance directive in the backend block, validate, and reload:

balance leastconn

Health Check Tuning

The configuration above uses an HTTP health check targeting /healthz. This is strongly preferred over a bare TCP check because it validates that the application process is actually serving HTTP responses, not just that the TCP port is accepting connections.

Key health check parameters on default-server:

- inter 5s — Send a probe every 5 seconds.

- fall 3 — Mark the server DOWN after three consecutive failed probes.

- rise 2 — Mark the server UP again after two consecutive successful probes.

- maxconn 200 — Queue connections at HAProxy rather than forwarding more than 200 simultaneous connections to a single backend.
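These values translate directly into how long a state change takes to register. As a rough worked example, ignoring the time each probe itself takes and any probe timeout, the window is simply the interval multiplied by the number of consecutive probes required. The helper name check_window is illustrative.

```shell
# check_window INTERVAL_SECONDS COUNT: seconds spanned by COUNT consecutive probes.
check_window() {
  echo $(( $1 * $2 ))
}

# Values from the default-server line: inter 5s, fall 3, rise 2
echo "marked DOWN after about $(check_window 5 3)s of failed probes"
echo "marked UP after about $(check_window 5 2)s of successful probes"
```

With the settings in this guide, a dead backend can therefore keep receiving traffic for up to roughly 15 seconds, which is the figure to weigh against probe load when tuning inter and fall.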

For backends that do not expose an HTTP health endpoint, use a TCP-level check instead:

backend web_servers

balance roundrobin

option tcp-check

default-server inter 5s fall 3 rise 2

server web-01 192.168.10.11:80 check

server web-02 192.168.10.12:80 check

server web-03 192.168.10.13:80 check

Draining a Server for Maintenance

To remove a server from the pool without a config reload — for example, before applying OS patches — use the runtime API to set it to maintenance mode. HAProxy will stop sending new connections to it while existing sessions finish naturally:

echo "set server web_servers/web-02 state maint" | sudo socat stdio /run/haproxy/admin.sock

Once maintenance is complete, restore the server to the active pool:

echo "set server web_servers/web-02 state ready" | sudo socat stdio /run/haproxy/admin.sock

Common Mistakes

1. Skipping Config Validation Before Reload

Running systemctl reload haproxy without first running haproxy -c -f /etc/haproxy/haproxy.cfg risks leaving a broken config on disk. HAProxy refuses to load a configuration that fails parsing, so the running processes keep the old settings, but the reload fails and the next restart or reboot will not bring the service up. Validate every time.
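A small wrapper can make the check and the reload a single step. The following is a sketch, with the function name safe_reload and the HAPROXY_CFG variable chosen for illustration; it is meant to be run as root (for example from a root shell), since neither command is prefixed with sudo.

```shell
# Path to the live configuration; override by exporting HAPROXY_CFG
HAPROXY_CFG="${HAPROXY_CFG:-/etc/haproxy/haproxy.cfg}"

# safe_reload: reload HAProxy only if the configuration passes the syntax check.
safe_reload() {
  if haproxy -c -q -f "$HAPROXY_CFG"; then
    systemctl reload haproxy
  else
    echo "config check failed; not reloading" >&2
    return 1
  fi
}

# Example: safe_reload
```

If the check fails, the function returns non-zero without touching the running service.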

2. Setting Timeouts Too Low for the Application

A 30-second timeout client is suitable for short-lived API calls but will prematurely terminate large file downloads, server-sent event streams, or slow mobile connections. Match your timeout values to the longest legitimate request your application serves.

3. Omitting option forwardfor

Without this directive, every request your backend servers receive appears to come from HAProxy's IP address. This breaks GeoIP lookups, per-client rate limiting, security audit logs, and web application firewalls that rely on the real client IP. Always include option forwardfor in HTTP mode.

4. Binding the Stats Page to All Interfaces

Using bind *:8404 on the stats listener exposes your credentials, server topology, and connection metrics to anyone on any network interface. Always bind the stats page to an internal management IP and enforce a strong password.
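To confirm that the stats port is not listening on a wildcard address, the output of ss can be filtered for it. The helper below is a sketch with an illustrative name, wildcard_listeners; it assumes the column layout that ss -tln prints, where the fourth column is the local address and port.

```shell
# wildcard_listeners PORT: read `ss -tln` output on stdin and print any listener
# on PORT that is bound to all interfaces (0.0.0.0, * or [::]).
wildcard_listeners() {
  awk -v port="$1" '
    $1 == "LISTEN" {
      n = split($4, parts, ":")
      p = parts[n]                                        # port is the last colon-separated piece
      addr = substr($4, 1, length($4) - length(p) - 1)    # everything before ":port"
      if (p == port && (addr == "0.0.0.0" || addr == "*" || addr == "[::]")) print $4
    }
  '
}

# Example: sudo ss -tln | wildcard_listeners 8404
```

No output means the port is either closed or bound to a specific address, as it is with the bind 192.168.10.10:8404 line in this guide.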

5. Using restart Instead of reload on a Live System

A systemctl restart haproxy on a live load balancer drops all active connections during the brief process restart window. Use systemctl reload haproxy for all configuration changes in production. HAProxy's reload mechanism transfers listening sockets to the new process without interrupting established sessions.

6. Not Setting maxconn on Backend Servers

Without a per-server connection cap, a traffic spike can forward thousands of simultaneous connections to a backend that can only handle a few hundred. HAProxy will queue excess connections internally (up to the global maxconn limit) when per-server maxconn is set, protecting backends from being overwhelmed.

7. Forgetting option http-server-close

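Without this option, HAProxy defaults to keep-alive on both the client and the server side and reuses idle server connections where it considers that safe. Some backends mishandle reused connections, which shows up as sporadic errors that are hard to trace. http-server-close closes the server-side connection after each response while keeping the client-side keep-alive connection open. It is a conservative choice when you cannot vouch for how the backends handle connection reuse, at the cost of one extra connection setup per request.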