What Routing Instances Actually Are

Junos has always had routing instances baked into its architecture. Unlike some platforms where VRF support feels like an afterthought bolted onto a flat routing model, Juniper built the routing instance framework from the ground up. Every Junos device runs at least one routing instance, the master instance, even if you've never touched a `routing-instances` stanza in your career.

The master instance is what you're working with any time you configure routes under `routing-options`, assign interfaces without specifying an instance, or run `show route` without arguments. Its primary routing table is `inet.0` for IPv4 unicast. There's also `inet6.0` for IPv6, `inet.2` for multicast RPF (reverse-path forwarding) lookups, `inet.3` for MPLS LSP next hops, and a handful of others depending on what features you have enabled. Most engineers live exclusively in this space for years before they ever need to carve out a separate routing domain.

A routing instance is an independent collection of routing protocol instances, routing tables, and interfaces. When you define one, you're telling Junos: treat everything in this bucket as a separate forwarding domain. Traffic entering an interface assigned to that instance can only reach other interfaces and routes within that same instance — unless you explicitly build a bridge between them. It's real isolation, not just policy-based filtering.

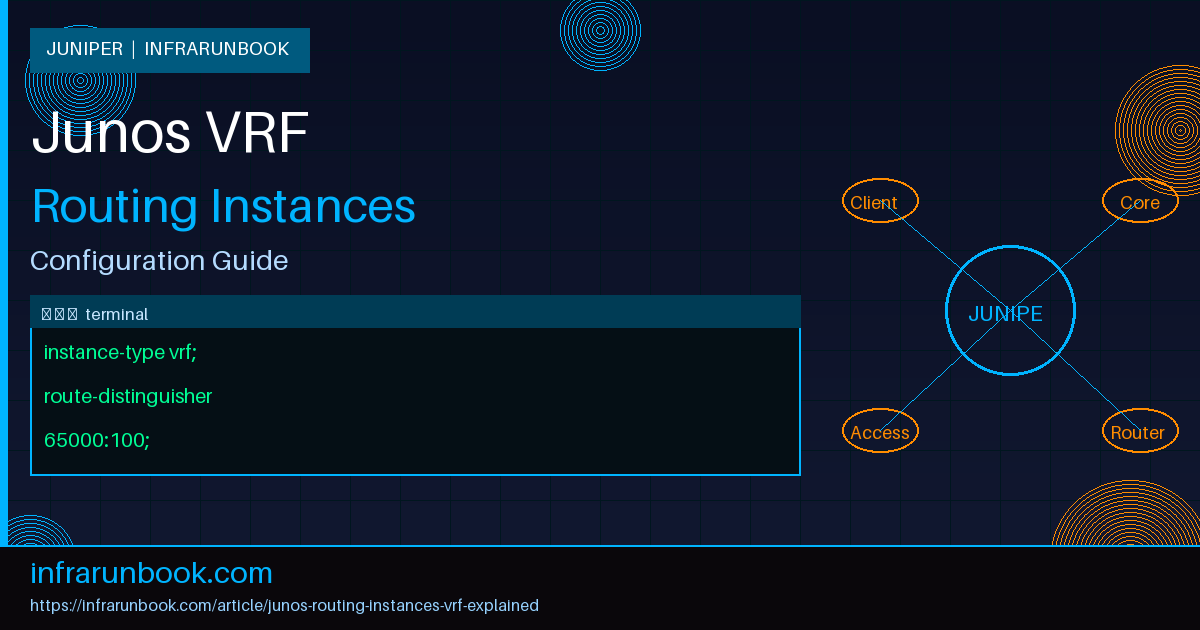

Instance Types — It's Not All VRF

This is where a lot of engineers get tripped up. In Junos, VRF is one type of routing instance, not a synonym for the concept itself. The `instance-type` knob in your configuration determines what the instance actually does, and getting it wrong causes real headaches. Let me walk through the ones you'll encounter in production.

vrf — This is what most people mean when they say VRF. It's designed for Layer 3 VPN services (L3VPN) using MPLS. It requires a route distinguisher and route targets. This is the instance type you configure when you're building MPLS-based VPNs where BGP carries VPN routes as MP-BGP VPNv4 or VPNv6 prefixes between PE routers.

virtual-router — This is the one that catches engineers off guard most often. It creates an independent routing domain just like `vrf`, but without any MPLS or BGP VPN machinery: no route distinguisher, no route targets. If you want to segment traffic on a single device without involving MPLS, `virtual-router` is your tool. I've used it extensively for out-of-band management separation and for hosting multiple customer routing domains on edge routers that don't run MPLS.

vpls — For Virtual Private LAN Service. Combines Layer 2 switching with MPLS to stretch broadcast domains across a WAN. You'll see this on older service provider gear still running VPLS fabrics.

evpn — Ethernet VPN, the modern replacement for VPLS in most architectures. If you're building a new data center fabric or a new SP Layer 2 service, this is where you land.

forwarding — A special-purpose instance used for filter-based forwarding. You reference it in firewall filters to redirect traffic to alternate next-hops. This is the instance type behind a lot of clever traffic steering designs — inline firewalls, load balancers, DPI engines.
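To make the forwarding instance concrete, here is a minimal filter-based forwarding sketch. It assumes HTTP and HTTPS traffic arriving on `ge-0/0/6.0` should be handed to an inline firewall at `10.50.0.2` on a directly connected subnet; the instance, filter, and rib-group names, the interface, and the addresses are all hypothetical. The rib-group copies connected routes into the forwarding instance's table so its static next hop can resolve; without that step the redirect typically has nowhere to go.

```
routing-options {
    interface-routes {
        /* Copy connected routes into the forwarding instance as well */
        rib-group inet FBF-RIB;
    }
    rib-groups {
        FBF-RIB {
            import-rib [ inet.0 TO-FIREWALL.inet.0 ];
        }
    }
}
routing-instances {
    TO-FIREWALL {
        instance-type forwarding;
        routing-options {
            static {
                /* Everything steered into this instance goes to the firewall */
                route 0.0.0.0/0 next-hop 10.50.0.2;
            }
        }
    }
}
firewall {
    family inet {
        filter STEER-TO-FIREWALL {
            term web {
                from {
                    protocol tcp;
                    destination-port [ http https ];
                }
                /* Look these packets up in TO-FIREWALL.inet.0 instead of inet.0 */
                then routing-instance TO-FIREWALL;
            }
            term default {
                then accept;
            }
        }
    }
}
interfaces {
    ge-0/0/6 {
        unit 0 {
            family inet {
                filter {
                    input STEER-TO-FIREWALL;
                }
            }
        }
    }
}
```

Everything that doesn't match the `web` term keeps using the normal `inet.0` lookup, which is what makes this a steering tool rather than a separate routing domain.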

Understanding which type fits your use case is step one. The most common mistake I see is engineers configuring `vrf` instances on devices with no MPLS in sight, then wrestling with route distinguishers, route targets, and BGP VPN behavior they never needed. The fix is usually just switching to `virtual-router`.

How VRF Instances Work Mechanically

When you configure a `vrf` instance, Junos creates a dedicated routing table for it. If your instance is named `CUSTOMER-A`, the IPv4 unicast table becomes `CUSTOMER-A.inet.0`. You can always verify this with `show route table CUSTOMER-A.inet.0`. Nothing in that table bleeds into `inet.0` unless you've configured route leaking.

The route distinguisher (RD) makes VPN routes globally unique in the BGP control plane. Two different customers might both advertise a route to `10.10.0.0/24`, but with different RDs, BGP sees them as entirely distinct prefixes: in `bgp.l3vpn.0` they show up with the RD prepended, for example `65000:100:10.10.0.0/24` and `65000:200:10.10.0.0/24`. The RD doesn't control which routes get imported into which VRF; that's the job of route targets.

Route targets (RTs) are BGP extended communities. You define an export policy that stamps outgoing VPN routes with specific RT values, and an import policy that accepts routes carrying specific RTs. This is how multi-site L3VPN topologies control route distribution between sites. A hub-and-spoke VPN might have spokes exporting with RT `65000:100` and importing `65000:200`, while the hub exports `65000:200` and imports `65000:100`. Spokes can reach the hub but not each other, controlled entirely by RT policy with no ACLs required.
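Here is a minimal sketch of the spoke side of that hub-and-spoke scheme, written as explicit import and export policies. Only the RT values come from the description above; the instance name `SPOKE-1`, the interface, the RD `65000:1001`, and the community names are hypothetical. The hub PE would mirror it with the two communities swapped.

```
policy-options {
    /* Hypothetical names; values match the hub-and-spoke example */
    community RT-SPOKES members target:65000:100;
    community RT-HUB members target:65000:200;
    policy-statement SPOKE-IMPORT {
        /* Accept only VPN routes the hub exported */
        term from-hub {
            from {
                protocol bgp;
                community RT-HUB;
            }
            then accept;
        }
        term reject-rest {
            then reject;
        }
    }
    policy-statement SPOKE-EXPORT {
        /* Tag this spoke's local routes with the spoke RT */
        term local-routes {
            from protocol [ direct static ];
            then {
                community add RT-SPOKES;
                accept;
            }
        }
        term reject-rest {
            then reject;
        }
    }
}
routing-instances {
    SPOKE-1 {
        instance-type vrf;
        interface ge-0/0/1.100;
        route-distinguisher 65000:1001;
        vrf-import SPOKE-IMPORT;
        vrf-export SPOKE-EXPORT;
        vrf-table-label;
    }
}
```

Because no spoke imports `target:65000:100`, spoke routes only ever land in the hub's VRF, which is exactly the asymmetry the RT scheme is designed to produce.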

Here's a basic VRF configuration on a PE router:

```
routing-instances {
    CUSTOMER-A {
        instance-type vrf;
        interface ge-0/0/1.100;
        route-distinguisher 65000:100;
        vrf-target target:65000:100;
        vrf-table-label;
    }
}
```

The `vrf-table-label` line tells Junos to allocate a single MPLS label for the whole VRF. When traffic arrives from the core carrying that label, the PE pops it and performs an IP lookup in `CUSTOMER-A.inet.0` to pick the outgoing interface. Without it, Junos defaults to allocating labels per next hop, which consumes more labels and forwards on the label alone, with no IP lookup in the VRF.

The `vrf-target` shorthand automatically builds matching import and export policies using the specified community value. It's great for simple any-to-any topologies. For anything more complex, such as hub-and-spoke, extranet, or topologies where you need different import and export communities, you break it out explicitly:

```
routing-instances {
    CUSTOMER-A {
        instance-type vrf;
        interface ge-0/0/1.100;
        route-distinguisher 65000:100;
        vrf-import CUSTOMER-A-IMPORT;
        vrf-export CUSTOMER-A-EXPORT;
        vrf-table-label;
    }
}
policy-options {
    policy-statement CUSTOMER-A-IMPORT {
        term accept-vpn-routes {
            from {
                protocol bgp;
                community RT-CUSTOMER-A;
            }
            then accept;
        }
        term reject-rest {
            then reject;
        }
    }
    policy-statement CUSTOMER-A-EXPORT {
        term export-direct-and-static {
            from protocol [ direct static ];
            then {
                community add RT-CUSTOMER-A;
                accept;
            }
        }
        /* Explicit final reject so nothing else is exported into the VPN */
        term reject-rest {
            then reject;
        }
    }
    community RT-CUSTOMER-A members target:65000:100;
}
```

Virtual-Router — The Underappreciated Instance Type

If MPLS isn't in your environment, `virtual-router` gives you full routing domain isolation without any L3VPN overhead. Consider a scenario where a single router connects to two tenants who both advertise `192.168.1.0/24` to you. Without routing instances, those prefixes collide and only one wins. With `virtual-router`, each tenant lives in its own table with no conflict.

```
routing-instances {
    TENANT-ONE {
        instance-type virtual-router;
        interface ge-0/0/2.200;
        protocols {
            bgp {
                group TENANT-ONE-PEER {
                    type external;
                    peer-as 64512;
                    neighbor 172.16.10.2;
                }
            }
        }
    }
    TENANT-TWO {
        instance-type virtual-router;
        interface ge-0/0/3.300;
        protocols {
            bgp {
                group TENANT-TWO-PEER {
                    type external;
                    peer-as 64513;
                    neighbor 172.16.20.2;
                }
            }
        }
    }
}
```

Both tenants announce identical prefixes. Junos keeps them isolated in `TENANT-ONE.inet.0` and `TENANT-TWO.inet.0` respectively, so the device routes for both simultaneously with no overlap between the two tables.

Route Leaking Between Instances

Sometimes you need controlled communication between routing instances, and a shared services model is the classic case. All tenants need to reach a common DNS resolver or NTP server sitting behind a shared interface, but they shouldn't be able to reach each other. Junos supports this through the `instance-import` policy mechanism or through rib-groups.

The cleanest approach in most scenarios is an `instance-import` policy applied inside each tenant instance. The policy matches the shared services prefix in the `SHARED-SERVICES` instance and copies only that route into the tenant's table, so traffic to it follows the route's original next hop out of the shared services interface:

```
policy-options {
    policy-statement LEAK-SHARED-SERVICES {
        term shared-services-subnet {
            from {
                /* Only consider routes that live in SHARED-SERVICES */
                instance SHARED-SERVICES;
                route-filter 10.200.0.0/24 exact;
            }
            then accept;
        }
        term reject-rest {
            then reject;
        }
    }
}
routing-instances {
    TENANT-ONE {
        instance-type virtual-router;
        interface ge-0/0/2.200;
        routing-options {
            instance-import LEAK-SHARED-SERVICES;
        }
    }
    TENANT-TWO {
        instance-type virtual-router;
        interface ge-0/0/3.300;
        routing-options {
            instance-import LEAK-SHARED-SERVICES;
        }
    }
    SHARED-SERVICES {
        instance-type virtual-router;
        interface ge-0/0/4.400;
    }
}
```

This is surgical. You're not opening the floodgates between instances; you're copying one specific prefix into each tenant table. Being this precise prevents the kind of accidental route leaks that cause real customer impact. Don't be tempted to use `0.0.0.0/0` in that route-filter just because it's convenient. Keep in mind that this covers only one direction: for replies to get back, `SHARED-SERVICES` also needs routes to the tenant subnets, which works cleanly only when those subnets don't overlap.
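A minimal sketch of the return direction, using the same `instance-import` mechanism inside `SHARED-SERVICES`. The tenant subnets `10.101.0.0/24` (TENANT-ONE) and `10.102.0.0/24` (TENANT-TWO) and the policy name are hypothetical. If the tenants overlap, as in the earlier `192.168.1.0/24` example, only one of the two routes can be active in `SHARED-SERVICES.inet.0`, so replies would go to the wrong tenant and this approach breaks down.

```
policy-options {
    policy-statement LEAK-TENANT-SUBNETS {
        term tenant-one {
            from {
                instance TENANT-ONE;
                route-filter 10.101.0.0/24 exact;
            }
            then accept;
        }
        term tenant-two {
            from {
                instance TENANT-TWO;
                route-filter 10.102.0.0/24 exact;
            }
            then accept;
        }
        term reject-rest {
            then reject;
        }
    }
}
routing-instances {
    SHARED-SERVICES {
        routing-options {
            /* Pull each tenant's subnet back into the shared table */
            instance-import LEAK-TENANT-SUBNETS;
        }
    }
}
```

The tenants still never learn each other's subnets, so the isolation between them holds even though both can reach the shared services.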

Operational Commands You'll Actually Use

Once your instances are configured, you'll spend time in operational mode verifying state. A few commands become second nature.

Show the routing table for a specific instance:

```
show route table CUSTOMER-A.inet.0
```

Verify which interfaces are assigned to an instance (the `detail` output lists them):

```
show route instance CUSTOMER-A detail
```

Check BGP neighbors within an instance (here, the tenant peer from the virtual-router example):

```
show bgp neighbor 172.16.10.2 instance TENANT-ONE
```

Ping from within an instance; this one matters more than you'd think:

```
ping 10.10.0.1 routing-instance CUSTOMER-A
```

Traceroute within an instance:

```
traceroute 10.10.0.1 routing-instance CUSTOMER-A
```

That last pair is something I see newer engineers forget constantly. They run `ping 10.10.0.1` on a device with a VRF, get no response, and assume the VRF is broken. The ping was actually looked up in `inet.0`, not the VRF table. Always specify the routing instance when troubleshooting connectivity inside one.

Why This Matters in Real Networks

The value proposition for routing instances goes well beyond multi-tenancy on service provider gear. I've deployed these patterns in enterprise environments for several distinct reasons.

Management plane separation is one of the strongest use cases. Keeping the out-of-band management network in its own routing instance prevents management traffic from accidentally traversing production paths or being disrupted by production routing events. The management instance only knows about management prefixes. If the production network develops a routing loop or experiences a major flap, your ability to SSH to the device is unaffected. This is a meaningful operational resilience improvement, and it's nearly free to configure.
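A minimal sketch of that pattern, assuming an in-band management port on `ge-0/0/5.0`, a management address of `192.0.2.10/24`, and a management gateway at `192.0.2.1` (all hypothetical). It covers only the routing side: depending on your release and platform, host-originated traffic such as syslog, NTP, or outbound SSH may need extra configuration before it uses the instance, and the dedicated out-of-band `fxp0` port is typically handled through the separate `system management-instance` statement rather than a plain `virtual-router`.

```
interfaces {
    ge-0/0/5 {
        unit 0 {
            family inet {
                /* Hypothetical in-band management address */
                address 192.0.2.10/24;
            }
        }
    }
}
routing-instances {
    MGMT {
        instance-type virtual-router;
        interface ge-0/0/5.0;
        routing-options {
            static {
                /* This default route exists only in MGMT.inet.0 */
                route 0.0.0.0/0 next-hop 192.0.2.1;
            }
        }
    }
}
```

Because the default route lives only in `MGMT.inet.0`, a flap or loop in `inet.0` doesn't change how the management subnet is reached.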

Regulatory compliance is another driver. Some environments require demonstrable Layer 3 separation between network segments — PCI DSS zones being the obvious example. Running separate routing instances with auditable import and export policies is a much cleaner answer to a compliance audit than trying to explain a complex ACL matrix that technically achieves the same result but is harder to verify.

In-production testing is a pattern I genuinely enjoy. You can stand up a `virtual-router` instance on production hardware, point test interfaces into it, and validate new routing configurations without touching the master table. When you're confident in the behavior, you migrate. This is particularly valuable when you're testing new BGP policies or OSPF summarization changes that would be high-risk to apply directly in the production routing domain.

A Full Working Example

Let me pull this together with a realistic scenario. The router `sw-infrarunbook-01` is a PE device connecting two sites for a single customer. Site A connects on `ge-0/0/1.100` with a CE-facing IP of `10.1.1.1/30`. Site B connects on `ge-0/0/2.200` with CE-facing IP `10.2.2.1/30`. Both CEs run external BGP to the PE. Because both sites land in the same VRF, the PE routes between them locally, and the same VRF carries their prefixes across the MPLS backbone to any other site of this customer attached to a remote PE.

```
interfaces {
    ge-0/0/1 {
        /* Required for vlan-id on the logical units */
        vlan-tagging;
        unit 100 {
            vlan-id 100;
            family inet {
                address 10.1.1.1/30;
            }
        }
    }
    ge-0/0/2 {
        vlan-tagging;
        unit 200 {
            vlan-id 200;
            family inet {
                address 10.2.2.1/30;
            }
        }
    }
}
routing-instances {
    SOLVETHENETWORK-L3VPN {
        instance-type vrf;
        interface ge-0/0/1.100;
        interface ge-0/0/2.200;
        route-distinguisher 65000:500;
        vrf-target target:65000:500;
        vrf-table-label;
        protocols {
            bgp {
                group CE-PEERS {
                    type external;
                    export EXPORT-TO-CE;
                    neighbor 10.1.1.2 {
                        peer-as 64600;
                    }
                    neighbor 10.2.2.2 {
                        peer-as 64601;
                    }
                }
            }
        }
    }
}
policy-options {
    policy-statement EXPORT-TO-CE {
        term send-vpn-routes {
            from protocol bgp;
            then accept;
        }
        term send-direct {
            from protocol direct;
            then accept;
        }
        term reject-rest {
            then reject;
        }
    }
}
```

Both CE devices peer with the PE and advertise their local prefixes into the VRF. The `EXPORT-TO-CE` policy sends each CE the other site's BGP routes plus the connected subnets, so sites A and B reach each other directly through `SOLVETHENETWORK-L3VPN.inet.0`. The same prefixes are exported via MP-BGP with the VPN RT community to remote PEs, which import them into their matching VRF instances and re-advertise them to their own CEs. The customer gets full connectivity between all of its sites, completely isolated from every other customer's traffic.
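The VRF alone doesn't build the backbone side. A minimal sketch of what the PE also needs, assuming a core-facing interface `ge-0/0/0.0` at `198.51.100.1/30`, a loopback of `192.0.2.1`, a remote PE loopback of `192.0.2.2`, AS 65000, OSPF as the IGP, and LDP for label distribution (all hypothetical; IS-IS or RSVP-TE are equally valid choices). The remote PE needs a VRF with the same `target:65000:500` for routes to flow.

```
interfaces {
    ge-0/0/0 {
        unit 0 {
            family inet {
                address 198.51.100.1/30;
            }
            /* Core links must carry MPLS */
            family mpls;
        }
    }
    lo0 {
        unit 0 {
            family inet {
                address 192.0.2.1/32;
            }
        }
    }
}
routing-options {
    router-id 192.0.2.1;
    autonomous-system 65000;
}
protocols {
    ospf {
        area 0.0.0.0 {
            /* Advertise the loopback used as the BGP next hop */
            interface lo0.0 {
                passive;
            }
            interface ge-0/0/0.0;
        }
    }
    mpls {
        interface ge-0/0/0.0;
    }
    ldp {
        interface ge-0/0/0.0;
    }
    bgp {
        group PE-PEERS {
            type internal;
            local-address 192.0.2.1;
            /* Carries VPNv4 routes between PEs */
            family inet-vpn {
                unicast;
            }
            neighbor 192.0.2.2;
        }
    }
}
```

Once this is up, `show route table bgp.l3vpn.0` on either PE should list the other side's customer prefixes with their RDs prepended.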

Common Misconceptions

The biggest one: treating VRF and routing instance as interchangeable terms. On Junos, `vrf` is a specific instance type with specific MPLS and BGP requirements. Routing instance is the broader category that includes `vrf`, `virtual-router`, `forwarding`, and all the others. When someone tells you "we need a VRF for this" and there's no MPLS backbone, they almost certainly want a `virtual-router`.

Second misconception: assuming interfaces remain accessible from the master instance after being assigned to a routing instance. Once a logical interface is assigned to a routing instance, its routes are gone from `inet.0`. You can't have the same logical interface in two routing instances simultaneously. If you need to communicate between instances, you need explicit route leaking (`instance-import` or rib-groups), a physical or logical back-to-back connection, or a static route with a `next-table` next hop pointing at the other instance's table.
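As a sketch of that last option, here is a static route inside `TENANT-ONE` that hands lookups for the shared services subnet to another instance's table. The instance names and the `10.200.0.0/24` prefix reuse the shared-services example; as with any leak, the return path in the other instance still has to be solved separately.

```
routing-instances {
    TENANT-ONE {
        routing-options {
            static {
                /* Resolve 10.200.0.0/24 by looking it up in SHARED-SERVICES.inet.0 */
                route 10.200.0.0/24 next-table SHARED-SERVICES.inet.0;
            }
        }
    }
}
```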

Third: thinking the single-value form of `vrf-target` is sufficient for any topology. It's a convenience shorthand that sets identical import and export RT values. The moment you have hub-and-spoke VPNs, extranet VPNs, or any topology where import and export communities must differ, that form won't cut it. `vrf-target` does accept separate `import` and `export` communities for the simplest asymmetric cases, but anything involving multiple communities or per-prefix decisions needs explicit `vrf-import` and `vrf-export` policies. I've debugged more than one broken L3VPN where the only problem was single-value `vrf-target` being used in a scenario that required asymmetric RT handling.

Fourth, and this one bites people regularly: forgetting that protocols must be configured inside the instance to run within it. If you want OSPF running inside `TENANT-ONE`, the OSPF configuration goes under `routing-instances TENANT-ONE protocols ospf`, not under the top-level `protocols ospf` stanza. The top-level protocols block belongs to the master instance. Configuring OSPF there does nothing for your `virtual-router` instance, and Junos won't warn you about it.
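For reference, a minimal sketch of OSPF placed correctly inside the `TENANT-ONE` instance from the earlier example. The backbone area and running OSPF on `ge-0/0/2.200` are assumptions for illustration:

```
routing-instances {
    TENANT-ONE {
        instance-type virtual-router;
        interface ge-0/0/2.200;
        protocols {
            /* This OSPF process belongs to TENANT-ONE, not the master instance */
            ospf {
                area 0.0.0.0 {
                    interface ge-0/0/2.200;
                }
            }
        }
    }
}
```

Verify with `show ospf neighbor instance TENANT-ONE`; the plain `show ospf neighbor` only reports the master instance.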

Routing instances are one of those foundational Junos concepts that unlock a significant amount of design flexibility once you internalize the model. Get comfortable with the instance types, understand when `vrf` is the right choice versus `virtual-router`, and learn to read per-instance routing tables. Everything else (MPLS VPNs, shared services designs, management plane isolation, traffic steering) builds directly from this foundation.