Symptoms

You open Kibana, navigate to Discover, and find nothing. The histogram at the top of the page is flat. The document list reads "No results found." Or — and this is the one that really messes with your head — you can see logs from yesterday but nothing from the last 30 minutes, even though your application is definitely generating events right now.

Sometimes it's subtler. Logs trickle in from some hosts but not others. A specific log level is missing entirely. A particular service's output vanishes at a precise timestamp that coincides with a config change nobody remembers making. In my experience, this failure mode sends engineers down a restarting-services rabbit hole before they've actually identified the root cause. Don't restart things blindly. Let's work through this systematically.

Common symptoms include:

- Kibana Discover shows "No results found" for recent time ranges while older data appears normally

- The document hit count reads zero despite active application traffic

- Logs stop at a specific timestamp and nothing newer is visible

- Some indices show fresh data while others are stale or completely missing

- A "Failed to parse date" error or a yellow warning icon next to fields in the Data View editor

- Kibana loads but the Discover page spins indefinitely before returning empty results

Root Cause 1: Index Pattern Is Wrong or Stale

This is the first thing I check, and it resolves the problem far more often than people expect. Kibana uses index patterns — called data views in versions 8.x and later — to determine which Elasticsearch indices to query. If your index pattern doesn't match the indices where logs are actually landing, Kibana queries the right cluster but the wrong buckets and returns nothing.

Why does this happen? Log shippers like Filebeat and Logstash create time-based indices by default. A pattern like `filebeat-*` works fine until someone modifies the Logstash output configuration to write to `app-logs-prod-2026.04.18` instead. The old pattern stops matching those new indices immediately. No match, no logs, no error message explaining why.

To identify this, open Dev Tools in Kibana and run the following against your Elasticsearch cluster:

    GET /_cat/indices?v&h=health,status,index,docs.count,creation.date.string&s=creation.date.string:desc

This lists all indices sorted by creation date, newest first. Look at the top of the output:

    health status index                      docs.count creation.date.string
    green  open   app-logs-prod-2026.04.18       148392 2026-04-18T00:00:01.234Z
    green  open   app-logs-prod-2026.04.17      2104871 2026-04-17T00:00:01.102Z
    green  open   filebeat-8.12.0-2026.04.16     984123 2026-04-16T00:00:00.988Z

Notice the naming shift: the two most recent indices are prefixed `app-logs-prod-` while the older one follows the `filebeat-` convention. If your Kibana data view still points to `filebeat-*`, those new indices are invisible to it entirely.

The fix: navigate to Stack Management → Data Views (or Index Patterns in older Kibana), and either update the existing pattern to cover both naming schemes with a comma-separated entry like `app-logs-prod-*,filebeat-*`, or create a new data view that exclusively matches the current naming convention. After saving, return to Discover and verify logs appear. You can also create a data view programmatically via the Kibana API. Note that this request goes to Kibana rather than Elasticsearch, and when sent from outside Kibana it needs a `kbn-xsrf` header:

    POST /api/data_views/data_view
    {
      "data_view": {
        "title": "app-logs-prod-*",
        "timeFieldName": "@timestamp"
      }
    }

Make sure the `timeFieldName` matches the actual timestamp field in your documents. If it's `event.created` or some other field, a mismatch here means Kibana can't filter by time even when the pattern itself is correct.
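The pattern check described above can also be done offline against a list of index names. The following is a minimal sketch, assuming Python's `fnmatchcase` is a close enough stand-in for Elasticsearch's `*` wildcard matching (it does not model exclusion patterns); the helper name `unmatched_indices` is hypothetical, and the index names are the ones from the sample output.

```python
from fnmatch import fnmatchcase


def unmatched_indices(data_view_title: str, index_names: list[str]) -> list[str]:
    """Return the index names that no pattern in the data view title matches."""
    # A data view title may hold several comma-separated patterns.
    patterns = [p.strip() for p in data_view_title.split(",") if p.strip()]
    return [
        name
        for name in index_names
        if not any(fnmatchcase(name, pattern) for pattern in patterns)
    ]


indices = [
    "app-logs-prod-2026.04.18",
    "app-logs-prod-2026.04.17",
    "filebeat-8.12.0-2026.04.16",
]

# The stale pattern misses both of the newest indices.
print(unmatched_indices("filebeat-*", indices))
# The combined pattern leaves nothing uncovered.
print(unmatched_indices("app-logs-prod-*,filebeat-*", indices))
```

Feeding it the index column from the `_cat/indices` call gives a quick list of indices the data view cannot see.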

Root Cause 2: Time Filter Is Set Incorrectly

I've watched senior engineers spend 20 minutes troubleshooting a "missing logs" issue only to discover the time picker in the top-right corner of Kibana was still set to "Last 15 minutes" from a quick glance they took earlier. The logs existed the entire time — they just weren't in that 15-minute window.

It sounds embarrassing, but the time filter interacts with Kibana in non-obvious ways. If your log timestamps are in a timezone different from your browser, or if the `@timestamp` field is being populated with ingest time rather than the actual event time, you can end up searching a window that genuinely doesn't contain the events you're after even with a wide filter selected.

The first step is to widen the time filter dramatically — set it to "Last 7 days" or even "Last 90 days." If logs suddenly appear, you have a time filter or timestamp mismatch, not a pipeline failure. Then check what timestamps your most recent documents actually carry:

    GET /app-logs-prod-2026.04.18/_search
    {
      "size": 5,
      "sort": [{ "@timestamp": { "order": "desc" } }],
      "_source": ["@timestamp", "host.name", "message"]
    }

Sample output revealing a clock drift problem:

    {
      "hits": {
        "hits": [
          {
            "_source": {
              "@timestamp": "2026-04-18T03:22:11.000Z",
              "host.name": "sw-infrarunbook-01.solvethenetwork.com",
              "message": "Connection accepted from 10.10.1.55"
            }
          }
        ]
      }
    }

If `@timestamp` shows a value several hours behind wall clock time when you know events were generated recently, the shipper's system clock is drifting or a timezone offset is being applied twice: once during log parsing and again during Logstash filtering. Check NTP synchronization on sw-infrarunbook-01:

    timedatectl status
                   Local time: Sat 2026-04-18 09:22:45 UTC
               Universal time: Sat 2026-04-18 09:22:45 UTC
                     RTC time: Sat 2026-04-18 09:22:45
                    Time zone: UTC (UTC, +0000)
    System clock synchronized: yes
                  NTP service: active

If "System clock synchronized" reads "no" or the NTP service is inactive, fix it before anything else:

    systemctl enable --now systemd-timesyncd
    timedatectl set-ntp true

Also audit your Logstash or Filebeat configuration for any `timezone` or `tz` overrides in date filter blocks. A common mistake is parsing a timestamp that already includes a UTC offset and then applying an additional timezone conversion on top of it, pushing the stored timestamp hours into the past.
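To make the "several hours behind" check concrete, here is a small sketch that measures how far the newest `@timestamp` trails the host clock and flags lags that sit close to a whole number of hours, which hints at a timezone bug rather than a stalled pipeline. The function names, the 30-minute floor, and the 5-minute tolerance are illustrative assumptions, and the heuristic would miss half-hour timezone offsets.

```python
from datetime import datetime, timedelta, timezone


def timestamp_lag(latest_timestamp: str, now: datetime) -> timedelta:
    """Return how far the newest document's @timestamp trails the given time."""
    # Elasticsearch returns ISO 8601 with a trailing "Z"; swap it for an
    # explicit offset so datetime.fromisoformat accepts it on older Pythons.
    parsed = datetime.fromisoformat(latest_timestamp.replace("Z", "+00:00"))
    return now - parsed


def looks_like_timezone_offset(
    lag: timedelta, tolerance: timedelta = timedelta(minutes=5)
) -> bool:
    """Heuristic: a lag close to a whole number of hours hints at a timezone bug."""
    if abs(lag) < timedelta(minutes=30):
        return False  # too small to be an hour-granular offset
    hour = timedelta(hours=1)
    remainder = abs(lag) % hour
    # Distance to the nearest whole hour, whichever side it falls on.
    return min(remainder, hour - remainder) <= tolerance


# Values taken from the sample search hit and the timedatectl output.
lag = timestamp_lag(
    "2026-04-18T03:22:11.000Z",
    datetime(2026, 4, 18, 9, 22, 45, tzinfo=timezone.utc),
)
print(lag, looks_like_timezone_offset(lag))
```

With the sample values the lag is a little over six hours, which is the pattern you would expect from a double-applied offset rather than from ordinary clock drift.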

Root Cause 3: Elasticsearch Is Not Receiving Logs

Sometimes the problem has nothing to do with Kibana's configuration — it's that logs never reached Elasticsearch in the first place. The ingestion pipeline is broken somewhere between your application and the cluster. Kibana can only display what Elasticsearch has indexed, so if the pipeline is silent, the Discover page will be too.

The usual suspects here are a Filebeat or Logstash process that silently crashed and wasn't restarted by systemd, a firewall ACL blocking port 9200 or 5044, an Elasticsearch cluster that started rejecting documents because of a full disk or a triggered circuit breaker, or API key credentials that expired after rotation.

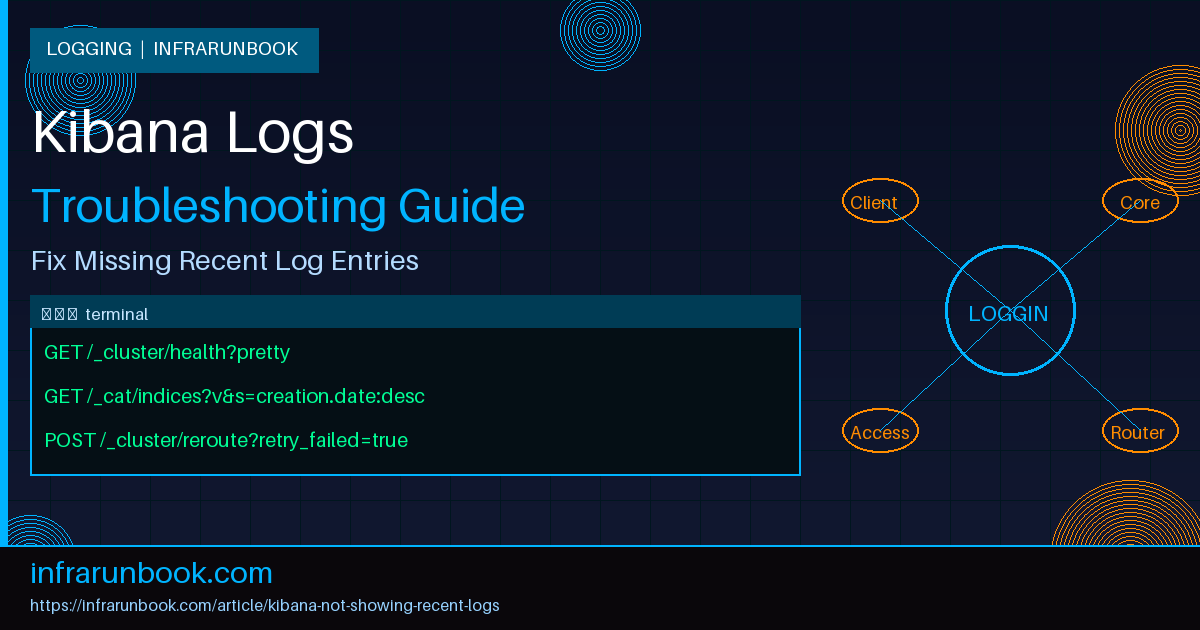

Start by checking Elasticsearch cluster health directly from sw-infrarunbook-01:

    curl -s -u infrarunbook-admin:changeme 'http://10.10.1.10:9200/_cluster/health?pretty'

    {
      "cluster_name" : "elk-prod",
      "status" : "red",
      "number_of_nodes" : 3,
      "number_of_data_nodes" : 3,
      "active_primary_shards" : 142,
      "active_shards" : 142,
      "unassigned_shards" : 14,
      "number_of_pending_tasks" : 23
    }

A red status means at least one primary shard is unassigned. Together with the pending tasks, it shows the cluster is degraded and may be rejecting writes to the affected indices entirely. Next, check whether Logstash is actually delivering events to Elasticsearch by querying the pipeline stats endpoint:

    curl -s 'http://10.10.1.11:9600/_node/stats/pipelines?pretty' | grep -A 8 '"events"'

    "events" : {
      "duration_in_millis" : 4823012,
      "in" : 2048392,
      "filtered" : 2048392,
      "out" : 0,
      "queue_push_duration_in_millis" : 0
    }

Events in, zero events out. That's a blocked output. Check the Logstash plain log for the reason:

    tail -100 /var/log/logstash/logstash-plain.log | grep -i "error\|reject\|refused\|forbidden"

    [2026-04-18T07:14:22,341][ERROR][logstash.outputs.elasticsearch] Could not index event to Elasticsearch.
    {:status=>403, :response=>{"index"=>{"error"=>{"type"=>"cluster_block_exception",
    "reason"=>"index [app-logs-prod-2026.04.18] blocked by: [FORBIDDEN/12/index read-only / allow delete (api)]"}}}

That `cluster_block_exception` with a read-only status means Elasticsearch triggered the flood-stage disk watermark and automatically put the index into read-only mode to protect data integrity. First, resolve the disk space issue: delete old indices, expand storage, or move data to cheaper tiers. Elasticsearch 7.4 and later release the block automatically once disk usage falls back below the high watermark, but you can clear it explicitly as soon as there's enough headroom rather than wait:

    PUT /app-logs-prod-2026.04.18/_settings
    {
      "index.blocks.read_only_allow_delete": null
    }

After clearing the block, restart Logstash or force a Filebeat reload so the output workers retry their queued events.
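The "events in, zero events out" check is easy to automate. Because the Logstash counters are cumulative since the pipeline started, the sketch below compares two snapshots of the pipeline stats response instead of reading a single one. It assumes the response nests per-pipeline counters under `pipelines.<id>.events`; the function name, the pipeline id `main`, and the first snapshot's numbers are made up for illustration.

```python
def stalled_pipelines(previous: dict, current: dict) -> list[str]:
    """Return ids of pipelines that took in events between snapshots but emitted none."""
    stalled = []
    for pipeline_id, stats in current.get("pipelines", {}).items():
        before = previous.get("pipelines", {}).get(pipeline_id, {}).get("events", {})
        after = stats.get("events", {})
        # Counters only ever grow, so the difference is the activity in between.
        arrived = after.get("in", 0) - before.get("in", 0)
        emitted = after.get("out", 0) - before.get("out", 0)
        if arrived > 0 and emitted == 0:
            stalled.append(pipeline_id)
    return stalled


# Two hypothetical snapshots taken a minute apart.
earlier = {"pipelines": {"main": {"events": {"in": 2040000, "out": 0}}}}
later = {"pipelines": {"main": {"events": {"in": 2048392, "out": 0}}}}

print(stalled_pipelines(earlier, later))
```

A pipeline that is simply idle (no new events in) is not reported, which avoids false alarms during quiet periods.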

Root Cause 4: Shard Allocation Issue

Shard allocation failures are one of the more frustrating causes because the symptoms are gradual and indirect. You won't always see a hard error in Kibana. Instead, new indices fail to become fully green, primary shards sit in an unassigned or initializing state, and writes either fail silently or go to fewer replicas than expected. Kibana will show whatever data exists on assigned shards — which for a freshly created index with no assigned primaries means nothing at all.

This happens when Elasticsearch can't place a shard on any available data node. Common causes: all nodes have exceeded the high watermark for disk usage, a data node left the cluster unexpectedly leaving orphaned shards, or allocation filtering rules using `index.routing.allocation.require.*` reference a node attribute that no longer exists on any live node.

Get the full allocation picture for a specific unassigned shard:

    GET /_cluster/allocation/explain
    {
      "index": "app-logs-prod-2026.04.18",
      "shard": 0,
      "primary": true
    }

    {
      "index" : "app-logs-prod-2026.04.18",
      "shard" : 0,
      "primary" : true,
      "current_state" : "unassigned",
      "unassigned_info" : {
        "reason" : "ALLOCATION_FAILED"
      },
      "can_allocate" : "no",
      "node_allocation_decisions" : [
        {
          "node_name" : "es-data-01",
          "deciders" : [
            {
              "decider" : "disk_threshold",
              "decision" : "NO",
              "explanation" : "the node is above the high watermark [cluster.routing.allocation.disk.watermark.high=90%], having more than the maximum allowed [90%] used disk ratio [93.2%]"
            }
          ]
        }
      ]
    }

The allocation explain API is one of the most useful diagnostic tools in the Elasticsearch arsenal: it tells you exactly which decider rejected shard placement and why. In this case, disk utilization at 93.2% exceeded the 90% high watermark on every data node, so there's nowhere to place the shard.
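On a cluster with many nodes the explain response gets long, so it can help to boil it down to "which decider said NO on which node". The following is a rough sketch that works on the parsed JSON response; `blocking_deciders` is a hypothetical helper name, and the sample dictionary is a trimmed copy of the response shown above.

```python
def blocking_deciders(explain: dict) -> dict[str, list[str]]:
    """Map each node name to the deciders that voted NO for the shard."""
    blocked = {}
    for node in explain.get("node_allocation_decisions", []):
        rejections = [
            decider["decider"]
            for decider in node.get("deciders", [])
            if decider.get("decision") == "NO"
        ]
        if rejections:
            blocked[node["node_name"]] = rejections
    return blocked


explain_response = {
    "index": "app-logs-prod-2026.04.18",
    "shard": 0,
    "primary": True,
    "can_allocate": "no",
    "node_allocation_decisions": [
        {
            "node_name": "es-data-01",
            "deciders": [{"decider": "disk_threshold", "decision": "NO"}],
        }
    ],
}

print(blocking_deciders(explain_response))
```

If every node lists `disk_threshold`, the problem is disk pressure across the cluster; a mix of deciders usually points at allocation filtering or awareness rules instead.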

Free up disk space first — remove old indices that have aged out of your retention window, or manually delete large indices that are no longer needed. Then, if you need to buy time while storage is being expanded, temporarily raise the watermarks:

    PUT /_cluster/settings
    {
      "transient": {
        "cluster.routing.allocation.disk.watermark.low": "95%",
        "cluster.routing.allocation.disk.watermark.high": "97%",
        "cluster.routing.allocation.disk.watermark.flood_stage": "99%"
      }
    }

After freeing space, kick the cluster to retry failed allocations:

    POST /_cluster/reroute?retry_failed=true

Monitor shard recovery in real time:

    GET /_cat/recovery?v&h=index,shard,stage,time,type,source_node,target_node&active_only=true

Don't leave transient watermark overrides in place. Once the cluster is green and the underlying disk problem is resolved, reset them back to defaults:

    PUT /_cluster/settings
    {
      "transient": {
        "cluster.routing.allocation.disk.watermark.low": null,
        "cluster.routing.allocation.disk.watermark.high": null,
        "cluster.routing.allocation.disk.watermark.flood_stage": null
      }
    }

Root Cause 5: Field Mapping Conflict

Field mapping conflicts are sneaky. They don't necessarily prevent logs from being indexed — but they cause queries and visualizations in Kibana to silently return incomplete or incorrect results, and they mark affected fields as "conflicting" which makes them unsearchable or unaggregatable across index generations.

Here's the classic scenario: your data view covers `app-logs-prod-*`. In the index from two days ago, the `status_code` field was dynamically mapped as a `keyword` because the first document that arrived had it as a string. Yesterday someone updated the Logstash grok pattern to parse it as an integer, so in the new index it's mapped as a `long`. Kibana can't reliably query across two indices where the same field carries different types, so it flags the field as conflicted and silently excludes it from aggregations.

Check for mapping conflicts programmatically by comparing the field mapping across your index pattern:

    GET /app-logs-prod-*/_mapping/field/status_code

    {
      "app-logs-prod-2026.04.17" : {
        "mappings" : {
          "status_code" : {
            "full_name" : "status_code",
            "mapping" : { "status_code" : { "type" : "keyword" } }
          }
        }
      },
      "app-logs-prod-2026.04.18" : {
        "mappings" : {
          "status_code" : {
            "full_name" : "status_code",
            "mapping" : { "status_code" : { "type" : "long" } }
          }
        }
      }
    }

Same field, two different types across two indices. You can't change a mapping on existing data without reindexing, so the correct approach is to first fix the index template to lock in the right type going forward, then reindex the conflicting older index if you need query consistency across it.
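When a pattern spans dozens of daily indices, reading the field-mapping response by eye gets tedious. The sketch below groups index names by the type they assign to a field and returns the groups only when more than one type is present. The function name `conflicting_types` is hypothetical, and the sample dictionary mirrors the response above.

```python
def conflicting_types(field_mappings: dict, field: str) -> dict[str, list[str]]:
    """Group index names by the type mapped for `field`; empty dict if consistent."""
    by_type: dict[str, list[str]] = {}
    for index_name, body in field_mappings.items():
        entry = body.get("mappings", {}).get(field)
        if not entry:
            continue  # the field is not mapped in this index
        # "mapping" holds a single key (the field's leaf name) with its definition.
        definition = next(iter(entry["mapping"].values()))
        by_type.setdefault(definition.get("type", "object"), []).append(index_name)
    return by_type if len(by_type) > 1 else {}


mapping_response = {
    "app-logs-prod-2026.04.17": {
        "mappings": {
            "status_code": {
                "full_name": "status_code",
                "mapping": {"status_code": {"type": "keyword"}},
            }
        }
    },
    "app-logs-prod-2026.04.18": {
        "mappings": {
            "status_code": {
                "full_name": "status_code",
                "mapping": {"status_code": {"type": "long"}},
            }
        }
    },
}

print(conflicting_types(mapping_response, "status_code"))
```

The result tells you which indices need reindexing: every group except the one holding the type you intend to keep.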

Update the index template to enforce the correct type for future indices:

    PUT /_index_template/app-logs-prod-template
    {
      "index_patterns": ["app-logs-prod-*"],
      "template": {
        "mappings": {
          "properties": {
            "status_code": {
              "type": "long"
            }
          }
        }
      }
    }

For the existing conflicting index, use the reindex API with a Painless script to coerce the type during reindexing:

    POST /_reindex
    {
      "source": {
        "index": "app-logs-prod-2026.04.17"
      },
      "dest": {
        "index": "app-logs-prod-2026.04.17-fixed"
      },
      "script": {
        "source": "if (ctx._source.status_code instanceof String) { ctx._source.status_code = Long.parseLong(ctx._source.status_code) }",
        "lang": "painless"
      }
    }

After verifying the reindexed data looks correct, you can either delete the original index and add an alias with its name that points at the fixed index (an alias can't share a name with an existing index), or update your data view to exclude the old conflicting index and include the fixed one. In the Kibana UI, refresh the data view field list after reindexing so the conflict warnings disappear.

Root Cause 6: Log Shipper Pipeline Is Stalled

Even when Elasticsearch is perfectly healthy, the log shipper itself can be the silent culprit. Filebeat is particularly prone to getting stuck on a corrupted registry. The registry file tracks which log files have been read and at what byte offset. If it gets corrupted — or if it points to an inode that was rotated away by logrotate — Filebeat will sit there doing nothing while your logs accumulate on disk unread.

Check Filebeat's status and harvester metrics via its monitoring endpoint. The HTTP endpoint is disabled by default, so this assumes `http.enabled: true` is set in `filebeat.yml` (5066 is the default port):

    systemctl status filebeat
    curl -s http://127.0.0.1:5066/stats | python3 -m json.tool | grep -A 10 '"harvester"'

    "harvester": {
        "closed": 12,
        "open_files": 0,
        "running": 0,
        "skipped": 0,
        "started": 12
    }

Zero running harvesters and zero open files while log files on disk are actively growing is the signature of a stalled Filebeat instance. Stopping the service, clearing the registry, and restarting forces it to rediscover active log files:

    systemctl stop filebeat
    rm -rf /var/lib/filebeat/registry
    systemctl start filebeat

Be aware that this causes Filebeat to re-harvest from the beginning of your log files (subject to `ignore_older` settings), which re-sends lines that were already shipped and can produce a burst of historical data hitting Elasticsearch. In a high-volume environment, do this during a low-traffic window and watch the Logstash or Elasticsearch ingestion rate so you don't accidentally cause a write backlog.
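The stall signature (no running harvesters while files keep growing) combines two observations, so it is worth checking both before wiping the registry. This is a minimal sketch under a few assumptions: the harvester counters have already been pulled out of the stats response, the file sizes were sampled twice by some other means, and the function name and log path are hypothetical.

```python
def filebeat_looks_stalled(
    harvester: dict, sizes_before: dict[str, int], sizes_after: dict[str, int]
) -> bool:
    """True when no harvester is active even though a watched file grew."""
    idle = harvester.get("running", 0) == 0 and harvester.get("open_files", 0) == 0
    # A file counts as growing if its later size exceeds the earlier sample.
    growing = any(
        sizes_after.get(path, 0) > size for path, size in sizes_before.items()
    )
    return idle and growing


# Harvester counters from the sample output; sizes (in bytes) are made up.
harvester_stats = {"closed": 12, "open_files": 0, "running": 0, "skipped": 0, "started": 12}
first_sample = {"/var/log/app/app.log": 1_000_000}
second_sample = {"/var/log/app/app.log": 1_250_000}

print(filebeat_looks_stalled(harvester_stats, first_sample, second_sample))
```

Requiring both conditions keeps the check from firing on hosts where the application is simply quiet and Filebeat has closed its idle harvesters.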

Root Cause 7: Index Refresh Interval Too Long

This one only affects data that was indexed in the last few minutes, so it tends to masquerade as a "logs are slightly delayed" problem rather than an outright outage. Elasticsearch doesn't make newly indexed documents searchable immediately. A per-index refresh interval controls how frequently the in-memory indexing buffer is written out as a new searchable segment. The default is one second, which is fine for most real-time logging use cases. But if someone raised that interval to reduce I/O pressure on the data nodes, you might find that documents sit invisible in the buffer for the full duration of the interval.

Check the current refresh interval for your active index:

    GET /app-logs-prod-2026.04.18/_settings?filter_path=*.settings.index.refresh_interval

    {
      "app-logs-prod-2026.04.18" : {
        "settings" : {
          "index" : {
            "refresh_interval" : "60s"
          }
        }
      }
    }

A 60-second refresh interval means documents won't appear in Kibana searches until up to a minute after they're written. For a logging index where near-real-time visibility matters, reset it:

    PUT /app-logs-prod-2026.04.18/_settings
    {
      "index": {
        "refresh_interval": "1s"
      }
    }

To immediately surface documents currently sitting in the buffer without waiting for the next scheduled refresh:

    POST /app-logs-prod-2026.04.18/_refresh

If the refresh interval was intentionally increased to handle high ingest throughput, consider a compromise, something like `5s` rather than `60s`, which reduces refresh overhead while keeping Kibana data reasonably current.
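If you audit this setting across many indices, it helps to turn the interval string into a number you can compare. Below is a small sketch that converts a `refresh_interval` value to seconds and flags anything slower than a chosen ceiling. The function names and the 5-second default ceiling are assumptions for illustration; `-1` is treated as "refresh disabled".

```python
import re
from typing import Optional

# Seconds per Elasticsearch time unit.
_UNIT_SECONDS = {
    "nanos": 1e-9, "micros": 1e-6, "ms": 1e-3,
    "s": 1.0, "m": 60.0, "h": 3600.0, "d": 86400.0,
}


def refresh_interval_seconds(value: str) -> Optional[float]:
    """Convert a refresh_interval setting to seconds; None means refresh is disabled."""
    value = value.strip()
    if value == "-1":
        return None
    match = re.fullmatch(r"(\d+)(nanos|micros|ms|s|m|h|d)", value)
    if match is None:
        raise ValueError(f"unrecognized refresh_interval: {value!r}")
    amount, unit = match.groups()
    return int(amount) * _UNIT_SECONDS[unit]


def delays_discover(value: str, max_seconds: float = 5.0) -> bool:
    """True when new documents may stay invisible longer than max_seconds."""
    seconds = refresh_interval_seconds(value)
    return seconds is None or seconds > max_seconds


for setting in ("1s", "5s", "60s", "-1"):
    print(setting, delays_discover(setting))
```

Run it over the `refresh_interval` values returned by the settings call to spot indices that were tuned for throughput and never set back.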

Prevention

Most of the issues above are repeatable. Here's what actually works in practice to stop them from recurring.

Implement Index Lifecycle Management from day one. An ILM policy that rolls over active indices at a size or age threshold and hard-deletes after your retention window keeps data nodes well under their disk watermarks. Clusters that hit the flood-stage watermark almost always do so because someone planned to "set up ILM later" and then didn't. Define the policy before you generate your first gigabyte of logs, not after your first incident.

Use explicit index templates with field mappings for any field you intend to query, filter, or aggregate on. Dynamic mapping is convenient for early prototyping but it becomes a liability in production: the first document with an unexpected format sets a mapping that conflicts with every subsequent document that differs. Define types for `status_code`, `response_time`, `log.level`, and any other structured field before your first write.

Expose the Elasticsearch cluster health metric to your monitoring stack. The Elasticsearch Prometheus exporter or Metricbeat's Elasticsearch module can scrape `/_cluster/health` and forward the status to your alerting system. An alert on `cluster_status != green` gets you ahead of shard allocation failures, disk pressure, and node losses before users notice missing data in Kibana. A cluster that's been yellow for three days is one node failure away from going red.

Similarly, monitor your log shipper pipeline metrics. When Logstash's `events.out` drops to zero while `events.in` is non-zero, or when Filebeat's running harvester count drops to zero unexpectedly, you want an automated alert, not a support ticket that arrives after an hour of missing logs. Both Logstash and Filebeat expose these metrics natively via their monitoring APIs and both integrate with Metricbeat for centralized collection.

Finally, keep your Kibana data views documented and in version control alongside your Logstash or Fluent Bit pipeline configurations. Whenever the output index naming convention changes — and it will change — the data view needs to change with it. A short checklist step in your pipeline change runbook that says "verify the Kibana data view pattern still matches the new index names" costs almost nothing and prevents the class of invisible failures where logs are flowing but Kibana is just looking in the wrong place.