What Is MTU?

The Maximum Transmission Unit (MTU) is the largest packet or frame payload, measured in bytes, that can be transmitted across a given link or interface without being fragmented. It is one of the most foundational parameters in network engineering and one of the most frequently misconfigured. A wrong value silently degrades throughput, increases CPU usage, and causes intermittent black holes that are notoriously difficult to diagnose.

MTU operates at both Layer 2 (Data Link) and Layer 3 (Network) of the OSI model. At Layer 2, the frame size limit is enforced by the underlying physical medium. Standard Ethernet has a maximum frame size of 1518 bytes (or 1522 bytes with an 802.1Q VLAN tag). At Layer 3, the IP packet must fit inside the Layer 2 frame payload, which is where the 1500-byte standard IPv4 and IPv6 MTU originates.

The 1500-byte figure is not arbitrary. It dates from the original Ethernet specification, was carried into IEEE 802.3, and reflects a trade-off between efficiency and error recovery on early shared-medium hardware. Larger frames meant more data lost per collision or bit error, while smaller frames increased per-packet header overhead. Hardware has long since improved, but the value remains baked into the internet's global default, which is why changing it requires consistent, end-to-end coordination.

Understanding Ethernet Frames and Packet Structure

To fully understand MTU, you need to understand the anatomy of an Ethernet frame and where the 1500-byte limit sits within it:

- Preamble (7 bytes): Clock synchronization sequence — not counted in frame size calculations

- Start Frame Delimiter (1 byte): Marks the beginning of the addressable frame

- Destination MAC Address (6 bytes)

- Source MAC Address (6 bytes)

- EtherType or Length Field (2 bytes)

- Payload (46–1500 bytes): This is where the IP packet lives

- Frame Check Sequence (4 bytes): CRC error detection trailer

The total raw Ethernet frame on the wire is therefore up to 1518 bytes: a 14-byte header, a 1500-byte payload, and a 4-byte FCS. The MTU value of 1500 refers specifically to the maximum payload size — everything above Layer 2. Your IP header, TCP header, and application data all share that 1500-byte budget.
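The frame arithmetic above can be checked with a few lines of Python. This is a minimal sketch: the function names are illustrative, and the 20-byte IP and TCP header sizes assume IPv4 and TCP without options.

```python
# Field sizes in bytes for an Ethernet frame, taken from the list above.
DST_MAC = 6
SRC_MAC = 6
ETHERTYPE = 2
FCS = 4
VLAN_TAG = 4  # 802.1Q tag, present only on tagged frames


def frame_size(payload: int, vlan_tagged: bool = False) -> int:
    """Frame size in bytes, excluding preamble and start frame delimiter."""
    header = DST_MAC + SRC_MAC + ETHERTYPE + (VLAN_TAG if vlan_tagged else 0)
    return header + payload + FCS


def tcp_payload_budget(mtu: int, ip_header: int = 20, tcp_header: int = 20) -> int:
    """Bytes left for application data once IP and TCP headers are paid for."""
    return mtu - ip_header - tcp_header


print(frame_size(1500))                    # untagged frame
print(frame_size(1500, vlan_tagged=True))  # 802.1Q tagged frame
print(tcp_payload_budget(1500))            # application bytes per packet
```

The last value is the familiar TCP maximum segment size for a 1500-byte MTU.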

Add an 802.1Q VLAN tag and the frame grows to 1522 bytes, but the IP payload budget remains 1500. Add tunneling encapsulation and the available payload shrinks further. VXLAN adds 50 bytes of encapsulation headers, GRE over IPv4 adds 24 bytes, and IPsec in tunnel mode can add 60 bytes or more depending on cipher suite and padding. In overlay and cloud environments, this overhead is a persistent source of MTU-related problems.
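To see how encapsulation eats into the budget, the sketch below subtracts the overhead figures quoted above from an underlay MTU. The dictionary and function names are illustrative, and IPsec is left out because its overhead is not a fixed number.

```python
# Per-packet encapsulation overhead in bytes, using the figures from the text.
# IPsec is omitted: its overhead depends on cipher suite and padding.
TUNNEL_OVERHEAD = {"vxlan": 50, "gre": 24}


def inner_mtu(underlay_mtu: int, tunnel: str) -> int:
    """Largest inner packet that fits in one underlay packet without fragmentation."""
    return underlay_mtu - TUNNEL_OVERHEAD[tunnel]


for name in TUNNEL_OVERHEAD:
    print(f"{name}: inner MTU {inner_mtu(1500, name)} on a 1500-byte underlay")
```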

What Are Jumbo Frames?

Jumbo frames are Ethernet frames with a payload larger than the standard 1500 bytes. There is no formal IEEE standard defining jumbo frames; the term emerged from the networking industry to describe frames that exceed the traditional limit. The de facto target is an MTU of 9000 bytes on hosts. Switches are often configured for 9216 bytes instead, a platform maximum that leaves headroom for Ethernet headers, VLAN tags, and encapsulation on top of a 9000-byte IP packet.

The jump from 1500 to 9000 is a 6x increase in payload per frame. This dramatically reduces the number of packets required to transfer a given amount of data, cutting per-packet overhead proportionally. For workloads that saturate 10 GbE, 25 GbE, or 100 GbE links, this overhead reduction can be the difference between hitting line rate or stalling under CPU pressure.
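A rough way to quantify the packet-count reduction is to count how many packets a fixed transfer needs at each MTU. The sketch below assumes 40 bytes of IPv4 and TCP headers per packet and ignores retransmissions and acknowledgements; the function name is illustrative.

```python
def packets_needed(total_bytes: int, mtu: int, header_bytes: int = 40) -> int:
    """Packets required to carry total_bytes of application data."""
    payload_per_packet = mtu - header_bytes
    # Ceiling division using integers only, to avoid float rounding.
    return -(-total_bytes // payload_per_packet)


ONE_GIB = 1024 ** 3
standard = packets_needed(ONE_GIB, 1500)
jumbo = packets_needed(ONE_GIB, 9000)
print(f"1500-byte MTU: {standard} packets")
print(f"9000-byte MTU: {jumbo} packets")
print(f"ratio: {standard / jumbo:.2f}x")
```

The ratio comes out slightly above 6 because the fixed header cost takes a larger share of the smaller packets.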

Critical constraint: Jumbo frames are not an IEEE standard and are not universally supported. Every device in the traffic path (physical NICs, virtual NICs, physical switch ports, virtual switches, and any routers or firewalls) must be explicitly configured to support the larger MTU. A single 1500-byte device in the middle breaks the path. A Layer 2 switch port silently drops oversized frames without notifying the sender. A router either drops the packet and returns an ICMP error (if the Don't Fragment bit is set) or fragments it (adding CPU overhead and undermining the goal). This end-to-end consistency requirement is where most jumbo frame deployments fail.

How MTU Works — Fragmentation and Path MTU Discovery

When a host generates an IP packet larger than the MTU of its outgoing interface, the IP stack has two options: fragment the packet or discard it. The decision is governed by the Don't Fragment (DF) bit in the IPv4 header.

If DF is cleared, IPv4 routers along the path are permitted to fragment the packet. Each fragment carries its own IP header, copied from the original, plus a slice of the payload and a fragment offset (expressed in 8-byte units) so the destination can reassemble the original datagram. Reassembly adds CPU overhead at the destination, increases memory pressure, and creates exposure to fragment-based attacks. Fragmentation is generally undesirable in modern networks, and IPv6 removes it from routers entirely: only the sending host may fragment.
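The fragmentation mechanics can be illustrated with a small calculation. This is a sketch under simplifying assumptions: a 20-byte IPv4 header with no options, and a hypothetical `fragment` helper that only computes sizes and offsets rather than building real packets.

```python
from typing import List, Tuple


def fragment(total_length: int, mtu: int, ip_header: int = 20) -> List[Tuple[int, int, bool]]:
    """Split an IPv4 datagram for a link with the given MTU.

    Returns (offset_in_8_byte_units, payload_bytes, more_fragments) per fragment.
    """
    payload = total_length - ip_header
    # Every fragment except the last must carry a multiple of 8 payload bytes,
    # because the offset field counts in 8-byte units.
    max_chunk = (mtu - ip_header) // 8 * 8
    fragments = []
    offset = 0
    while offset < payload:
        size = min(max_chunk, payload - offset)
        more_fragments = offset + size < payload
        fragments.append((offset // 8, size, more_fragments))
        offset += size
    return fragments


# A 4000-byte datagram crossing a 1500-byte link.
for frag in fragment(4000, 1500):
    print(frag)
```

Each fragment repeats the 20-byte header, so the three fragments put 4040 bytes on the wire to deliver the original 4000.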

If DF is set — which is the default for modern TCP implementations — and the packet exceeds the link MTU, the router drops the packet and returns an ICMP Type 3, Code 4 message: "Fragmentation Needed and Don't Fragment was Set." The ICMP response includes the MTU of the limiting link, allowing the sender to reduce its packet size and retransmit.

This negotiation process is called Path MTU Discovery (PMTUD), specified in RFC 1191 for IPv4 and in RFC 8201 (which replaced RFC 1981) for IPv6, where the equivalent signal is the ICMPv6 Packet Too Big message. PMTUD allows endpoints to dynamically discover the minimum MTU across the entire network path and size their packets accordingly. It is elegant in theory and fragile in practice.

The core problem is that PMTUD depends on ICMP delivery. Many firewall operators block ICMP traffic wholesale — either out of security policy or a misguided belief that ICMP is inherently dangerous. When ICMP Type 3 Code 4 messages are blocked, the sending host never learns that its packets are being dropped. TCP sessions appear to establish normally (the initial handshake uses small packets) but data transfers stall once the window fills with large segments. This is the PMTUD black hole — one of the most common and hardest-to-diagnose networking issues in enterprise environments.
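The discovery loop and its failure mode can be modeled in a few lines. This is a toy simulation, not a packet-level implementation: the path is a hypothetical list of per-link MTUs, and the `icmp_delivered` flag stands in for whether firewalls let the ICMP error through.

```python
from typing import Optional, Sequence


def discover_path_mtu(
    link_mtus: Sequence[int], initial_mtu: int, icmp_delivered: bool = True
) -> Optional[int]:
    """Return the discovered path MTU, or None if the sender is black-holed."""
    current = initial_mtu
    while True:
        # The first link too small for the current packet size drops it.
        bottleneck = next((mtu for mtu in link_mtus if mtu < current), None)
        if bottleneck is None:
            return current  # the packet reached the destination
        if not icmp_delivered:
            return None  # dropped with no feedback: the sender never adapts
        current = bottleneck  # the ICMP error reported the smaller MTU


path = [9000, 1500, 1400, 1500]
print(discover_path_mtu(path, 9000))
print(discover_path_mtu(path, 9000, icmp_delivered=False))
```

With ICMP delivered, the sender converges on the smallest link MTU in the path. With ICMP blocked, it keeps sending packets that can never arrive.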

The standard mitigation is TCP MSS clamping. A firewall or router at the network boundary intercepts TCP SYN and SYN-ACK packets and rewrites the advertised MSS value to a safe size that accounts for the path MTU. This prevents oversized packets from ever being transmitted, working around the ICMP dependency entirely.
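The clamped value follows directly from the path MTU. The sketch below shows the arithmetic a clamping device performs, assuming minimum-size IP and TCP headers; `clamped_mss` is an illustrative name, not a real firewall API.

```python
def clamped_mss(advertised_mss: int, path_mtu: int, ipv6: bool = False) -> int:
    """MSS to write into a SYN so that full-size segments fit the path MTU."""
    ip_header = 40 if ipv6 else 20  # fixed IPv6 header vs. minimum IPv4 header
    tcp_header = 20                 # minimum TCP header, options excluded
    safe_mss = path_mtu - ip_header - tcp_header
    # Only ever lower the value; never raise what the host advertised.
    return min(advertised_mss, safe_mss)


# A jumbo-frame host talking through a 1500-byte WAN link.
print(clamped_mss(8960, 1500))
# A standard host behind a VXLAN overlay with a 1450-byte inner MTU.
print(clamped_mss(1460, 1450))
```

Because MSS is a TCP option, this protects TCP flows only. UDP and other protocols still depend on PMTUD or on application-level packet sizing.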

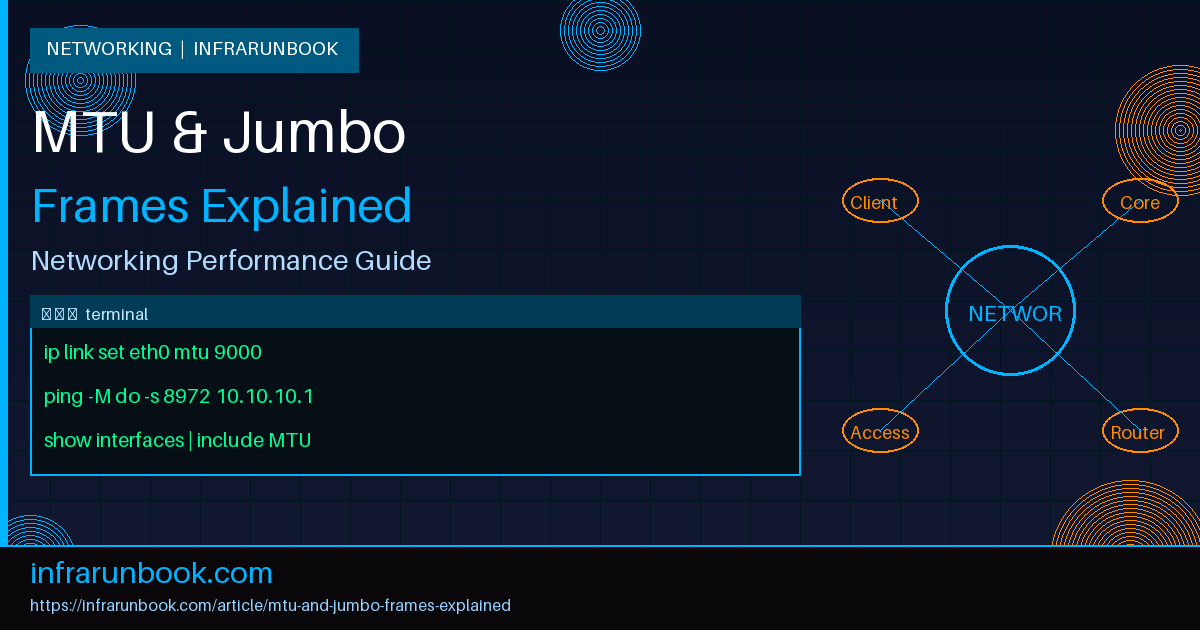

# Verify current MTU on Linux interface

ip link show eth0

# Output example:

# 2: eth0: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc mq state UP

# Set MTU to 9000 (requires root)

ip link set eth0 mtu 9000

# Confirm the change

ip link show eth0 | grep mtu

# 2: eth0: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 9000 qdisc mq state UP

Why MTU Matters for Network Performance

MTU has measurable, quantifiable impact on performance — but the effects are workload-dependent. Understanding exactly what changes and why helps you decide when jumbo frames are worth the operational complexity.

Header overhead reduction: An IPv4 header is at minimum 20 bytes; IPv6 is 40 bytes. With a 1500-byte MTU, the IPv4 header represents approximately 1.3% of each packet. With a 9000-byte MTU, the same header is 0.22% of the packet. For bulk transfers, this compounds over millions of packets. TCP adds at least another 20 bytes of header, and with options like timestamps and SACK, TCP headers routinely reach 32–40 bytes. Even with the minimum 40-byte combined IPv4 and TCP header, a 1500-byte MTU spends 2.7% of every packet on overhead. At 9000-byte MTU, that drops to 0.44%.
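The percentages above are simple ratios and easy to reproduce. A minimal sketch, with an illustrative function name:

```python
def header_overhead_pct(mtu: int, header_bytes: int) -> float:
    """Share of each full-size packet consumed by headers, in percent."""
    return 100 * header_bytes / mtu


for mtu in (1500, 9000):
    ip_only = header_overhead_pct(mtu, 20)   # minimum IPv4 header
    ip_tcp = header_overhead_pct(mtu, 40)    # IPv4 plus minimum TCP header
    print(f"MTU {mtu}: IP {ip_only:.2f}%, IP+TCP {ip_tcp:.2f}%")
```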

Interrupt and CPU load reduction: Every received packet costs the host a fixed amount of work: an interrupt or NAPI polling cycle (amortized by interrupt coalescing), plus per-packet processing in the driver and network stack. Fewer, larger packets mean less of that work per unit of data, directly reducing CPU utilization on both endpoints. On a 25 GbE link transferring data at line rate with 1500-byte packets, the host processes approximately 2.08 million packets per second (ignoring Ethernet framing overhead). At 9000-byte MTU, that drops to approximately 347,000 packets per second, an 83% reduction in packet processing events.
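The packet-rate figures can be derived from link speed and packet size. This sketch uses the same simplification as the text: it divides the raw link rate by the packet size and ignores Ethernet framing, preamble, and inter-frame gap.

```python
def packets_per_second(link_bps: float, packet_bytes: int) -> float:
    """Approximate packet rate at line rate, ignoring Layer 2 framing overhead."""
    return link_bps / (packet_bytes * 8)


LINK_25G = 25e9
standard = packets_per_second(LINK_25G, 1500)
jumbo = packets_per_second(LINK_25G, 9000)
reduction = 1 - jumbo / standard

print(f"1500-byte packets: {standard:,.0f} pps")
print(f"9000-byte packets: {jumbo:,.0f} pps")
print(f"reduction: {reduction:.0%}")
```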

Throughput improvement at high link speeds: At 1 GbE and below, the CPU overhead savings from jumbo frames are modest. At 10 GbE, they become meaningful. At 25 GbE and above, they are often the deciding factor in whether the host CPU can sustain line rate. Throughput gains of 10–25% are commonly cited for iSCSI and NFS workloads when jumbo frames are enabled on 10 GbE links, with the primary driver being freed CPU cycles. Actual results depend on NIC offloads and workload, so measure in your own environment.

Latency impact: Serialization delay — the time to place a frame on the wire — increases proportionally with frame size. A 9000-byte frame on a 1 GbE link takes 72 microseconds to serialize; a 1500-byte frame takes 12 microseconds. On 10 GbE, these numbers are 7.2 and 1.2 microseconds respectively. For latency-sensitive workloads, jumbo frames can slightly increase worst-case latency, but the effect is negligible in most enterprise contexts.
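Serialization delay is the frame size in bits divided by the link rate. The sketch below reproduces the figures above; like the text, it counts only the stated frame size and leaves out preamble and inter-frame gap.

```python
def serialization_delay_us(frame_bytes: int, link_bps: float) -> float:
    """Time to clock a frame onto the wire, in microseconds."""
    return frame_bytes * 8 * 1e6 / link_bps


for link_name, link_bps in (("1 GbE", 1e9), ("10 GbE", 10e9)):
    for frame_bytes in (1500, 9000):
        delay = serialization_delay_us(frame_bytes, link_bps)
        print(f"{link_name}, {frame_bytes} bytes: {delay:.1f} us")
```

The delay scales inversely with link speed, which is why the latency cost of jumbo frames shrinks on faster links.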

Configuring MTU in Practice

Deploying jumbo frames is an end-to-end operation. Start at the physical switch, work outward to host interfaces, then validate with active packet-size testing. Never assume — always verify.

Step 1: Configure the physical switch

Using sw-infrarunbook-01 as the target switch (Cisco IOS/NX-OS syntax):

sw-infrarunbook-01# configure terminal

! Set system-wide jumbo MTU (NX-OS)

sw-infrarunbook-01(config)# system jumbomtu 9216

! Configure individual interface (IOS; per-interface MTU support and the maximum value vary by platform)

sw-infrarunbook-01(config)# interface GigabitEthernet1/0/1

sw-infrarunbook-01(config-if)# mtu 9216

sw-infrarunbook-01(config-if)# description iSCSI-Storage-Uplink

sw-infrarunbook-01(config-if)# end

! Verify

sw-infrarunbook-01# show interfaces GigabitEthernet1/0/1 | include MTU

MTU 9216 bytes, BW 1000000 Kbit/sec, DLY 10 usec

Step 2: Configure the Linux host interface

# Temporary change (lost on reboot) — test first

ip link set eth0 mtu 9000

# Persistent via /etc/network/interfaces (Debian/Ubuntu)

auto eth0

iface eth0 inet static

address 10.10.30.50

netmask 255.255.255.0

gateway 10.10.30.1

mtu 9000

# Persistent via NetworkManager (nmcli)

nmcli connection modify "Storage-Network" 802-3-ethernet.mtu 9000

nmcli connection up "Storage-Network"

# Persistent via RHEL/CentOS ifcfg file

# Edit /etc/sysconfig/network-scripts/ifcfg-eth0

# Add: MTU=9000

# Verify after applying

ip link show eth0 | grep mtu

Step 3: Validate with oversized ping

# Linux — send 8972-byte ICMP payload with DF bit set

# 8972 = 9000 (MTU) - 20 (IP header) - 8 (ICMP header)

ping -M do -s 8972 10.10.30.200

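# Success (the reported size includes the 8-byte ICMP header): 8980 bytes from 10.10.30.200: icmp_seq=1 ttl=64 time=0.412 ms

# Failure when the local interface is still at 1500: ping: local error: message too long, mtu=1500

# Failure when a Layer 2 device in the path is undersized: no replies, 100% packet loss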

# macOS equivalent

ping -D -s 8972 10.10.30.200

# Windows equivalent

ping -f -l 8972 10.10.30.200

# Discover path MTU using tracepath

tracepath 10.10.30.200

# 1?: [LOCALHOST] pmtu 9000

# 1: 10.10.30.200 0.312ms reached

# Resume: pmtu 9000 hops 1 back 1

Step 4: Benchmark throughput with iperf3

# Run iperf3 server on storage host (10.10.30.200)

iperf3 -s -B 10.10.30.200

# Run iperf3 client on compute host (10.10.30.50) — baseline 1500 MTU

iperf3 -c 10.10.30.200 -t 60 -P 4 --logfile /tmp/baseline-1500mtu.txt

# After configuring jumbo frames on both ends — retest

iperf3 -c 10.10.30.200 -t 60 -P 4 --logfile /tmp/jumbo-9000mtu.txt

# Compare results and CPU utilization

cat /tmp/baseline-1500mtu.txt

cat /tmp/jumbo-9000mtu.txt

MTU in Virtual and Overlay Environments

Modern virtualized infrastructure introduces additional MTU complexity because overlay protocols add encapsulation headers that consume payload space. If the physical network MTU is not sized to accommodate both the encapsulation overhead and the full guest frame, fragmentation or silent packet drops occur.

VXLAN adds 50 bytes of overhead: 14 bytes for the encapsulated inner Ethernet header + 20 bytes outer IP header + 8 bytes UDP header + 8 bytes VXLAN header. (The outer Ethernet header is Layer 2 framing and does not count against the MTU.) If the physical network MTU is 1500 bytes, the maximum guest MTU is only 1450 bytes. Standard 1500-byte guest traffic will be fragmented or dropped. The solution is to set the physical network MTU to at least 1550 bytes, with 1600 a common choice for headroom, or ideally to a jumbo value so that larger guest frames also pass cleanly (a 9000-byte underlay carries a guest MTU of up to 8950).
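The sizing rule can be turned into a quick check of whether a guest MTU fits a given physical MTU. This is a sketch using the four header sizes listed above; the names are illustrative and it covers IPv4 underlays only.

```python
# VXLAN overhead components in bytes, as itemized in the text (IPv4 underlay).
VXLAN_OVERHEAD = {
    "ethernet_header": 14,
    "outer_ip_header": 20,
    "udp_header": 8,
    "vxlan_header": 8,
}
TOTAL_OVERHEAD = sum(VXLAN_OVERHEAD.values())


def required_underlay_mtu(guest_mtu: int) -> int:
    """Smallest physical MTU that carries guest packets without fragmentation."""
    return guest_mtu + TOTAL_OVERHEAD


def guest_fits(guest_mtu: int, underlay_mtu: int) -> bool:
    """True if full-size guest packets pass through the underlay untouched."""
    return required_underlay_mtu(guest_mtu) <= underlay_mtu


print(TOTAL_OVERHEAD)
print(required_underlay_mtu(1500))
print(guest_fits(1500, 1500), guest_fits(1500, 1600), guest_fits(1450, 1500))
```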

VMware NSX-T requires the physical uplink MTU to be at least 1600 bytes for its Geneve overlay traffic (the older NSX-V used VXLAN, with the same 1600-byte minimum), with 9000 bytes recommended for storage and vMotion traffic. The MTU is set on the ESXi virtual switch (vSS or vDS) and on each VMkernel adapter, and the physical switch ports connected to ESXi uplinks must support at least the same value.

Kubernetes overlay networks present similar challenges. Flannel using the VXLAN backend defaults to MTU 1450 to account for the 50-byte VXLAN overhead on a 1500-byte physical network. Calico attempts to auto-detect and configure the correct MTU but requires manual tuning in complex environments with multiple encapsulation layers.

# Check MTU of overlay interfaces on a Kubernetes node

ip link show flannel.1

ip link show vxlan.calico

# Check Calico MTU configuration

kubectl get configmap -n kube-system calico-config -o yaml | grep -i mtu

# Check Flannel network config

kubectl get configmap -n kube-flannel kube-flannel-cfg -o yaml | grep -i mtu

Real-World Use Cases for Jumbo Frames

iSCSI Storage Networks: iSCSI over Ethernet is the most commonly cited use case for jumbo frames in enterprise environments. Major storage vendors such as NetApp, Pure Storage, Dell EMC, and HPE Nimble widely recommend MTU 9000 for iSCSI initiator-to-target traffic. A VMware ESXi cluster at 10.10.30.0/24 connecting to a storage array at 10.10.30.200 over a dedicated iSCSI VLAN should have MTU 9000 configured on the ESXi vmkernel adapters, the vSwitch, the physical uplinks, and all switch ports in the iSCSI VLAN. Inconsistent configuration, even a single switch port left at 1500, causes random iSCSI timeouts under load that are extremely difficult to trace without packet capture.

NFS over High-Speed Ethernet: NFS v3 and v4 workloads running large sequential operations — media ingest, backup targets, HPC scratch filesystems — benefit substantially from jumbo frames. The reduced packet processing overhead allows both the NFS server and client to sustain throughput closer to the line rate of the underlying link. An NFS server at 172.16.10.20 exporting to compute nodes in the 172.16.10.0/24 subnet over a 25 GbE dedicated storage network should use MTU 9000 across all interfaces in the path.

HPC and RDMA Workloads: Ethernet-based RDMA using RoCEv2 (RDMA over Converged Ethernet version 2) is typically deployed on a lossless fabric, achieved with Priority Flow Control (PFC). RoCEv2 deployments commonly set the Ethernet MTU between 4200 and 9000 bytes: the largest RDMA path MTU is 4096 bytes, so roughly 4200 bytes is needed to carry it together with its headers. Larger frames reduce packet rates and per-packet overhead on MPI-based workloads. The combination of PFC, DCQCN congestion control, and jumbo frames is the standard formula for high-performance compute networking on Ethernet.

Database Replication: High-availability database configurations — PostgreSQL streaming replication, MySQL Group Replication, Oracle Data Guard — transfer continuous streams of write-ahead log data between primary and replica nodes. On a dedicated replication network between 10.10.40.10 (primary) and 10.10.40.11 (replica), jumbo frames reduce the CPU cycles consumed by network I/O, leaving more headroom for query processing under write-heavy workloads.

Common Misconceptions About MTU and Jumbo Frames

Misconception: Jumbo frames improve performance for all traffic types.

Reality: Jumbo frames benefit bulk transfers with sustained, large data flows. Small-packet, latency-sensitive workloads — VoIP, interactive SSH sessions, gaming, financial tick data — see no throughput benefit and may experience slightly increased worst-case latency due to higher serialization time per frame. Jumbo frames are a bulk-transfer optimization, not a universal performance improvement.

Misconception: Setting MTU 9000 on the server is sufficient.

Reality: Every device in the path must be configured for the same (or larger) MTU. NICs, bond interfaces, vSwitches, physical switch ports, port channels, routers, and firewalls all have MTU settings that must be reviewed. One 1500-byte device in the path will silently break jumbo frame traffic in ways that are hard to reproduce and diagnose without a protocol analyzer.
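One way to make this review systematic is to record the MTU of every hop and flag any that fall short. The sketch below does that over a hand-written inventory; the device names and values are hypothetical, and in practice the list would come from switch and host configuration data.

```python
from typing import List, Tuple


def undersized_hops(path: List[Tuple[str, int]], required_mtu: int) -> List[str]:
    """Names of devices whose MTU is below the required value."""
    return [name for name, mtu in path if mtu < required_mtu]


# Hypothetical inventory of one storage path, in traffic order.
storage_path = [
    ("host-nic", 9000),
    ("bond0", 9000),
    ("vswitch", 9000),
    ("access-switch-port", 9216),
    ("port-channel", 1500),  # left at the default by mistake
    ("array-nic", 9000),
]

print(undersized_hops(storage_path, 9000))
```

A clean inventory is necessary but not sufficient: an end-to-end ping with the Don't Fragment bit set remains the definitive test.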

Misconception: MTU 9000 and MTU 9216 mean the same thing.

Reality: These values usually describe different things. MTU 9000 typically refers to the IP-layer packet size configured on hosts. MTU 9216 is a common switch-side maximum that leaves headroom above 9000 for the 14-byte Ethernet header, the 4-byte FCS, VLAN tags, and encapsulation. Vendors differ on whether their MTU value includes Layer 2 headers, so always verify what a specific platform counts. A switch set above the host MTU is harmless; a switch set below it drops frames.

Misconception: PMTUD reliably handles MTU mismatches automatically.

Reality: PMTUD depends on ICMP messages being delivered end-to-end. When firewalls block ICMP, even selectively, PMTUD breaks silently. The result is the classic black hole: small packets flow normally, but large data transfers stall once full-size segments are sent. Where ICMP delivery cannot be guaranteed, MSS clamping is the dependable workaround for TCP traffic rather than an optional refinement.

Misconception: Jumbo frames work on internet-facing links.

Reality: The public internet operates at MTU 1500. Internet-facing interfaces must use the standard MTU. Jumbo frames are strictly a LAN and private data center optimization. Attempting to send jumbo frames toward the internet will result in fragmentation or packet loss at the first ISP router.