Symptoms

You're paged at 2 AM because monitoring is screaming about replication lag. Or a developer pings you saying "the replica is showing data from 10 minutes ago." Either way, you open your terminal and start digging. Here's what you typically see.

Querying

pg_stat_replicationon the primary reveals non-zero lag across every column:

SELECT client_addr, state, sent_lsn, write_lsn, flush_lsn, replay_lsn,

write_lag, flush_lag, replay_lag

FROM pg_stat_replication;

client_addr | state | sent_lsn | write_lsn | flush_lsn | replay_lsn | write_lag | flush_lag | replay_lag

--------------+-----------+-----------+-----------+-----------+------------+-----------+------------+------------

10.10.10.52 | streaming | 0/B2A0018 | 0/B1C4020 | 0/B1C4020 | 0/B0F8800 | 00:00:04.2| 00:00:04.2 | 00:00:14.8

(1 row)On the replica side,

pg_stat_wal_receivershows the connection is alive but replay is clearly behind received LSN:

SELECT status, receive_start_lsn, received_lsn,

last_msg_send_time, last_msg_receipt_time

FROM pg_stat_wal_receiver;

status | receive_start_lsn | received_lsn | last_msg_send_time | last_msg_receipt_time

-----------+-------------------+--------------+-----------------------------+-----------------------------

streaming | 0/A0000000 | 0/B1C4020 | 2026-04-15 02:13:45.12+00 | 2026-04-15 02:13:45.34+00

(1 row)The gap between what the replica has received and what

pg_current_wal_lsn()returns on the primary is your lag in bytes — translate that to seconds and it maps directly to the

replay_laginterval you saw above. Other symptoms include monitoring dashboards showing replication_delay_seconds spiking past SLO thresholds, application sessions on the replica reading stale rows that were committed minutes ago on the primary, and in the worst cases the replica falling so far behind that it disconnects entirely and must re-sync from a fresh base backup.

Root Cause 1: Network Bandwidth Saturated

WAL streaming is a continuous, high-throughput data flow. The primary ships every WAL record to connected replicas in near real-time. When the network link between primary and replica gets saturated — by backup jobs, ETL pipelines, application traffic, or even a competing replica on the same interface — WAL data queues up faster than it can be transmitted. Lag grows. Steadily.

In my experience, this is most common on shared 1GbE links where a nightly

pg_dumpover the same interface consumes 800+ Mbps. The lag climbs from midnight until the dump finishes, then corrects itself. Nobody notices until the SLO is breached.

To identify this, check interface throughput on both ends during the lag window:

# On sw-infrarunbook-01 (primary)

$ sar -n DEV 1 10 | grep eth0

02:14:01 eth0 rxpck/s txpck/s rxkB/s txkB/s

02:14:02 eth0 1243.00 8921.00 782.34 9842.11

02:14:03 eth0 1198.00 9102.00 755.21 9901.45If

txkB/sis pinned near the interface's rated capacity, you've found your culprit. Cross-reference which processes own those connections:

$ ss -tnp | grep :5432

ESTAB 0 0 10.10.10.51:5432 10.10.10.52:41022 users:(("postgres",pid=3821,fd=14))

ESTAB 0 0 10.10.10.51:5432 10.10.10.20:55301 users:(("postgres",pid=4012,fd=10))Then check

pg_stat_activityto see if backup or bulk query traffic is competing with WAL sender on the same link.

Fix: Move backups to a dedicated network interface, or implement QoS to prioritize WAL replication traffic. If you're on a hypervisor, give database VMs their own vSwitch or dedicated trunk port. Replication traffic should never compete with application or backup flows on the same interface. A dedicated 10.10.20.0/24 replication VLAN with its own NIC eliminates this class of problem entirely.

Root Cause 2: Primary Under Heavy Write Load

PostgreSQL generates WAL for every committed write. Bulk inserts, large updates, VACUUM FULL runs, and index rebuilds can produce gigabytes of WAL per minute. If your primary is generating more WAL than your replica can apply — even over a fast network — lag grows as a direct function of that throughput mismatch.

The replica's WAL replay process is single-threaded in PostgreSQL's physical streaming replication model. It applies WAL sequentially. If the primary generates 500 MB/s during a batch job and the replica can only sustain 200 MB/s replay throughput due to disk or CPU constraints, the gap widens until the load subsides.

Diagnose it by measuring WAL generation rate on the primary directly:

-- Run twice, 60 seconds apart, subtract to get rate

SELECT pg_current_wal_lsn();

pg_current_wal_lsn

--------------------

0/B2A0018

-- 60 seconds later:

SELECT pg_current_wal_lsn();

pg_current_wal_lsn

--------------------

0/D1F4200

-- Convert difference to bytes:

SELECT ('0/D1F4200'::pg_lsn - '0/B2A0018'::pg_lsn) AS wal_bytes_per_minute;

wal_bytes_per_minute

----------------------

501784040

(1 row)500 MB of WAL per minute is aggressive. Find what's generating it using

pg_stat_statementsif it's enabled, or catch active sessions in the act:

SELECT pid, usename, application_name, state, wait_event_type, wait_event,

query_start, left(query, 100) AS query_snippet

FROM pg_stat_activity

WHERE state = 'active'

ORDER BY query_start ASC

LIMIT 10;Fix: Throttle batch jobs by adding

pg_sleep()between chunk commits. Reduce individual transaction sizes so WAL is generated in smaller, more digestible bursts. Schedule heavy writes during off-peak hours. For index rebuilds, use

CREATE INDEX CONCURRENTLYwhich spreads WAL generation over time rather than dumping it all at once. Also enable WAL compression — it's one of the highest-leverage settings for reducing WAL volume with minimal downside:

# postgresql.conf on the primary

wal_compression = onOn typical OLTP workloads, WAL compression reduces volume by 30-60% at the cost of modest CPU overhead. That translates directly to less data the replica needs to apply.

Root Cause 3: Replica Underpowered

The replica's WAL replay process is CPU and I/O bound. If the replica is on a VM with fewer CPU cores or slower storage than the primary, it simply can't keep up. This is surprisingly common in environments where replicas were sized as "just for reads" without accounting for the constant WAL replay workload running underneath those reads.

Check the replica's resources during a lag event:

# On the replica (10.10.10.52)

$ vmstat 1 10

procs -----------memory---------- ---swap-- -----io---- -system-- ------cpu-----

r b swpd free buff cache si so bi bo in cs us sy id wa st

3 2 0 214032 18240 1842160 0 0 4821 1204 892 2241 8 12 32 48 0

4 3 0 211840 18240 1843200 0 0 5012 1340 934 2389 9 13 28 50 0High

wa— I/O wait — is the telltale sign. The replica is trying to apply WAL but the disk can't keep pace. Verify with iostat:

$ iostat -x 1 5 | grep -E 'Device|sda|nvme'

Device r/s w/s rkB/s wkB/s r_await w_await %util

sda 12.40 842.30 196.20 6738.40 0.82 18.94 99.899.8% disk utilization on the replica while the primary's disk is barely touched tells the whole story. I've watched this exact scenario on a fleet where the primary ran NVMe and replicas were still on spinning HDDs. The replica ran perpetually 30-60 seconds behind and never caught up during business hours, no matter how much we tuned PostgreSQL parameters.

Also rule out an intentional delay being misconfigured:

-- On the replica

SHOW recovery_min_apply_delay;

recovery_min_apply_delay

--------------------------

0

(1 row)Fix: Match the replica's storage performance to the primary's at minimum. The replica is doing everything the primary does — applying every write — while simultaneously serving read queries on top. If the primary has NVMe, the replica needs NVMe. Upgrading storage is the right answer when hardware is the bottleneck. No amount of PostgreSQL tuning compensates for a disk that can't keep up.

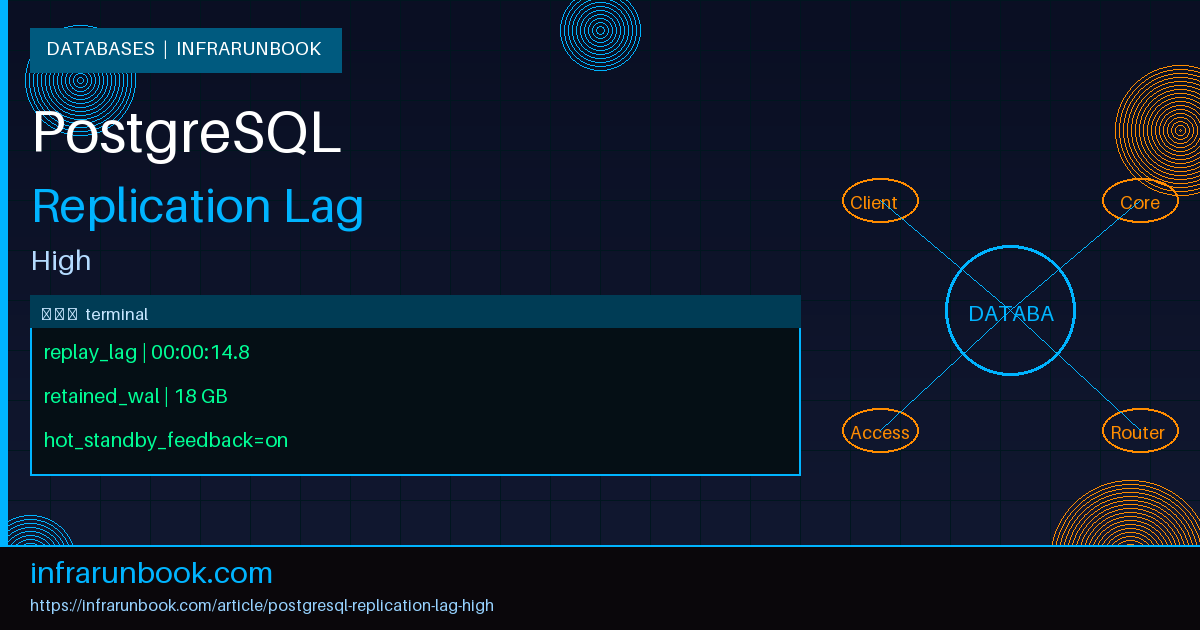

Root Cause 4: WAL Sender Slot Blocked

Replication slots guarantee that WAL is retained on the primary until the replica confirms it has consumed that data. This prevents the replica from falling behind due to WAL being recycled before it's been read — a real problem without slots. But it introduces a serious failure mode: if a slot becomes stale because the replica goes down or disconnects for an extended period, the primary must hold all WAL generated since the slot's

restart_lsn. That can mean gigabytes or even terabytes of WAL accumulating in

pg_wal.

Check slot status on the primary:

SELECT slot_name, plugin, slot_type, active, restart_lsn, confirmed_flush_lsn,

pg_size_pretty(pg_wal_lsn_diff(pg_current_wal_lsn(), restart_lsn)) AS retained_wal

FROM pg_replication_slots;

slot_name | plugin | slot_type | active | restart_lsn | confirmed_flush_lsn | retained_wal

----------------+--------+-----------+--------+-------------+---------------------+--------------

replica_slot_1 | | physical | t | 0/B0F8800 | 0/B0F8800 | 448 MB

replica_slot_2 | | physical | f | 0/42C10000 | 0/42C10000 | 18 GB

(2 rows)That second slot is inactive and retaining 18 GB of WAL. Confirm the

pg_waldirectory reflects this:

$ du -sh /var/lib/postgresql/16/main/pg_wal/

18G /var/lib/postgresql/16/main/pg_wal/When

pg_walfills the filesystem, PostgreSQL panics and stops. That stops replication entirely for all connected replicas, not just the one with the stale slot. This is how one offline replica can take down an entire replication topology.

Fix: Drop stale slots that are no longer needed:

SELECT pg_drop_replication_slot('replica_slot_2');Set

max_slot_wal_keep_sizein

postgresql.confto cap how much WAL any slot can retain before PostgreSQL invalidates it automatically. A replica that needs a resync is far better than a primary with a full disk:

# postgresql.conf

max_slot_wal_keep_size = 20GB

wal_keep_size = 2GBMonitor slot lag as a first-class alerting metric. Alert when

retained_walfor any slot exceeds your disk headroom threshold — long before it becomes an incident.

Root Cause 5: Hot Standby Feedback Causing Bloat

Hot standby feedback is a mechanism that prevents query cancellations on the replica due to conflicting VACUUM operations. When

hot_standby_feedback = on, the replica periodically tells the primary: "don't vacuum rows that my active queries are still reading." This sounds like a feature. It is a feature. It's also a slow-motion disaster in environments with long-running analytics queries on replicas.

Here's what happens in practice. A long-running query on the replica runs for 45 minutes. During that entire window, the primary cannot vacuum the rows that query is reading. Dead tuples accumulate. Table bloat grows. Scans get slower because they have to physically skip more dead rows. Autovacuum eventually fires an intensive, WAL-heavy cleanup pass — and the replica has to replay all of it. The very mechanism meant to protect the replica's queries ends up generating more work for it to do.

Identify this by correlating long-running queries on the replica with bloat accumulation on the primary:

-- On the replica: find long-running queries

SELECT pid, now() - query_start AS duration, state, left(query, 120) AS query

FROM pg_stat_activity

WHERE state != 'idle'

AND (now() - query_start) > interval '5 minutes'

ORDER BY duration DESC;

pid | duration | state | query

-------+----------+--------+----------------------------------------------

21045 | 00:47:23 | active | SELECT * FROM orders WHERE created_at > ...

(1 row)-- On the primary: check for bloated tables

SELECT schemaname, tablename, n_dead_tup, n_live_tup,

round(n_dead_tup::numeric / nullif(n_live_tup + n_dead_tup, 0) * 100, 2) AS dead_pct,

last_autovacuum

FROM pg_stat_user_tables

WHERE n_dead_tup > 100000

ORDER BY n_dead_tup DESC

LIMIT 10;

schemaname | tablename | n_dead_tup | n_live_tup | dead_pct | last_autovacuum

------------+-----------+------------+------------+----------+------------------------------

public | orders | 9821440 | 4201030 | 70.05 | 2026-04-14 22:01:12.123+00

(1 row)70% dead tuples is severe. Autovacuum has tried and failed to clean this table because hot_standby_feedback is blocking it from removing rows the replica's 47-minute query still holds an XID interest in.

Fix: Disable hot_standby_feedback and instead protect the replica's queries through timeout policies. Canceling a query is recoverable. Uncontrolled bloat and replication lag are not:

# postgresql.conf on the replica

hot_standby_feedback = off

# Set in postgresql.conf or per analytics role:

statement_timeout = '30min'

idle_in_transaction_session_timeout = '5min'If you genuinely need multi-hour analytics on a replica without query cancellations, use a logical replica or a purpose-built analytics node — somewhere the bloat side-effect doesn't flow back to affect the primary's vacuum behavior. For immediate relief, terminate the blocking backend:

-- On the replica

SELECT pg_terminate_backend(21045);Root Cause 6: Long-Running Transactions on the Primary

A forgotten open transaction on the primary prevents VACUUM from advancing the oldest transaction ID horizon, which causes the same bloat and WAL-amplification problem as hot_standby_feedback — but it originates on the primary itself. The replica then has to replay the resulting heavy autovacuum WAL. It's a quieter cause of lag but I've seen it show up enough times that it deserves its own check.

Find it like this:

SELECT pid, usename, state, now() - xact_start AS txn_age, left(query, 80) AS query

FROM pg_stat_activity

WHERE xact_start IS NOT NULL

AND (now() - xact_start) > interval '10 minutes'

ORDER BY txn_age DESC;

pid | usename | state | txn_age | query

-------+-------------------+----------------------+----------+-------

18832 | infrarunbook-admin| idle in transaction | 01:22:14 | BEGIN

(1 row)An "idle in transaction" session open for 82 minutes is a forgotten connection — likely an application that opened a transaction, did its work, and then crashed or hung before committing. Terminate it and set

idle_in_transaction_session_timeouton the primary to prevent recurrence:

SELECT pg_terminate_backend(18832);

# postgresql.conf on the primary

idle_in_transaction_session_timeout = '10min'Prevention

Reactive troubleshooting at 2 AM is expensive. Build the following into every PostgreSQL deployment and you'll catch lag before it becomes a page.

Monitor the right metrics. Alert on

replay_lagfrom

pg_stat_replicationwhen it exceeds your SLO — typically 30 seconds for most applications, tighter for financial or inventory systems. Also alert on

pg_replication_slots.retained_waland on

pg_stat_user_tables.n_dead_tup / (n_live_tup + n_dead_tup)for bloat ratio. These three metrics catch the majority of lag causes before they escalate.

Cap WAL slot retention unconditionally. Always set

max_slot_wal_keep_sizein production. Losing a replica to a required resync is a recoverable operational event. Losing your primary to a full disk is not:

# postgresql.conf

max_slot_wal_keep_size = 20GB

wal_keep_size = 2GBMatch hardware between primary and replica. The replica is doing everything the primary does plus serving read queries. If the primary has NVMe, the replica needs NVMe. Underpowering replicas is a false economy — the operational cost of chasing lag incidents far exceeds the hardware savings.

Isolate replication traffic to its own network path. Backups, ETL, and application traffic should not share a NIC with WAL streaming. A dedicated replication subnet — even a simple second interface at 10.10.20.0/24 — eliminates an entire class of network saturation problems.

Set session timeouts at every layer. Both

statement_timeoutand

idle_in_transaction_session_timeoutshould be configured for application roles on both primary and replica. These are your safety net against long-running queries and forgotten transactions feeding bloat and lag.

Enable WAL compression.

wal_compression = onreduces WAL volume by 30-60% for typical OLTP workloads with minimal CPU cost on modern hardware. Less WAL shipped means less replay work and less lag headroom consumed.

Test your replica's catch-up speed. Run a controlled write burst on the primary and time how long the replica takes to fully apply it. That number is your actual recovery time under load — and it should inform your SLO and RTO planning, not the other way around.

Replication lag is rarely mysterious once you know where to look. The causes above cover the vast majority of production incidents. Instrument your stack to surface them early, keep your hardware balanced, and put hard limits on the things that grow unbounded — slots, long transactions, bloat — and you'll spend a lot less time debugging at 2 AM.