What SLOs, SLAs, and Error Budgets Actually Are

Most engineers have seen these three terms thrown around in the same breath, often interchangeably, which is the first problem. SLA, SLO, and error budget are not synonyms. They're three distinct layers of the same reliability framework, and if you conflate them you'll make poor operational decisions every single time.

Start with the Service Level Indicator (SLI) — because that's actually where this whole thing begins, even though people rarely list it first. An SLI is the raw metric you're measuring. Request success rate, latency at the 99th percentile, error rate per minute — these are SLIs. They're just numbers. They tell you what's happening right now, with no judgment attached.

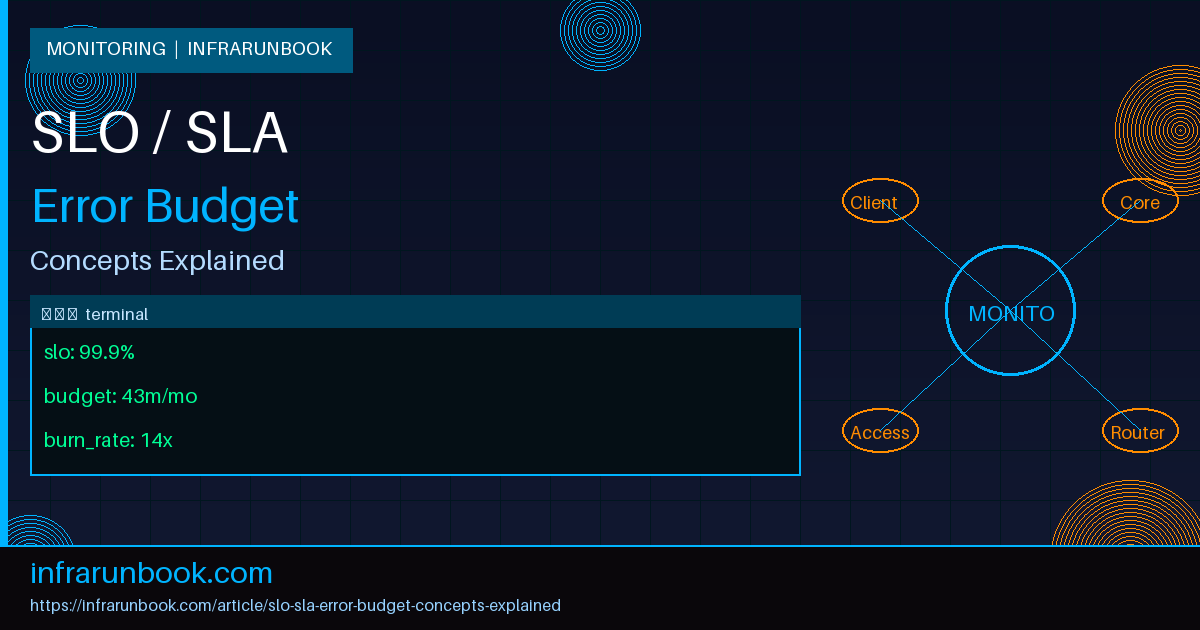

A Service Level Objective (SLO) is the target you set for an SLI over a specific time window. If your SLI is HTTP success rate and you decide you want 99.9% of requests to succeed over a rolling 30-day window, that's your SLO. It's an internal commitment. It lives in your runbook, your monitoring configuration, and your team's operational culture — not in a legal contract.
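The split between an SLI (a measured number) and an SLO (a target for that number over a window) can be sketched in a few lines. This is a minimal illustration, not a monitoring implementation; the `Slo` class, its field names, and the "no traffic counts as healthy" rule are assumptions made for the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Slo:
    """A target for one SLI over a fixed window (hypothetical structure)."""
    name: str
    target: float      # e.g. 0.999 for 99.9%
    window_days: int   # e.g. 30


def success_ratio(good_events: int, total_events: int) -> float:
    """The SLI: fraction of events that were good.

    Assumption: a window with no traffic is treated as fully healthy.
    """
    if total_events == 0:
        return 1.0
    return good_events / total_events


def meets_slo(slo: Slo, good_events: int, total_events: int) -> bool:
    """The SLO check: is the measured SLI at or above the target?"""
    return success_ratio(good_events, total_events) >= slo.target


api_availability = Slo(name="api-availability", target=0.999, window_days=30)
print(meets_slo(api_availability, good_events=999_500, total_events=1_000_000))
```

The SLI function knows nothing about targets, and the SLO carries no measurements. Keeping the two apart is what lets you change the target later without touching how the metric is collected.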

A Service Level Agreement (SLA) is the externally-facing, contractually-binding version of an SLO. Your SLA is what you've promised customers. Breach it and there are consequences — usually financial, like service credits or remedies outlined in your terms of service. The SLA is almost always softer than the SLO. In my experience, the gap between them is entirely intentional: your SLO is your internal tripwire so that if something is trending wrong, you catch it before the SLA breach actually materializes.

The error budget is derived from the SLO. It's the amount of unreliability you're permitted before you breach your objective. If your SLO is 99.9% availability over 30 days, you have 0.1% of that window to spend on downtime, errors, or degraded performance. That's roughly 43 minutes per month. Spend it on a deployment gone wrong, infrastructure maintenance, or an unexpected incident — but once it's gone, it's gone until the measurement window resets.

An SLO is a promise to yourself. An SLA is a promise to your customers. The error budget is what you're allowed to spend before breaking either one.

How the Math Actually Works

Let's make this concrete. Suppose sw-infrarunbook-01 hosts an API for solvethenetwork.com and you've set a 99.9% availability SLO over a 30-day rolling window. The arithmetic is straightforward.

    # 30-day error budget calculation
    Total minutes in 30 days : 30 * 24 * 60   = 43,200 minutes
    Allowed downtime (0.1%)  : 43,200 * 0.001 = 43.2 minutes
    Error budget             : 43.2 minutes / month

    # Common SLO tiers and their monthly budgets
    99.0%  -> 432 minutes (~7.2 hours)
    99.5%  -> 216 minutes (~3.6 hours)
    99.9%  -> 43 minutes
    99.95% -> 21 minutes
    99.99% -> 4 minutes

Now suppose you've already burned 30 minutes of that budget this month during a botched config push. You have 13.2 minutes left. That's a real, concrete number you can carry into any deployment discussion. "Should we push this change on a Friday afternoon?" suddenly becomes "we have 13 minutes of error budget remaining; is this change worth the risk?" That's a very different conversation from the vague "let's be careful."
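The same arithmetic can be written as a small helper, which is handy for dashboards or deployment tooling. This is a sketch under the assumptions used above (a 30-day window, budget expressed in minutes of full downtime); the function names are illustrative.

```python
def error_budget_minutes(slo_target: float, window_days: int = 30) -> float:
    """Total allowed 'bad' minutes in the window for a given SLO target."""
    window_minutes = window_days * 24 * 60
    return window_minutes * (1.0 - slo_target)


def remaining_budget_minutes(
    slo_target: float, spent_minutes: float, window_days: int = 30
) -> float:
    """Budget left after subtracting what has already been burned.

    A negative result means the SLO has been breached for this window.
    """
    return error_budget_minutes(slo_target, window_days) - spent_minutes


for target in (0.99, 0.995, 0.999, 0.9995, 0.9999):
    print(f"{target:.2%} -> {error_budget_minutes(target):.1f} minutes")

print(f"left after a 30-minute incident: {remaining_budget_minutes(0.999, 30):.1f}")
```

Returning a negative number on overspend, rather than clamping to zero, keeps the size of a breach visible.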

Error budgets are typically tracked over a rolling window. Some teams prefer calendar-month windows because they make reporting easier, but rolling windows are generally more stable operationally — they don't create the artificial pressure spike that happens when teams realize "we reset on the 1st, so let's push risky changes right before the reset." Rolling windows smooth out that incentive.
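To see why a rolling window has no reset date, here is a minimal sketch that sums incident time overlapping the trailing 30 days. The incident list, its tuple layout, and the function name are hypothetical; a real setup would derive this from monitoring data rather than a hand-kept list.

```python
from datetime import datetime, timedelta


def downtime_in_rolling_window(incidents, now, window=timedelta(days=30)):
    """Minutes of incident time that overlap the interval [now - window, now].

    `incidents` is an iterable of (start, end) datetime pairs.
    """
    window_start = now - window
    total = timedelta()
    for began, ended in incidents:
        overlap_start = max(began, window_start)
        overlap_end = min(ended, now)
        if overlap_end > overlap_start:
            total += overlap_end - overlap_start
    return total.total_seconds() / 60


incidents = [
    (datetime(2024, 1, 10, 12, 0), datetime(2024, 1, 10, 12, 30)),  # 30 minutes
    (datetime(2024, 2, 5, 9, 0), datetime(2024, 2, 5, 9, 10)),      # 10 minutes
]
print(downtime_in_rolling_window(incidents, now=datetime(2024, 2, 8)))
print(downtime_in_rolling_window(incidents, now=datetime(2024, 2, 12)))
```

Each incident leaves the window exactly 30 days after it happened, so the budget is earned back gradually instead of all at once on the 1st.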

In Prometheus, you'll typically derive both numbers from a single request counter labeled with the response status: total requests, and successful (non-5xx) requests. Your SLI query for HTTP availability looks like this:

    # SLI: HTTP success rate over a 5m window
    sum(rate(http_requests_total{job="api", status!~"5.."}[5m]))
    /
    sum(rate(http_requests_total{job="api"}[5m]))

To understand how fast you're consuming your error budget relative to your allowed rate, you calculate a burn rate. A burn rate of 1.0 means you're consuming budget at exactly the pace your SLO permits: you'll use it all up by the end of the window. A burn rate of 14 means you'd exhaust the entire 30-day budget in about two days (720 hours / 14 ≈ 51 hours).
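The relationship between burn rate and time to exhaustion is simple division, sketched below. The function names are illustrative, and the calculation assumes the burn rate stays constant and the window is 30 days.

```python
def burn_rate(error_ratio: float, slo_target: float) -> float:
    """Observed error ratio divided by the error ratio the SLO allows."""
    return error_ratio / (1.0 - slo_target)


def hours_to_exhaustion(rate: float, window_days: int = 30) -> float:
    """Hours until a full budget is gone if the burn rate holds steady."""
    if rate <= 0:
        return float("inf")  # not burning any budget
    return window_days * 24 / rate


for rate in (1.0, 6.0, 14.4):
    print(f"burn rate {rate:>4}: budget lasts {hours_to_exhaustion(rate):.0f} hours")
```

At a burn rate of 1 the budget lasts the full 720 hours. At 14.4 it lasts 50 hours, which means one hour at that rate consumes 2% of the monthly budget.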

    # Error budget burn rate query
    # Value > 1.0 means you're burning faster than the SLO allows
    # Value > 14.0 on a 1h window = critical page
    (
      1 - (
        sum(rate(http_requests_total{job="api", status!~"5.."}[1h]))
        /
        sum(rate(http_requests_total{job="api"}[1h]))
      )
    ) / (1 - 0.999)

A burn rate above 14 on a 1-hour window, combined with a burn rate above 1 on a much longer window, is a widely used double-alerting scheme. The version popularized by Google's SRE Workbook pages at 14.4x over 1 hour and opens a ticket at 1x over 3 days, with each alert confirmed by a shorter window (5 minutes and 6 hours respectively); exact thresholds vary by team and tooling. The fast-burn alert catches a sudden spike that would drain your budget within a couple of days. The slow-burn alert catches a subtle degradation that silently eats through your budget over days or weeks without ever looking dramatic enough to page anyone. I've seen the slow-burn scenario cause SLO breaches more often than the acute incidents, because nobody notices until it's too late.
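The multi-window logic can be sketched outside Prometheus to make the decision rule explicit. The thresholds and window pairs below follow the table commonly cited from Google's SRE Workbook for a 30-day SLO; treat them as assumptions to tune. The `BurnRule` class and `firing_rules` function are hypothetical names for this illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class BurnRule:
    long_window: str    # confirms enough budget has been burned to matter
    short_window: str   # confirms the burn is still happening right now
    threshold: float    # burn rate both windows must exceed
    severity: str


# Assumed thresholds for a 30-day SLO window.
RULES = (
    BurnRule("1h", "5m", 14.4, "page"),
    BurnRule("6h", "30m", 6.0, "page"),
    BurnRule("3d", "6h", 1.0, "ticket"),
)


def firing_rules(burn_rates: dict[str, float]) -> list[BurnRule]:
    """Return the rules whose long AND short windows both exceed the threshold.

    `burn_rates` maps a window label (e.g. "1h") to its current burn rate;
    a missing window is treated as a burn rate of 0.
    """
    return [
        rule
        for rule in RULES
        if burn_rates.get(rule.long_window, 0.0) > rule.threshold
        and burn_rates.get(rule.short_window, 0.0) > rule.threshold
    ]


fast_burn = {"5m": 20.0, "30m": 18.0, "1h": 16.0, "6h": 3.0, "3d": 0.5}
print([(r.long_window, r.severity) for r in firing_rules(fast_burn)])
```

Requiring both windows keeps a spike that has already ended from paging anyone: the 1-hour rate may still be high, but the 5-minute rate has dropped back, so the rule stays quiet.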

Why This Framework Changes Everything

Here's what the SLO and error budget model does that raw uptime targets simply don't: it creates a shared language between engineering and the business. Before SLOs, reliability conversations usually followed a predictable and frustrating pattern. Operations says there was an incident. Product asks why this keeps happening. Everyone agrees to "be more careful." There's no data, no agreed definition of "reliable enough," and no objective threshold that triggers a specific response. Reliability is just vibes.

With an error budget, you get an objective answer to the question "are we reliable enough right now?" If your budget is positive, you're hitting your targets — ship features, move fast, experiment. If your budget is exhausted, stop deploying non-critical changes and fix reliability. That's not a judgment call anymore. It's policy.

This is the fundamental tension that error budgets resolve: reliability versus velocity. Development teams want to ship. Operations teams want stability. Error budgets give both sides a shared scoreboard. The development team isn't the enemy of reliability — they're allowed to spend the budget, but only as fast as the SLO permits. Once it's gone, the objective data says: slow down.

I've seen this transform team dynamics in practice. When error budget burn becomes a visible metric that product managers and engineering leads can track, "reliability work" stops being something that only gets prioritized after a major incident. It becomes a continuous part of sprint planning. "We have only 40% of our budget left with two weeks to go in the window, so let's hold off on the database schema migration until next month" is a sentence that makes sense to everyone in the room, not just the ops team.
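An error budget policy like the one described here can be expressed as a small decision function that a deploy pipeline or planning checklist could call. This is a sketch, not a standard: the risk tiers, the `caution_threshold` of 50%, and the rule that critical fixes always ship are all assumptions to replace with your own team's policy.

```python
from enum import Enum


class ChangeRisk(Enum):
    LOW = "low"                    # routine feature work
    HIGH = "high"                  # e.g. a database schema migration
    CRITICAL_FIX = "critical_fix"  # reliability or security fix


def deploy_allowed(
    budget_remaining_fraction: float,
    risk: ChangeRisk,
    caution_threshold: float = 0.5,
) -> bool:
    """Apply a simple error budget policy.

    budget_remaining_fraction: 1.0 is an untouched budget, 0.0 is exhausted.
    caution_threshold: hypothetical cutoff below which risky changes wait.
    """
    if risk is ChangeRisk.CRITICAL_FIX:
        return True   # fixes are how the budget gets earned back
    if budget_remaining_fraction <= 0:
        return False  # budget exhausted: freeze non-critical changes
    if risk is ChangeRisk.HIGH and budget_remaining_fraction < caution_threshold:
        return False  # budget is low: postpone risky work
    return True


print(deploy_allowed(0.4, ChangeRisk.HIGH))
print(deploy_allowed(0.4, ChangeRisk.LOW))
```

Writing the policy down as code, or as an equally explicit document, is what turns the decision from a judgment call into something the whole team can predict.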

Real-World Implementation

Let's walk through how you'd actually set this up. Assume infrarunbook-admin is running a Prometheus and Alertmanager stack on sw-infrarunbook-01 at 192.168.1.10, monitoring the API service for solvethenetwork.com.

Rather than hand-rolling all the recording rules and alert thresholds yourself, use a tool like Sloth or Pyrra. These take a high-level SLO definition and generate the multi-window recording rules and burn rate alerts automatically. Your SLO definition lives in version control and gets reviewed like any other config change.

    # /etc/sloth/slos.yaml
    version: "prometheus/v1"
    service: "solvethenetwork-api"
    labels:
      team: "platform"
    slos:
      - name: "requests-availability"
        objective: 99.9
        description: "HTTP availability SLO for the public API"
        sli:
          events:
            error_query: >
              sum(rate(http_requests_total{job="api",status=~"5.."}[{{.window}}]))
            total_query: >
              sum(rate(http_requests_total{job="api"}[{{.window}}]))
        alerting:
          name: SolvethenetworkAPIAvailability
          page_alert:
            labels:
              severity: critical
          ticket_alert:
            labels:
              severity: warning

Sloth generates Prometheus recording rules across several time windows (including 5m, 30m, 1h, 6h, 1d, and 3d) and produces alerting rules that fire on the multi-window burn rate thresholds. You don't calculate any of this manually. The generated metrics appear as slo:sli_error:ratio_rate5m, slo:error_budget:ratio, and others that you can wire directly into Grafana dashboards.

Your Alertmanager configuration on sw-infrarunbook-01 routes alerts by severity to the appropriate notification channels:

    # /etc/alertmanager/alertmanager.yml
    route:
      receiver: 'default'
      routes:
        - match:
            severity: critical
          receiver: 'pagerduty-critical'
        - match:
            severity: warning
          receiver: 'slack-warnings'
    receivers:
      # Assumed catch-all: the top-level route references 'default', so it
      # must be defined. With no integrations it drops unmatched alerts.
      - name: 'default'
      - name: 'pagerduty-critical'
        pagerduty_configs:
          - service_key: ''
      - name: 'slack-warnings'
        slack_configs:
          - api_url: ''
            channel: '#infra-alerts'

Critical fires at the 14x burn rate on the 1-hour window, which is an immediate page. Warning fires on slow burns, down to roughly 1x sustained over a multi-day window (the exact thresholds depend on the generator's defaults); at 1x you'll exhaust the budget right at the window boundary if you stay on this trajectory. Each alert is itself a multi-window check: the long window confirms that enough budget has been burned to matter, and the short window confirms the burn is still happening, so the alert clears soon after the problem is fixed. Wire the remaining error budget metric into a shared Grafana dashboard so the entire team, including product managers, can see it at any time without needing to know Prometheus query syntax.

SLA vs. SLO: The Gap Is the Point

Your SLA should never equal your SLO. This is the thing teams get wrong most often, and it's a painful mistake to discover during an actual incident. If you promise customers 99.9% in your SLA and you set your internal SLO at 99.9%, you have zero operational headroom. You'll breach the customer contract at the exact same moment you breach your internal target. There's no time to react, no time to mitigate, no early warning — just simultaneous internal and external failure.

The standard approach: set your SLO tighter than your SLA, typically by half a nine to a full nine. If your SLA is 99.9%, your SLO should target 99.95% or 99.99%. That gap is your buffer. By the time an SLA breach is imminent, your team has already been paged on the SLO, triaged the issue, and possibly resolved it. The SLA alert should ideally never fire in production, because the SLO alert fired first and the problem was already handled.
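The size of that buffer is easy to quantify. The sketch below computes how many minutes of downtime separate an SLO breach from an SLA breach over a 30-day window; the function name is illustrative, and rejecting an SLO that is not tighter than the SLA is an assumption made to reflect the rule above.

```python
def headroom_minutes(sla_target: float, slo_target: float, window_days: int = 30) -> float:
    """Minutes of downtime between breaching the SLO and breaching the SLA."""
    if slo_target <= sla_target:
        raise ValueError("SLO must be tighter than the SLA to leave any headroom")
    window_minutes = window_days * 24 * 60
    sla_budget = window_minutes * (1.0 - sla_target)
    slo_budget = window_minutes * (1.0 - slo_target)
    return sla_budget - slo_budget


print(f"{headroom_minutes(0.999, 0.9995):.1f} minutes")   # SLA 99.9%, SLO 99.95%
print(f"{headroom_minutes(0.999, 0.9999):.1f} minutes")   # SLA 99.9%, SLO 99.99%
```

With a 99.9% SLA, a 99.95% SLO leaves about 21.6 minutes between the internal alarm and the contractual breach, and a 99.99% SLO leaves about 38.9 minutes.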

Some teams go further with tiered SLOs: a "target" SLO representing where you aim to operate, and a "minimum viable" SLO representing the floor below which you'd trigger a full incident response and engineering freeze. The SLA sits comfortably below even the minimum viable threshold. This layered structure gives you graduated escalation responses rather than a single binary threshold between "fine" and "on fire."

Common Misconceptions

"Higher SLOs are always better." They're not. A 99.999% SLO sounds impressive on a slide, but it gives you roughly 26 seconds of downtime per 30-day window, or about 5 minutes across an entire year. Can your deployment pipeline even complete a rollback in 26 seconds? Can your on-call engineer acknowledge a page, understand the issue, and take action in that window? If not, you've set an objective you can't operationally sustain, and you'll be permanently in breach while pretending you have an elite reliability posture. SLOs should reflect what your users actually need and what your infrastructure can realistically deliver, not what sounds impressive.
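One way to sanity-check a proposed target is to ask how many typical incidents it can absorb. The sketch below divides the 30-day budget by the time a single incident usually costs from detection to recovery. The function name and the 10-minute incident duration are assumptions for illustration, so substitute your own measured recovery time.

```python
def affordable_incidents(
    slo_target: float, minutes_per_incident: float, window_days: int = 30
) -> float:
    """How many incidents of a given length fit in one window's error budget."""
    if minutes_per_incident <= 0:
        raise ValueError("minutes_per_incident must be positive")
    budget_minutes = window_days * 24 * 60 * (1.0 - slo_target)
    return budget_minutes / minutes_per_incident


for target in (0.999, 0.9999, 0.99999):
    fits = affordable_incidents(target, minutes_per_incident=10)
    print(f"{target:.3%}: {fits:.2f} ten-minute incidents per 30 days")
```

If the result is below 1, a single ordinary incident breaches the objective, which is a strong hint that the target is aspirational rather than sustainable.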

"Error budgets are about measuring failure." This framing causes real damage to team culture. Error budgets are about authorizing risk. A full, untouched error budget doesn't mean you're doing great; it might mean you're being too conservative, not shipping enough, and leaving velocity on the table. An exhausted budget doesn't mean the team failed; it means you took risk and it bit you harder than expected. The appropriate response is to slow deployment cadence and invest in reliability, not to punish the engineers who were shipping features.

"SLOs are only for large organizations." I've heard this more times than I can count: "We're not Google, we don't need SLOs." But SLOs are valuable at any scale precisely because they force you to define what "working" means before something breaks. A single-server setup serving solvethenetwork.com on 192.168.1.10 benefits from an explicit, measurable reliability target. Without one, every incident is subjective, every post-mortem devolves into argument about severity, and every conversation about reliability work vs. feature work is circular and political.

"Once you set an SLO, you never change it." SLOs should evolve with your system. As your infrastructure matures and your understanding of user needs sharpens, the right SLO targets will shift. In my experience, SLOs set in year one are almost always wrong by year two: either too aggressive because the team couldn't operationally sustain them, or too lenient because the service grew in criticality and user expectations shifted upward. Review your SLOs quarterly. Treat them like any other configuration: version-controlled, change-reviewed, and deliberately updated when the evidence says they're no longer right.

"Availability is the only SLI worth measuring." Availability is the most common SLI, but latency is often what users actually experience as degradation. A service that responds to 100% of requests but takes 8 seconds per request isn't reliable from a user's perspective; it's just slow in a way that doesn't show up on your uptime graph. Define latency SLOs alongside availability SLOs: something like "99% of requests complete in under 300ms, measured over a rolling 30-day window." A service can be 100% available and still be violating its latency SLO during a database saturation event that raw availability metrics will never catch.
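A latency SLI is computed the same way as an availability SLI, with "good" redefined as "fast enough". The sketch below works on a plain list of request durations; in practice you would compute this from histogram buckets in your metrics system. The function names and the sample data are assumptions for illustration.

```python
def latency_sli(latencies_ms, threshold_ms: float = 300.0) -> float:
    """Fraction of requests that completed faster than the threshold.

    Assumption: an empty sample (no traffic) counts as fully healthy.
    """
    latencies = list(latencies_ms)
    if not latencies:
        return 1.0
    fast = sum(1 for value in latencies if value < threshold_ms)
    return fast / len(latencies)


def meets_latency_slo(latencies_ms, target: float = 0.99, threshold_ms: float = 300.0) -> bool:
    """True if at least `target` of requests beat the latency threshold."""
    return latency_sli(latencies_ms, threshold_ms) >= target


# Every request below succeeded, but 2 of 100 took 8 seconds.
sample = [120.0] * 98 + [8000.0] * 2
print(latency_sli(sample))
print(meets_latency_slo(sample))
```

In this sample availability is 100%, yet only 98% of requests beat 300 ms, so a 99% latency SLO is violated while the uptime graph stays flat.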

The SLO, SLA, and error budget framework is — at its core — a tool for having honest conversations about risk. It replaces gut-feel reliability arguments with a shared, measurable scoreboard that everyone from an on-call engineer to a product manager can read. You're not managing uptime percentages. You're managing a budget. And like any budget, the goal isn't to spend none of it. It's to spend it deliberately, on the things that actually matter, with full awareness of what you have left.