What Is a Standard Ethernet Frame?

Before understanding jumbo frames, it helps to understand the default Ethernet frame structure. A standard Ethernet II frame carries a payload of up to 1500 bytes, a limit inherited from the original DIX Ethernet specification and carried forward into IEEE 802.3. Combined with the 14-byte Ethernet header (destination MAC, source MAC, and EtherType) and a 4-byte Frame Check Sequence (FCS), the maximum standard frame is 1518 bytes, or 1522 bytes when an 802.1Q VLAN tag is present.
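The frame-size arithmetic above can be checked with a short sketch. It assumes the standard field sizes listed in the paragraph and counts only the bytes from the destination MAC through the FCS (preamble and inter-frame gap are excluded). The function name ethernet_frame_size is purely illustrative.

```python
# Sketch: maximum Ethernet II frame size for a given MTU (payload size).
# Assumed field sizes: 6-byte destination MAC, 6-byte source MAC, 2-byte EtherType,
# 4-byte FCS, and 4 bytes per 802.1Q tag. Preamble and inter-frame gap are not counted.

ETH_HEADER = 6 + 6 + 2  # destination MAC + source MAC + EtherType = 14 bytes
FCS = 4                 # Frame Check Sequence
DOT1Q_TAG = 4           # one 802.1Q VLAN tag


def ethernet_frame_size(mtu: int, vlan_tags: int = 0) -> int:
    """Return the frame size in bytes for a payload of `mtu` bytes."""
    if mtu <= 0 or vlan_tags < 0:
        raise ValueError("mtu must be positive and vlan_tags non-negative")
    return ETH_HEADER + vlan_tags * DOT1Q_TAG + mtu + FCS


if __name__ == "__main__":
    for mtu in (1500, 9000):
        untagged = ethernet_frame_size(mtu)
        tagged = ethernet_frame_size(mtu, vlan_tags=1)
        print(f"MTU {mtu}: {untagged} bytes untagged, {tagged} bytes with one 802.1Q tag")
```

The same function reproduces the jumbo figures as well: a 9000-byte MTU yields 9018 bytes untagged and 9022 bytes with one tag.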

This 1500-byte Maximum Transmission Unit (MTU) has been the backbone of Ethernet networking for decades. It was a reasonable ceiling when networks ran at 10 Mbps, but in today's environments, where 10 GbE, 25 GbE, and 100 GbE links are standard, a 1500-byte MTU creates measurable inefficiencies. Any transfer larger than 1500 bytes must be split across multiple frames (segmented by TCP or fragmented at the IP layer), each carrying its own headers and requiring its own processing at every hop in the path.

Defining a Jumbo Frame

A jumbo frame is any Ethernet frame carrying a payload larger than the standard 1500-byte MTU. There is no single IEEE standard that mandates a specific jumbo frame size, but the industry has converged on 9000 bytes as the de facto MTU for jumbo frame deployments. You will also encounter 9216 bytes on Cisco NX-OS platforms, which provides headroom for additional encapsulation overhead such as 802.1Q double tags, FCoE headers, and VXLAN encapsulation.
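As a rough illustration of why 9216 leaves headroom, the sketch below adds commonly cited encapsulation overheads to a 9000-byte inner MTU. The overhead values (4 bytes for an extra 802.1Q tag, 50 bytes for VXLAN over an IPv4 underlay, 54 bytes if the inner 802.1Q tag is retained) are assumptions based on typical header sizes, not figures from any particular platform, and the helper names are hypothetical. FCoE is not modeled.

```python
# Commonly cited encapsulation overheads in bytes (assumed typical values, not vendor data).
ENCAP_OVERHEAD = {
    "none": 0,
    "qinq_extra_tag": 4,                          # second 802.1Q tag
    "vxlan_ipv4": 20 + 8 + 8 + 14,                # outer IPv4 + UDP + VXLAN + inner Ethernet header
    "vxlan_ipv4_inner_tag": 20 + 8 + 8 + 14 + 4,  # same, with the inner 802.1Q tag retained
}


def required_underlay_mtu(inner_mtu: int, encap: str) -> int:
    """Smallest underlay MTU that carries an inner packet of inner_mtu bytes."""
    return inner_mtu + ENCAP_OVERHEAD[encap]


def fits(inner_mtu: int, encap: str, underlay_mtu: int) -> bool:
    """True if the encapsulated packet fits within the underlay MTU."""
    return required_underlay_mtu(inner_mtu, encap) <= underlay_mtu


if __name__ == "__main__":
    for encap in ENCAP_OVERHEAD:
        need = required_underlay_mtu(9000, encap)
        print(f"{encap}: needs {need}, headroom at 9216 = {9216 - need}")
```

Under these assumptions, VXLAN over IPv4 with a 9000-byte inner MTU needs 9050 bytes, which a 9216-byte underlay accommodates with 166 bytes to spare but a 9000-byte underlay does not.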

Jumbo frames are not a new technology. Alteon Networks popularized the 9000-byte MTU in the late 1990s for its gigabit Ethernet adapters. What has changed is the scale of adoption — virtually every modern server NIC, hypervisor platform, and data center switch supports jumbo frames, making them a standard optimization layer for high-throughput workloads in any serious data center.

Key Point: A jumbo frame is not an IEEE standard — it is a vendor convention. The widely accepted MTU is 9000 bytes at the IP layer, which maps to a 9018-byte Ethernet frame when including the 14-byte header and 4-byte FCS, or 9022 bytes with an 802.1Q tag.

Why Jumbo Frames Matter: The Performance Case

The performance argument for jumbo frames comes down to two factors: throughput efficiency and CPU overhead reduction.

With a 1500-byte MTU, sending 1 GB (10^9 bytes) of TCP data requires approximately 685,000 frames, assuming each frame carries a full 1460-byte TCP segment. With a 9000-byte MTU (8960-byte segments), the same 1 GB requires only about 112,000 frames, a reduction of more than 83 percent. Each frame carries its own Ethernet header, IP header, and TCP or UDP header. The header-to-payload ratio drops sharply with larger frames, meaning a higher proportion of every byte on the wire is actual application data rather than protocol overhead.
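To make the frame counts reproducible, here is a minimal sketch. It assumes 1 GB means 10^9 bytes, IPv4 and TCP headers of 20 bytes each with no options (so a full segment carries the MTU minus 40 bytes), and, for the efficiency figure, 38 bytes of per-frame wire overhead (Ethernet header, FCS, preamble/SFD, and inter-frame gap). The helper names are illustrative.

```python
import math

# Assumptions (illustrative, not measured): IPv4 and TCP headers of 20 bytes each
# with no options, and 38 bytes of per-frame wire overhead.
IP_TCP_HEADERS = 20 + 20
WIRE_OVERHEAD = 14 + 4 + 8 + 12  # Ethernet header, FCS, preamble/SFD, inter-frame gap


def frames_needed(total_bytes: int, mtu: int) -> int:
    """Number of full-size TCP/IPv4 frames needed to carry total_bytes of data."""
    mss = mtu - IP_TCP_HEADERS
    return math.ceil(total_bytes / mss)


def wire_efficiency(mtu: int) -> float:
    """Fraction of on-wire bytes that are TCP payload for a full-size frame."""
    mss = mtu - IP_TCP_HEADERS
    return mss / (mtu + WIRE_OVERHEAD)


if __name__ == "__main__":
    one_gb = 10**9
    small = frames_needed(one_gb, 1500)
    large = frames_needed(one_gb, 9000)
    print(f"MTU 1500: {small:,} frames, efficiency {wire_efficiency(1500):.1%}")
    print(f"MTU 9000: {large:,} frames, efficiency {wire_efficiency(9000):.1%}")
    print(f"frame count reduction: {1 - large / small:.1%}")
```

Under these assumptions the counts come out to 684,932 frames at MTU 1500 and 111,608 frames at MTU 9000, while wire efficiency rises only from about 94.9 to 99.1 percent. Most of the benefit therefore comes from handling roughly six times fewer frames rather than from raw throughput.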

The CPU savings can be equally significant. Every received frame must be processed individually by the NIC driver and the kernel network stack, and without mitigation each one can trigger an interrupt. Interrupt coalescing, polling schemes such as Linux NAPI, and receive offloads such as LRO and GRO reduce this per-frame cost, but cutting the frame count by 83 percent still means fewer interrupts and less CPU time spent in the receive path. On high-speed storage and virtualization hosts where network I/O is a dominant workload, this frees CPU cycles for application work, an effect that becomes more pronounced as link speeds increase beyond 10 GbE.
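One way to see the per-frame processing load is to compute the maximum frame rate a link can deliver at each MTU. This sketch assumes back-to-back full-size frames and 38 bytes of per-frame wire overhead. It is an upper bound on per-frame work: interrupt coalescing and offloads mean the actual interrupt count will be lower. The function name is hypothetical.

```python
# Upper bound on frames per second for back-to-back full-size frames.
WIRE_OVERHEAD = 14 + 4 + 8 + 12  # Ethernet header, FCS, preamble/SFD, inter-frame gap (bytes)


def max_frames_per_second(link_bps: float, mtu: int) -> float:
    """Frames per second a link can deliver if every frame carries a full MTU."""
    bits_per_frame = (mtu + WIRE_OVERHEAD) * 8
    return link_bps / bits_per_frame


if __name__ == "__main__":
    for gbps in (10, 25, 100):
        for mtu in (1500, 9000):
            fps = max_frames_per_second(gbps * 1e9, mtu)
            print(f"{gbps} GbE, MTU {mtu}: {fps:,.0f} frames/s")
```

At 10 GbE this works out to roughly 813,000 frames per second at MTU 1500 versus roughly 138,000 at MTU 9000, and both figures scale linearly with link speed.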

Primary Use Cases for Jumbo Frames

Jumbo frames deliver the greatest benefit in environments characterized by large sequential data transfers over a controlled, single-segment Layer 2 path. The most common production use cases include:

- iSCSI Storage Networks: iSCSI encapsulates SCSI commands inside TCP/IP packets. Storage I/O often involves 64 KB or larger block transfers. With standard MTU, a single 64 KB write is split into about 45 TCP segments of 1460 bytes each. With MTU 9000, the same write fits into about 8 segments, reducing per-packet overhead and improving IOPS consistency and latency predictability.

- NFS v3/v4 Datastores: VMware ESXi and other hypervisor platforms use NFS for shared storage datastores. Large file reads and writes benefit from carrying more data per frame, particularly during VM provisioning, snapshot creation, and storage vMotion operations.

- VMware vMotion and vSphere Replication: Live VM migrations move gigabytes of RAM across the network in real time. VMware explicitly recommends enabling jumbo frames on vMotion VMkernel ports to maximize migration bandwidth and minimize the migration window, which reduces the risk of VM stun during cutover.

- Backup and Replication Traffic: Backup agents streaming large sequential datasets to Veeam repositories, NetBackup media servers, or similar targets see measurable throughput improvements when jumbo frames are enabled end to end across the backup network.

- High-Performance Computing (HPC) and MPI: Parallel computing clusters exchanging large message buffers between nodes benefit from the reduced interrupt overhead and higher effective bandwidth that jumbo frames provide.

- Inter-Switch Trunk Links and Port Channels: Trunk links between access, distribution, and core switches carrying aggregated traffic from multiple storage and compute VLANs are good candidates for jumbo frame support to prevent MTU-related drops at the aggregation layer.
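The iSCSI segment counts in the list above follow from dividing the write by the TCP payload per frame. This sketch assumes a 64 KB write (65,536 bytes) sent as a single iSCSI PDU with a 48-byte Basic Header Segment and no digests, carried in full-size TCP/IPv4 segments without options. Real sessions may split a write across several PDUs depending on negotiated parameters. The function name is illustrative.

```python
import math

ISCSI_BHS = 48        # iSCSI Basic Header Segment in bytes (assumes one PDU, no digests)
IP_TCP_HEADERS = 40   # IPv4 + TCP headers without options


def segments_per_write(write_bytes: int, mtu: int, pdu_header: int = ISCSI_BHS) -> int:
    """Full-size TCP segments needed to carry one iSCSI write PDU."""
    mss = mtu - IP_TCP_HEADERS
    return math.ceil((write_bytes + pdu_header) / mss)


if __name__ == "__main__":
    write = 64 * 1024
    for mtu in (1500, 9000):
        print(f"MTU {mtu}: {segments_per_write(write, mtu)} segments")
```

Under those assumptions the write needs 45 segments at MTU 1500 and 8 at MTU 9000.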

The End-to-End Requirement: Every Hop Must Match

The single most important rule about jumbo frames is that every device in the forwarding path must support and be configured for the same MTU. A jumbo frame cannot cross a standard-MTU link unless a router fragments it at the IP layer; otherwise it is dropped. Most modern TCP stacks set the IP Don't Fragment (DF) bit by default to support Path MTU Discovery, so in practice oversized packets are dropped rather than fragmented. A Layer 2 switch discards an oversized frame silently, typically recording it only in an interface error counter, while a router discards it and returns an ICMP Fragmentation Needed message that firewalls or ACLs may filter. Either way, this failure mode is notoriously difficult to diagnose.

A classic failure scenario: a server NIC is configured for MTU 9000, the access-layer switch port supports MTU 9000, but the SVI on the distribution switch is left at the default 1500 bytes. Large frames arrive at the distribution switch, the DF bit is set, and the switch drops the oversized frames. If ICMP rate limiting or ACLs suppress the resulting fragmentation-needed messages, the sender never learns it must reduce its segment size. The TCP three-way handshake completes normally because SYN and ACK packets are well within 1500 bytes, but the connection stalls or resets the moment the first large data transfer begins — a textbook MTU black hole symptom that wastes significant troubleshooting time.

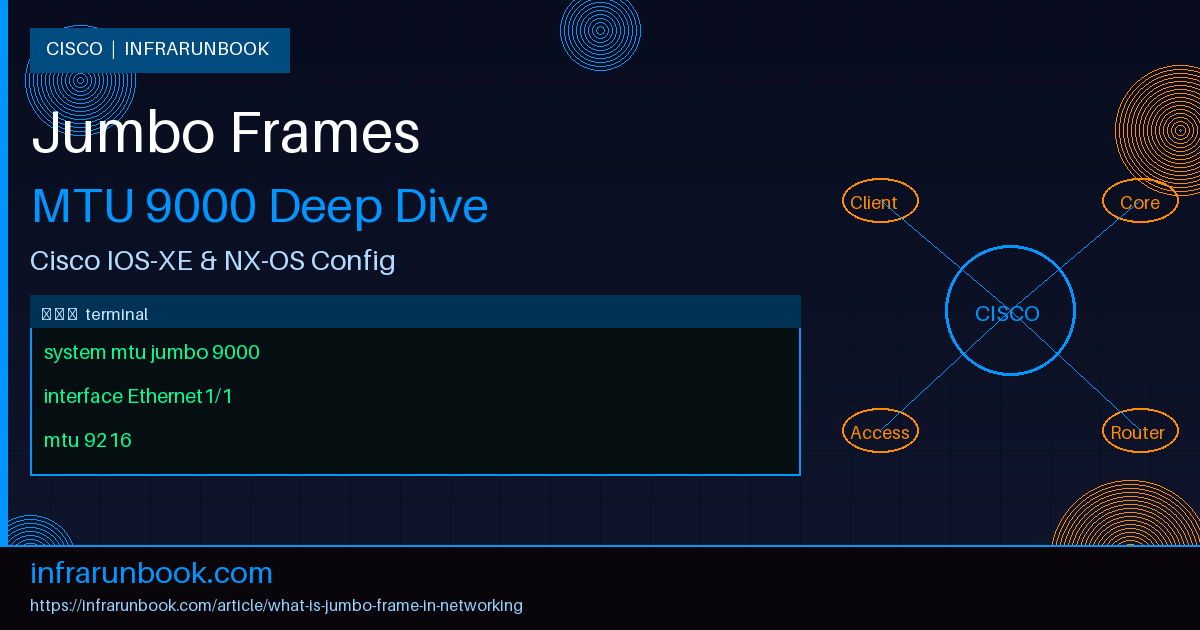

Configuring Jumbo Frames on Cisco IOS-XE Catalyst Switches

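On Cisco Catalyst switches running IOS-XE, the jumbo frame MTU is a system-wide setting rather than a per-interface one. The system mtu jumbo command sets the Layer 2 switching MTU for all gigabit and higher-speed ports. This command requires a switch reload before it takes effect, a critical detail that is frequently missed in production deployments. Command syntax varies by model and release (Catalyst 9000 switches, for example, use system mtu followed by a value of up to 9198 bytes), so confirm the exact form in the platform's configuration guide.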

sw-infrarunbook-01# configure terminal
sw-infrarunbook-01(config)# system mtu jumbo 9000
Changes to the system jumbo MTU will not take effect until the next reload is done
sw-infrarunbook-01(config)# end
sw-infrarunbook-01# write memory
Building configuration...
[OK]
sw-infrarunbook-01# reload
Proceed with reload? [confirm]

For Layer 3 routed interfaces on IOS-XE, the IP MTU must be configured per interface using the ip mtu command. The ip mtu value must not exceed the interface's Layer 2 MTU. If the interface is a routed port or SVI, ensure the system MTU has already been set to accommodate the desired IP MTU before configuring the interface. A physical switchport must first be converted to a routed port with no switchport, and on platforms whose show system mtu output includes a Routing MTU line, routed interfaces may also be capped by that separate value, so confirm the platform's limits before relying on a 9000-byte IP MTU:

sw-infrarunbook-01# configure terminal
sw-infrarunbook-01(config)# interface Vlan10
sw-infrarunbook-01(config-if)# ip mtu 9000
sw-infrarunbook-01(config-if)# exit
sw-infrarunbook-01(config)# interface GigabitEthernet1/0/1
sw-infrarunbook-01(config-if)# no switchport
sw-infrarunbook-01(config-if)# ip mtu 9000
sw-infrarunbook-01(config-if)# end
sw-infrarunbook-01# write memory

After the reload, verify the system-level and interface-level MTU values to confirm the configuration is active:

sw-infrarunbook-01# show system mtu
System MTU size is 1500 bytes
System Jumbo MTU size is 9000 bytes
System Alternate MTU size is 1500 bytes
Routing MTU size is 1500 bytes
sw-infrarunbook-01# show interfaces GigabitEthernet1/0/1 | include MTU
MTU 9000 bytes, BW 1000000 Kbit/sec, DLY 10 usec,

Configuring Jumbo Frames on Cisco NX-OS Nexus Switches

Cisco NX-OS handles MTU configuration differently from IOS-XE. On Nexus platforms, MTU is configured per interface rather than globally, and changes take effect immediately without requiring a reload. The default interface MTU is still 1500 bytes, but NX-OS deployments commonly use 9216 bytes, the maximum interface MTU on most Nexus platforms, to leave room for FCoE, QinQ, and other encapsulation overhead common in data center environments.

sw-infrarunbook-01# configure terminal
sw-infrarunbook-01(config)# interface Ethernet1/1
sw-infrarunbook-01(config-if)# mtu 9216
sw-infrarunbook-01(config-if)# exit
sw-infrarunbook-01(config)# interface Ethernet1/2
sw-infrarunbook-01(config-if)# mtu 9216
sw-infrarunbook-01(config-if)# end
sw-infrarunbook-01# copy running-config startup-config

Some Nexus platforms, notably the Nexus 5000 and 6000 series, enforce Layer 2 MTU through network QoS policy maps; whether other Nexus families require this depends on platform and release, so check the configuration guide. On platforms that use this model, you must explicitly configure the jumbo MTU within the network QoS policy in addition to any per-interface settings:

sw-infrarunbook-01# configure terminal
sw-infrarunbook-01(config)# policy-map type network-qos jumbo-qos
sw-infrarunbook-01(config-pmap-nqos)# class type network-qos class-default
sw-infrarunbook-01(config-pmap-nqos-c)# mtu 9216
sw-infrarunbook-01(config-pmap-nqos-c)# exit
sw-infrarunbook-01(config-pmap-nqos)# exit
sw-infrarunbook-01(config)# system qos
sw-infrarunbook-01(config-sys-qos)# service-policy type network-qos jumbo-qos
sw-infrarunbook-01(config-sys-qos)# end
sw-infrarunbook-01# copy running-config startup-config

Verify the configured MTU and confirm QoS policy application on NX-OS:

sw-infrarunbook-01# show interface Ethernet1/1 | include MTU
MTU 9216 bytes, BW 10000000 Kbit, DLY 10 usec
sw-infrarunbook-01# show policy-map system type network-qos

Testing Jumbo Frame Connectivity End to End

After configuring jumbo frames across the infrastructure, always test end-to-end connectivity using oversized ICMP packets with the DF bit set. The Cisco IOS extended ping command supports a size argument and a df-bit option for exactly this purpose. On Cisco IOS, size sets the total IP datagram size, including the 20-byte IP header and 8-byte ICMP header, so size 9000 generates a full 9000-byte IP packet on the wire:

sw-infrarunbook-01# ping 192.168.10.50 size 9000 df-bit repeat 10
Type escape sequence to abort.
Sending 10, 9000-byte ICMP Echos to 192.168.10.50, timeout is 2 seconds:
Packet sent with the DF bit set
!!!!!!!!!!
Success rate is 100 percent (10/10), round-trip min/avg/max = 1/1/2 ms

If the ping fails or returns partial success, binary-search the packet size: start from a size known to work (such as 1500) and one that fails (such as 9000), and repeatedly test the midpoint to find the largest size that passes. Then repeat the test against each intermediate hop's address to identify the device with the undersized MTU. From a Linux host, the equivalent test uses the ping utility with the -M do flag to set the DF bit. Linux ping's -s option sets the ICMP payload rather than the total packet size, so use 8972 bytes, which is 9000 bytes minus the 20-byte IP header and 8-byte ICMP header:

[infrarunbook-admin@storage-host ~]$ ping -M do -s 8972 192.168.10.50 -c 10
PING 192.168.10.50 (192.168.10.50) 8972(9000) bytes of data.
8980 bytes from 192.168.10.50: icmp_seq=1 ttl=64 time=0.312 ms
8980 bytes from 192.168.10.50: icmp_seq=2 ttl=64 time=0.287 ms
8980 bytes from 192.168.10.50: icmp_seq=3 ttl=64 time=0.301 ms
--- 192.168.10.50 ping statistics ---
10 packets transmitted, 10 received, 0% packet loss, time 9011ms
rtt min/avg/max/mdev = 0.287/0.300/0.312/0.009 ms

Common Misconfigurations and Pitfalls

- Missing the IOS-XE reload: The system mtu jumbo command on Catalyst switches does not take effect until after a reload. Depending on the platform, show system mtu may already report the configured value (or list it as pending until the next reload) while the hardware continues to enforce the old MTU until the switch restarts. This is a frequent source of confusion during commissioning.

- Mixed MTU on port channels: All member links in an EtherChannel or LACP bundle must share the same MTU. Depending on the platform, a mismatch either prevents the member from joining the bundle (or suspends it) or causes intermittent failures where some flows succeed and others fail based on which physical link the hashing algorithm selects for a given flow.

- Forgetting SVIs and routed interfaces: Configuring jumbo MTU on physical switchports while leaving SVIs and routed interfaces at the default 1500 bytes is one of the most common misconfigurations in data center networks. Every Layer 3 boundary in the path — SVI, routed port, subinterface — must have its IP MTU explicitly configured.

- Server NIC and OS configuration: Even with the switch infrastructure correctly configured, each server NIC and its operating system must also be set to MTU 9000. On Linux, this is done with ip link set eth0 mtu 9000, which takes effect immediately but does not persist across reboots, so the setting must also be added to the distribution's network configuration. On Windows Server, the jumbo frame setting is found in the NIC driver's Advanced properties tab. Forgetting this step means the server continues to send standard-sized frames regardless of the switch configuration.

- WAN and firewall boundaries: Jumbo frames should be restricted to controlled Layer 2 domains. Internet-facing links almost universally enforce a 1500-byte MTU, and firewall appliances may have their own MTU constraints. Always verify MTU capabilities at every boundary before enabling jumbo frames on a segment that connects to external or multi-tenant infrastructure.
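The port-channel check in the list above can be partly automated. This sketch parses the MTU from lines in the "MTU <n> bytes" format printed by the show interface | include MTU commands used earlier and flags bundle members whose MTU differs from the majority. The member names and the 1500-byte third member are hypothetical, and how the lines are collected (SSH, an automation framework, or copy and paste) is left open.

```python
from __future__ import annotations

import re
from collections import Counter

# Matches the "MTU <n> bytes" field in IOS and NX-OS "show interface" output.
MTU_RE = re.compile(r"\bMTU (\d+) bytes")


def parse_mtu(show_line: str) -> int:
    """Extract the MTU value from one line of 'show interface' output."""
    match = MTU_RE.search(show_line)
    if match is None:
        raise ValueError(f"no MTU field in: {show_line!r}")
    return int(match.group(1))


def find_mtu_mismatches(members: dict[str, str]) -> dict[str, int]:
    """Return members whose MTU differs from the most common MTU in the bundle."""
    if not members:
        return {}
    mtus = {name: parse_mtu(line) for name, line in members.items()}
    expected, _count = Counter(mtus.values()).most_common(1)[0]
    return {name: mtu for name, mtu in mtus.items() if mtu != expected}


if __name__ == "__main__":
    # Hypothetical port-channel members and their 'show interface | include MTU' lines.
    bundle = {
        "Ethernet1/1": "MTU 9216 bytes, BW 10000000 Kbit, DLY 10 usec",
        "Ethernet1/2": "MTU 9216 bytes, BW 10000000 Kbit, DLY 10 usec",
        "Ethernet1/3": "MTU 1500 bytes, BW 10000000 Kbit, DLY 10 usec",
    }
    print(find_mtu_mismatches(bundle))
```

Taking the majority value as "expected" is a simple heuristic; with only two members that disagree, the choice is arbitrary, so comparing against an explicit target MTU may be preferable.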

Path MTU Discovery and Jumbo Frame Environments

Path MTU Discovery (PMTUD), defined in RFC 1191 for IPv4 and RFC 8201 for IPv6, is the mechanism TCP relies on to discover the smallest MTU along the end-to-end path. For IPv4, PMTUD sends packets with the DF bit set and listens for ICMP Type 3 Code 4 (Fragmentation Needed) messages, which an intermediate router sends when a packet is too large for the outgoing link MTU; the message carries the next-hop MTU, and the sender lowers its packet size accordingly. IPv6 routers never fragment, so the equivalent signal is ICMPv6 Type 2 (Packet Too Big).
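The feedback loop PMTUD relies on can be modeled in a few lines. This is a simplified sketch, not an implementation of RFC 1191: each hypothetical Hop has an egress MTU, a flag saying whether it is a Layer 3 device that can send Fragmentation Needed, and a flag saying whether that ICMP message is filtered on the way back. The sender lowers its packet size to the reported next-hop MTU and retries.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class Hop:
    name: str
    mtu: int                     # egress MTU toward the next hop
    layer3: bool                 # only Layer 3 devices can send ICMP Fragmentation Needed
    icmp_filtered: bool = False  # True if that ICMP never reaches the sender


def discover_path_mtu(hops: list[Hop], start_size: int) -> tuple[int | None, str]:
    """Return (working packet size, status) after simulated PMTUD with DF set."""
    size = start_size
    while True:
        for hop in hops:
            if size <= hop.mtu:
                continue  # packet fits, move on to the next hop
            if hop.layer3 and not hop.icmp_filtered:
                size = hop.mtu  # sender learns the next-hop MTU and retries
                break
            # Dropped with no usable feedback: the sender never learns a smaller size.
            return None, f"black hole at {hop.name}"
        else:
            return size, "ok"


if __name__ == "__main__":
    path = [
        Hop("access switch port", 9000, layer3=False),
        Hop("distribution SVI", 1500, layer3=True),
    ]
    print(discover_path_mtu(path, 9000))
    path[1].icmp_filtered = True
    print(discover_path_mtu(path, 9000))
    print(discover_path_mtu([Hop("access switch port", 1500, layer3=False)], 9000))
```

The three runs show PMTUD converging to 1500 when ICMP gets through, a black hole when the router's ICMP is filtered, and a black hole when the undersized hop is a Layer 2 switch that never sends ICMP at all.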

PMTUD's dependence on ICMP feedback is why a single misconfigured hop causes subtle, difficult-to-diagnose failures rather than immediate connection failures. The TCP three-way handshake succeeds because SYN and ACK packets are small. The first large data segment hits the undersized hop, which should generate a Fragmentation Needed ICMP message back to the sender. If that ICMP is filtered by a firewall or ACL, which is common in environments that block all ICMP for security reasons, the sender never reduces its segment size. The connection appears established but transfers stall indefinitely. This is the classic PMTUD black hole, and it is one of the most time-consuming issues to trace in a jumbo frame rollout. PMTUD cannot help at all when the undersized hop is a Layer 2 switch: switches drop oversized frames without sending any ICMP, so an MTU mismatch inside a single broadcast domain always behaves as a black hole.

Best practice in jumbo frame environments is to explicitly permit ICMP Type 3 Code 4 (and ICMPv6 Type 2, Packet Too Big, for IPv6) on all ACLs and firewall policies, even when all other ICMP is restricted. This single exception prevents PMTUD black holes at Layer 3 boundaries and carries little security risk, although it cannot compensate for an MTU mismatch inside a Layer 2 domain.
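When a black hole is suspected, the binary search on packet size described in the testing section can be sketched as follows. It assumes the path behaves monotonically (if a size passes, every smaller size passes) and uses a hypothetical probe callback. The linux_ping_probe helper shows one way to build that callback on the Linux ping flags used earlier (-M do, -s, -c), plus -W for a one-second reply timeout. Sizes are total IPv4 packet sizes, converted to a ping payload by subtracting 28 bytes of IP and ICMP headers.

```python
from __future__ import annotations

import subprocess
from typing import Callable

IPV4_ICMP_HEADERS = 20 + 8  # IPv4 header + ICMP echo header


def linux_payload_for(packet_size: int) -> int:
    """Payload for Linux 'ping -s' that yields an IPv4 packet of packet_size bytes."""
    return packet_size - IPV4_ICMP_HEADERS


def linux_ping_probe(host: str) -> Callable[[int], bool]:
    """Build a probe that sends one DF-set ping of the given total packet size."""
    def probe(packet_size: int) -> bool:
        cmd = ["ping", "-M", "do", "-s", str(linux_payload_for(packet_size)),
               "-c", "1", "-W", "1", host]
        result = subprocess.run(cmd, stdout=subprocess.DEVNULL, stderr=subprocess.DEVNULL)
        return result.returncode == 0
    return probe


def largest_passing_size(probe: Callable[[int], bool], low: int, high: int) -> int | None:
    """Binary search for the largest size in [low, high] for which probe() succeeds."""
    best = None
    while low <= high:
        mid = (low + high) // 2
        if probe(mid):
            best = mid      # mid works, so search larger sizes
            low = mid + 1
        else:
            high = mid - 1  # mid fails, so search smaller sizes
    return best


if __name__ == "__main__":
    # Simulated path whose smallest MTU is 1500; swap in
    # linux_ping_probe("192.168.10.50") to probe a real host from Linux.
    simulated = lambda size: size <= 1500
    print(largest_passing_size(simulated, 1280, 9000))  # 1500
```

Running the same search against each intermediate hop's address shows where the largest passing size drops, which points to the hop with the undersized MTU.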