What Is a Reverse Proxy?

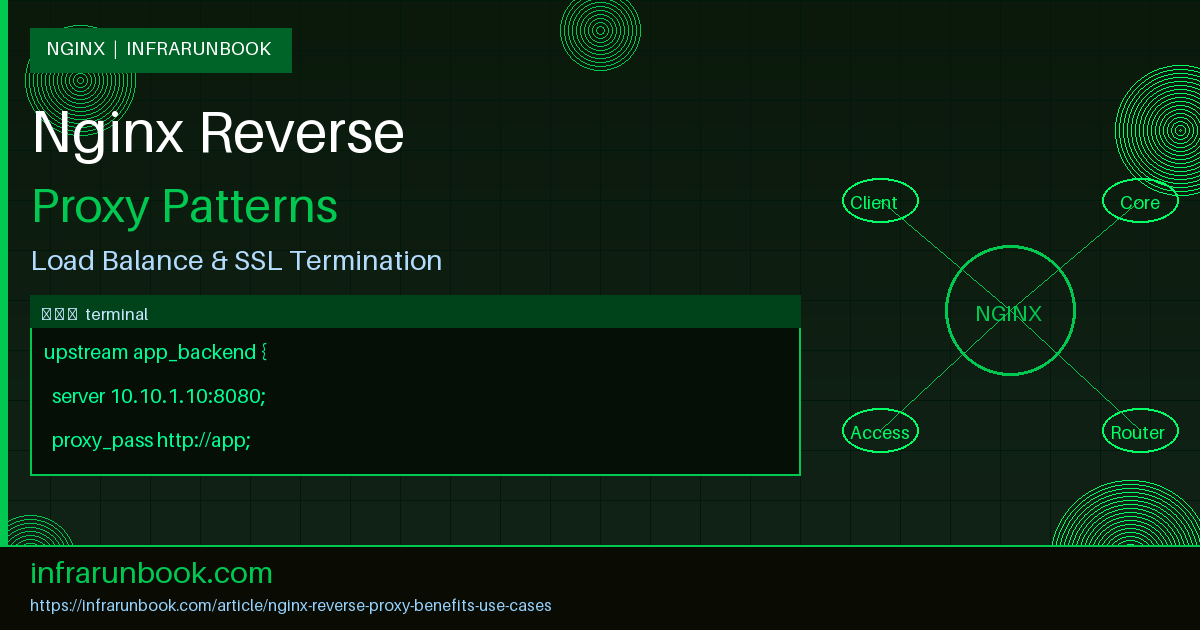

A reverse proxy sits between external clients and one or more backend servers, intercepting requests on behalf of those servers. Unlike a forward proxy—which acts on behalf of clients to reach external destinations—a reverse proxy accepts connections from the internet and forwards them internally, typically to application servers, APIs, or microservices that are not directly reachable from the outside world.

Nginx has become the dominant choice for reverse proxy deployments because of its event-driven, non-blocking architecture. A single Nginx worker process can handle tens of thousands of simultaneous connections with minimal memory overhead, making it dramatically more efficient than thread-per-connection models under heavy load. This guide covers the most important production use cases with real configuration examples tested on sw-infrarunbook-01 running Ubuntu 22.04 LTS with Nginx 1.24.

Core Architecture of an Nginx Reverse Proxy

When Nginx operates as a reverse proxy it listens on one or more public-facing ports (commonly 80 and 443) and uses the `proxy_pass` directive to forward matched requests to upstream backends. The upstream backend pool can be a single server or a named group defined inside an `upstream` block, giving you a single place to manage your backend fleet.

A minimal but production-ready reverse proxy configuration looks like this:

```nginx
# /etc/nginx/nginx.conf

worker_processes auto;

events {
    worker_connections 4096;
    use epoll;
    multi_accept on;
}

http {
    include /etc/nginx/mime.types;
    default_type application/octet-stream;
    sendfile on;
    keepalive_timeout 65;

    upstream app_backend {
        server 10.10.1.10:8080;
        server 10.10.1.11:8080;
        server 10.10.1.12:8080;
    }

    server {
        listen 80;
        server_name solvethenetwork.com www.solvethenetwork.com;

        location / {
            proxy_pass http://app_backend;
            proxy_set_header Host $host;
            proxy_set_header X-Real-IP $remote_addr;
            proxy_set_header X-Forwarded-For $proxy_add_x_forwarded_for;
            proxy_set_header X-Forwarded-Proto $scheme;
            proxy_connect_timeout 10s;
            proxy_read_timeout 60s;
            proxy_send_timeout 60s;
        }
    }
}
```

This distributes incoming traffic across three application servers on the 10.10.1.0/24 subnet using the default round-robin method. Because every proxied connection reaches the backends from the proxy's own address, the `proxy_set_header` directives pass along the original client address (`X-Real-IP`, `X-Forwarded-For`), the requested hostname (`Host`), and the client-facing scheme (`X-Forwarded-Proto`). Backends need this metadata for accurate logging, geolocation, and security auditing.
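To make the header chain concrete, here is a minimal Python sketch of how `X-Forwarded-For` grows as a request passes through a proxy, following the documented value of `$proxy_add_x_forwarded_for` (the incoming header with `$remote_addr` appended after a comma, or just `$remote_addr` when the header is absent). It also shows one way a backend might recover the client address: walk the list from the right and skip hops it trusts, because entries further left can be forged by the client. The function names, the trusted 10.10.0.0/16 range, and the proxy address 10.10.0.5 are hypothetical placeholders for your own proxy tier.

```python
import ipaddress


def proxy_add_x_forwarded_for(incoming_xff, remote_addr):
    """Mimic the documented value of Nginx's $proxy_add_x_forwarded_for."""
    if incoming_xff:
        return f"{incoming_xff}, {remote_addr}"
    return remote_addr


def client_ip_from_xff(peer_addr, xff, trusted_networks):
    """Scan right to left and return the first hop that is not a trusted proxy.

    peer_addr is the TCP peer the backend actually sees (the reverse proxy).
    Anything left of the first untrusted hop may be client-supplied, so it is ignored.
    """
    nets = [ipaddress.ip_network(n) for n in trusted_networks]
    hops = [h.strip() for h in xff.split(",") if h.strip()] if xff else []
    chain = hops + [peer_addr]
    for addr in reversed(chain):
        try:
            ip = ipaddress.ip_address(addr)
        except ValueError:
            return None  # malformed entry: the client cannot be identified reliably
        if not any(ip in net for net in nets):
            return addr
    return chain[0]  # every hop is trusted: fall back to the leftmost entry


if __name__ == "__main__":
    # Hypothetical: a client at 198.51.100.7 forges "203.0.113.9" in its own header
    forged = proxy_add_x_forwarded_for("203.0.113.9", "198.51.100.7")
    print(forged)
    print(client_ip_from_xff("10.10.0.5", forged, ["10.10.0.0/16"]))
```

The right-to-left scan is the design choice that matters: the forged leftmost entry is ignored, and the address Nginx actually observed (198.51.100.7) is returned.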

Benefit 1: SSL/TLS Termination

SSL termination is one of the most operationally valuable use cases for an Nginx reverse proxy. Backend application servers are frequently written without TLS support, or they use plain HTTP internally for performance reasons. Nginx accepts encrypted connections from clients, decrypts them at the proxy layer, then forwards plain HTTP to the backend. This centralizes certificate management and offloads all cryptographic work from application servers.

```nginx
server {
    listen 443 ssl;
    server_name solvethenetwork.com;

    ssl_certificate /etc/ssl/certs/solvethenetwork.com.crt;
    ssl_certificate_key /etc/ssl/private/solvethenetwork.com.key;
    ssl_protocols TLSv1.2 TLSv1.3;
    ssl_ciphers ECDHE-ECDSA-AES128-GCM-SHA256:ECDHE-RSA-AES128-GCM-SHA256:ECDHE-ECDSA-AES256-GCM-SHA384:ECDHE-RSA-AES256-GCM-SHA384;
    ssl_prefer_server_ciphers on;
    ssl_session_cache shared:SSL:10m;
    ssl_session_timeout 1d;

    # OCSP stapling also needs a resolver directive to look up the OCSP responder,
    # and ssl_stapling_verify needs the issuer, intermediate, and root certificates
    # supplied through ssl_trusted_certificate.
    ssl_stapling on;
    ssl_stapling_verify on;

    add_header Strict-Transport-Security "max-age=63072000; includeSubDomains; preload" always;
    add_header X-Frame-Options DENY always;
    add_header X-Content-Type-Options nosniff always;

    location / {
        proxy_pass http://10.10.1.10:8080;
        proxy_set_header Host $host;
        proxy_set_header X-Real-IP $remote_addr;
        proxy_set_header X-Forwarded-For $proxy_add_x_forwarded_for;
        proxy_set_header X-Forwarded-Proto https;
    }
}

server {
    listen 80;
    server_name solvethenetwork.com;
    return 301 https://$host$request_uri;
}
```

The `ssl_session_cache shared:SSL:10m` directive creates a 10 MB shared memory zone that stores TLS session parameters across all worker processes, so returning clients can resume a session with an abbreviated handshake instead of a full one. The nginx documentation estimates about 4,000 sessions per megabyte, or roughly 40,000 sessions for this zone. OCSP stapling removes the latency of client-side OCSP lookups by letting Nginx serve a cached, CA-signed certificate status response during the handshake. The HTTP-to-HTTPS redirect in the second server block sends every cleartext request to its HTTPS URL before any application logic runs.
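One way to confirm the result from the client side is a short Python script built on the standard `ssl` and `http.client` modules. The sketch below creates a verifying client context that refuses anything older than TLS 1.2 (mirroring `ssl_protocols TLSv1.2 TLSv1.3`), reports the negotiated protocol and cipher, and checks that plain HTTP answers with a redirect. The function names are hypothetical, and the network calls are shown only as commented usage because they need access to the live server.

```python
import http.client
import socket
import ssl


def make_client_context() -> ssl.SSLContext:
    """Verifying client context that, like the server config, rejects TLS < 1.2."""
    ctx = ssl.create_default_context()  # certificate and hostname checks enabled
    ctx.minimum_version = ssl.TLSVersion.TLSv1_2
    return ctx


def probe_tls(host: str, port: int = 443, timeout: float = 5.0) -> dict:
    """Connect once and report the negotiated protocol version and cipher suite."""
    ctx = make_client_context()
    with socket.create_connection((host, port), timeout=timeout) as sock:
        with ctx.wrap_socket(sock, server_hostname=host) as tls:
            name, _protocol, bits = tls.cipher()
            return {"version": tls.version(), "cipher": name, "bits": bits}


def check_redirect(host: str, timeout: float = 5.0):
    """Send a plain-HTTP request and return (status, Location header)."""
    conn = http.client.HTTPConnection(host, 80, timeout=timeout)
    try:
        conn.request("GET", "/")
        resp = conn.getresponse()
        return resp.status, resp.getheader("Location")
    finally:
        conn.close()


# Example usage (requires network access to the server):
#   print(probe_tls("solvethenetwork.com"))
#   print(check_redirect("solvethenetwork.com"))  # the config above should give 301
```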

Benefit 2: Load Balancing Across Backend Pools

The `upstream` block supports multiple load balancing algorithms. Selecting the right one for your workload can meaningfully improve throughput and reduce tail latency.

- Round Robin (default): Each request goes to the next server in sequence, in proportion to each server's `weight` if one is set. Appropriate for stateless applications where all backends have equal capacity and request duration is uniform.

- Least Connections (`least_conn`): Routes each request to the server with the fewest active connections, taking server weights into account. Significantly better for workloads where individual request duration varies widely, such as file uploads or database-heavy queries.

- IP Hash (`ip_hash`): Uses the client IP address to consistently route requests to the same backend; for IPv4 clients, only the first three octets form the hash key. Provides session stickiness without requiring application-level shared session storage.

- Generic Hash (`hash`): Allows hashing on any Nginx variable, including `$request_uri`, enabling consistent routing to maximize upstream cache hit rates.
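To build intuition for how the first two methods spread load, here is a small Python model. `pick_round_robin` follows the smooth weighted round-robin scheme used by Nginx's round-robin balancer, and `pick_least_conn` compares active connections divided by weight. Both are simplified sketches: they ignore failures, backup servers, and the fact that each worker process keeps its own counters unless a shared `zone` is configured. The `Peer` class and function names are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Peer:
    name: str
    weight: int = 1
    current_weight: int = 0  # state for smooth weighted round robin
    active_conns: int = 0    # state for least connections


def pick_round_robin(peers):
    """Raise every peer by its weight, pick the highest, lower the winner by the total."""
    total = 0
    best = None
    for p in peers:
        p.current_weight += p.weight
        total += p.weight
        if best is None or p.current_weight > best.current_weight:
            best = p
    best.current_weight -= total
    return best


def pick_least_conn(peers):
    """Fewest active connections per unit of weight; ties go to the first peer here."""
    best = None
    for p in peers:
        # Cross-multiply to compare conns/weight without floating point
        if best is None or p.active_conns * best.weight < best.active_conns * p.weight:
            best = p
    return best


if __name__ == "__main__":
    pool = [Peer("10.10.1.10", 3), Peer("10.10.1.11", 2), Peer("10.10.1.12", 1)]
    print([pick_round_robin(pool).name for _ in range(6)])
```

With weights 3, 2, and 1, six picks produce the order 10.10.1.10, 10.10.1.11, 10.10.1.10, 10.10.1.12, 10.10.1.11, 10.10.1.10: the heaviest server gets half the traffic, but its turns are interleaved rather than sent in a block. In Nginx itself, ties under `least_conn` are broken with weighted round robin rather than list order.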

```nginx
upstream api_cluster {
    least_conn;
    server 10.10.1.10:9000 weight=3;
    server 10.10.1.11:9000 weight=2;
    server 10.10.1.12:9000 weight=1;
    server 10.10.1.19:9000 backup;
    keepalive 64;
}

server {
    listen 443 ssl;
    server_name api.solvethenetwork.com;

    ssl_certificate /etc/ssl/certs/solvethenetwork.com.crt;
    ssl_certificate_key /etc/ssl/private/solvethenetwork.com.key;
    ssl_protocols TLSv1.2 TLSv1.3;

    location /api/ {
        proxy_pass http://api_cluster;
        proxy_http_version 1.1;
        proxy_set_header Connection "";
        proxy_set_header Host $host;
        proxy_set_header X-Real-IP $remote_addr;
        proxy_next_upstream error timeout http_502 http_503;
        proxy_next_upstream_tries 2;
    }
}
```

The `keepalive 64` directive lets each worker process keep up to 64 idle keep-alive connections to the servers in this upstream group, so most proxied requests reuse an existing TCP connection instead of paying for a new handshake. Clearing the `Connection` header along with `proxy_http_version 1.1` enables HTTP/1.1 persistent connections toward the backend. The `proxy_next_upstream` directive provides automatic failover: if a backend fails with an error or timeout, or returns a 502 or 503, Nginx retries the request on another server, transparently to the client. `proxy_next_upstream_tries 2` caps this at two attempts in total. Note that non-idempotent requests (POST, LOCK, PATCH) are not passed to another server once they have been sent to an upstream, unless the `non_idempotent` parameter is added.
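The retry rules above can be modeled in a few lines of Python. This is a simplified sketch, not Nginx's code: each attempt's outcome is a hypothetical string, the retryable set mirrors `proxy_next_upstream error timeout http_502 http_503`, and `max_tries` mirrors `proxy_next_upstream_tries 2`. As a simplification, only `connect_error` is treated as "request not yet sent"; in reality an error or timeout can happen either before or after the request reaches the backend.

```python
# Outcomes that proxy_next_upstream error timeout http_502 http_503 would retry
RETRYABLE = {"connect_error", "timeout", "502", "503"}
# Methods Nginx will not replay once sent (without the non_idempotent parameter)
NON_IDEMPOTENT = {"POST", "LOCK", "PATCH"}


def proxy_with_retries(method, outcomes, max_tries=2):
    """Walk per-server outcomes in order; return (last outcome seen, attempts used).

    outcomes: what each successive upstream server would do with this request.
    """
    attempts = 0
    outcome = None
    for outcome in outcomes:
        attempts += 1
        if outcome not in RETRYABLE:
            break  # success or a status that is not configured for retry
        if method in NON_IDEMPOTENT and outcome != "connect_error":
            break  # the request already reached a backend: do not replay it
        if max_tries and attempts >= max_tries:
            break  # proxy_next_upstream_tries reached
    return outcome, attempts


if __name__ == "__main__":
    print(proxy_with_retries("GET", ["503", "200"]))   # retried once, succeeds
    print(proxy_with_retries("POST", ["503", "200"]))  # not retried
```

When the last attempt ends in a connection error or timeout, Nginx itself answers the client with a 502 or 504 rather than passing a backend response through.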

Benefit 3: Response Caching

Nginx can cache upstream responses to disk and serve subsequent identical requests without contacting the backend at all. This reduces end-user latency and dramatically decreases backend load for cacheable content such as static assets, rendered HTML pages, and public API responses.

```nginx
http {
    proxy_cache_path /var/cache/nginx/stn_cache
                     levels=1:2
                     keys_zone=stn_cache:64m
                     max_size=10g
                     inactive=60m
                     use_temp_path=off;

    server {
        listen 443 ssl;
        server_name solvethenetwork.com;

        ssl_certificate /etc/ssl/certs/solvethenetwork.com.crt;
        ssl_certificate_key /etc/ssl/private/solvethenetwork.com.key;

        location /static/ {
            proxy_pass http://app_backend;
            proxy_cache stn_cache;
            proxy_cache_valid 200 302 10m;
            proxy_cache_valid 404 1m;
            proxy_cache_use_stale error timeout updating http_500 http_502 http_503;
            proxy_cache_lock on;
            add_header X-Cache-Status $upstream_cache_status;
        }

        location /api/ {
            proxy_pass http://app_backend;
            # Enable the cache here so the Authorization checks below take effect;
            # entry lifetimes then come from the upstream's Cache-Control/Expires headers
            proxy_cache stn_cache;
            proxy_no_cache $http_authorization;
            proxy_cache_bypass $http_authorization;
        }
    }
}
```

The `proxy_cache_use_stale` directive is particularly important for availability: when the upstream errors out, times out, or returns a 500, 502, or 503, Nginx serves the stale cached copy rather than an error page, and the `updating` parameter lets it keep serving stale content while a fresh copy is fetched. With `proxy_cache_lock on`, only one request at a time populates a missing cache entry; concurrent requests for the same key wait instead of all hitting the backend. The `X-Cache-Status` response header exposes `$upstream_cache_status` (for example HIT, MISS, BYPASS, EXPIRED, or STALE), which is essential for tuning cache efficiency. In the `/api/` block, any request with a non-empty `Authorization` header skips the cache lookup (`proxy_cache_bypass`) and its response is never stored (`proxy_no_cache`), preventing authenticated user responses from leaking to other clients.
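Two details of this setup are easy to check with a short Python sketch. First, Nginx names each cache file after the MD5 hex digest of its cache key, and with `levels=1:2` it places the file under a one-character directory taken from the end of the digest and a two-character directory taken from the characters before it; the nginx documentation's own example is `/data/nginx/cache/c/29/b7f54b2df7773722d382f4809d65029c`. Second, `proxy_cache_bypass` and `proxy_no_cache` trigger when at least one of their values is non-empty and not equal to "0". The helper names are hypothetical, and the sample key assumes the default `proxy_cache_key` of `$scheme$proxy_host$request_uri` with a made-up path, giving something like `httpsapp_backend/static/app.css`.

```python
import hashlib
import posixpath


def cache_path_from_digest(cache_root, digest, levels=(1, 2)):
    """Build the levels= directory layout, taking characters from the end of the digest."""
    parts = []
    end = len(digest)
    for width in levels:
        parts.append(digest[end - width:end])
        end -= width
    return posixpath.join(cache_root, *parts, digest)


def cache_file_path(cache_root, cache_key, levels=(1, 2)):
    """Locate the on-disk file that would hold the entry for cache_key."""
    digest = hashlib.md5(cache_key.encode()).hexdigest()
    return cache_path_from_digest(cache_root, digest, levels)


def directive_is_true(*values):
    """proxy_cache_bypass / proxy_no_cache rule: any value that is non-empty and not "0"."""
    return any(v not in ("", "0") for v in values)


# Example usage (hypothetical key for a made-up asset):
#   cache_file_path("/var/cache/nginx/stn_cache", "httpsapp_backend/static/app.css")
#   directive_is_true("Bearer abc123")  # True: the /api/ request skips the cache
```

A missing `Authorization` header makes `$http_authorization` an empty string, which is why anonymous requests are still cached.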

Benefit 4: WebSocket Proxying

WebSocket connections begin as standard HTTP/1.1 requests carrying an `Upgrade: websocket` header. By default, Nginx treats `Upgrade` and `Connection` as hop-by-hop headers and does not pass them to the backend. Explicit configuration is required to proxy WebSocket traffic correctly.

```nginx
upstream ws_backend {
    server 10.10.1.10:8765;
    server 10.10.1.11:8765;
}

server {
    listen 443 ssl;
    server_name ws.solvethenetwork.com;

    ssl_certificate /etc/ssl/certs/solvethenetwork.com.crt;
    ssl_certificate_key /etc/ssl/private/solvethenetwork.com.key;

    location /ws/ {
        proxy_pass http://ws_backend;
        proxy_http_version 1.1;
        proxy_set_header Upgrade $http_upgrade;
        proxy_set_header Connection "upgrade";
        proxy_set_header Host $host;
        proxy_set_header X-Real-IP $remote_addr;
        proxy_read_timeout 3600s;
        proxy_send_timeout 3600s;
        proxy_buffering off;
    }
}
```

Setting both `proxy_read_timeout` and `proxy_send_timeout` to 3600 seconds lets a WebSocket connection sit idle for up to an hour before Nginx closes it (the default is 60 seconds), which suits chat applications, live dashboards, and collaborative tools; applications that can be quiet for longer usually send periodic ping frames to keep the connection active. After the `101 Switching Protocols` response, Nginx relays WebSocket frames in both directions as they arrive, so `proxy_buffering off` does not change how frames are delivered. It matters for ordinary streaming HTTP responses, such as Server-Sent Events or long polling, that the same location might also serve.
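The `Upgrade` and `Connection` headers must reach the backend because the backend completes the handshake itself. As defined in RFC 6455, the server proves it understood the upgrade by returning `Sec-WebSocket-Accept`: the Base64-encoded SHA-1 of the client's `Sec-WebSocket-Key` concatenated with a fixed GUID. The Python sketch below computes that value and builds the headers of a client's opening handshake; the helper names are hypothetical, and the test vector is the one published in the RFC.

```python
import base64
import hashlib
import os

WS_GUID = "258EAFA5-E914-47DA-95CA-C5AB0DC85B11"  # fixed value from RFC 6455


def websocket_accept(sec_websocket_key: str) -> str:
    """Value the backend must send back in the Sec-WebSocket-Accept header."""
    digest = hashlib.sha1((sec_websocket_key + WS_GUID).encode("ascii")).digest()
    return base64.b64encode(digest).decode("ascii")


def upgrade_request_headers(host: str) -> dict:
    """Headers a client sends on its HTTP/1.1 opening handshake."""
    key = base64.b64encode(os.urandom(16)).decode("ascii")  # random 16-byte nonce
    return {
        "Host": host,
        "Upgrade": "websocket",
        "Connection": "Upgrade",
        "Sec-WebSocket-Key": key,
        "Sec-WebSocket-Version": "13",
    }


if __name__ == "__main__":
    print(websocket_accept("dGhlIHNhbXBsZSBub25jZQ=="))  # s3pPLMBiTxaQ9kYGzzhZRbK+xOo=
```

If Nginx dropped `Upgrade`, the backend would see an ordinary GET and never answer with `101 Switching Protocols`, so the client's handshake would fail.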

Benefit 5: Path-Based Request Routing to Multiple Services

A single Nginx instance on sw-infrarunbook-01 can route requests to entirely different backend services based solely on the URL path. This is the foundation of microservice gateway patterns, where one external domain fronts dozens of independent internal services.

```nginx
server {
    listen 443 ssl;
    server_name solvethenetwork.com;

    ssl_certificate /etc/ssl/certs/solvethenetwork.com.crt;
    ssl_certificate_key /etc/ssl/private/solvethenetwork.com.key;

    # Authentication service on 10.10.1.10
    location /auth/ {
        proxy_pass http://10.10.1.10:8001/;
        proxy_set_header Host $host;
        proxy_set_header X-Forwarded-For $proxy_add_x_forwarded_for;
    }

    # User profile API on 10.10.1.11
    location /users/ {
        proxy_pass http://10.10.1.11:8002/;
        proxy_set_header Host $host;
        proxy_set_header X-Forwarded-For $proxy_add_x_forwarded_for;
    }

    # Metrics endpoint restricted to management subnet only
    location /metrics {
        allow 192.168.10.0/24;
        deny all;
        proxy_pass http://10.10.1.12:9090/metrics;
    }

    # Static assets served directly from disk
    location /assets/ {
        root /var/www/solvethenetwork;
        expires 30d;
        add_header Cache-Control "public, immutable";
    }
}
```

The trailing slash on `proxy_pass http://10.10.1.10:8001/;` causes Nginx to replace the matched `/auth/` prefix with `/` before forwarding the request, so the backend receives `/login` rather than `/auth/login`. Without that trailing slash, the full original path including the location prefix is forwarded. This distinction is one of the most common sources of misconfiguration when building microservice gateways with Nginx.
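The prefix rule can be modeled as a small Python function. This is a sketch for plain prefix locations only: it assumes no regex locations, no `rewrite`, and no variables in `proxy_pass`, and it ignores Nginx's URI normalization. The function name is hypothetical.

```python
from urllib.parse import urlsplit


def upstream_url(request_uri: str, location_prefix: str, proxy_pass: str) -> str:
    """Return the URL Nginx would request from the backend for a prefix location."""
    if not request_uri.startswith(location_prefix):
        raise ValueError("request does not match this location")
    target = urlsplit(proxy_pass)
    origin = f"{target.scheme}://{target.netloc}"
    if target.path:
        # proxy_pass has a URI part (even just "/"): it replaces the matched prefix
        return origin + target.path + request_uri[len(location_prefix):]
    # No URI part: the original request URI is passed through unchanged
    return origin + request_uri


if __name__ == "__main__":
    print(upstream_url("/auth/login", "/auth/", "http://10.10.1.10:8001/"))
    print(upstream_url("/auth/login", "/auth/", "http://10.10.1.10:8001"))
```

The first call yields `http://10.10.1.10:8001/login` and the second `http://10.10.1.10:8001/auth/login`. The same rule explains why `location /metrics` (no trailing slash) also forwards a request such as `/metrics/extra` as `/metrics/extra`: a prefix location matches any URI that starts with it.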

Benefit 6: Security Header Enforcement and Header Sanitization

The reverse proxy layer is the ideal enforcement point for security-relevant HTTP response headers. Because Nginx applies them uniformly regardless of what each backend application returns, you get consistent security policy across a heterogeneous application fleet without modifying individual services.

```nginx
server {
    listen 443 ssl;
    server_name solvethenetwork.com;

    ssl_certificate /etc/ssl/certs/solvethenetwork.com.crt;
    ssl_certificate_key /etc/ssl/private/solvethenetwork.com.key;

    # Strip headers that reveal internal technology stack
    proxy_hide_header X-Powered-By;
    proxy_hide_header X-AspNet-Version;
    # more_clear_headers comes from the third-party headers-more module
    more_clear_headers Server;

    # Enforce security policy across all responses
    add_header Content-Security-Policy "default-src 'self'; script-src 'self'; object-src 'none'" always;
    add_header Permissions-Policy "geolocation=(), microphone=()" always;
    add_header Referrer-Policy "strict-origin-when-cross-origin" always;
    add_header X-Frame-Options "SAMEORIGIN" always;
    add_header X-Content-Type-Options "nosniff" always;

    location / {
        proxy_pass http://app_backend;
        proxy_set_header Host $host;
        proxy_set_header X-Real-IP $remote_addr;
        proxy_set_header X-Forwarded-For $proxy_add_x_forwarded_for;
        proxy_set_header X-Forwarded-Proto $scheme;
    }
}
```

The `always` parameter on `add_header` ensures security headers are included on every response, including 4xx and 5xx errors. Without it, Nginx adds headers only to responses with status 200, 201, 204, 206, 301, 302, 303, 304, 307, or 308. The `more_clear_headers` directive requires the third-party `ngx_headers_more` module and is not available in stock Nginx. Without that module, keep in mind that Nginx already drops the backend's own `Server` header by default and sends its own instead, so `proxy_hide_header Server` adds nothing; setting `server_tokens off;` at least removes the Nginx version number from that header.
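To verify that the headers actually reach clients, including on error pages where `always` matters, a short Python check can fetch a normal page and a deliberately missing one and report which expected headers are absent and which stack-revealing headers leaked through. This is a sketch: the header lists mirror the config above, `/nonexistent-page` is a hypothetical path chosen to trigger a 404, and the helper names are illustrative.

```python
import urllib.error
import urllib.request

EXPECTED = [
    "Content-Security-Policy",
    "Permissions-Policy",
    "Referrer-Policy",
    "X-Frame-Options",
    "X-Content-Type-Options",
]
LEAKY = ["X-Powered-By", "X-AspNet-Version"]


def audit_headers(header_names):
    """Return (missing expected headers, leaked stack headers); names are case-insensitive."""
    present = {name.lower() for name in header_names}
    missing = [h for h in EXPECTED if h.lower() not in present]
    leaked = [h for h in LEAKY if h.lower() in present]
    return missing, leaked


def fetch_header_names(url, timeout=5.0):
    """Return (status, header names), including for 4xx/5xx responses."""
    try:
        with urllib.request.urlopen(url, timeout=timeout) as resp:
            return resp.status, list(resp.headers.keys())
    except urllib.error.HTTPError as err:  # error responses still carry headers
        return err.code, list(err.headers.keys())


# Example usage (requires network access to the server):
#   for path in ("/", "/nonexistent-page"):
#       status, names = fetch_header_names("https://solvethenetwork.com" + path)
#       print(path, status, audit_headers(names))
```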

Benefit 7: Rate Limiting and Connection Control

Nginx's `limit_req` and `limit_conn` modules implement leaky-bucket request rate limiting and concurrent connection limits, respectively, at the proxy layer, before requests ever reach application servers. This provides a critical defense against brute-force credential attacks, API abuse, and traffic floods.

```nginx
http {
    # 30r/m allows one request every 2 seconds per client IP
    limit_req_zone $binary_remote_addr zone=api_ratelimit:10m rate=30r/m;
    limit_conn_zone $binary_remote_addr zone=conn_limit:10m;

    server {
        listen 443 ssl;
        server_name solvethenetwork.com;

        ssl_certificate /etc/ssl/certs/solvethenetwork.com.crt;
        ssl_certificate_key /etc/ssl/private/solvethenetwork.com.key;

        # Strict rate limit on the login endpoint
        location /auth/login {
            limit_req zone=api_ratelimit burst=5 nodelay;
            limit_conn conn_limit 10;
            limit_req_status 429;
            proxy_pass http://10.10.1.10:8001/login;
        }

        # Admin panel restricted to RFC 1918 management networks
        location /admin/ {
            allow 192.168.10.0/24;
            allow 10.10.0.0/16;
            deny all;
            proxy_pass http://10.10.1.12:8080/admin/;
        }
    }
}
```

The `burst=5 nodelay` combination lets up to five requests in excess of the defined rate be processed immediately, without artificial delay; requests beyond that burst are rejected with the `limit_req_status` code (429 here; the default is 503). Without `nodelay`, burst requests are queued and released at the defined rate, one every two seconds at 30r/m, which adds latency for legitimate users during brief spikes. The `$binary_remote_addr` variable is used instead of `$remote_addr` because it is a fixed 4 bytes for IPv4 addresses (16 for IPv6) rather than a text string of up to 15 characters for IPv4 and longer for IPv6, which keeps each stored state small. Per the nginx documentation, a 1 MB zone holds about 16,000 64-byte states or about 8,000 128-byte states (depending on platform), so each 10m zone here can track roughly 80,000 to 160,000 client addresses.
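The burst and `nodelay` behavior is easier to see in a simulation. The Python sketch below models the leaky-bucket accounting for a single client key: each accepted request adds one unit of "excess", the excess drains at the configured rate, and a request is rejected when its excess would exceed `burst`. It is a simplified model (one key, no `delay=` parameter, no shared-memory details), and the `LeakyBucket` class is hypothetical.

```python
class LeakyBucket:
    """Per-client model of limit_req with rate, burst, and optional nodelay."""

    def __init__(self, rate_per_sec: float, burst: int, nodelay: bool):
        self.rate = rate_per_sec
        self.burst = burst
        self.nodelay = nodelay
        self.excess = 0.0  # requests currently queued above the steady rate
        self.last = None   # time of the last accepted request

    def request(self, now: float):
        """Return None if rejected, else the delay in seconds before proxying."""
        if self.last is None:
            excess = 0.0  # first request seen from this client
        else:
            drained = self.rate * (now - self.last)
            excess = max(self.excess - drained + 1, 0.0)
        if excess > self.burst:
            return None  # rejected (429 in this config); state is left unchanged
        self.excess, self.last = excess, now
        return 0.0 if self.nodelay else excess / self.rate


if __name__ == "__main__":
    for nodelay in (True, False):
        bucket = LeakyBucket(rate_per_sec=30 / 60, burst=5, nodelay=nodelay)
        print("nodelay" if nodelay else "queued", [bucket.request(0.0) for _ in range(10)])
```

With `rate=30r/m` and `burst=5`, ten simultaneous requests yield six accepted and four rejected in both modes. With `nodelay` all six are proxied immediately; without it they are held for 0, 2, 4, 6, 8, and 10 seconds.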

Upstream Monitoring and Structured Access Logging

Capturing upstream-specific timing variables in your access log is fundamental to diagnosing reverse proxy performance problems. Nginx exposes granular upstream metrics that reveal exactly where latency originates in the request path.

```nginx
http {
    log_format upstream_timing
        '$remote_addr - $remote_user [$time_local] '
        '"$request" $status $body_bytes_sent '
        'rt=$request_time uct=$upstream_connect_time '
        'uht=$upstream_header_time urt=$upstream_response_time '
        'cs=$upstream_cache_status ups=$upstream_addr';

    access_log /var/log/nginx/solvethenetwork.access.log upstream_timing buffer=32k flush=5s;
    error_log /var/log/nginx/solvethenetwork.error.log warn;
}
```

All three upstream timers start when Nginx begins connecting to the backend. `$upstream_connect_time` is the time spent establishing the connection (including the TLS handshake for HTTPS upstreams), `$upstream_header_time` is the time until the response header has been received, and `$upstream_response_time` is the time until the full response, body included, has been received. When `$upstream_connect_time` is high, suspect a network problem or a backend that cannot accept connections fast enough. When `$upstream_header_time` is high but connect time is low, the backend application itself is slow to respond. If a request was retried on several servers, each of these variables and `$upstream_addr` hold one comma-separated value per attempt. The `buffer=32k flush=5s` parameters batch log writes to reduce I/O pressure on the proxy host.
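Because the format is fixed, a small Python parser can pull these fields out of the log and estimate backend processing time as `uht` minus `uct` for the final attempt. This sketch assumes the exact `upstream_timing` format above; the sample lines in the tests are made up, and the function names are hypothetical. Timing fields may hold `-` when no upstream was contacted (for example on a cache HIT) or several values separated by commas or colons when a request was retried or internally redirected.

```python
import re

LINE_RE = re.compile(
    r'(?P<remote_addr>\S+) - (?P<remote_user>\S+) \[(?P<time_local>[^\]]+)\] '
    r'"(?P<request>[^"]*)" (?P<status>\d{3}) (?P<bytes>\d+) '
    r'rt=(?P<rt>\S+) uct=(?P<uct>.*?) uht=(?P<uht>.*?) urt=(?P<urt>.*?) '
    r'cs=(?P<cs>\S+) ups=(?P<ups>.*)$'
)


def parse_times(field):
    """'0.002' -> [0.002]; '0.001, 0.003' -> [0.001, 0.003]; '-' -> [None]."""
    values = re.split(r"\s*[,:]\s*", field.strip())
    return [None if v == "-" else float(v) for v in values]


def parse_line(line):
    """Parse one upstream_timing log line into a dict, or return None if it does not match."""
    m = LINE_RE.match(line.rstrip("\n"))
    if m is None:
        return None
    rec = m.groupdict()
    rec["status"] = int(rec["status"])
    rec["rt"] = float(rec["rt"])
    for key in ("uct", "uht", "urt"):
        rec[key] = parse_times(rec[key])
    connect, header = rec["uct"][-1], rec["uht"][-1]
    # Approximate backend processing time for the last upstream attempt
    rec["backend_time"] = None if connect is None or header is None else header - connect
    return rec


# Example usage (the 0.5 s threshold is an arbitrary illustration):
#   with open("/var/log/nginx/solvethenetwork.access.log") as log:
#       for rec in filter(None, map(parse_line, log)):
#           if rec["backend_time"] and rec["backend_time"] > 0.5:
#               print(rec["ups"], rec["request"], rec["backend_time"])
```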

Passive Health Checks and Automatic Failover

Open-source Nginx performs passive health checking by counting failed attempts against each backend. When a server reaches the configured number of failures (`max_fails`) within the `fail_timeout` window, Nginx temporarily removes it from the active rotation and stops sending it traffic until the `fail_timeout` period expires.

```nginx
upstream app_backend {
    server 10.10.1.10:8080 max_fails=3 fail_timeout=30s;
    server 10.10.1.11:8080 max_fails=3 fail_timeout=30s;
    server 10.10.1.12:8080 max_fails=1 fail_timeout=60s backup;
    keepalive 32;
}
```

If either of the primary servers accumulates three failures within a 30-second window, it is marked unavailable for 30 seconds before Nginx tries it again. What counts as a failure is defined by `proxy_next_upstream`: errors and timeouts by default, plus any HTTP status codes listed in that directive. The server at 10.10.1.12 is marked as `backup`, meaning it only receives traffic when all non-backup servers are unavailable at the same time. This creates a hot standby at the load balancer layer with no external orchestration required. For active health checks (HTTP probes sent on a configurable schedule, independent of real user traffic), you need the commercial Nginx Plus product or the third-party `nginx_upstream_check_module`.
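The behavior described above can be approximated with a small Python model: a server that reaches `max_fails` failures within `fail_timeout` seconds is skipped for the next `fail_timeout` seconds, and backup servers are used only when no primary is available. This is a deliberately simplified sketch of the documented semantics, not a reproduction of how Nginx tracks failure counters and timestamps internally; the `Server` class and `candidates` function are hypothetical. Defaults of `max_fails=1` and `fail_timeout=10` match Nginx's documented defaults.

```python
from dataclasses import dataclass, field


@dataclass
class Server:
    addr: str
    max_fails: int = 1
    fail_timeout: float = 10.0
    backup: bool = False
    failures: list = field(default_factory=list)  # timestamps of recent failures
    down_until: float = 0.0

    def record_failure(self, now: float) -> None:
        # Keep only failures inside the fail_timeout window, then add this one
        self.failures = [t for t in self.failures if now - t < self.fail_timeout]
        self.failures.append(now)
        if self.max_fails and len(self.failures) >= self.max_fails:
            self.down_until = now + self.fail_timeout  # take it out of rotation
            self.failures.clear()

    def available(self, now: float) -> bool:
        return now >= self.down_until


def candidates(servers, now):
    """Primaries that are up; only if there are none, the backups that are up."""
    primaries = [s for s in servers if not s.backup and s.available(now)]
    if primaries:
        return primaries
    return [s for s in servers if s.backup and s.available(now)]


# Example mirroring the app_backend pool above:
#   pool = [Server("10.10.1.10:8080", 3, 30.0), Server("10.10.1.11:8080", 3, 30.0),
#           Server("10.10.1.12:8080", 1, 60.0, backup=True)]
```

In this model, once both primaries have been marked down the backup is the only candidate, and the first primary rejoins the rotation as soon as its 30-second penalty ends.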