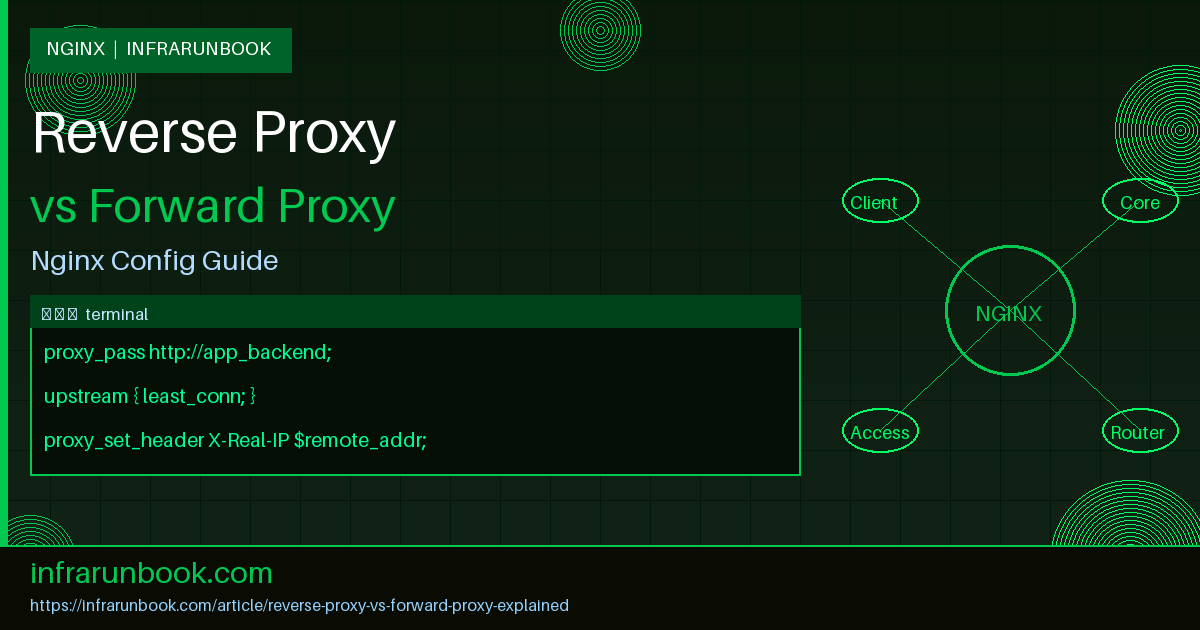

Understanding the difference between a reverse proxy and a forward proxy is foundational knowledge for any infrastructure engineer working with Nginx, HAProxy, or modern service meshes. Both types of proxies sit between clients and servers and relay traffic, but they serve fundamentally different architectural roles, face different directions in the network topology, and solve completely different operational problems. In this article we break down exactly how each works, when to use one versus the other, and how to configure Nginx on sw-infrarunbook-01 for both scenarios.

What Is a Proxy Server

A proxy server is an intermediary that accepts network requests from one party and forwards them to another. The proxy can inspect, modify, cache, log, or block traffic as it passes through. The key distinction between forward and reverse proxies lies in who the proxy represents and which side of the connection initiates contact with the proxy.

- A forward proxy acts on behalf of clients. Clients are configured to send their outbound requests through the proxy, which then contacts the destination server on their behalf.

- A reverse proxy acts on behalf of servers. External clients contact the proxy directly — often without knowing it exists — and the proxy forwards those requests to one or more backend servers.

Forward Proxy: Deep Dive

A forward proxy sits between internal clients and the public internet. Workstations on a private network — say, hosts at 192.168.10.0/24 — are configured to route outbound HTTP and HTTPS traffic through a central proxy server at 192.168.10.5 running on sw-infrarunbook-01. From the perspective of the external destination server, every request appears to originate from 192.168.10.5, not from the individual workstations behind it.

This architecture provides several operational benefits:

- Anonymity and IP masking: The real client IP is hidden from the destination. This is useful for privacy-sensitive workflows or regulated industries that must not expose internal RFC 1918 address ranges to external systems.

- Content filtering and access control: The proxy can inspect URLs, block specific domains (social media, gambling sites, malware C2 infrastructure), and enforce acceptable-use policies without installing agents on every client device.

- Caching of outbound requests: Frequently accessed external resources — Linux package repositories, container image layers, npm packages — can be cached locally at 192.168.10.5, reducing WAN bandwidth consumption and improving download speeds across the organization.

- Egress logging and auditing: Every outbound request is logged centrally on sw-infrarunbook-01, providing a full audit trail for compliance investigations and incident response.

- SSL inspection: Forward proxies can perform TLS inspection by re-signing certificates using a trusted internal CA, allowing deep packet inspection of HTTPS traffic for data loss prevention or malware detection.

Forward proxies require explicit client configuration. Browsers, package managers, and applications must be pointed at the proxy using environment variables like `http_proxy` and `https_proxy`, or via WPAD (Web Proxy Auto-Discovery). Alternatively, network administrators deploy transparent forward proxies that intercept traffic at the gateway using iptables rules, though this approach is limited for modern HTTPS traffic, which the proxy cannot read without TLS interception.

Configuring Nginx as a Forward Proxy

Nginx is not a full-featured forward proxy out of the box: it lacks native handling of the HTTP CONNECT method, which HTTPS tunneling requires, unless it is built with the third-party `ngx_http_proxy_connect_module` patch. However, for plain HTTP forward proxy scenarios, the following configuration on sw-infrarunbook-01 functions correctly:

    server {
        listen 192.168.10.5:3128;
        server_name sw-infrarunbook-01.solvethenetwork.com;

        # Required: proxy_pass below uses variables, so names are resolved per request
        resolver 192.168.10.1 valid=30s;

        location / {
            # Destination is taken from the client request; $scheme is always
            # "http" here because this listener does not terminate TLS
            proxy_pass $scheme://$host$request_uri;
            proxy_set_header Host $host;

            # An empty value drops the header, so the internal client address
            # is not disclosed to the destination server
            proxy_set_header X-Forwarded-For "";

            proxy_connect_timeout 10s;
            proxy_read_timeout 30s;

            # Restrict access to internal clients only
            allow 192.168.10.0/24;
            allow 10.0.0.0/8;
            deny all;
        }
    }

The `resolver` directive is mandatory for forward proxy operation because Nginx must resolve destination hostnames dynamically at request time rather than at startup. Without a configured resolver, Nginx cannot perform DNS lookups for the arbitrary hostnames submitted in client request URIs, and every outbound request fails, typically with a 502 Bad Gateway response.

Reverse Proxy: Deep Dive

A reverse proxy sits in front of one or more backend application servers and accepts incoming connections from external clients. The client connects to the reverse proxy — for instance, solvethenetwork.com resolves to the public-facing IP of sw-infrarunbook-01 — and has no visibility into what backend infrastructure lies behind it. The reverse proxy forwards the request to a selected backend, collects the response, and returns it to the client as if it were the origin server itself.

Reverse proxies are the backbone of modern web infrastructure and enable the following capabilities:

- Load balancing: Distribute incoming traffic across multiple backend application servers at 10.10.0.11, 10.10.0.12, and 10.10.0.13 using algorithms like round-robin, least connections, or IP hash.

- SSL/TLS termination: Handle the computational overhead of TLS handshakes at the proxy layer. Backend servers receive plain HTTP over the trusted 10.10.0.0/24 internal network, simplifying certificate management and reducing CPU load on application hosts.

- Response caching: Store and serve responses for frequently requested static assets, reducing backend load and dramatically improving latency for end users.

- Compression: Apply gzip or Brotli compression to responses at the proxy layer before delivery, offloading this CPU work from application servers.

- Security enforcement: Absorb and filter malicious traffic before it reaches application code. Rate limiting, IP blocklists, and geo-based access controls can all be applied centrally at the reverse proxy.

- Header manipulation: Inject, strip, or rewrite HTTP headers to normalize requests before they reach application code and to sanitize responses before they reach clients.

- Health checking and failover: Automatically remove unhealthy backends from the upstream pool and route traffic only to healthy instances without operator intervention.
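Two of the capabilities above, rate limiting and compression, can be sketched in a few directives. This is a minimal illustration rather than a tuned configuration: the zone name `per_ip`, the rate of 10 requests per second, the burst size, and the MIME type list are assumptions chosen for the example, and `app_backend` stands for an upstream pool defined elsewhere in the configuration.

```nginx
# http context: one shared-memory zone keyed by client address.
# Zone name, size, and rate are illustrative assumptions.
limit_req_zone $binary_remote_addr zone=per_ip:10m rate=10r/s;

server {
    listen 80;
    server_name solvethenetwork.com;

    # Compress text-based responses at the proxy layer
    # (text/html is always included when gzip is on).
    gzip on;
    gzip_proxied any;
    gzip_min_length 1024;
    gzip_types text/css application/javascript application/json;

    location / {
        # Allow short bursts; reject the excess with 429 instead of the default 503.
        limit_req zone=per_ip burst=20 nodelay;
        limit_req_status 429;

        proxy_pass http://app_backend;
    }
}
```

Brotli is left out of the sketch because it requires a separately built module, whereas the gzip directives ship with standard Nginx builds.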

Configuring Nginx as a Reverse Proxy

The following configuration on sw-infrarunbook-01 demonstrates a production-style Nginx reverse proxy setup. It terminates TLS, enforces HTTPS, load-balances across two active backends with a third held as a backup, applies security headers, and caches static assets:

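    # http context. The static_cache zone must be declared before proxy_cache
    # can reference it; the path and sizes below are assumptions.
    proxy_cache_path /var/cache/nginx/static levels=1:2
                     keys_zone=static_cache:10m max_size=1g inactive=7d;

    upstream app_backend {
        least_conn;
        server 10.10.0.11:8080 weight=3;
        server 10.10.0.12:8080 weight=3;
        server 10.10.0.13:8080 weight=1 backup;
        keepalive 32;
    }

    # Redirect all plain HTTP traffic to HTTPS
    server {
        listen 80;
        server_name solvethenetwork.com www.solvethenetwork.com;
        return 301 https://$host$request_uri;
    }

    server {
        # On nginx 1.25.1 and later, a separate "http2 on;" directive is preferred
        listen 443 ssl http2;
        server_name solvethenetwork.com www.solvethenetwork.com;

        ssl_certificate /etc/nginx/ssl/solvethenetwork.com.crt;
        ssl_certificate_key /etc/nginx/ssl/solvethenetwork.com.key;
        ssl_protocols TLSv1.2 TLSv1.3;
        ssl_ciphers HIGH:!aNULL:!MD5;
        ssl_session_cache shared:SSL:10m;
        ssl_session_timeout 10m;

        add_header Strict-Transport-Security "max-age=31536000; includeSubDomains" always;
        add_header X-Frame-Options DENY always;
        add_header X-Content-Type-Options nosniff always;

        location / {
            proxy_pass http://app_backend;
            proxy_http_version 1.1;
            proxy_set_header Connection "";
            proxy_set_header Host $host;
            proxy_set_header X-Real-IP $remote_addr;
            proxy_set_header X-Forwarded-For $proxy_add_x_forwarded_for;
            proxy_set_header X-Forwarded-Proto $scheme;

            proxy_connect_timeout 5s;
            proxy_send_timeout 60s;
            proxy_read_timeout 60s;

            proxy_buffering on;
            proxy_buffer_size 16k;
            proxy_buffers 8 16k;
        }

        location ~* \.(jpg|jpeg|png|gif|ico|css|js|woff2)$ {
            proxy_pass http://app_backend;

            # proxy_set_header values are not shared between sibling locations,
            # so the keepalive and Host settings are repeated here
            proxy_http_version 1.1;
            proxy_set_header Connection "";
            proxy_set_header Host $host;

            proxy_cache static_cache;
            proxy_cache_valid 200 7d;
            proxy_cache_use_stale error timeout updating;
            expires 7d;

            # Any add_header in a location replaces the inherited server-level
            # set, so the security headers are restated alongside Cache-Control
            add_header Cache-Control "public, immutable";
            add_header Strict-Transport-Security "max-age=31536000; includeSubDomains" always;
            add_header X-Frame-Options DENY always;
            add_header X-Content-Type-Options nosniff always;
        }
    }

The combination of `proxy_http_version 1.1` and `proxy_set_header Connection ""` is critical for enabling HTTP keepalive connections to the upstream pool defined with `keepalive 32`. Without these two directives, Nginx closes the TCP connection to the backend after every proxied request, which defeats connection pooling entirely and adds measurable latency and CPU overhead under sustained load. The `static_cache` zone used by the static asset location must also be declared with `proxy_cache_path` in the `http` context, otherwise Nginx refuses to load the configuration.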

Key Differences Side by Side

The following breakdown summarizes the architectural differences between forward and reverse proxies across the most operationally relevant dimensions:

- Direction of representation: A forward proxy represents clients going outward; a reverse proxy represents servers receiving inbound traffic.

- Client awareness: Forward proxy requires explicit client configuration or transparent network-level interception. Reverse proxy is invisible to clients — they believe they communicate directly with the origin server.

- Typical network position: Forward proxy sits at the egress boundary of an internal network. Reverse proxy sits at the ingress boundary, facing external clients at the DMZ edge.

- Who benefits: Forward proxy benefits the client organization through privacy, content filtering, and outbound caching. Reverse proxy benefits the server organization through scalability, TLS offload, and centralized security enforcement.

- DNS relationship: Forward proxy must resolve arbitrary destination hostnames dynamically per request. Reverse proxy terminates traffic for a fixed, known set of domain names configured in its server blocks.

- Caching scope: Forward proxy caches external content for internal users. Reverse proxy caches its own application responses for external users.

- Authentication role: Forward proxies often perform outbound user authentication (LDAP, Kerberos, NTLM). Reverse proxies may handle inbound authentication flows and delegate identity context downstream via headers.

When to Use a Reverse Proxy in Production

A reverse proxy is the correct architecture when you need to:

- Expose multiple backend services through a single public IP or hostname, differentiating by URL path, subdomain, or HTTP header value.

- Terminate TLS centrally on sw-infrarunbook-01 rather than managing certificates individually on each application server at 10.10.0.11 through 10.10.0.13.

- Absorb traffic spikes by caching responses and serving static assets without loading application backends.

- Apply rate limiting and DDoS mitigation before requests reach application code.

- Enable blue-green deployments or canary releases by adjusting upstream pool weights at the proxy layer without touching DNS or application configuration.
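The first and last items in this list, path-based routing and weight-based canary releases, can be sketched together. The pool names `web_pool` and `api_pool`, the 9:1 weight split, and the reassignment of the three example backends to these roles are all assumptions made for illustration.

```nginx
# Hypothetical pools; the role given to each backend is an assumption.
upstream web_pool {
    server 10.10.0.11:8080 weight=9;   # current release, roughly 90% of requests
    server 10.10.0.12:8080 weight=1;   # canary release, roughly 10% of requests
}

upstream api_pool {
    server 10.10.0.13:8080;
}

server {
    listen 80;
    server_name solvethenetwork.com;

    # Route by URL path: /api/ goes to the API pool, everything else to the web pool.
    location /api/ {
        proxy_pass http://api_pool;
        proxy_set_header Host $host;
    }

    location / {
        proxy_pass http://web_pool;
        proxy_set_header Host $host;
    }
}
```

Shifting more traffic to the canary is then a matter of editing the two `weight` values and running `nginx -s reload`, which applies the new configuration without dropping established connections.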

When to Use a Forward Proxy in Production

A forward proxy is the correct architecture when you need to:

- Control and audit outbound internet access from internal systems, CI/CD pipelines, or containerized workloads at 192.168.10.0/24.

- Cache package manager traffic (apt, yum, pip, npm) to reduce WAN bandwidth costs in bandwidth-constrained or tightly restricted environments.

- Enforce content filtering and acceptable-use policies across the organization without deploying endpoint agents.

- Hide internal RFC 1918 address ranges from external services for privacy or compliance purposes.

- Perform SSL inspection to detect data exfiltration or malware C2 traffic embedded within encrypted outbound sessions.
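As a rough sketch of the package-caching use case, a plain HTTP forward proxy can be given a disk cache. The cache path, the zone name `egress_cache`, the sizes, and the one-day validity are assumptions; repositories served over HTTPS are not covered, because stock Nginx cannot tunnel or cache CONNECT traffic.

```nginx
# http context. Cache path, zone name, and sizes are assumptions for illustration.
proxy_cache_path /var/cache/nginx/egress levels=1:2
                 keys_zone=egress_cache:50m max_size=20g inactive=30d;

server {
    listen 192.168.10.5:3128;
    resolver 192.168.10.1 valid=30s;

    location / {
        allow 192.168.10.0/24;
        deny all;

        proxy_pass http://$host$request_uri;

        proxy_cache egress_cache;
        # Key on the full destination URL so different hosts never share entries.
        proxy_cache_key $scheme$host$request_uri;
        proxy_cache_valid 200 1d;

        # Expose HIT/MISS so clients and logs can confirm cache behavior.
        add_header X-Cache-Status $upstream_cache_status;
    }
}
```

This is a variant of the forward proxy server block shown earlier, not an additional listener; only one of the two should bind 192.168.10.5:3128.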

Upstream Health Checks in Reverse Proxy Deployments

Passive health checking determines which backends in the upstream pool receive traffic. Nginx open source marks a backend unavailable once `max_fails` failed attempts occur within the `fail_timeout` window, stops sending it traffic for that same `fail_timeout` period, and then tries it again. The failures do not need to be consecutive. The following upstream block configures passive health checking with a 30-second penalty window:

    upstream app_backend {
        server 10.10.0.11:8080 max_fails=3 fail_timeout=30s;
        server 10.10.0.12:8080 max_fails=3 fail_timeout=30s;
        server 10.10.0.13:8080 backup;
    }

The `backup` flag on 10.10.0.13 ensures it only receives traffic when all primary upstreams are simultaneously unavailable. It acts as a standby node that stays idle during normal operation and takes over when both active backends have been marked unavailable.
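What counts as a failed attempt for passive checking is governed by `proxy_next_upstream`. Connection errors and timeouts always count; HTTP 5xx responses count only when they are listed explicitly. The fragment below is a sketch of that pairing, with the status code selection, retry count, and timeout chosen as assumptions.

```nginx
location / {
    proxy_pass http://app_backend;

    # Retry on connection errors, timeouts, and selected 5xx responses.
    # Listing the 5xx codes also makes them count toward max_fails.
    proxy_next_upstream error timeout http_502 http_503 http_504;

    # Bound the retries so one slow request cannot walk the whole pool.
    proxy_next_upstream_tries 2;
    proxy_next_upstream_timeout 10s;
}
```

By default Nginx does not retry non-idempotent requests such as POST on another backend, which avoids duplicating side effects when a backend fails mid-request.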

Access Logging Strategy for Each Proxy Type

Logging requirements differ substantially between the two proxy types. On a forward proxy, the most operationally useful fields are the client IP, destination host, HTTP method, and response code, which let security teams track which internal hosts are contacting which external endpoints. On a reverse proxy, the most critical fields are the upstream backend that served the request, upstream response time, and the real end-user IP recovered from `X-Forwarded-For`. The following custom log format captures the upstream address and timing fields on sw-infrarunbook-01:

    log_format proxy_detailed '$remote_addr - $remote_user [$time_local] '
                              '"$request" $status $body_bytes_sent '
                              '"$http_referer" "$http_user_agent" '
                              'xff="$http_x_forwarded_for" '
                              'upstream=$upstream_addr '
                              'upstream_rt=$upstream_response_time '
                              'total_rt=$request_time';

    access_log /var/log/nginx/solvethenetwork_access.log proxy_detailed;

Capturing `$upstream_addr` and `$upstream_response_time` in reverse proxy logs is essential for identifying slow individual backends, debugging load distribution imbalances, and correlating application errors with specific backend instances during incident response on sw-infrarunbook-01. The `xff` field records any `X-Forwarded-For` value received from a CDN or load balancer in front of the proxy, so the original end-user address is preserved next to `$remote_addr`.
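For the forward proxy side, a comparable format built around the fields named at the start of this section (client IP, destination host, HTTP method, and response code) might look like the following. The format name `egress_audit` and the log path are assumptions.

```nginx
# http context. The format name and log path are assumptions.
log_format egress_audit '$remote_addr [$time_local] '
                        '$request_method $scheme://$host$request_uri '
                        '$status $body_bytes_sent '
                        'total_rt=$request_time';

# Inside the forward proxy server block:
access_log /var/log/nginx/egress_audit.log egress_audit;
```

Here `$remote_addr` is the internal workstation and `$host` is the external destination, which is the pairing an egress audit needs.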

Chaining Forward and Reverse Proxies

In large enterprise environments, both proxy types frequently coexist and operate independently. Inbound traffic from the internet hits a reverse proxy at the DMZ edge. Backend application servers in the 10.10.0.0/24 network send outbound API calls through a forward proxy at 192.168.10.5 to reach external SaaS platforms or cloud APIs. The two proxies address orthogonal concerns — ingress control versus egress control — and their coexistence is both normal and recommended for full traffic visibility in both directions.

A common misconception is that a reverse proxy somehow inherently "knows" it is a reverse proxy, or that a forward proxy has built-in awareness of its role. From the software perspective these are purely configuration and deployment patterns. The same Nginx binary on sw-infrarunbook-01 can serve as a forward proxy, a reverse proxy, or both simultaneously on different listener ports, depending entirely on how the configuration is structured.
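A stripped-down sketch makes the point concrete: one configuration, two listeners, two roles. TLS, headers, and timeouts are omitted to keep the contrast visible, and using port 80 for the reverse proxy listener is an assumption made only for brevity.

```nginx
upstream app_backend {
    server 10.10.0.11:8080;
    server 10.10.0.12:8080;
}

# Reverse proxy role: fixed set of hostnames, fixed set of backends.
server {
    listen 80;
    server_name solvethenetwork.com;

    location / {
        proxy_pass http://app_backend;
        proxy_set_header Host $host;
    }
}

# Forward proxy role: the destination is taken from each client request.
server {
    listen 192.168.10.5:3128;
    resolver 192.168.10.1 valid=30s;

    location / {
        allow 192.168.10.0/24;
        deny all;
        proxy_pass http://$host$request_uri;
    }
}
```

The only structural difference is the `proxy_pass` target: a named upstream for the reverse proxy, a per-request variable (and therefore a `resolver`) for the forward proxy.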