Introduction

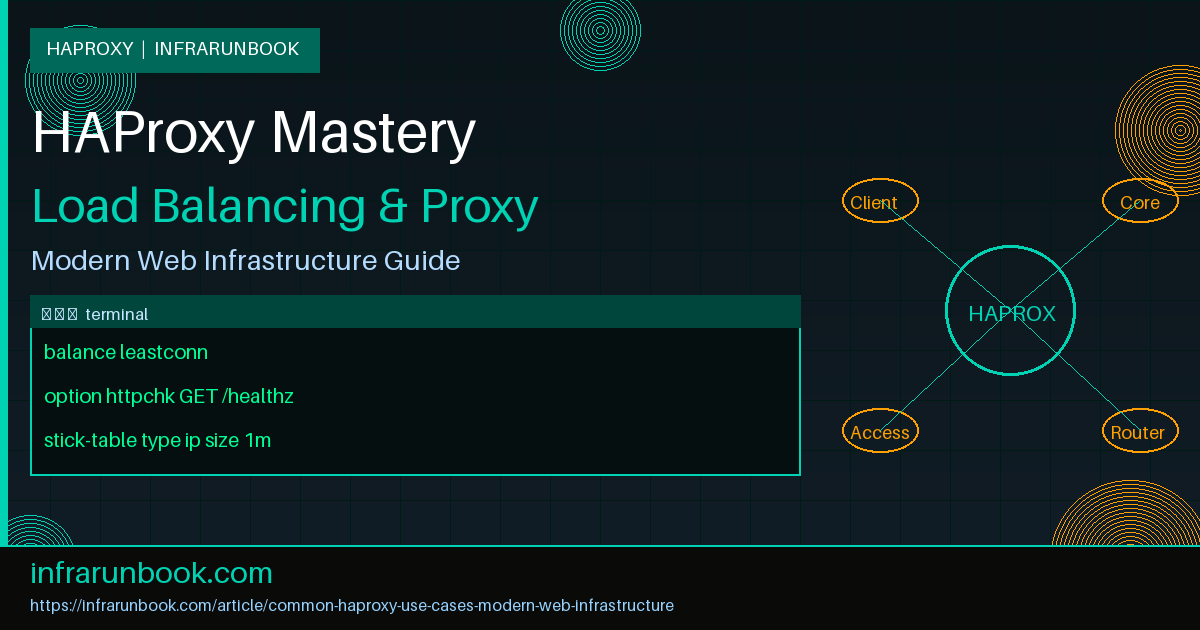

HAProxy (High Availability Proxy) is one of the most widely deployed open-source load balancers and reverse proxies in production environments. Originally released in 2001, it has grown into a battle-tested solution trusted by some of the world's highest-traffic platforms. Whether you are distributing HTTP requests across a web farm, terminating TLS at the edge, enforcing rate limits against abusive clients, or proxying long-lived WebSocket connections, HAProxy provides the low-level primitives needed to build resilient, scalable infrastructure without sacrificing observability or control.

This article walks through the most common HAProxy use cases encountered in modern web infrastructure, complete with annotated configuration examples using realistic hostnames, IP addresses, and domain names that reflect actual production patterns.

Load Balancing Algorithms

The most fundamental use case for HAProxy is distributing incoming connections across a pool of backend servers. HAProxy supports several scheduling algorithms, each suited to different traffic and workload profiles.

Round-Robin

Round-robin is the default algorithm and distributes requests sequentially across all healthy servers in the pool. It works well when all backends have roughly equivalent capacity and when each request takes a similar amount of time to process.

frontend http_front

bind 192.168.1.1:80

default_backend web_servers

backend web_servers

balance roundrobin

server web-01 192.168.10.10:8080 check

server web-02 192.168.10.11:8080 check

server web-03 192.168.10.12:8080 check

Least Connections

The leastconn algorithm routes each new connection to the backend currently holding the fewest active connections. This is ideal for long-lived or variable-duration workloads such as database proxies, LDAP queries, file upload handlers, or WebSocket sessions where each server's active queue depth matters more than raw request throughput.

backend db_pool

balance leastconn

server db-01 192.168.10.20:5432 check

server db-02 192.168.10.21:5432 check

Source Hash

The source algorithm hashes the client's IP address and maps it deterministically to a backend server. This provides a form of session affinity without storing per-client state on the load balancer. It is commonly used for applications that cache data per server and benefit from consistent routing of the same client to the same instance.

backend api_cluster

balance source

server api-01 192.168.10.30:3000 check

server api-02 192.168.10.31:3000 check

server api-03 192.168.10.32:3000 check

Health Checks

HAProxy supports both TCP-level and HTTP-level health checks to determine whether a backend is fit to receive traffic. Choosing the right check depth is important: too shallow and you miss application-level failures; too heavy and you add unnecessary load.

TCP Health Checks

A basic TCP check opens a connection to the server's configured port and marks it healthy if the connection succeeds. This verifies that the process is bound and accepting connections but does not validate application-level behavior. TCP checks are appropriate for non-HTTP backends such as Redis, Memcached, or PostgreSQL.

backend cache_servers

server memcache-01 192.168.10.40:11211 check inter 2s rise 2 fall 3

server memcache-02 192.168.10.41:11211 check inter 2s rise 2 fall 3

The inter option sets the interval between checks; the value is read as milliseconds unless it carries a unit suffix such as the s used here. rise defines how many consecutive successes are required to promote a server back to healthy status after a failure. fall defines how many consecutive failures mark a server as down and remove it from the rotation.
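As a sketch of how these options combine, the variation below moves the shared check settings into a default-server line so they are not repeated on every server. The fastinter and downinter values are illustrative assumptions rather than part of the original configuration: fastinter shortens the check interval while a server is transitioning between states, and downinter sets the interval used once a server is fully down.

```haproxy
backend cache_servers
    # Applies to every server line below unless overridden on that line
    default-server inter 2s fastinter 500ms downinter 5s rise 2 fall 3
    server memcache-01 192.168.10.40:11211 check
    server memcache-02 192.168.10.41:11211 check
```

With inter 2s and fall 3 alone, a server that stops answering leaves the rotation after three failed checks, on the order of six seconds. Setting fastinter should shorten that, because the follow-up checks after the first failure run at the faster interval.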

HTTP Health Checks

HTTP checks send an actual HTTP request to a dedicated health endpoint and validate the response status code. This ensures the application is not just listening on the port but is actively capable of serving requests and passing its own internal readiness logic.

backend app_servers

option httpchk GET /healthz HTTP/1.1\r\nHost:\ solvethenetwork.com

http-check expect status 200

server app-01 192.168.10.50:8080 check inter 5s

server app-02 192.168.10.51:8080 check inter 5s

SSL/TLS Termination

Terminating TLS at the HAProxy layer offloads CPU-intensive cryptographic operations from application servers and centralizes certificate management in one place. HAProxy handles the HTTPS handshake with the client and forwards plain HTTP to the backend over the trusted internal network.

frontend https_front

bind 192.168.1.1:80

bind 192.168.1.1:443 ssl crt /etc/haproxy/certs/solvethenetwork.com.pem

http-request redirect scheme https unless { ssl_fc }

default_backend web_servers

backend web_servers

server web-01 192.168.10.10:80 check

server web-02 192.168.10.11:80 check

The PEM file referenced in the bind directive must contain the certificate, intermediate chain, and private key concatenated in order. For deployments with multiple virtual hosts, specify a directory of PEM files to support distinct certificates per hostname on a single listener. HAProxy selects the correct certificate via SNI.

Security Note: Always restrict TLS to version 1.2 and above. Add ssl-default-bind-options ssl-min-ver TLSv1.2 no-tls-tickets to your global section to enforce this policy across all frontends and eliminate negotiation of deprecated protocol versions.
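A minimal sketch of where these settings might live, assuming the directory /etc/haproxy/certs/ holds one combined PEM file per hostname. The directory layout is an assumption; the original configuration names only a single file.

```haproxy
global
    # Enforce TLS 1.2+ on every bind line and disable stateless session tickets
    ssl-default-bind-options ssl-min-ver TLSv1.2 no-tls-tickets

frontend https_front
    # Plain-HTTP listener exists only so the redirect below has something to catch
    bind 192.168.1.1:80
    # A directory instead of a file: each PEM inside is loaded and picked by SNI
    bind 192.168.1.1:443 ssl crt /etc/haproxy/certs/
    http-request redirect scheme https unless { ssl_fc }
    default_backend web_servers
```

Clients that send no SNI, or a name that matches no certificate, are served the first certificate loaded. Files in a directory are loaded in alphabetical order, so it can help to name the default certificate so that it sorts first.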

ACL-Based Routing

Access Control Lists (ACLs) allow HAProxy to inspect request attributes and make conditional routing decisions based on HTTP headers, URL paths, source IPs, request methods, query parameters, and many other factors. ACLs are one of HAProxy's most powerful Layer 7 capabilities and are the foundation of advanced traffic management patterns.
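The fragment below is an illustrative sketch of a few of these matching forms and is not taken from the original configuration. The ACL names, the /api/ prefix, the debug query parameter, and the 192.168.0.0/16 internal range are all assumptions chosen for the example.

```haproxy
frontend http_front
    bind 192.168.1.1:80

    # Named ACLs: each one tests a single request attribute
    acl is_post   method POST
    acl is_api    path_beg /api/
    acl has_debug url_param(debug) -m found

    # Conditions listed on one rule are ANDed; "!" negates a condition.
    # An anonymous ACL is written inline between braces.
    http-request deny if is_api is_post !{ src 192.168.0.0/16 }
    http-request deny if has_debug !{ src 192.168.0.0/16 }

    default_backend web_servers
```

Named ACLs keep rules readable when the same test is reused, while the inline brace form is convenient for one-off conditions.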

Path-Based Routing

Route API traffic to a dedicated backend cluster while serving all other requests from the main application pool:

frontend http_front

bind 192.168.1.1:80

acl is_api path_beg /api/

use_backend api_servers if is_api

default_backend web_servers

backend api_servers

balance leastconn

server api-01 192.168.10.30:3000 check

server api-02 192.168.10.31:3000 check

backend web_servers

balance roundrobin

server web-01 192.168.10.10:8080 check

server web-02 192.168.10.11:8080 check

Host-Based Routing

Route traffic to different backends based on the HTTP Host header, enabling multi-tenant or multi-application deployments on a single IP address:

frontend http_front

bind 192.168.1.1:80

acl host_admin hdr(host) -i admin.solvethenetwork.com

acl host_app hdr(host) -i app.solvethenetwork.com

use_backend admin_servers if host_admin

use_backend app_servers if host_app

default_backend web_servers

IP Allowlisting

Restrict access to an internal management interface to trusted RFC 1918 address space only, denying all public traffic at the proxy layer before it reaches any application code:

frontend mgmt_front

bind 192.168.1.1:8443 ssl crt /etc/haproxy/certs/solvethenetwork.com.pem

acl trusted_nets src 10.0.0.0/8 172.16.0.0/12 192.168.0.0/16

http-request deny unless trusted_nets

default_backend mgmt_servers

Stick Tables and Session Persistence

Stick tables are HAProxy's built-in in-memory key-value store for tracking per-client state across requests; their contents can be replicated to other HAProxy instances through a peers section. The most common application is session persistence: ensuring that a client is consistently routed to the same backend server throughout a session. Unlike purely stateless algorithms such as source hash, stick tables survive server pool changes gracefully.

Cookie-Based Persistence

backend web_servers

balance roundrobin

cookie SERVERID insert indirect nocache

server web-01 192.168.10.10:8080 check cookie web01

server web-02 192.168.10.11:8080 check cookie web02

server web-03 192.168.10.12:8080 check cookie web03

HAProxy injects a Set-Cookie: SERVERID=web01 header on the first response. All subsequent requests carrying that cookie are routed back to the corresponding server. If the target server is marked down, HAProxy transparently reroutes the client to a healthy alternative without exposing the failure to the end user.
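For clients that cannot or should not carry a cookie, similar affinity can be kept in a stick table on the load balancer. The following is a sketch under assumed values (the table size and the 30-minute expiry are placeholders), keyed on the client source address:

```haproxy
backend web_servers
    balance roundrobin
    # One entry per client IP; an entry expires 30 minutes after it was last used
    stick-table type ip size 100k expire 30m
    # Record the chosen server on the first request and reuse it afterwards
    stick on src
    server web-01 192.168.10.10:8080 check
    server web-02 192.168.10.11:8080 check
    server web-03 192.168.10.12:8080 check
```

The trade-off is that the mapping now lives in HAProxy's memory rather than in the browser, so clients behind a shared NAT address all land on the same server, and a second HAProxy node only knows the mapping if the table is replicated through a peers section.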

Rate Limiting

HAProxy's stick tables combined with ACLs provide granular rate limiting at both the connection and HTTP request level. This is essential for protecting APIs from abusive clients, mitigating credential stuffing attacks, and enforcing fair-use policies without a separate rate-limiting middleware layer.

frontend http_front

bind 192.168.1.1:80

# Track HTTP request rate per source IP using stick counter 0

stick-table type ip size 200k expire 30s store http_req_rate(10s)

http-request track-sc0 src

# Deny clients exceeding 100 requests per 10-second window

acl too_many_requests sc_http_req_rate(0) gt 100

http-request deny deny_status 429 if too_many_requests

default_backend web_servers

Using deny_status 429 returns a proper HTTP 429 Too Many Requests response rather than the 403 Forbidden that http-request deny sends by default. This follows RFC 6585, signals to well-behaved clients that they should back off, and makes debugging significantly easier when reviewing logs.
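The same building blocks can apply a tighter limit to a sensitive endpoint, which is one way to approach the credential-stuffing case mentioned above. This is a sketch only: the /login path, the threshold of 10 requests per minute, and the table name st_login_rate are assumptions for illustration.

```haproxy
# A backend with no servers, used purely as a named holder for the table
backend st_login_rate
    stick-table type ip size 100k expire 10m store http_req_rate(1m)

frontend http_front
    bind 192.168.1.1:80

    acl is_login path_beg /login

    # Count login requests per source IP in the dedicated table (counter 1)
    http-request track-sc1 src table st_login_rate if is_login

    # More than 10 login requests per minute from one IP is rejected
    http-request deny deny_status 429 if is_login { sc_http_req_rate(1) gt 10 }

    default_backend web_servers
```

Keeping the login counter in its own table and its own stick counter lets it coexist with a general per-IP limit that uses counter 0.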

Stats Page

HAProxy includes a built-in statistics dashboard accessible over HTTP. The stats page provides real-time visibility into frontend and backend health, active and queued connection counts, request rates, error percentages, and server weights — all without installing any additional monitoring agent.

frontend stats_front

bind 192.168.1.1:8404

stats enable

stats uri /haproxy-stats

stats realm HAProxy\ Statistics

stats auth infrarunbook-admin:S3cur3P@ssw0rd

stats refresh 5s

stats show-legends

stats show-node sw-infrarunbook-01

The stats show-node directive displays the hostname of the HAProxy instance in the dashboard header, which is particularly useful in an active/passive HA pair where you need to confirm which node is currently active. Always restrict the stats frontend to the management network using an ACL as shown in the IP allowlisting example.
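Combining the two, a restricted stats listener might look like the sketch below. It reuses the trusted_nets ACL from the allowlisting example and the credentials shown above; treating the RFC 1918 ranges as the management network is an assumption.

```haproxy
frontend stats_front
    bind 192.168.1.1:8404

    # Reject anything that does not originate from the trusted ranges
    acl trusted_nets src 10.0.0.0/8 172.16.0.0/12 192.168.0.0/16
    http-request deny unless trusted_nets

    stats enable
    stats uri /haproxy-stats
    stats realm HAProxy\ Statistics
    stats auth infrarunbook-admin:S3cur3P@ssw0rd
    stats refresh 5s
    stats show-node sw-infrarunbook-01
```

The source-address check and the password are independent layers: the ACL limits who can reach the page at all, and stats auth still prompts those clients for credentials.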

High Availability with Keepalived

A standalone HAProxy instance is itself a single point of failure. The standard production pattern for eliminating this is to run two HAProxy instances in an active/passive configuration managed by Keepalived, which uses VRRP to float a virtual IP between nodes. All client traffic is directed to the virtual IP, not to either node directly.

Keepalived on Primary Node (sw-infrarunbook-01)

vrrp_script chk_haproxy {

script "killall -0 haproxy"

interval 2

weight -2    # on failure, priority drops from 101 to 99, below the secondary's 100

}

vrrp_instance VI_1 {

state MASTER

interface eth0

virtual_router_id 51

priority 101

advert_int 1

authentication {

auth_type PASS

auth_pass InfraRunBook2024!

}

virtual_ipaddress {

192.168.1.100/24

}

track_script {

chk_haproxy

}

}

Keepalived on Secondary Node

vrrp_instance VI_1 {

state BACKUP

interface eth0

virtual_router_id 51

priority 100

advert_int 1

authentication {

auth_type PASS

auth_pass InfraRunBook2024!

}

virtual_ipaddress {

192.168.1.100/24

}

}

With this configuration, both HAProxy nodes share 192.168.1.100 as their VIP. Keepalived holds the VIP on the primary and fails it over to the secondary within a few seconds if the primary's HAProxy process exits or if the node becomes unreachable on the VRRP multicast group. A dead HAProxy process is noticed by the check script, which runs every two seconds; a node that disappears entirely is declared down after roughly three missed advertisements, which is three to four seconds with advert_int 1. Clients connecting to 192.168.1.100 experience only a brief interruption during failover.

WebSocket Proxying

WebSocket connections begin as a standard HTTP/1.1 upgrade request and then transition to a persistent, bidirectional TCP stream. HAProxy handles WebSocket traffic transparently in HTTP mode as long as connection timeouts are extended to accommodate long-lived idle connections and the connection upgrade headers are passed through correctly.

frontend ws_front

bind 192.168.1.1:443 ssl crt /etc/haproxy/certs/solvethenetwork.com.pem

acl is_websocket hdr(Upgrade) -i websocket

use_backend ws_servers if is_websocket

default_backend web_servers

backend ws_servers

balance source

timeout connect 5s

timeout tunnel 3600s

timeout server 3600s

option http-server-close

server ws-01 192.168.10.60:9000 check

server ws-02 192.168.10.61:9000 check

The source balance algorithm is a practical default for WebSocket backends because it keeps a given client connected to the same server for the duration of the session without requiring cookie injection. The extended timeout values of 3600 seconds prevent HAProxy from closing idle-but-alive WebSocket connections mid-session. timeout tunnel is the setting that governs a connection once the upgrade has completed; it is used here instead of timeout client, which HAProxy does not accept in a backend section.

Logging and Syslog Integration

HAProxy emits detailed per-request log lines in a structured format capturing timestamps, client IPs, response codes, four-phase timing breakdowns, and backend server identity. Shipping these logs to a centralized syslog server is standard practice in production and is the primary input for access analytics and incident investigation.

global

log 10.0.0.1:514 local0

log 10.0.0.1:514 local1 notice

defaults

log global

option httplog

option dontlognull

log-format "%ci:%cp [%t] %ft %b/%s %Tq/%Tw/%Tc/%Tr/%Ta %ST %B %tsc %ac/%fc/%bc/%sc/%rc %{+Q}r"

The dontlognull option suppresses log entries for connections that transfer no data, significantly reducing noise from health-check traffic and TCP port scanners. The custom log-format captures all five timing fields (time to receive the request, time spent waiting in queue, connect time, server response time, and total active time), which is essential for isolating slow backends from slow networks or slow clients.

HTTP Compression

HAProxy can compress HTTP response bodies on the fly before delivering them to clients. Enabling compression at the proxy layer offloads this CPU work from application servers and ensures consistent behavior across the entire backend cluster regardless of whether individual servers have compression enabled.

backend web_servers

compression algo gzip

compression type text/html text/css text/javascript application/json application/javascript

server web-01 192.168.10.10:8080 check

server web-02 192.168.10.11:8080 check

Compression is only applied when the client sends an Accept-Encoding: gzip request header and when the response Content-Type matches one of the explicitly configured types. Binary formats such as images, video, and already-compressed archives should always be excluded, as attempting to re-compress them wastes CPU cycles and may produce a larger output than the original.
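If the goal is for HAProxy alone to do the compression, the compression offload directive is worth considering. The fragment below is a sketch of that variation on the configuration above, not a tuned recommendation:

```haproxy
backend web_servers
    compression algo gzip
    # Strip Accept-Encoding before forwarding, so backends reply uncompressed
    compression offload
    compression type text/html text/css text/javascript application/json application/javascript
    server web-01 192.168.10.10:8080 check
    server web-02 192.168.10.11:8080 check
```

Without offload, a backend that already compresses its responses keeps doing so and HAProxy passes those responses through unchanged; with it, the compression work moves to the proxy for every server in the pool.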

Connection Limits

Protecting backend servers from connection overload is a fundamental load balancer responsibility. HAProxy provides overlapping connection limits at the global process level, per frontend, and per server, allowing fine-grained capacity enforcement at every layer of the stack. The maxconn keyword is not accepted in a backend section, so a backend's effective capacity is the sum of its per-server limits.

global

maxconn 50000

frontend http_front

bind 192.168.1.1:80

maxconn 10000

default_backend web_servers

backend web_servers

server web-01 192.168.10.10:8080 check maxconn 1000

server web-02 192.168.10.11:8080 check maxconn 1000

server web-03 192.168.10.12:8080 check maxconn 1000

The global maxconn sets the absolute process-wide ceiling, above which HAProxy stops accepting new connections. The frontend maxconn leaves connections that exceed the limit waiting in the kernel's listen backlog rather than immediately refusing them, smoothing over short traffic spikes. Per-server maxconn prevents any single server from being overwhelmed: requests beyond the limit wait in HAProxy's queue or go to other healthy servers in the pool that still have free slots.
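Queueing behind a per-server limit is usually paired with a bound on how long, and how many, requests may wait. The values below (a 10-second queue timeout and a queue depth of 200) are illustrative assumptions, not figures from the original configuration:

```haproxy
backend web_servers
    # A request that waits longer than this for a free slot gets a 503
    timeout queue 10s
    # maxqueue caps how many requests may wait on one server before
    # further requests are redispatched to another server
    server web-01 192.168.10.10:8080 check maxconn 1000 maxqueue 200
    server web-02 192.168.10.11:8080 check maxconn 1000 maxqueue 200
    server web-03 192.168.10.12:8080 check maxconn 1000 maxqueue 200
```

Bounding the queue turns sustained overload into fast, visible 503 responses instead of requests that hang until the client gives up.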