Nginx has earned its place as one of the most widely deployed web servers and reverse proxies in production infrastructure. Originally written by Igor Sysoev to solve the C10K problem — handling ten thousand concurrent connections on a single server — Nginx achieves high performance through a fundamentally different architecture than thread-per-connection servers. For infrastructure teams managing sites under real traffic load, understanding how Nginx delivers performance gains is not optional; it is foundational knowledge.

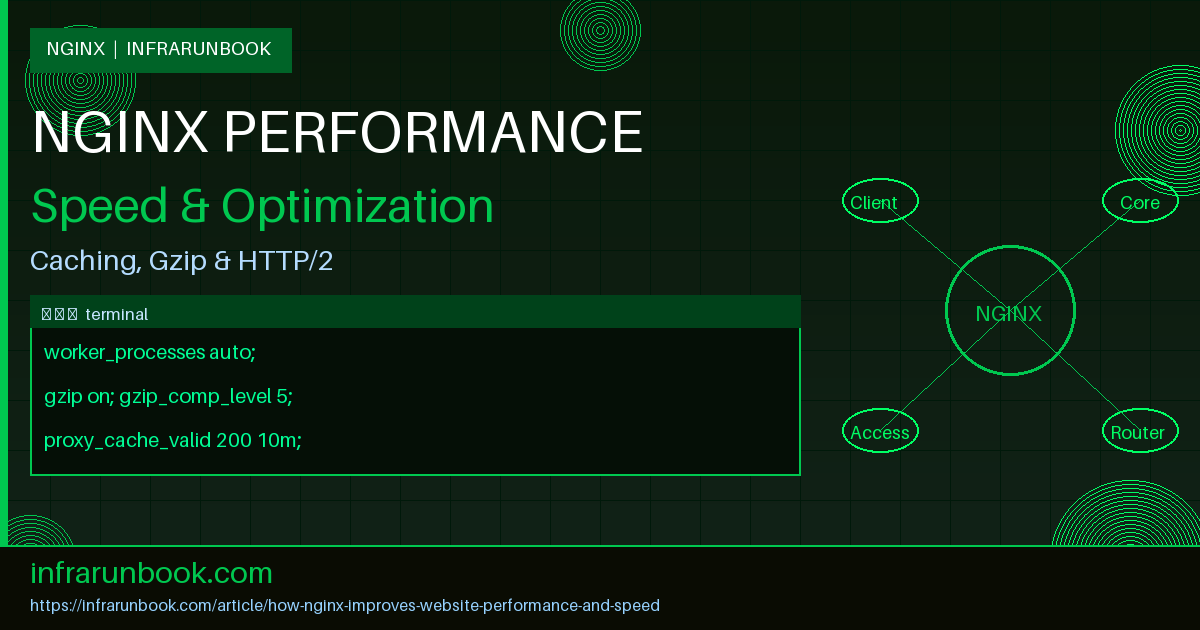

This article walks through the concrete mechanisms Nginx uses to improve website performance and speed: its event-driven core and worker tuning, gzip compression, proxy caching, connection keep-alive, HTTP/2, load balancing, SSL session reuse, and buffer optimization. Each section includes annotated configuration snippets drawn from a production-style setup on sw-infrarunbook-01 serving solvethenetwork.com.

Event-Driven Architecture and Worker Process Tuning

The foundation of Nginx's performance is its event-driven, asynchronous, non-blocking model. Rather than spawning a thread or process per connection — as Apache's prefork MPM does — Nginx uses a small, fixed number of worker processes. Each worker handles thousands of connections through the operating system's event notification interface: epoll on Linux, kqueue on BSD. The practical implication is that Nginx consumes far less memory under load and avoids the context-switching overhead that cripples thread-based servers at scale.

Tuning the worker count and connection limits is the first lever infrastructure engineers pull:

    # /etc/nginx/nginx.conf: worker tuning on sw-infrarunbook-01
    worker_processes auto;          # one worker per logical CPU core
    worker_rlimit_nofile 65535;     # raise OS file descriptor limit per worker

    events {
        worker_connections 4096;    # max simultaneous connections per worker
        use epoll;                  # explicit epoll on Linux
        multi_accept on;            # accept all pending connections in one pass
    }

With worker_processes auto, Nginx reads the CPU count at startup and spawns one worker per core. On a 4-core host, the ceiling is 4 x 4096 = 16,384 simultaneous connections. That figure counts every connection a worker holds, including connections to upstream servers, so a proxied request consumes two slots and the client-facing ceiling is roughly half of it. With multi_accept on, a worker accepts every pending connection each time it is notified of new ones, rather than one connection at a time, which reduces accept latency under burst traffic.
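The ceiling arithmetic above can be sketched in a few lines of Python. This is an illustrative helper rather than anything Nginx ships; the function name and the reverse_proxy flag are assumptions made for the example. It relies on the documented behavior that worker_connections counts upstream connections as well as client connections.

```python
def max_client_connections(worker_processes: int,
                           worker_connections: int,
                           reverse_proxy: bool = False) -> int:
    """Estimate how many clients the configured workers can hold at once."""
    total_slots = worker_processes * worker_connections
    # A proxied request occupies one slot for the client and one for the upstream.
    return total_slots // 2 if reverse_proxy else total_slots


if __name__ == "__main__":
    print(max_client_connections(4, 4096))                      # 16384
    print(max_client_connections(4, 4096, reverse_proxy=True))  # 8192
```

The result is an upper bound only: worker_rlimit_nofile and kernel limits still have to allow that many open descriptors.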

Gzip Compression to Reduce Transfer Size

Network bandwidth remains a bottleneck for many users, particularly on mobile connections. Gzip compression reduces the byte count of text-based responses — HTML, CSS, JavaScript, JSON, XML — by 60 to 80 percent in typical cases. Nginx compresses responses on the fly before sending them to the client, or can serve pre-compressed .gz files using the gzip_static module.

    # Gzip configuration: nginx.conf http context
    http {
        gzip on;
        gzip_vary on;            # add Vary: Accept-Encoding header
        gzip_proxied any;        # compress responses for all proxied requests
        gzip_comp_level 5;       # balance CPU usage vs compression ratio
        gzip_min_length 256;     # skip compressing responses under 256 bytes
        # text/html is always compressed and does not need to be listed
        gzip_types
            text/plain
            text/css
            text/javascript
            application/javascript
            application/json
            application/xml
            image/svg+xml;
    }

gzip_comp_level 5 is a widely adopted production value. Levels above 6 provide diminishing compression gains while consuming noticeably more CPU. Already-compressed formats such as WOFF2, JPEG, and PNG are left out of gzip_types because recompressing them costs CPU for no gain. The gzip_vary on directive is critical when Nginx sits behind a CDN or Varnish cache: it signals that the cached object differs based on whether the client sent Accept-Encoding: gzip, preventing clients from receiving gzip-encoded content they cannot decode.
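The trade-off between compression level and output size can be explored offline with Python's standard gzip module. This is a rough sketch, not a benchmark of Nginx itself: the sample page is synthetic, the helper names are hypothetical, and real ratios depend on the content being served. The last line also illustrates why gzip_min_length exists, since gzip framing makes very small bodies larger.

```python
import gzip


def gzip_sizes(payload: bytes, levels=(1, 5, 9)) -> dict:
    """Return the gzip-compressed size of payload at each compression level."""
    return {level: len(gzip.compress(payload, compresslevel=level))
            for level in levels}


def sample_html(rows: int = 500) -> bytes:
    """Build a repetitive HTML page, similar in shape to a product listing."""
    items = "".join(
        f'<li class="item"><a href="/products/{i}">Product {i}</a></li>\n'
        for i in range(rows)
    )
    return f"<html><body><ul>\n{items}</ul></body></html>".encode()


if __name__ == "__main__":
    page = sample_html()
    print("original:", len(page), "bytes")
    for level, size in gzip_sizes(page).items():
        saved = 100 * (1 - size / len(page))
        print(f"level {level}: {size} bytes ({saved:.0f}% smaller)")
    # Tiny bodies grow because of the gzip header and trailer.
    print("2-byte body gzips to", len(gzip.compress(b"ok")), "bytes")
```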

Sendfile, TCP_NOPUSH, and TCP_NODELAY

For static file delivery, Nginx can bypass user-space memory entirely using the sendfile() system call. Without sendfile, the kernel copies file data into user-space, then the application copies it back into the socket buffer — two unnecessary memory copies. The sendfile system call performs the transfer entirely within the kernel, reducing CPU time and memory bus pressure.

    http {
        sendfile on;
        tcp_nopush on;             # batch response headers with sendfile data
        tcp_nodelay on;            # disable Nagle algorithm for keepalive connections
        keepalive_timeout 65;
        keepalive_requests 1000;
    }

tcp_nopush works in conjunction with sendfile to send the HTTP response header and the start of the file in a single packet and the rest of the file in full packets, reducing the number of packets on the wire. tcp_nodelay disables the Nagle algorithm on keep-alive connections so that small responses such as API calls and health checks are not artificially delayed waiting to accumulate enough data to fill a full TCP segment.
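The same kernel facility is reachable from application code, which makes the idea easy to try out. The sketch below is a minimal illustration in Python, not how Nginx is implemented: it uses socket.sendfile(), which hands the transfer to os.sendfile() where the platform supports it and falls back to ordinary send() calls otherwise. The helper name and the socketpair-based demo are assumptions for the example.

```python
import os
import socket
import tempfile


def send_file(path: str, conn: socket.socket) -> int:
    """Send a whole file over conn and return the number of bytes sent."""
    with open(path, "rb") as f:
        # Uses os.sendfile() when available, so file bytes need not pass
        # through this process's own buffers.
        return conn.sendfile(f)


if __name__ == "__main__":
    with tempfile.NamedTemporaryFile(delete=False) as tmp:
        tmp.write(b"static asset bytes\n" * 100)

    server_side, client_side = socket.socketpair()
    sent = send_file(tmp.name, server_side)
    server_side.close()  # signals end of stream to the reader

    received = b""
    while chunk := client_side.recv(65536):
        received += chunk
    client_side.close()
    os.unlink(tmp.name)
    print("sent", sent, "bytes, received", len(received), "bytes")
```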

Proxy Caching for Upstream Offload

When Nginx acts as a reverse proxy in front of application servers, proxy caching is one of the highest-leverage performance tools available. Cached responses are served from disk or memory without touching the upstream at all, dramatically reducing backend load and Time to First Byte (TTFB). A single cache hit removes an entire upstream round trip.

    # /etc/nginx/nginx.conf: cache zone definition
    http {
        proxy_cache_path /var/cache/nginx/solvethenetwork
            levels=1:2
            keys_zone=app_cache:64m
            max_size=4g
            inactive=60m
            use_temp_path=off;

        server {
            listen 443 ssl http2;
            server_name solvethenetwork.com;

            location /api/public/ {
                proxy_pass http://10.10.10.20:8080;
                proxy_cache app_cache;
                proxy_cache_valid 200 10m;
                proxy_cache_valid 404 1m;
                proxy_cache_use_stale error timeout updating http_500 http_502 http_503;
                proxy_cache_lock on;
                add_header X-Cache-Status $upstream_cache_status;
            }
        }
    }

The proxy_cache_use_stale directive instructs Nginx to serve a stale cached copy if the upstream returns an error or times out. This is essential for resilience: users see slightly stale data rather than a 502 error during upstream degradation events. Its updating parameter also serves the stale copy to requests that arrive while an expired entry is being refreshed. proxy_cache_lock on prevents cache stampedes on entries that are not in the cache yet: only one request per cache key is passed to the upstream to populate the entry while the others wait and then receive the freshly cached response, protecting the backend from a sudden burst of simultaneous cache-miss requests.
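When debugging cache behavior it can help to find the file that backs a given entry. The following is a minimal sketch under stated assumptions: the cache key is the default $scheme$proxy_host$request_uri (the configuration above does not set proxy_cache_key), the file name is the MD5 hash of that key, and levels=1:2 takes the two directory names from the end of the hash, as described in the proxy_cache_path documentation. The helper names and the example URI are hypothetical.

```python
import hashlib


def path_for_digest(cache_root: str, digest: str) -> str:
    """Map an MD5 hex digest to its on-disk location for levels=1:2."""
    level1 = digest[-1]      # first directory: last hex character
    level2 = digest[-3:-1]   # second directory: the two characters before it
    return f"{cache_root}/{level1}/{level2}/{digest}"


def cache_file_path(cache_root: str, cache_key: str) -> str:
    """Hash a cache key and return the expected cache file path."""
    digest = hashlib.md5(cache_key.encode("utf-8")).hexdigest()
    return path_for_digest(cache_root, digest)


if __name__ == "__main__":
    # Assumed default key: $scheme + $proxy_host + $request_uri
    key = "http" + "10.10.10.20:8080" + "/api/public/items"
    print(cache_file_path("/var/cache/nginx/solvethenetwork", key))
```

If the printed path does not exist after a request that reported X-Cache-Status: MISS, the key assumption is the first thing to re-check.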

HTTP Keep-Alive and Upstream Keepalive

Each TCP connection involves a three-way handshake, and with TLS, an additional handshake costing multiple round trips. HTTP keep-alive reuses a single connection for multiple requests, eliminating this overhead entirely for subsequent requests from the same client or to the same upstream server.

    http {
        keepalive_timeout 65;
        keepalive_requests 1000;

        upstream app_servers {
            server 10.10.10.20:8080;
            server 10.10.10.21:8080;
            keepalive 64;    # idle keepalive connections per worker in pool
        }

        server {
            location / {
                proxy_pass http://app_servers;
                proxy_http_version 1.1;
                proxy_set_header Connection "";
            }
        }
    }

The keepalive 64 directive in the upstream block keeps up to 64 idle persistent connections to the backend servers open in each worker's pool. Without it, Nginx establishes a new TCP connection for every proxied request, which is significant overhead on high-throughput APIs. The proxy_http_version 1.1 and empty Connection header are mandatory companions: Nginx has traditionally proxied over HTTP/1.0 with Connection: close, which ends the upstream connection after each response, and clearing the Connection header removes that close instruction and keeps the client's own connection disposition from being forwarded to the upstream.

HTTP/2 for Multiplexed Connections

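HTTP/2 is one of the most impactful protocol-level improvements for browser-facing traffic. It multiplexes multiple requests over a single TCP connection, eliminates head-of-line blocking at the HTTP layer, and uses HPACK header compression to reduce redundant header bytes. The protocol also defined server push for preloading assets, but major browsers have dropped it and Nginx removed support in 1.25.1, so it should not be relied on. Enabling HTTP/2 in Nginx requires only adding http2 to the listen directive (from Nginx 1.25.1 onward, the standalone http2 on; directive replaces this listen parameter):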

    server {
        listen 443 ssl http2;
        server_name solvethenetwork.com;

        ssl_certificate /etc/ssl/solvethenetwork/fullchain.pem;
        ssl_certificate_key /etc/ssl/solvethenetwork/privkey.pem;
        ssl_protocols TLSv1.2 TLSv1.3;
        ssl_ciphers ECDHE-ECDSA-AES128-GCM-SHA256:ECDHE-RSA-AES128-GCM-SHA256:ECDHE-ECDSA-AES256-GCM-SHA384:ECDHE-RSA-AES256-GCM-SHA384;
        ssl_prefer_server_ciphers off;

        ssl_session_cache shared:SSL:10m;
        ssl_session_timeout 1d;
        ssl_session_tickets off;
    }

ssl_session_cache shared:SSL:10m stores TLS session parameters so returning clients can resume without a full handshake. On solvethenetwork.com, this means returning visitors skip the certificate exchange and full key negotiation, and under TLS 1.2 they save one of the two handshake round trips. The cache is shared across all worker processes using 10 MB of shared memory, which holds approximately 40,000 sessions. ssl_session_tickets off is the recommended setting for deployments prioritizing forward secrecy, because session ticket key rotation requires careful orchestration to maintain security properties.

Load Balancing Algorithms and Upstream Health Checks

Nginx's upstream module provides several load balancing algorithms relevant to performance. The default round-robin distributes requests evenly. For application servers with varying response times, least_conn routes each new request to the backend with the fewest active connections, preventing queue buildup behind a temporarily slow server.

    upstream app_servers {
        least_conn;
        server 10.10.10.20:8080 weight=2 max_fails=3 fail_timeout=30s;
        server 10.10.10.21:8080 weight=1 max_fails=3 fail_timeout=30s;
        server 10.10.10.22:8080 backup;
        keepalive 64;
    }

The backup flag marks 10.10.10.22 as a standby server: it only receives traffic when the primary servers are unavailable. Weight values direct proportionally more traffic to the higher-capacity node. Passive health checking is built in: if three attempts to reach a server fail within 30 seconds (the failures need not be consecutive), Nginx marks it as unavailable for the next 30 seconds. Once that fail_timeout period expires, Nginx simply sends live requests to the server again; open-source Nginx has no separate probe request.
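The max_fails and fail_timeout interaction is easier to reason about as a small state machine. The class below is a deliberately simplified model of the documented behavior (failed attempts counted within a fail_timeout window, then the server is skipped for one fail_timeout period); it is not a port of Nginx's internal bookkeeping, and the class and method names are hypothetical.

```python
from collections import deque


class PassiveHealth:
    """Simplified model of max_fails / fail_timeout for one upstream server."""

    def __init__(self, max_fails: int = 3, fail_timeout: float = 30.0):
        self.max_fails = max_fails
        self.fail_timeout = fail_timeout
        self.failures = deque()   # timestamps of recent failed attempts
        self.down_until = 0.0     # server is skipped before this time

    def is_available(self, now: float) -> bool:
        return now >= self.down_until

    def record_failure(self, now: float) -> None:
        self.failures.append(now)
        # Forget failures that fell out of the fail_timeout window.
        while self.failures and now - self.failures[0] > self.fail_timeout:
            self.failures.popleft()
        if len(self.failures) >= self.max_fails:
            self.down_until = now + self.fail_timeout
            self.failures.clear()


if __name__ == "__main__":
    peer = PassiveHealth(max_fails=3, fail_timeout=30.0)
    for t in (0.0, 10.0, 20.0):
        peer.record_failure(t)
    print(peer.is_available(25.0))  # False
    print(peer.is_available(50.0))  # True
```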

Rate Limiting to Protect Performance Under Load

A server saturated by scrapers, credential stuffers, or a sudden traffic spike is not a performant server. Nginx's ngx_http_limit_req_module implements leaky bucket rate limiting to cap request rates per client IP, protecting backend resources and ensuring fair capacity distribution across legitimate users.

    http {
        limit_req_zone $binary_remote_addr zone=api_limit:10m rate=30r/s;
        limit_req_zone $binary_remote_addr zone=login_limit:10m rate=5r/m;

        server {
            location /api/ {
                limit_req zone=api_limit burst=50 nodelay;
                limit_req_status 429;
                proxy_pass http://app_servers;
            }

            location /auth/login {
                limit_req zone=login_limit burst=3 nodelay;
                limit_req_status 429;
                proxy_pass http://app_servers;
            }
        }
    }

The burst parameter allows short traffic spikes above the steady-state rate before requests are rejected with 429. nodelay processes burst requests immediately rather than queuing them at the rate limit interval, which reduces perceived latency for legitimate burst traffic while still enforcing the overall rate. Using $binary_remote_addr instead of $remote_addr shrinks the key from a 7 to 15 byte string to a fixed 4 bytes for IPv4 (16 bytes for IPv6), so the shared memory zone holds more client states under large client populations.
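How rate, burst, and nodelay interact can be seen in a small simulation. The class below is a simplified single-client model of the leaky bucket accounting used by limit_req with nodelay: each request adds one unit of "excess", the excess drains at the configured rate, and a request is rejected when the excess would exceed burst. It is a sketch for intuition only (Nginx tracks this in integer milli-requests inside shared memory), and the class name is hypothetical.

```python
class LeakyBucketLimiter:
    """Simplified model of limit_req ... burst=N nodelay for one client key."""

    def __init__(self, rate_per_second: float, burst: int):
        self.rate = rate_per_second
        self.burst = burst
        self.excess = 0.0
        self.last_seen = None

    def allow(self, now: float) -> bool:
        if self.last_seen is None:
            # First request from this client creates the state entry.
            self.last_seen = now
            return True
        drained = self.rate * (now - self.last_seen)
        excess = max(self.excess - drained + 1.0, 0.0)
        if excess > self.burst:
            return False  # rejected requests leave the stored state unchanged
        self.excess = excess
        self.last_seen = now
        return True


if __name__ == "__main__":
    limiter = LeakyBucketLimiter(rate_per_second=30, burst=50)
    at_once = sum(limiter.allow(0.0) for _ in range(100))
    print(at_once)        # 51: one request plus a burst of 50
    one_second_later = sum(limiter.allow(1.0) for _ in range(100))
    print(one_second_later)  # 30: the bucket drained 30 units in one second
```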

Open File Cache for Static Asset Delivery

Every static file request normally requires multiple system calls: open(), fstat(), and close(). Under high concurrency serving thousands of static assets such as images, fonts, and CSS files, this syscall overhead accumulates. The open_file_cache directive instructs Nginx to cache file descriptors, metadata, and directory lookup results in memory:

    http {
        open_file_cache max=10000 inactive=30s;   # cache up to 10,000 entries
        open_file_cache_valid 60s;                # revalidate cached entries every 60s
        open_file_cache_min_uses 2;               # uses within the inactive window to stay cached
        open_file_cache_errors on;                # also cache failed lookups
    }

File descriptors and metadata for up to 10,000 files are cached. Entries are evicted after 30 seconds of inactivity. With open_file_cache_min_uses 2, a file must be requested at least twice within that 30-second window for its descriptor to stay open in the cache, preventing one-off requests from displacing frequently accessed assets. open_file_cache_errors on caches failed lookups as well, avoiding repeated filesystem calls for missing files that bots probe while scanning for common CMS paths.

Buffer Tuning for Proxy Throughput

Nginx buffers upstream responses before forwarding them to clients. If buffers are too small, Nginx overflows to temporary disk files, introducing disk I/O latency into the response path. Properly sized buffers keep the entire transaction in memory and allow Nginx to release the upstream connection sooner:

    location /api/ {
        proxy_pass http://app_servers;
        proxy_buffering on;
        proxy_buffer_size 16k;
        proxy_buffers 8 32k;
        proxy_busy_buffers_size 64k;
        proxy_temp_path /var/cache/nginx/temp;
        proxy_max_temp_file_size 0;    # disable temp file overflow for API responses
    }

proxy_buffer_size 16k holds the first part of the upstream response, which normally contains the headers. The pool of 8 x 32k buffers (256 KB) handles the body. Setting proxy_max_temp_file_size 0 disables spilling to temporary files for this location, which is appropriate when API responses are known to fit in the buffers; a larger response is then relayed only as fast as the client reads it, keeping the upstream connection occupied for longer. For large media downloads, leave temp files enabled and place the temp path on fast NVMe storage to minimize the penalty when the buffers overflow.
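Buffer settings translate directly into memory, so it is worth estimating the worst case before raising them. The sketch below is a back-of-the-envelope calculation with hypothetical helper names; it assumes every in-flight proxied request fills all of its buffers, which overstates typical usage, and the figure of 1,000 concurrent requests is an arbitrary example.

```python
UNITS = {"k": 1024, "m": 1024 ** 2}


def parse_size(value: str) -> int:
    """Convert an Nginx-style size such as '16k' or '4m' to bytes."""
    value = value.strip().lower()
    if value[-1] in UNITS:
        return int(value[:-1]) * UNITS[value[-1]]
    return int(value)


def proxy_buffer_bytes(buffer_size: str, buffers_count: int,
                       buffers_each: str) -> int:
    """Upper bound on buffer memory for one proxied response."""
    return parse_size(buffer_size) + buffers_count * parse_size(buffers_each)


if __name__ == "__main__":
    per_request = proxy_buffer_bytes("16k", 8, "32k")
    print(per_request)  # 278528 bytes, i.e. 272 KB
    concurrent = 1000   # assumed number of in-flight proxied requests
    print(f"{per_request * concurrent / 1024 ** 2:.1f} MiB worst case")
```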

Performance-Aware Access Logging

Performance tuning requires observability. Nginx's flexible log format captures upstream response time, cache status, and connection timing data that feeds directly into monitoring dashboards and latency alerting:

    http {
        log_format performance
            '$remote_addr - $remote_user [$time_local] '
            '"$request" $status $body_bytes_sent '
            'rt=$request_time '
            'uct=$upstream_connect_time '
            'uht=$upstream_header_time '
            'urt=$upstream_response_time '
            'cs=$upstream_cache_status';

        access_log /var/log/nginx/solvethenetwork-access.log
            performance buffer=64k flush=5s;
    }

The buffer=64k flush=5s parameters batch log writes rather than flushing after every request, reducing disk I/O at high request rates. The $upstream_response_time field is the most actionable metric for identifying slow backend responses that inflate overall TTFB. Feeding this log into a tool like Loki or Elasticsearch lets the operations team correlate latency spikes with deployments, traffic surges, or database query regressions in near real time.
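Before a log pipeline is in place, the same fields can be pulled out with a short script. The parser below is a minimal sketch written against the performance format defined above; the sample lines are invented for illustration, and the function names are hypothetical. It treats "-" (logged when no upstream was contacted, for example on a cache hit) as missing and sums the comma- or colon-separated values that Nginx writes when several upstreams were tried.

```python
import re

LINE_RE = re.compile(
    r'(?P<addr>\S+) - (?P<user>\S+) \[(?P<time>[^\]]+)\] '
    r'"(?P<request>[^"]*)" (?P<status>\d{3}) (?P<bytes>\d+) '
    r'rt=(?P<rt>\S+) uct=(?P<uct>.*?) uht=(?P<uht>.*?) '
    r'urt=(?P<urt>.*?) cs=(?P<cs>\S+)$'
)


def total_seconds(field: str):
    """Sum upstream timings such as '0.010, 0.130'; return None for '-'."""
    parts = [p.strip() for p in re.split(r"[,:]", field)]
    values = [float(p) for p in parts if p and p != "-"]
    return sum(values) if values else None


def parse_line(line: str):
    """Parse one access log line into a dict, or return None if it does not match."""
    match = LINE_RE.match(line.strip())
    if match is None:
        return None
    return {
        "request": match["request"],
        "status": int(match["status"]),
        "request_time": float(match["rt"]),
        "upstream_response_time": total_seconds(match["urt"]),
        "cache_status": match["cs"],
    }


if __name__ == "__main__":
    sample = ('203.0.113.7 - - [12/Mar/2025:10:15:32 +0000] '
              '"GET /api/public/items HTTP/2.0" 200 512 '
              'rt=0.142 uct=0.001 uht=0.139 urt=0.140 cs=MISS')
    print(parse_line(sample))
```

Filtering the parsed records for a high upstream_response_time with cache_status MISS is a quick way to list the slowest uncached endpoints.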