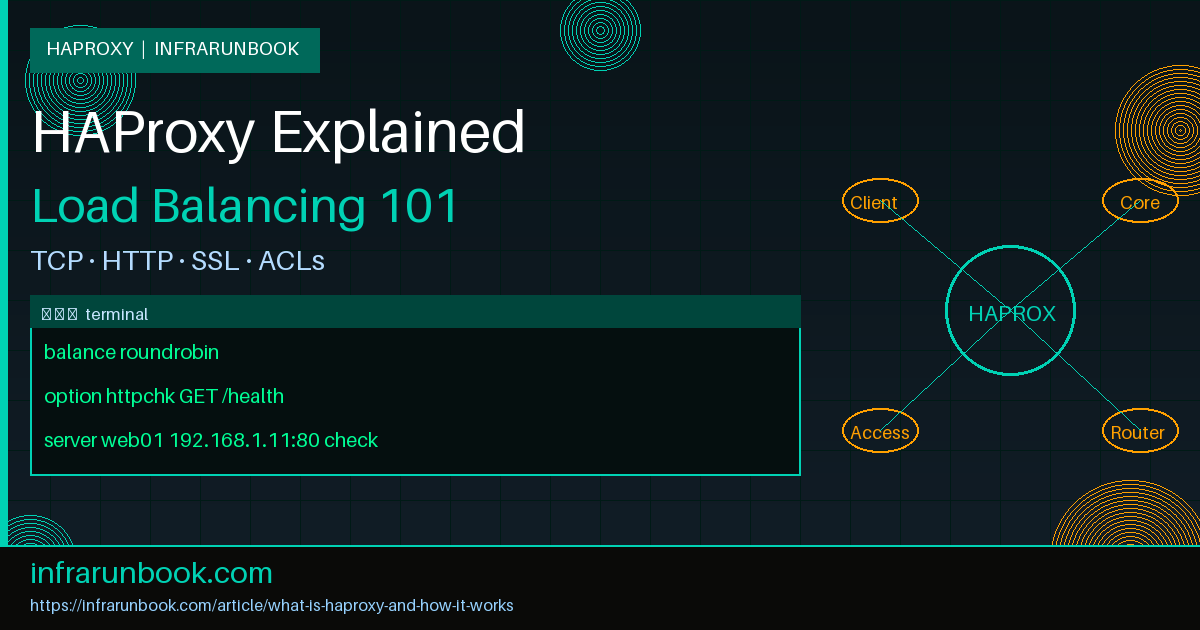

HAProxy (short for High Availability Proxy) is a free, open-source software solution that delivers high-performance TCP and HTTP load balancing, proxying, and health checking for networked applications. Originally written by Willy Tarreau in 2001, it has become a de facto standard for production-grade load balancing on Linux. It runs in production at organizations ranging from small startups to hyperscalers, and it powers the ingress layer of many cloud-native platforms.

At its core, HAProxy sits between clients and your backend servers. It distributes incoming traffic, monitors service health, and helps prevent any single server from being overwhelmed. If a backend server fails its health checks, HAProxy removes it from rotation automatically, so new requests go only to healthy servers and clients usually never notice the failure. This article breaks down how HAProxy works, how to configure its most important features, and what makes it the right choice for serious infrastructure.

The Frontend/Backend Model

HAProxy's entire configuration is organized around two primary constructs: frontends and backends.

- Frontend: Defines how HAProxy listens for incoming connections — which IP address, port, and protocol (TCP or HTTP). A frontend inspects traffic attributes and decides which backend pool to forward each request to.

- Backend: Defines the pool of real servers that handle requests. Each backend carries a load balancing algorithm, health check configuration, and one or more server entries with their IP addresses and ports.

There is also a listen block that merges frontend and backend into one section, useful for simpler TCP proxy scenarios. Most HTTP deployments keep them separate for clarity.

Below is a minimal working configuration for host sw-infrarunbook-01, load balancing HTTP traffic across three web servers:

```haproxy
global
    log /dev/log local0
    log /dev/log local1 notice
    maxconn 50000
    user infrarunbook-admin
    group infrarunbook-admin
    daemon

defaults
    log global
    mode http
    option httplog
    option dontlognull
    timeout connect 5s
    timeout client 30s
    timeout server 30s

frontend http_front
    bind 192.168.1.100:80
    default_backend web_servers

backend web_servers
    balance roundrobin
    option httpchk GET /healthz
    server web01 192.168.1.11:80 check
    server web02 192.168.1.12:80 check
    server web03 192.168.1.13:80 check
```

HAProxy listens on `192.168.1.100:80` and distributes requests in round-robin order across the three backend servers. The `check` keyword on each server line activates health monitoring, and `option httpchk GET /healthz` turns those checks into HTTP requests for `/healthz` rather than plain TCP connects.

Load Balancing Algorithms

HAProxy supports several load balancing algorithms. The right choice depends on your workload's connection duration and statefulness.

- roundrobin: Distributes requests sequentially across all available servers. Weights allow higher-capacity servers to receive proportionally more traffic. This is the default and works well for short-lived HTTP requests.

- leastconn: Routes each new connection to the server with the fewest active connections. Ideal for long-lived connections such as database sessions, WebSocket tunnels, or any workload with highly variable processing time.

- source: Hashes the client's source IP address so that a given client keeps reaching the same backend server, as long as the set of available servers does not change. Useful for stateful applications that do not use centralized session storage.

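- uri: Hashes the request URI (by default only the part before the `?`; add the `whole` option to include the query string). The same URL therefore keeps hitting the same server, which is excellent in front of caching layers.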

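- first: Sends each connection to the first server (in server ID order) that still has a free connection slot, and spills over to the next server only once that one reaches its `maxconn`. It requires `maxconn` on each server and keeps the number of busy servers to a minimum, so extra servers can be powered down during off-peak hours.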

- random: Picks servers pseudo-randomly while honoring weights. At high request volumes the distribution approaches that of roundrobin, and it copes well with large farms where servers are frequently added or removed.
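To make the differences concrete, here is a small Python model of four of these algorithms. It is a sketch under simplifying assumptions, not HAProxy's implementation: weighted round robin is modeled with the "smooth weighted round robin" scheme popularized by nginx (HAProxy's own scheduler differs internally but aims at the same proportions), the `source`/`uri` hash is a plain CRC32 taken modulo the pool size, and the `Server` class and all function names are hypothetical.

```python
"""Toy models of four HAProxy balancing algorithms (not HAProxy's actual code)."""
import zlib
from dataclasses import dataclass


@dataclass
class Server:
    name: str
    weight: int = 1
    maxconn: int = 0   # only used by first_available(); must be > 0 there
    active: int = 0    # current connections, only used by first_available()


def weighted_roundrobin(servers, n):
    """Smooth weighted round robin: servers are picked in proportion to their weights."""
    current = {s.name: 0 for s in servers}
    total = sum(s.weight for s in servers)
    picks = []
    for _ in range(n):
        for s in servers:
            current[s.name] += s.weight
        best = max(servers, key=lambda srv: current[srv.name])  # ties: first in list
        current[best.name] -= total
        picks.append(best.name)
    return picks


def hash_pick(servers, key):
    """'source' / 'uri' style: the same key maps to the same server while the pool is unchanged."""
    return servers[zlib.crc32(key.encode()) % len(servers)].name


def uri_key(uri, whole=False):
    """balance uri hashes only the part before '?' unless 'whole' is set."""
    return uri if whole else uri.split("?", 1)[0]


def first_available(servers):
    """'first': the first server in order that is still below its maxconn."""
    for s in servers:
        if s.active < s.maxconn:
            s.active += 1
            return s.name
    return None  # every server is full; HAProxy would queue the request


if __name__ == "__main__":
    web = [Server("web01", weight=2), Server("web02"), Server("web03")]
    print(weighted_roundrobin(web, 4))   # ['web01', 'web02', 'web03', 'web01']
    print(uri_key("/img/logo.png?v=3"))  # /img/logo.png
```

Because this hash is taken modulo the pool size, adding or removing a server remaps most keys; HAProxy offers `hash-type consistent` to limit that churn.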

```haproxy
backend api_pool
    balance leastconn
    server api01 192.168.1.21:8080 check weight 10
    server api02 192.168.1.22:8080 check weight 10
    server api03 192.168.1.23:8080 check weight 5
```

Here `api03` carries half the weight of the others, so under load HAProxy aims to give it roughly half as many concurrent connections as each of its peers.
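One way to picture the weighting is to treat `leastconn` as "fewest active connections per unit of weight". The sketch below assumes that simplified rule, opens 250 long-lived connections that never close, and breaks ties by list order (HAProxy instead round-robins among equally loaded servers). The `Backend` class and the `fill` helper are hypothetical.

```python
"""Toy model of weighted least-connections (an approximation of HAProxy's leastconn)."""
from dataclasses import dataclass
from fractions import Fraction


@dataclass
class Backend:
    name: str
    weight: int
    active: int = 0


def pick_leastconn(pool):
    """Choose the server with the fewest active connections per unit of weight."""
    best = min(pool, key=lambda s: Fraction(s.active, s.weight))  # ties: first in list
    best.active += 1
    return best


def fill(pool, n):
    """Open n long-lived connections (none ever close) and return per-server counts."""
    for _ in range(n):
        pick_leastconn(pool)
    return {s.name: s.active for s in pool}


if __name__ == "__main__":
    api_pool = [Backend("api01", 10), Backend("api02", 10), Backend("api03", 5)]
    print(fill(api_pool, 250))  # {'api01': 100, 'api02': 100, 'api03': 50}
```

With these weights the model settles at 100, 100, and 50 connections, the 2:2:1 split the weights describe. Exact fractions are used for the ratio so that ties compare reliably.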

Health Checks: TCP and HTTP

HAProxy continuously monitors backend servers. When a server fails a configured number of consecutive checks, it is pulled from rotation; when it then passes a configured number of consecutive checks, it is reinstated.

TCP health checks open a TCP connection to the server's port and close it immediately. If the TCP handshake succeeds, the server is considered up. This is the lightest check, appropriate for non-HTTP backends like databases or raw TCP services.

```haproxy
backend mysql_pool
    mode tcp
    balance leastconn
    option tcp-check
    server db01 192.168.1.31:3306 check inter 2s fall 3 rise 2
    server db02 192.168.1.32:3306 check inter 2s fall 3 rise 2
```

HTTP health checks send a real HTTP request to the backend and validate the response status code. This is far more meaningful: a server can accept TCP connections while returning HTTP 500 errors, and a TCP check would never expose that.

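```haproxy
backend web_servers
    balance roundrobin
    option httpchk GET /health HTTP/1.1\r\nHost:\ solvethenetwork.com
    # On HAProxy 2.2+, the same check can also be written as:
    #   option httpchk
    #   http-check send meth GET uri /health ver HTTP/1.1 hdr Host solvethenetwork.com
    http-check expect status 200
    server web01 192.168.1.11:80 check inter 3s fall 2 rise 3
    server web02 192.168.1.12:80 check inter 3s fall 2 rise 3
```

Key check parameters to understand: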

- inter: Interval between checks (default 2s).

- fall: Consecutive failures required before marking a server down (default 3).

- rise: Consecutive successes required before marking a server back up (default 2).
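The `fall`/`rise` bookkeeping is easy to misread, so here is a simplified Python model alongside a connect-only TCP probe similar in spirit to a plain TCP check. Assumptions: results are counted as plain consecutive streaks, as the parameter descriptions above state (HAProxy's internal health counter differs in detail), and the `tcp_check` and `HealthState` names are hypothetical.

```python
"""Sketch of a TCP connect check plus rise/fall bookkeeping (a model, not HAProxy's code)."""
import socket


def tcp_check(host, port, timeout=2.0):
    """Up if a TCP handshake completes within the timeout, like a connect-only check."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        return False


class HealthState:
    """Flips state only after `fall` consecutive failures or `rise` consecutive successes."""

    def __init__(self, rise=2, fall=3, up=True):
        self.rise, self.fall, self.up = rise, fall, up
        self.streak = 0  # consecutive results that disagree with the current state

    def record(self, ok):
        if ok == self.up:
            self.streak = 0  # the result confirms the current state
        else:
            self.streak += 1
            needed = self.fall if self.up else self.rise
            if self.streak >= needed:
                self.up = ok
                self.streak = 0
        return self.up


if __name__ == "__main__":
    state = HealthState(rise=2, fall=3)
    for result in [False, False, False, True, True]:
        print(result, "->", "UP" if state.record(result) else "DOWN")
```

With `rise=2, fall=3`, an up server survives two failed probes; the third consecutive failure takes it out of rotation, and two consecutive successes bring it back.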

SSL/TLS Termination

HAProxy can terminate TLS on behalf of backend servers. This offloads CPU-intensive cryptographic operations to a single location, simplifies certificate management, and lets backend servers communicate over plain HTTP on a trusted internal segment.

```haproxy
frontend https_front
    # Plain-HTTP listener: without it, the redirect below could never trigger
    bind 192.168.1.100:80
    bind 192.168.1.100:443 ssl crt /etc/haproxy/certs/solvethenetwork.com.pem
    # Tell the backends the original request arrived over TLS
    http-request set-header X-Forwarded-Proto https if { ssl_fc }
    # Send any non-TLS request to the HTTPS version of the same URL
    redirect scheme https code 301 if !{ ssl_fc }
    default_backend web_servers

backend web_servers
    balance roundrobin
    server web01 192.168.1.11:80 check
    server web02 192.168.1.12:80 check
```

The PEM file at `/etc/haproxy/certs/solvethenetwork.com.pem` must contain the server certificate, private key, and any intermediate CA certificates concatenated in that order. When `crt` points to a directory of PEM files (or is repeated on the `bind` line), HAProxy selects the certificate by SNI, so a single bind address can serve multiple domains, each with its own certificate.
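Before pointing a `crt` directive at a new bundle, a quick pre-flight check can catch the most common assembly mistakes. These are hypothetical helpers, not part of HAProxy: `lint_bundle` only inspects the PEM block labels (a certificate is present, there is exactly one private key, the server certificate comes first), while `loads_in_openssl` asks Python's `ssl` module to load the file, which fails if the key is missing or does not match the certificate.

```python
"""Pre-flight lint for a combined PEM bundle (a sketch; it checks structure, not trust)."""
import re
import ssl

PEM_LABEL = re.compile(r"-----BEGIN ([A-Z ]+)-----")


def pem_block_types(pem_text):
    """Return the PEM block labels in file order, e.g. ['CERTIFICATE', 'PRIVATE KEY']."""
    return PEM_LABEL.findall(pem_text)


def lint_bundle(pem_text):
    """Return a list of problems; an empty list means the bundle looks structurally sane."""
    types = pem_block_types(pem_text)
    problems = []
    certs = [t for t in types if t == "CERTIFICATE"]
    keys = [t for t in types if t.endswith("PRIVATE KEY")]  # RSA, EC, or PKCS#8 keys
    if not certs:
        problems.append("no certificate found")
    if len(keys) != 1:
        problems.append(f"expected exactly one private key, found {len(keys)}")
    if types and types[0] != "CERTIFICATE":
        problems.append("bundle should start with the server certificate")
    return problems


def loads_in_openssl(path):
    """True if OpenSSL accepts the file as a certificate chain plus matching private key."""
    ctx = ssl.SSLContext(ssl.PROTOCOL_TLS_SERVER)
    try:
        ctx.load_cert_chain(path)  # with no keyfile, the key is read from the same file
        return True
    except OSError:  # covers ssl.SSLError and FileNotFoundError
        return False
```

For example, `lint_bundle(open(path).read())` followed by `loads_in_openssl(path)`. Running `haproxy -c` against the full configuration remains the authoritative check; this only shortens the feedback loop.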

To enforce modern cipher suites and disable weak protocol versions globally:

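```haproxy
global
    # TLS 1.2 cipher list; TLS 1.3 suites are set separately with ssl-default-bind-ciphersuites
    ssl-default-bind-ciphers ECDHE-ECDSA-AES128-GCM-SHA256:ECDHE-RSA-AES128-GCM-SHA256:ECDHE-ECDSA-AES256-GCM-SHA384
    ssl-default-bind-options ssl-min-ver TLSv1.2 no-tls-tickets
```

ACL-Based Routing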

Access Control Lists (ACLs) are among HAProxy's most powerful features. They let you inspect virtually any attribute of a request — hostname, URL path, HTTP method, header values, source IP range — and make routing decisions accordingly.

```haproxy
frontend http_front
    bind 192.168.1.100:80
    acl is_api path_beg /api/
    acl is_static path_end .jpg .png .gif .css .js .woff2
    acl is_metrics path_beg /metrics
    # http-request rules are evaluated before use_backend, so list them first
    http-request deny if is_metrics
    use_backend api_pool if is_api
    use_backend static_pool if is_static
    default_backend web_servers
```

ACLs support boolean logic with `if`/`unless`, `!` (NOT), `||` or `or` for OR, and implicit AND when multiple conditions appear on the same line:

```haproxy
acl internal_network src 192.168.1.0/24 10.0.0.0/8
acl is_admin_path path_beg /admin/
http-request deny if is_admin_path !internal_network
```

This blocks access to `/admin/` for any client whose source IP falls outside the listed RFC 1918 ranges. Internal staff on `192.168.1.0/24` or `10.0.0.0/8` pass through unimpeded.
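The decision order can be modeled in a few lines of Python. This sketch assumes both snippets above live in the same frontend and that `path` is the URL path without its query string (which is what `path_beg`/`path_end` inspect); `route` is a hypothetical helper. It reflects that HAProxy evaluates `http-request` rules before any `use_backend` rule, regardless of where they appear in the section, and that the first matching `use_backend` wins.

```python
"""Toy evaluation of the ACL rules above (a model of the decision order, not HAProxy's parser)."""
import ipaddress

INTERNAL = [ipaddress.ip_network("192.168.1.0/24"), ipaddress.ip_network("10.0.0.0/8")]
STATIC_SUFFIXES = (".jpg", ".png", ".gif", ".css", ".js", ".woff2")


def route(src_ip, path):
    """Return 'deny' or the name of the backend that would serve the request."""
    is_api = path.startswith("/api/")                  # path_beg /api/
    is_static = path.endswith(STATIC_SUFFIXES)         # path_end .jpg .png ...
    is_metrics = path.startswith("/metrics")           # path_beg /metrics
    is_admin_path = path.startswith("/admin/")         # path_beg /admin/
    internal_network = any(ipaddress.ip_address(src_ip) in net for net in INTERNAL)

    # http-request deny rules run first
    if is_metrics:
        return "deny"
    if is_admin_path and not internal_network:         # implicit AND plus "!" (NOT)
        return "deny"
    # use_backend rules: the first match wins
    if is_api:
        return "api_pool"
    if is_static:
        return "static_pool"
    return "web_servers"                               # default_backend


if __name__ == "__main__":
    print(route("203.0.113.7", "/admin/settings"))  # deny
    print(route("10.4.5.6", "/admin/settings"))     # web_servers
```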

Stick Tables and Session Persistence

When an application requires a user to consistently reach the same backend server — session affinity — HAProxy provides two mechanisms: cookie-based persistence and stick tables.

Cookie-based persistence inserts a cookie into the HTTP response that encodes the chosen server. On all subsequent requests, HAProxy reads this cookie and routes the client back to the correct server.

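```haproxy
backend web_servers
    balance roundrobin
    # insert: HAProxy adds the cookie to responses
    # indirect: the cookie is stripped from requests sent to the servers
    # nocache: responses that carry the inserted cookie are marked non-cacheable
    cookie SERVERID insert indirect nocache
    server web01 192.168.1.11:80 check cookie web01
    server web02 192.168.1.12:80 check cookie web02
    server web03 192.168.1.13:80 check cookie web03
```

Stick tables store a key-to-server mapping in memory on the HAProxy process itself. They can key on source IP, cookie value, or any fetched sample: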

```haproxy
backend web_servers
    balance roundrobin
    stick-table type ip size 100k expire 30m
    stick on src
    server web01 192.168.1.11:80 check
    server web02 192.168.1.12:80 check
```

`size 100k` caps the table at roughly 100,000 entries, and `expire 30m` evicts entries that have been idle for 30 minutes, so stale mappings do not accumulate.
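Both persistence mechanisms can be modeled in plain Python to show what state each one keeps. This is a sketch, not HAProxy internals: the cookie model assumes the cookie value equals the server name (as with `cookie web01` above), and the stick table evicts the least recently used entry when full, which approximates HAProxy purging its oldest entries. The class names are hypothetical.

```python
"""Toy models of cookie persistence and a stick table with idle expiry (not HAProxy internals)."""
from collections import OrderedDict
from itertools import cycle


class CookiePersistence:
    """Route by the SERVERID cookie when it names a known server; otherwise round-robin."""

    def __init__(self, servers):
        self.servers = set(servers)
        self._rr = cycle(servers)

    def handle(self, cookies):
        """Return (server, cookie_to_set); cookie_to_set is None when the client already has one."""
        server = cookies.get("SERVERID")
        if server in self.servers:
            return server, None
        server = next(self._rr)
        return server, ("SERVERID", server)  # "insert": tell the client which server it got


class StickTable:
    """Key -> server map with idle expiry and an entry cap."""

    def __init__(self, size, expire_s):
        self.size, self.expire_s = size, expire_s
        self._entries = OrderedDict()  # key -> (server, last_seen), least recent first

    def lookup(self, key, now):
        entry = self._entries.get(key)
        if entry is None or now - entry[1] > self.expire_s:
            self._entries.pop(key, None)  # expired (or never stored)
            return None
        self._entries[key] = (entry[0], now)  # refresh the idle timer
        self._entries.move_to_end(key)
        return entry[0]

    def store(self, key, server, now):
        self._entries[key] = (server, now)
        self._entries.move_to_end(key)
        while len(self._entries) > self.size:
            self._entries.popitem(last=False)  # drop the least recently used entry
```

The cookie model keeps no per-client state on the proxy, which is why cookie persistence keeps working when traffic fails over to another HAProxy node, whereas a stick table lives in one process unless it is synchronized through a `peers` section.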

Rate Limiting with Stick Tables

Stick tables have a second major role: rate limiting. By tracking request counters per source IP, HAProxy can drop or throttle clients that exceed a defined threshold without involving any external system.

```haproxy
frontend http_front
    bind 192.168.1.100:80
    stick-table type ip size 100k expire 10s store http_req_rate(10s)
    http-request track-sc0 src
    http-request deny deny_status 429 if { sc_http_req_rate(0) gt 100 }
    default_backend web_servers
```

This tracks HTTP request rates per source IP over a rolling 10-second window. Any client exceeding 100 requests in that window receives an HTTP `429 Too Many Requests` response. The table itself expires entries after 10 seconds of inactivity, keeping memory consumption bounded.
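To see how a "rolling window" can be tracked cheaply, here is a two-bucket sliding-window estimator: the current bucket's count plus the previous bucket's count, weighted by how much of that bucket still overlaps the window. This is an assumption about the general approach, not a port of HAProxy's counters, and `RateCounter`/`RateLimiter` are hypothetical names. The model counts a request before checking it, so the 101st request inside the window is the first one rejected.

```python
"""Sketch of a per-IP sliding-window request-rate limiter (models the idea, not HAProxy's code)."""


class RateCounter:
    """Estimates events in the last `period` seconds from the current and previous bucket."""

    def __init__(self, period=10.0):
        self.period = period
        self.bucket_start = 0.0
        self.curr = 0
        self.prev = 0

    def _rotate(self, now):
        elapsed_buckets = int((now - self.bucket_start) // self.period)
        if elapsed_buckets >= 1:
            # After one full bucket the old count becomes "previous"; after more, it is stale
            self.prev = self.curr if elapsed_buckets == 1 else 0
            self.curr = 0
            self.bucket_start += elapsed_buckets * self.period

    def hit(self, now):
        self._rotate(now)
        self.curr += 1

    def rate(self, now):
        self._rotate(now)
        remaining = 1.0 - (now - self.bucket_start) / self.period
        return self.curr + self.prev * remaining


class RateLimiter:
    """Mirrors: track-sc0 src, then deny with 429 if sc_http_req_rate(0) gt limit."""

    def __init__(self, limit=100, period=10.0):
        self.limit, self.period = limit, period
        self.counters = {}  # source IP -> RateCounter

    def allow(self, src_ip, now):
        counter = self.counters.setdefault(src_ip, RateCounter(self.period))
        counter.hit(now)
        return counter.rate(now) <= self.limit  # False means "respond with 429"
```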

The HAProxy Stats Page

HAProxy ships with a built-in real-time statistics dashboard. It displays frontend and backend health status, active and queued connection counts, session rates, bytes transferred, error counters, and the current state of every server in every pool.

```haproxy
listen stats
    bind 192.168.1.100:8404
    mode http
    stats enable
    stats uri /haproxy-stats
    stats realm HAProxy\ Statistics
    stats auth infrarunbook-admin:Ch@ngeMe!
    stats refresh 5s
    stats show-legends
    stats show-node
    stats hide-version
```

Navigate to `http://192.168.1.100:8404/haproxy-stats` and authenticate with the credentials above. Server rows are color-coded: green means the server is up and healthy, red means it has failed its health checks and is out of rotation, and other colors mark transitional states and servers placed in `MAINT` mode (manually disabled); the page includes a legend for each color. If you also add a `stats admin` rule (for example `stats admin if TRUE`, which here applies only to users who passed `stats auth`), the page lets you disable, drain, or re-enable servers interactively without editing the configuration file.

Security note: Bind the stats page only to a management VLAN interface (e.g., `192.168.100.1:8404`) and never expose it on a public-facing interface.
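The stats page can also serve its data as CSV when `;csv` is appended to the stats URI, which is handy for scripts and monitoring. A minimal sketch, assuming the `listen stats` example above (URL and credentials) and that the CSV header line starts with `# `; the function names are hypothetical, and the parser relies only on the `pxname`, `svname`, and `status` columns.

```python
"""Sketch: list server states from the stats page's CSV export (URI suffix ';csv')."""
import base64
import csv
import io
import urllib.request

STATS_URL = "http://192.168.1.100:8404/haproxy-stats;csv"  # stats uri from the example + ';csv'


def fetch_stats_csv(url, user, password, timeout=5.0):
    """Fetch the CSV export using HTTP Basic auth (the 'stats auth' credentials)."""
    token = base64.b64encode(f"{user}:{password}".encode()).decode()
    req = urllib.request.Request(url, headers={"Authorization": f"Basic {token}"})
    with urllib.request.urlopen(req, timeout=timeout) as resp:
        return resp.read().decode()


def server_states(csv_text):
    """Return {(proxy, server): status}, skipping FRONTEND/BACKEND summary rows."""
    text = csv_text.lstrip("# ")  # header looks like '# pxname,svname,...'
    states = {}
    for row in csv.DictReader(io.StringIO(text)):
        if row["svname"] in ("FRONTEND", "BACKEND"):
            continue
        states[(row["pxname"], row["svname"])] = row["status"]
    return states
```

For example, `server_states(fetch_stats_csv(STATS_URL, "infrarunbook-admin", "Ch@ngeMe!"))` returns entries such as `("web_servers", "web01")` mapped to its current status string (for instance `UP` or `DOWN`).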

Logging and Syslog Integration

HAProxy does not write log files itself. Instead, it emits structured log entries to a syslog daemon (rsyslog, syslog-ng, or systemd-journal) over UDP or a Unix socket. This architecture keeps HAProxy non-blocking and lightweight — the logging path never stalls request processing.

```haproxy
global
    log 127.0.0.1:514 local0
    log 127.0.0.1:514 local1 notice

defaults
    log global
    option httplog
    option dontlognull
    option log-health-checks
```

On the rsyslog side, capture HAProxy's facilities:

```text
# /etc/rsyslog.d/49-haproxy.conf
# Receive HAProxy's UDP syslog traffic on the loopback interface only
$ModLoad imudp
$UDPServerAddress 127.0.0.1
$UDPServerRun 514

# "& stop" applies only to the selector directly above it, so each facility needs its own
local0.* /var/log/haproxy/access.log
& stop
local1.* /var/log/haproxy/error.log
& stop
```

Each HTTP log line contains: client IP and port, accept timestamp, frontend name, backend name, chosen server name, a set of timers in milliseconds (TR/Tw/Tc/Tr/Ta in HAProxy 2.x; older releases used Tq/Tw/Tc/Tr/Tt), HTTP status code, bytes transferred, captured cookies, session termination flags, connection and queue counters, and the raw request line. Because the format is fixed and documented, it is straightforward to parse into Elasticsearch, Grafana Loki, Splunk, or any syslog aggregator.
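If you need a few fields before a full log pipeline is in place, a regular expression over the default layout goes a long way. This sketch assumes HAProxy 2.x's default `option httplog` format with no custom `log-format`; the group names are hypothetical labels, and the sample line is constructed for illustration rather than copied from a real log.

```python
"""Sketch: pull key fields out of a default-format HAProxy HTTP log line."""
import re

HTTPLOG = re.compile(
    r'(?P<client_ip>[\d.]+):(?P<client_port>\d+) '
    r'\[(?P<accept_date>[^\]]+)\] '
    r'(?P<frontend>\S+) (?P<backend>[^/\s]+)/(?P<server>\S+) '
    r'(?P<timers>-?\d+/-?\d+/-?\d+/-?\d+/\+?-?\d+) '
    r'(?P<status>-?\d+) (?P<bytes>\+?\d+) '
    r'\S+ \S+ (?P<term_state>\S+) '   # captured request/response cookies, termination flags
    r'\S+ \S+ '                       # connection counters, queue counters
    r'(?:\{[^}]*\} )*'                # optional captured headers
    r'"(?P<request>[^"]*)"'
)


def parse_httplog(line):
    """Return a dict of fields, or None if the line does not look like an httplog entry."""
    m = HTTPLOG.search(line)  # search() skips any syslog prefix before the client address
    if m is None:
        return None
    fields = m.groupdict()
    fields["timers"] = [int(t.lstrip("+")) for t in fields["timers"].split("/")]
    fields["status"] = int(fields["status"])
    return fields


if __name__ == "__main__":
    sample = ('Mar 10 12:00:01 sw-infrarunbook-01 haproxy[1234]: 192.168.1.50:51234 '
              '[10/Mar/2025:12:00:01.123] http_front web_servers/web01 0/0/1/12/13 '
              '200 5120 - - ---- 10/10/2/1/0 0/0 "GET /index.html HTTP/1.1"')
    fields = parse_httplog(sample)
    print(fields["server"], fields["status"], fields["timers"])  # web01 200 [0, 0, 1, 12, 13]
```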

Connection Limits and Timeouts

Proper timeout configuration prevents resource exhaustion and stuck connections from accumulating. HAProxy provides granular control over every phase of connection lifetime.

- timeout connect: Maximum time to establish a TCP connection to a backend server.

- timeout client: Maximum inactivity on the client-side half of the connection.

- timeout server: Maximum inactivity on the server-side half of the connection.

- timeout http-request: Maximum time to receive a complete HTTP request from the client. Protects against slow-loris attacks.

- timeout tunnel: Used for upgraded connections (WebSocket, CONNECT). Typically set to minutes or hours rather than seconds, because tunnels stay open and may sit idle for long periods.

```haproxy
defaults
    timeout connect 5s
    timeout client 25s
    timeout server 25s
    timeout http-request 10s
    timeout tunnel 3600s

frontend http_front
    bind 192.168.1.100:80
    maxconn 20000
    default_backend web_servers

backend web_servers
    # 'maxconn' is only accepted in defaults, frontend, and listen sections,
    # so concurrency is capped per server instead
    server web01 192.168.1.11:80 check maxconn 200
    server web02 192.168.1.12:80 check maxconn 200
    server web03 192.168.1.13:80 check maxconn 200
```

The per-server `maxconn` setting caps each server at 200 concurrent connections (600 across the pool), and HAProxy queues any excess requests internally rather than sending them to an already saturated server. How long a request may wait in that queue is governed by `timeout queue`, which falls back to `timeout connect` when unset. This is particularly important in front of database backends, where connection storms cause cascading failures.
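The queuing behavior can be sketched as a small Python model: requests go to a server with a free slot, and once every server sits at its `maxconn` they wait in a FIFO queue until a slot frees up. This is a simplified model with hypothetical names; it ignores queue timeouts and HAProxy's distinction between per-server and backend queues.

```python
"""Toy model of per-server maxconn with queuing (not HAProxy's scheduler)."""
from collections import deque


class QueuedPool:
    def __init__(self, limits):
        self.limits = dict(limits)                # server -> maxconn
        self.active = {s: 0 for s in self.limits}
        self.queue = deque()                      # waiting request ids, oldest first

    def submit(self, request_id):
        """Send to the least busy server with a free slot, or park the request in the queue."""
        free = [s for s in self.limits if self.active[s] < self.limits[s]]
        if free:
            server = min(free, key=lambda s: self.active[s])
            self.active[server] += 1
            return server
        self.queue.append(request_id)
        return None

    def finish(self, server):
        """A request on `server` completed: its slot goes to the oldest queued request, if any."""
        self.active[server] -= 1
        if self.queue:
            request_id = self.queue.popleft()
            self.active[server] += 1
            return request_id
        return None


if __name__ == "__main__":
    pool = QueuedPool({"web01": 200, "web02": 200, "web03": 200})
    placed = [pool.submit(i) for i in range(700)]
    print(sum(p is not None for p in placed), "active,", len(pool.queue), "queued")
```

With three servers capped at 200, the 601st concurrent request is the first to be queued, and each completion hands its slot to the oldest waiting request.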

WebSocket Support

WebSocket connections begin as a standard HTTP upgrade request and then transition to a long-lived bidirectional TCP stream. HAProxy handles this transparently in HTTP mode once `timeout tunnel` is set appropriately.

```haproxy
frontend wss_front
    bind 192.168.1.100:443 ssl crt /etc/haproxy/certs/solvethenetwork.com.pem
    acl is_websocket hdr(Upgrade) -i websocket
    use_backend ws_pool if is_websocket
    default_backend web_servers

backend ws_pool
    balance leastconn
    timeout tunnel 1h
    option http-server-close
    server ws01 192.168.1.41:8080 check
    server ws02 192.168.1.42:8080 check
```

Using `leastconn` for WebSocket pools ensures new connections land on the server with the fewest active tunnels, which is almost always the right distribution strategy for long-lived connections.

Keepalived Integration for High Availability

A single HAProxy instance is itself a single point of failure. Production deployments pair two HAProxy nodes with Keepalived, which implements VRRP (Virtual Router Redundancy Protocol) to share a floating Virtual IP (VIP). If HAProxy dies on the primary node, that node's VRRP priority drops and the VIP moves to the standby within a few seconds; if the primary node fails entirely, the standby takes over once it has missed VRRP advertisements for roughly three advertisement intervals.

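```text
# /etc/keepalived/keepalived.conf on sw-infrarunbook-01 (MASTER node)

# Succeeds (exit 0) while an haproxy process exists; while it succeeds,
# "weight 2" adds 2 to this node's priority
vrrp_script chk_haproxy {
    script "killall -0 haproxy"
    interval 2
    weight 2
}

vrrp_instance VI_1 {
    state MASTER
    interface eth0
    virtual_router_id 51
    priority 101
    advert_int 1
    authentication {
        auth_type PASS
        # keepalived uses only the first 8 characters of auth_pass
        auth_pass S3cretVRRP!
    }
    virtual_ipaddress {
        192.168.1.100/24
    }
    track_script {
        chk_haproxy
    }
}
```

The standby node runs an identical configuration with `state BACKUP` and `priority 100`. The VIP `192.168.1.100` is always held by the node with the highest effective priority, which is the master for as long as its HAProxy process is alive. Because the standby does not own the VIP until a failover, its HAProxy can only bind to `192.168.1.100` if the kernel permits non-local binds (`net.ipv4.ip_nonlocal_bind=1`). DNS for `solvethenetwork.com` points to this VIP, so failover requires no DNS change.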
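The priority arithmetic is what makes this failover work, so it is worth spelling out. The Python below is a worked example, assuming the standby uses `priority 100` as described and that `chk_haproxy` succeeds exactly when HAProxy is running; `standby` is a hypothetical node name. It also computes the VRRPv2 Master_Down_Interval from RFC 3768, which sets how long the standby waits before taking over when the master stops sending advertisements altogether.

```python
"""Worked example of the Keepalived priorities above (VRRPv2 timing from RFC 3768)."""


def effective_priority(base, haproxy_alive, weight=2):
    # A positive track_script weight is added only while the check script succeeds
    return base + weight if haproxy_alive else base


def vip_owner(nodes):
    """nodes: {name: (base_priority, haproxy_alive)} -> name of the node holding the VIP."""
    return max(nodes, key=lambda n: effective_priority(*nodes[n]))


def master_down_interval(priority, advert_int=1.0):
    # Master_Down_Interval = 3 * Advertisement_Interval + Skew_Time,
    # with Skew_Time = (256 - Priority) / 256 seconds
    return 3 * advert_int + (256 - priority) / 256


if __name__ == "__main__":
    print(vip_owner({"sw-infrarunbook-01": (101, True), "standby": (100, True)}))   # sw-infrarunbook-01
    print(vip_owner({"sw-infrarunbook-01": (101, False), "standby": (100, True)}))  # standby
    print(master_down_interval(100))                                               # 3.609375
```

With both HAProxy processes healthy, the master runs at 103 and the standby at 102; if HAProxy dies on the master it drops to 101 and the standby wins. If the master node vanishes entirely, the standby waits about 3.6 seconds with `advert_int 1` before claiming the VIP.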

Reloading HAProxy Without Dropping Connections

One of HAProxy's most operationally important features is its ability to reload configuration without dropping established connections. On reload, a new process takes over the listening sockets, while the old process stops accepting new connections, finishes serving the sessions it already holds, and exits once they complete.

```bash
# Validate the configuration before reloading
haproxy -c -f /etc/haproxy/haproxy.cfg

# Graceful reload via systemd
systemctl reload haproxy

# Or, without systemd (legacy daemon mode): start a new process that writes its PID file
# and tells the old process (by PID) to finish its sessions and exit
haproxy -f /etc/haproxy/haproxy.cfg -p /run/haproxy/haproxy.pid -sf $(cat /run/haproxy/haproxy.pid)
```

Always validate the configuration file before reloading. A syntax error will cause the reload to fail, leaving the existing (possibly stale) configuration running.
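If reloads are triggered from a deployment script, it helps to make the validate-then-reload order impossible to skip. A minimal sketch, assuming the systemd unit and the default configuration path used above; `validate_and_reload` is a hypothetical helper, and its `run` parameter exists only so the commands can be stubbed out when testing the script.

```python
"""Sketch: validate the HAProxy configuration and reload only if validation passes."""
import subprocess

CONFIG = "/etc/haproxy/haproxy.cfg"


def validate_and_reload(config=CONFIG, run=subprocess.run):
    """Return True if the config validated and the reload command succeeded."""
    check = run(["haproxy", "-c", "-f", config], capture_output=True, text=True)
    if check.returncode != 0:
        # Leave the running configuration in place and surface HAProxy's error output
        print(check.stdout + check.stderr)
        return False
    reload = run(["systemctl", "reload", "haproxy"], capture_output=True, text=True)
    return reload.returncode == 0
```

The reload command is never issued when `haproxy -c` rejects the file, so a broken configuration cannot replace a working one through this path.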

Putting It All Together

HAProxy's power comes not from any single feature but from how cleanly all these components compose. A production deployment on sw-infrarunbook-01 might simultaneously:

- Terminate TLS at the frontend, enforcing TLS 1.2 minimum and injecting `Strict-Transport-Security` headers.

- Use ACLs to route `/api/` traffic to a containerized microservices pool, static assets to an origin cache, and WebSocket upgrade requests to a dedicated pool with a 1-hour tunnel timeout.

- Enforce per-IP rate limiting against brute-force attempts on `/auth/login` using a stick table.

- Use `leastconn` for the database proxy backend with strict `maxconn` limits to prevent connection storms.

- Expose the stats dashboard on an internal management interface only.

- Ship structured access logs via UDP syslog to a centralized Loki instance for dashboards and alerting.

- Run in an active/standby pair with Keepalived, sharing a VIP and failing over within a few seconds.

Each layer is independently configurable, independently testable, and independently observable. That composability, combined with more than two decades of production hardening and a lean, event-driven core, is why HAProxy remains the load balancer of choice for engineers who need both simplicity and uncompromising reliability.