Introduction to Load Balancers

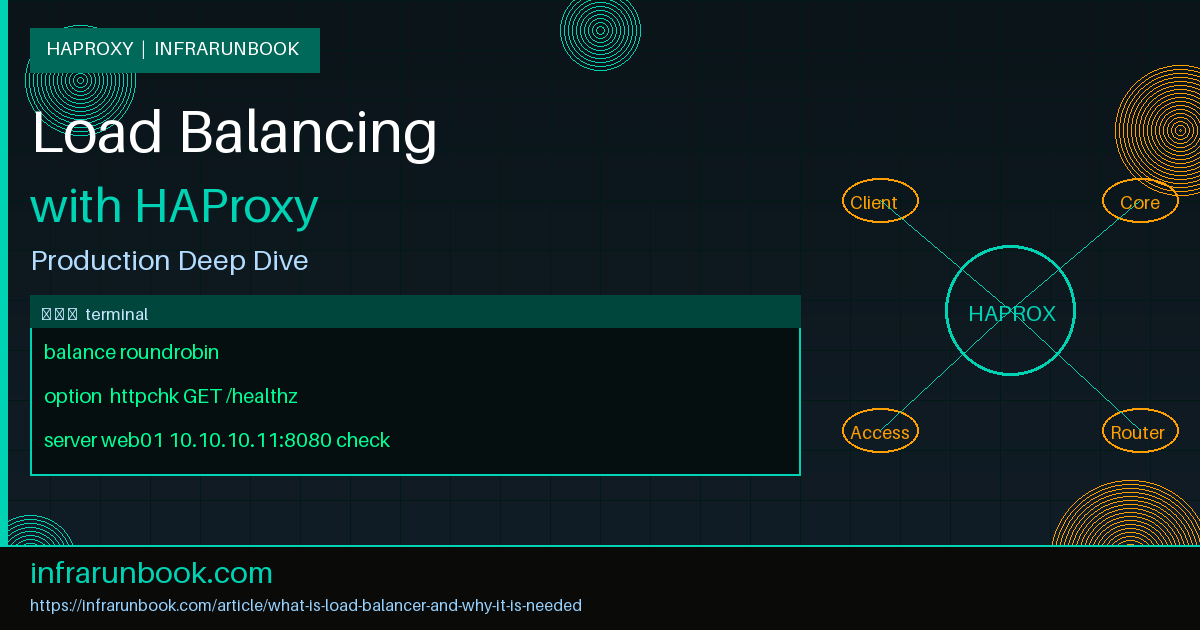

A load balancer is a network infrastructure component that distributes incoming client connections across a pool of backend servers. Rather than directing every request to a single host — which would eventually exhaust its CPU, memory, and file descriptors — a load balancer acts as an intelligent reverse proxy, routing traffic according to configurable algorithms, real-time server health, and session state. In modern infrastructure, load balancers sit at the entry point of web tiers, API clusters, database proxy layers, and microservice meshes, serving as the single virtual IP that clients connect to regardless of how many physical servers exist behind it.

For teams operating production services on bare-metal or virtual machines, HAProxy (High Availability Proxy) is the de facto open-source solution. Written in C and purpose-built for high throughput, HAProxy handles hundreds of thousands of concurrent connections on commodity hardware with sub-millisecond overhead. Understanding what a load balancer does — and why every serious infrastructure requires one — is foundational knowledge for any systems or network engineer.

How a Load Balancer Works

A load balancer maintains a frontend that accepts inbound connections and a backend pool of servers that fulfill requests. When a client connects to the load balancer's virtual IP (VIP), the load balancer selects a backend server based on the active algorithm, opens a connection to that server, and proxies traffic bidirectionally. To the client, the load balancer is the application endpoint. To the backend server, the load balancer is the client.

HAProxy supports two proxy modes:

- TCP mode — Layer 4 operation. HAProxy handles raw TCP streams without inspecting application-layer content. Used for TLS pass-through, MySQL, PostgreSQL, Redis, and SMTP.

- HTTP mode — Layer 7 operation. HAProxy fully parses HTTP/1.x and HTTP/2, enabling header manipulation, URL-based routing, cookie insertion, compression, and content-aware ACLs.

Why a Load Balancer Is Needed

The motivations for deploying a load balancer span availability, scalability, security, and operational control. Each concern exists in virtually every production environment beyond a trivial single-server deployment.

High Availability and Fault Tolerance

Without a load balancer, a single application server is a single point of failure. A kernel panic, an OOM event, or a failed package upgrade can each take the entire service offline. A load balancer continuously monitors backend health via configurable health checks. When a backend fails its check threshold, HAProxy removes it from rotation automatically and sends new traffic to the remaining healthy servers without operator intervention. Requests already in flight to the failed server may still fail, but new requests are served normally as long as at least one healthy backend remains.

Horizontal Scalability

Vertical scaling — adding CPU cores and RAM to one server — has a hard ceiling. Horizontal scaling is theoretically unlimited but only practical when a load balancer routes traffic across all nodes. Adding a new backend server and registering it in the HAProxy configuration absorbs additional traffic without any change to DNS, client configuration, or application code.

Session Persistence

Stateful applications store session data locally on each server. If a user's requests are spread across multiple backends, the session is lost whenever a request lands on a server that does not hold it. HAProxy's stick tables and cookie-based persistence solve this by binding a client to a specific backend for the duration of a session without requiring shared session storage.

SSL/TLS Offloading

TLS handshakes are computationally expensive. Offloading TLS termination to the load balancer means backend servers receive plain HTTP, freeing them from crypto overhead. HAProxy terminates TLS centrally, enforces modern cipher suites, and forwards traffic to backends over a trusted internal network segment.

Security Enforcement

A load balancer at the network edge is the natural place to enforce connection limits, rate limiting, and ACL-based access control before traffic reaches the application. HAProxy can block source IPs, enforce per-client request rate limits using stick tables, and reject malformed HTTP requests at the proxy layer — reducing backend exposure significantly.

HAProxy Architecture and Base Configuration

HAProxy's configuration is divided into sections: global (process-wide settings), defaults (inherited by all proxies unless overridden), frontend (listeners), backend (server pools), and optionally listen (combined frontend and backend). Below is a production-representative base configuration for host sw-infrarunbook-01 serving the solvethenetwork.com application tier.

global

log 127.0.0.1 local0

log 127.0.0.1 local1 notice

chroot /var/lib/haproxy

pidfile /var/run/haproxy.pid

maxconn 50000

user haproxy

group haproxy

daemon

stats socket /var/run/haproxy/admin.sock mode 660 level admin

tune.ssl.default-dh-param 2048

defaults

log global

mode http

option httplog

option dontlognull

option forwardfor

option http-server-close

timeout connect 5s

timeout client 30s

timeout server 30s

retries 3

errorfile 503 /etc/haproxy/errors/503.http

frontend http_in

bind 10.10.10.1:80

bind 10.10.10.1:443 ssl crt /etc/haproxy/certs/solvethenetwork.com.pem

http-request redirect scheme https unless { ssl_fc }

default_backend web_servers

backend web_servers

balance roundrobin

option httpchk GET /healthz HTTP/1.1\r\nHost:\ solvethenetwork.com

http-check expect status 200

server web01 10.10.10.11:8080 check inter 3s rise 2 fall 3

server web02 10.10.10.12:8080 check inter 3s rise 2 fall 3

server web03 10.10.10.13:8080 check inter 3s rise 2 fall 3

In this configuration, sw-infrarunbook-01 listens on VIP 10.10.10.1 on ports 80 and 443. Port 80 traffic is immediately redirected to HTTPS. The backend pool contains three application nodes. HAProxy issues an HTTP GET to /healthz every 3 seconds; a server must pass 2 consecutive checks to enter rotation and fail 3 consecutive checks before being removed.

Load Balancing Algorithms

HAProxy provides multiple scheduling algorithms, each suited to different workload characteristics. Selecting the right algorithm for a given backend is one of the most impactful tuning decisions an operator can make.

Round Robin

roundrobin distributes each new connection to the next server in sequence, cycling through the pool evenly. It is the simplest algorithm and performs well when all backend servers have equivalent capacity and requests have roughly equal processing cost. The weight parameter adjusts the proportion of traffic directed to each server.

backend api_servers

balance roundrobin

server api01 10.10.20.11:3000 check weight 1

server api02 10.10.20.12:3000 check weight 1

server api03 10.10.20.13:3000 check weight 2

In this example, api03 receives twice as many requests as each of the other two servers, which is appropriate when one node has greater compute capacity.
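The effect of the weight values can be illustrated with a small Python sketch. This is a naive model that simply repeats each server according to its weight; it is not HAProxy's scheduler, which is more elaborate. The WEIGHTS mapping and both function names are hypothetical and only mirror the api_servers pool above.

```python
from collections import Counter
from itertools import cycle, islice

# Hypothetical pool mirroring the api_servers backend: name -> weight.
WEIGHTS = {"api01": 1, "api02": 1, "api03": 2}


def weighted_round_robin(weights):
    """Yield server names forever, each appearing `weight` times per cycle.

    Naive expansion for illustration only; not HAProxy's implementation.
    """
    expanded = [name for name, w in weights.items() for _ in range(w)]
    return cycle(expanded)


def distribution(weights, requests):
    """Count how many of `requests` consecutive picks land on each server."""
    return Counter(islice(weighted_round_robin(weights), requests))
```

Calling distribution(WEIGHTS, 400) assigns 100 requests each to api01 and api02 and 200 to api03, matching the 1:1:2 weight ratio.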

Least Connections

leastconn routes each new connection to the server with the fewest active connections at that instant. This algorithm is ideal for long-lived connections such as WebSocket sessions, database proxying, or large file downloads, where round-robin would lead to uneven load accumulation on slower servers.

backend db_proxy

balance leastconn

option tcp-check

server db01 10.10.30.11:5432 check

server db02 10.10.30.12:5432 check

server db03 10.10.30.13:5432 check backup

The backup flag on db03 designates it as a standby: it only receives traffic when all non-backup servers are unavailable, functioning as a last-resort failover node.
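As a rough illustration of the selection rule, the following Python sketch picks the healthy server with the fewest active connections and falls back to a backup server only when no primary is up. The Server class and pick_leastconn function are hypothetical names for this example, and the model ignores weights and queueing.

```python
from dataclasses import dataclass


@dataclass
class Server:
    name: str
    active: int = 0        # connections currently open to this server
    up: bool = True        # result of the latest health check
    backup: bool = False   # mirrors the `backup` flag in the config


def pick_leastconn(servers):
    """Return the healthy server with the fewest active connections.

    Backup servers are considered only when no non-backup server is up.
    Returns None when nothing is available.
    """
    primaries = [s for s in servers if s.up and not s.backup]
    candidates = primaries or [s for s in servers if s.up and s.backup]
    if not candidates:
        return None
    return min(candidates, key=lambda s: s.active)
```

With db01 holding 12 connections and db02 holding 7, the next connection goes to db02; db03 is chosen only after both primaries are marked down.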

Source Hash

source hashes the client IP address and maps it deterministically to a backend server. The same client always reaches the same server while the pool is stable. This provides sticky routing without cookie insertion or a stick table and is commonly used when session state cannot easily be replicated.

backend session_servers

balance source

hash-type consistent

server sess01 10.10.40.11:8080 check

server sess02 10.10.40.12:8080 check

server sess03 10.10.40.13:8080 check

hash-type consistent uses consistent hashing so that adding or removing one server only disrupts a fraction of sessions rather than reshuffling all client mappings simultaneously.
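The property that consistent hashing provides can be demonstrated with a toy hash ring in Python. This is a sketch of the general technique, not HAProxy's internal code: the MD5-based position function, the 100 virtual nodes per server, and the HashRing class are all assumptions made for illustration.

```python
import bisect
import hashlib


def _hash(value: str) -> int:
    """Map a string to a 32-bit integer position on the ring."""
    return int.from_bytes(hashlib.md5(value.encode()).digest()[:4], "big")


class HashRing:
    """Toy consistent-hash ring with virtual nodes (illustrative only)."""

    def __init__(self, servers, vnodes=100):
        # Each server owns `vnodes` points scattered around the ring.
        self._ring = sorted(
            (_hash(f"{server}#{i}"), server)
            for server in servers
            for i in range(vnodes)
        )
        self._points = [point for point, _ in self._ring]

    def lookup(self, client_ip: str) -> str:
        """Return the first server clockwise from the client's hash."""
        idx = bisect.bisect_right(self._points, _hash(client_ip))
        return self._ring[idx % len(self._ring)][1]
```

Building one ring with sess01, sess02, and sess03 and another without sess03 shows that only the clients previously mapped to sess03 change server; every other client keeps its original mapping.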

Health Checks

Health checks are the mechanism by which HAProxy determines which backend servers are eligible to receive traffic. Three types are available:

- TCP health check: HAProxy opens a TCP connection to the server port. A successful three-way handshake means the server is reachable. Configured with option tcp-check.

- HTTP health check: HAProxy sends an HTTP request and validates the status code or response body. Configured with option httpchk and http-check expect.

- Agent check: An external agent process on the backend sends a custom status string to HAProxy via a dedicated TCP port, allowing the application itself to signal capacity or drain state, which is useful for graceful deployments.

backend app_pool

option httpchk GET /api/health HTTP/1.1\r\nHost:\ solvethenetwork.com

http-check expect rstring "\"status\":\"ok\""

server app01 10.10.50.11:8080 check inter 5s fall 2 rise 3

server app02 10.10.50.12:8080 check inter 5s fall 2 rise 3

HAProxy checks /api/health every 5 seconds and expects the response body to match the regex "status":"ok". A server is marked down after 2 consecutive failures (fall 2) and returned to rotation after 3 consecutive successes (rise 3).
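The interaction of the fall and rise counters can be modelled as a small state machine. The Python sketch below is a simplified illustration: it ignores check timing (inter), assumes the server starts in the UP state, and uses a hypothetical HealthState class rather than anything from HAProxy itself.

```python
class HealthState:
    """Track one server's status from a stream of check results.

    Simplified model of the rise/fall counters; timing is ignored and
    the server is assumed to start UP.
    """

    def __init__(self, rise: int, fall: int):
        self.rise = rise
        self.fall = fall
        self.up = True
        self._streak = 0  # consecutive results contradicting current state

    def record(self, check_passed: bool) -> bool:
        """Feed one check result and return whether the server is UP."""
        if check_passed == self.up:
            self._streak = 0           # result agrees with state: reset
            return self.up
        self._streak += 1
        threshold = self.fall if self.up else self.rise
        if self._streak >= threshold:
            self.up = not self.up      # enough contrary results: flip
            self._streak = 0
        return self.up
```

With rise=3 and fall=2, two consecutive failed checks take the server out of rotation, and it returns only after three consecutive passes. A single failure followed by a pass resets the counter and leaves the server up.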

ACL-Based Routing

HAProxy's Access Control Lists (ACLs) enable Layer 7 routing decisions based on any inspectable attribute of the HTTP request — URL path, HTTP method, host header, source IP, query string parameters, or arbitrary headers. This allows a single HAProxy frontend to fan out to multiple purpose-specific backend pools.

frontend https_router

bind 10.10.10.1:443 ssl crt /etc/haproxy/certs/solvethenetwork.com.pem

acl is_api path_beg /api/

acl is_static path_beg /static/ /assets/ /images/

acl is_admin_path path_beg /admin

acl is_internal src 10.10.0.0/16

use_backend api_cluster if is_api

use_backend cdn_origin if is_static

use_backend admin_backend if is_admin_path is_internal

default_backend web_servers

Requests to /api/ route to api_cluster, static assets go to cdn_origin, and /admin routes to admin_backend only for clients within the internal 10.10.0.0/16 range. All other requests, including /admin requests from outside that range, fall through to web_servers. The admin rule uses two separately named ACLs because listing them side by side in a condition forms an implicit AND: both the path prefix and the source IP must match. Declaring the same ACL name twice would instead OR the two tests.
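The intended routing decisions can be expressed as a short Python function, which makes the rule order and the AND condition on the admin route explicit. This is a sketch of the decision logic only; choose_backend and the two constants are hypothetical names, and HAProxy evaluates its own ACL engine rather than code like this.

```python
import ipaddress

INTERNAL_NET = ipaddress.ip_network("10.10.0.0/16")
STATIC_PREFIXES = ("/static/", "/assets/", "/images/")


def choose_backend(path: str, src: str) -> str:
    """Return the backend name for a request, checking rules in order."""
    if path.startswith("/api/"):
        return "api_cluster"
    if path.startswith(STATIC_PREFIXES):
        return "cdn_origin"
    # Admin routing needs BOTH conditions: path prefix AND internal source.
    if path.startswith("/admin") and ipaddress.ip_address(src) in INTERNAL_NET:
        return "admin_backend"
    return "web_servers"
```

A request for /admin/login from 10.10.5.20 reaches admin_backend, while the same path from an external address such as 203.0.113.9 falls through to web_servers.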

Stick Tables and Session Persistence

For applications requiring strict session affinity, HAProxy's stick tables maintain client-to-server bindings in an in-memory key-value store. Entries are created on first contact and expire after a configurable TTL, preventing unbounded memory growth.

backend sticky_app

balance roundrobin

cookie SERVERID insert indirect nocache

stick-table type string len 32 size 100k expire 30m

stick on cookie(SERVERID)

server node01 10.10.60.11:8080 check cookie node01

server node02 10.10.60.12:8080 check cookie node02

server node03 10.10.60.13:8080 check cookie node03

HAProxy injects a SERVERID cookie on the first response. On subsequent requests, it reads the cookie, consults the stick table, and forwards the request to the mapped backend. If that backend is down, HAProxy selects a new one via the load balancing algorithm and updates the cookie transparently.

Rate Limiting with Stick Tables

Stick tables also power HAProxy's rate limiting by tracking request counters per source IP within a configurable sliding window. Clients exceeding the threshold receive an HTTP 429 response before reaching any application server.

frontend http_ratelimit

bind 10.10.10.1:80

stick-table type ip size 200k expire 60s store http_req_rate(10s)

http-request track-sc0 src

http-request deny deny_status 429 if { sc_http_req_rate(0) gt 100 }

default_backend web_servers

This configuration tracks the HTTP request rate for each source IP over a 10-second window. Clients making more than 100 requests in any 10-second window are denied with 429 Too Many Requests. The stick table stores up to 200,000 entries; entries expire 60 seconds after the last request from that IP.
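The thresholding behaviour can be sketched in Python with a per-client sliding window. This is an illustration of the idea only: it stores exact timestamps, whereas HAProxy keeps compact rate counters in the stick table, and the SlidingWindowLimiter class is a hypothetical name. As in the configuration above, every request is counted, including those that end up denied.

```python
from collections import defaultdict, deque


class SlidingWindowLimiter:
    """Per-client request limiter over a sliding time window.

    Illustrative only: exact timestamps are kept per client, which is
    not how HAProxy stores its rate counters.
    """

    def __init__(self, limit: int = 100, window: float = 10.0):
        self.limit = limit
        self.window = window
        self._hits = defaultdict(deque)  # client IP -> request timestamps

    def allow(self, client_ip: str, now: float) -> bool:
        """Record a request at time `now`; return False if it should get 429."""
        hits = self._hits[client_ip]
        while hits and now - hits[0] >= self.window:
            hits.popleft()             # forget requests outside the window
        hits.append(now)
        return len(hits) <= self.limit
```

A client sending 101 requests within one second has the first 100 allowed and the 101st rejected. Other clients are unaffected, and the same client is allowed again once its earlier requests age out of the window.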

SSL/TLS Termination

HAProxy terminates TLS on the bind line of the frontend. The certificate bundle must contain the private key, server certificate, and any intermediate CA certificates concatenated in a single PEM file. HAProxy supports SNI-based multi-certificate hosting, ALPN negotiation for HTTP/2, and granular cipher suite control.

frontend tls_termination

bind 10.10.10.1:443 ssl crt /etc/haproxy/certs/solvethenetwork.com.pem ciphers ECDHE-ECDSA-AES256-GCM-SHA384:ECDHE-RSA-AES256-GCM-SHA384 no-sslv3 no-tlsv10 no-tlsv11 alpn h2,http/1.1

http-response set-header Strict-Transport-Security "max-age=63072000; includeSubDomains; preload"

default_backend web_servers

This configuration enforces TLS 1.2 as the minimum protocol version, restricts TLS 1.2 cipher negotiation to AEAD suites with forward secrecy, enables HTTP/2 via ALPN, and injects HSTS headers into every response. Backend servers on the internal 10.10.10.0/24 network receive plain HTTP, eliminating per-server TLS configuration overhead.

HAProxy Stats Page

HAProxy ships a built-in web statistics dashboard providing real-time visibility into frontend and backend health, active and queued connection counts, request rates, error rates, response time distributions, and server weights. It is an indispensable operational tool requiring no external monitoring agent.

listen stats

bind 10.10.10.1:8404

stats enable

stats uri /haproxy?stats

stats realm HAProxy\ Statistics

stats auth infrarunbook-admin:S3cur3P@ssw0rd!

stats refresh 5s

stats show-node

stats show-legends

stats hide-version

The dashboard is accessible at http://10.10.10.1:8404/haproxy?stats. Authentication is enforced via HTTP Basic Auth with the credentials shown.

hide-version prevents the HAProxy version string from appearing in the page source, reducing exposure to version-specific vulnerability scanners.

show-node displays the hostname of the HAProxy node serving the page, which is critical in a Keepalived HA pair to confirm which node is active.

Logging and Syslog Integration

HAProxy writes structured access logs to syslog using a highly configurable format string. The default HTTP log line includes timestamps, client IP and port, request processing times broken into connect/wait/response phases, HTTP status code, bytes transferred, backend and server names, and termination state codes.

global

log 127.0.0.1:514 local0 info

defaults

log global

option httplog

log-format "%ci:%cp [%tr] %ft %b/%s %TR/%Tw/%Tc/%Tr/%Ta %ST %B %tsc %ac/%fc/%bc/%sc/%rc %{+Q}r"

On sw-infrarunbook-01, configure rsyslog to receive UDP syslog on the loopback interface and write HAProxy traffic to a dedicated log file:

# /etc/rsyslog.d/49-haproxy.conf

$ModLoad imudp

# Set the bind address before starting the listener.

$UDPServerAddress 127.0.0.1

$UDPServerRun 514

local0.* /var/log/haproxy/access.log

& stop

local1.* /var/log/haproxy/error.log

& stop

Connection Limits

Uncontrolled connection growth exhausts kernel file descriptors, starves the process of sockets, and causes cascading failures across all backends. HAProxy enforces limits at three scopes: the global process, individual frontends, and individual backend servers.

global

maxconn 50000

frontend http_in

bind 10.10.10.1:80

maxconn 20000

backend web_servers

timeout queue 10s

server web01 10.10.10.11:8080 check maxconn 500

server web02 10.10.10.12:8080 check maxconn 500

server web03 10.10.10.13:8080 check maxconn 500

When a backend server reaches its maxconn limit, HAProxy queues new connections rather than forcing additional connections beyond the server's capacity. The timeout queue directive controls how long a connection waits in queue before HAProxy returns a 503 Service Unavailable, protecting clients from indefinite hangs.
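The slot-and-queue behaviour for a single server can be sketched in Python as follows. This is a deliberately simplified model with a hypothetical ServerQueue class: expired waits are detected lazily when a slot frees up, whereas a real proxy returns the 503 as soon as the queue timeout elapses.

```python
from collections import deque


class ServerQueue:
    """Model one server's maxconn slots plus a wait queue (timing simplified)."""

    def __init__(self, maxconn: int, queue_timeout: float):
        self.maxconn = maxconn
        self.queue_timeout = queue_timeout
        self.active = 0
        self.waiting = deque()  # arrival times of queued requests

    def arrive(self, now: float) -> str:
        """A new request arrives: take a free slot or join the queue."""
        if self.active < self.maxconn:
            self.active += 1
            return "forwarded"
        self.waiting.append(now)
        return "queued"

    def finish(self, now: float) -> list:
        """One active request completes; resolve queued requests in order.

        Returns "503" for each wait that exceeded the timeout, then
        "forwarded" for the first request that can take the freed slot.
        """
        self.active -= 1
        outcomes = []
        while self.waiting:
            arrived = self.waiting.popleft()
            if now - arrived > self.queue_timeout:
                outcomes.append("503")
                continue
            self.active += 1
            outcomes.append("forwarded")
            break
        return outcomes
```

With maxconn=2 and a 10-second queue timeout, a third and fourth request are queued. If a slot frees up only after the third request has waited 11 seconds, that request is answered with 503 and the fourth takes the slot.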

Keepalived HA for HAProxy Itself

A single HAProxy instance is a single point of failure. The standard high-availability pattern pairs two HAProxy nodes with Keepalived, which implements VRRP to manage a floating VIP. The active node holds the VIP; when it fails, the standby assumes the VIP, typically within a few seconds depending on the advertisement interval.

# /etc/keepalived/keepalived.conf on sw-infrarunbook-01 (MASTER)

vrrp_script chk_haproxy {

script "killall -0 haproxy"

interval 2

weight -20

}

vrrp_instance VI_1 {

state MASTER

interface eth0

virtual_router_id 51

priority 110

advert_int 1

authentication {

auth_type PASS

auth_pass infraRunB00k!

}

virtual_ipaddress {

10.10.10.1/24

}

track_script {

chk_haproxy

}

}

The vrrp_script block runs killall -0 haproxy every 2 seconds. Signal 0 delivers nothing to the process; the command only reports whether an HAProxy process exists. If HAProxy dies, the check fails and the MASTER node's priority drops by 20 points to 90, below the BACKUP node's base priority of 100, which triggers VIP migration. The VIP 10.10.10.1 moves to the standby node, and DNS records that point at it remain valid without any external change.
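The priority arithmetic behind the failover can be checked with a few lines of Python. The sketch assumes a negative weight is applied only while the check is failing and that the node with the highest effective priority holds the VIP; ties and preemption settings are ignored. The function names and the node name "standby" are hypothetical.

```python
def effective_priority(base: int, haproxy_alive: bool, weight: int = -20) -> int:
    """Priority a node advertises; a negative weight applies on check failure."""
    return base if haproxy_alive else base + weight


def vip_holder(nodes: dict) -> str:
    """Return the node with the highest effective priority.

    `nodes` maps a node name to (base_priority, haproxy_alive).
    Ties and preemption settings are ignored in this sketch.
    """
    return max(nodes, key=lambda n: effective_priority(*nodes[n]))
```

With base priorities of 110 and 100, the MASTER holds the VIP while its check passes. Once the check fails, its effective priority becomes 90 and the standby at 100 wins.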

Operational note: Always configure HAProxy's runtime socket (/var/run/haproxy/admin.sock) when running Keepalived. This socket allows the vrrp_script and external tooling to inspect HAProxy state, drain servers, and adjust weights without a full reload, which is critical for zero-downtime deployments.